Environment-in-the-Loop Verification of Automotive Radar IC Designs

By Sainath Karlapalem, NXP Semiconductors

At NXP Semiconductors, my team and I have developed a new methodology for verifying automotive radar integrated circuit (IC) designs. This shift-left methodology combines early verification of datasheet-level metrics with virtual field trials. By focusing on metrics at the specification level rather than the hardware implementation level, we ensure that the verification signoff criteria we use to evaluate a design align with those our customers are most interested in. And, by simulating on-road scenarios in virtual field trials, we enable environment-in-the-loop verification with realistic test stimuli for radar IC hardware.

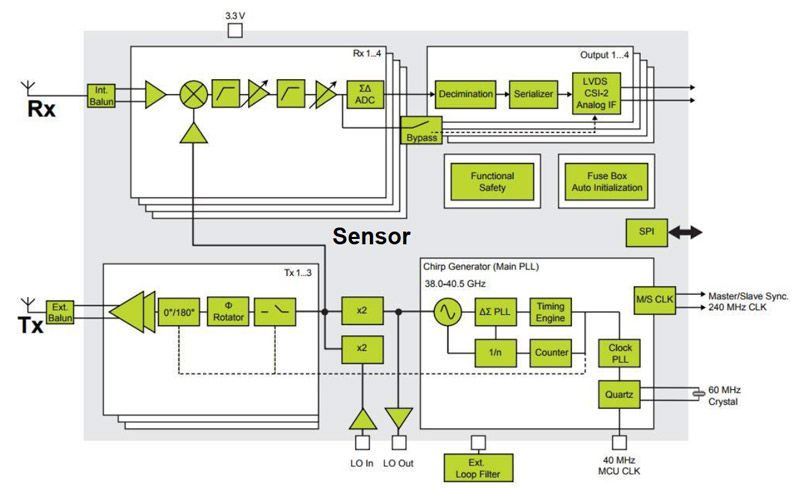

Our customers, who include many tier 1 automotive suppliers, are most interested in the kinds of performance metrics captured on a datasheet, such as signal-to-noise ratio (SNR) and total harmonic distortion (THD). They are less interested in individual component test results, code coverage results, and other metrics at the hardware implementation level, although these results are a primary concern of most IC verification teams. In addition, our customers use field trials and real-world driving scenarios to evaluate complete radar systems, while IC verification teams often use test patterns that are far removed from real-world signals to evaluate individual RF, analog, and digital components (Figure 1).

The shift-left methodology that my team and I have defined and implemented aligns the processes we use to verify IC designs with the criteria our customers use to evaluate them. The on-road driving scenarios we developed for virtual field trials are based on the European New Car Assessment Programme (Euro NCAP) standard that many of our customers follow, and the functional and performance metrics we produce (for example, SNR) are the same metrics that our customers use to evaluate IC components in their own products.

Early Verification of Datasheet-Level Metrics

When verifying the digital portions of automotive radar systems in the past, my team adopted an approach based on the Universal Verification Methodology (UVM). This approach involved replicating the functionality of the design under test (DUT) with a reference model created in a high-level language. The output of the DUT was then compared with the output of the reference model for a given input test vector. The UVM tests did not capture SNR measurements and other metrics our customers were interested in, and even relatively small implementation changes, such as updating the coefficients of a finite impulse response (FIR) filter, required a corresponding change in the testbench. Keeping the testbench in sync with the implementation required considerable effort and time.

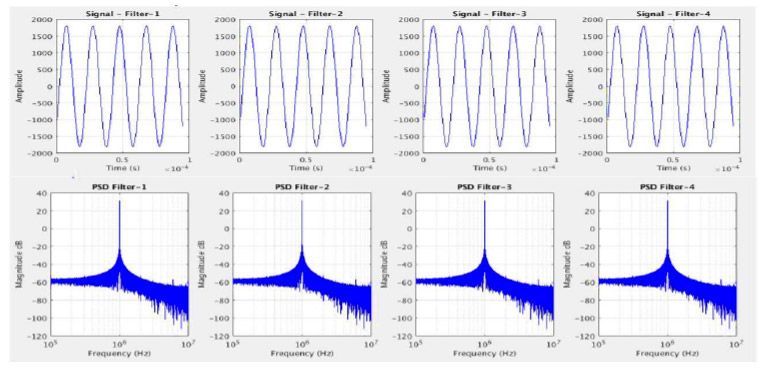

Given the drawbacks and limitations of this approach, we decided to focus our verification efforts on the functionality and performance of our design rather than on one-to-one equivalence between the implementation and reference model. Now, we develop MATLAB® algorithms that compute high-level design metrics such as SNR, THD, and power spectral density (PSD), as well as metrics for filters and other components, such as stopband attenuation and passband ripple. Using HDL Verifier™, we generate SystemVerilog DPI components from these MATLAB algorithms and integrate them into the HDL testbench for the Cadence® simulation environment (Figure 2).
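As a rough illustration of the filter metrics named above, the following Python sketch estimates passband ripple and stopband attenuation directly from a set of FIR coefficients. It is a stand-in written for this discussion, not the MATLAB verification code or the generated DPI component. The function names, the Hamming-windowed example filter, and the band edges are all assumptions chosen for illustration.

```python
import cmath
import math


def lowpass_taps(num_taps, cutoff):
    """Hamming-windowed sinc low-pass taps (cutoff in cycles/sample), unity DC gain."""
    mid = (num_taps - 1) / 2
    taps = []
    for n in range(num_taps):
        t = n - mid
        if t == 0:
            ideal = 2 * cutoff
        else:
            ideal = math.sin(2 * math.pi * cutoff * t) / (math.pi * t)
        window = 0.54 - 0.46 * math.cos(2 * math.pi * n / (num_taps - 1))
        taps.append(ideal * window)
    total = sum(taps)
    return [tap / total for tap in taps]


def gain_db(taps, freq):
    """Magnitude response in dB at a normalized frequency (cycles/sample)."""
    h = sum(c * cmath.exp(-2j * math.pi * freq * n) for n, c in enumerate(taps))
    return 20 * math.log10(max(abs(h), 1e-12))


def filter_metrics(taps, passband_edge, stopband_edge, points=200):
    """Return (passband ripple, stopband attenuation) in dB, measured on a frequency grid."""
    pass_grid = [passband_edge * k / (points - 1) for k in range(points)]
    stop_grid = [stopband_edge + (0.5 - stopband_edge) * k / (points - 1)
                 for k in range(points)]
    pass_db = [gain_db(taps, f) for f in pass_grid]
    stop_db = [gain_db(taps, f) for f in stop_grid]
    ripple_db = max(pass_db) - min(pass_db)
    # Attenuation is reported relative to the DC gain (first passband grid point).
    attenuation_db = pass_db[0] - max(stop_db)
    return ripple_db, attenuation_db


# Hypothetical example filter and band edges, not taken from the actual design.
taps = lowpass_taps(31, 0.15)
ripple_db, attenuation_db = filter_metrics(taps, passband_edge=0.08, stopband_edge=0.22)
print(f"passband ripple {ripple_db:.3f} dB, stopband attenuation {attenuation_db:.1f} dB")
```

Because the metrics are computed from the observed response rather than from a bit-exact reference model, a coefficient update only requires rerunning the check against the same specification limits.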

Sample signal data is collected from the DUT and passed to the DPI-C function generated from our MATLAB verification code. We plot the results (Figure 3) and check them against the system requirements to ensure that the design matches the specifications.
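The kind of check described here can be sketched in a few lines. The Python example below computes SNR and THD from a block of captured samples and compares them with specification limits. It assumes a coherently sampled single-tone stimulus, so that the tone and its harmonics fall on exact DFT bins. The function names, the synthetic test tone, and the limits (`SNR_MIN_DB`, `THD_MAX_DB`) are hypothetical and are not taken from the actual testbench or datasheet.

```python
import cmath
import math
import random


def dft_power(samples):
    """One-sided power spectrum |X[k]|^2 for k = 0 .. N/2 - 1 (direct DFT)."""
    n = len(samples)
    power = []
    for k in range(n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(samples))
        power.append(abs(acc) ** 2)
    return power


def snr_thd_db(samples, tone_bin, num_harmonics=5):
    """Return (SNR, THD) in dB for a coherently sampled tone located at tone_bin."""
    power = dft_power(samples)
    harmonic_bins = [h * tone_bin for h in range(2, num_harmonics + 1)
                     if h * tone_bin < len(power)]
    signal = power[tone_bin]
    harmonics = sum(power[b] for b in harmonic_bins)
    excluded = {0, tone_bin, *harmonic_bins}  # DC, fundamental, and harmonics
    noise = sum(p for k, p in enumerate(power) if k not in excluded)
    snr = 10 * math.log10(signal / max(noise, 1e-30))
    thd = 10 * math.log10(max(harmonics, 1e-30) / signal)
    return snr, thd


def make_test_tone(n, tone_bin, harmonic_amp, noise_sigma, seed=0):
    """Synthetic stand-in for DUT output: tone + 2nd harmonic + Gaussian noise."""
    rng = random.Random(seed)
    return [math.sin(2 * math.pi * tone_bin * i / n)
            + harmonic_amp * math.sin(2 * math.pi * 2 * tone_bin * i / n)
            + rng.gauss(0.0, noise_sigma)
            for i in range(n)]


# Hypothetical specification limits, for illustration only.
SNR_MIN_DB = 50.0
THD_MAX_DB = -35.0

samples = make_test_tone(n=256, tone_bin=13, harmonic_amp=0.01, noise_sigma=1e-3)
snr, thd = snr_thd_db(samples, tone_bin=13)
print(f"SNR {snr:.1f} dB, THD {thd:.1f} dB, "
      f"pass={snr >= SNR_MIN_DB and thd <= THD_MAX_DB}")
```

In a real flow the samples would come from the DUT interface instead of `make_test_tone`, and a windowed spectral estimate would be needed if the stimulus were not coherently sampled.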

Using DPI-C models generated from MATLAB enables us to compute functional and performance metrics at multiple interfaces in the Cadence HDL verification environment. We can decouple design implementation from verification and conduct testing at a level of abstraction more closely aligned with the metrics that interest our customers.

We can also reuse the C code generated from MATLAB to analyze the results from tests of initial silicon. For example, we collect sample data from our radar sensor IC and pass it through the same SNR calculation C functions generated from MATLAB that we used to verify our design in SystemVerilog.

Virtual Field Trials

As part of our transition to a metrics-driven verification approach, we conduct virtual field trials using data from real-world driving scenarios. In the past, we verified the RF, analog, and digital subsystems separately, using a different set of test vectors for each subsystem. Few of these test vectors were derived from radar reflections obtained during on-road tests.

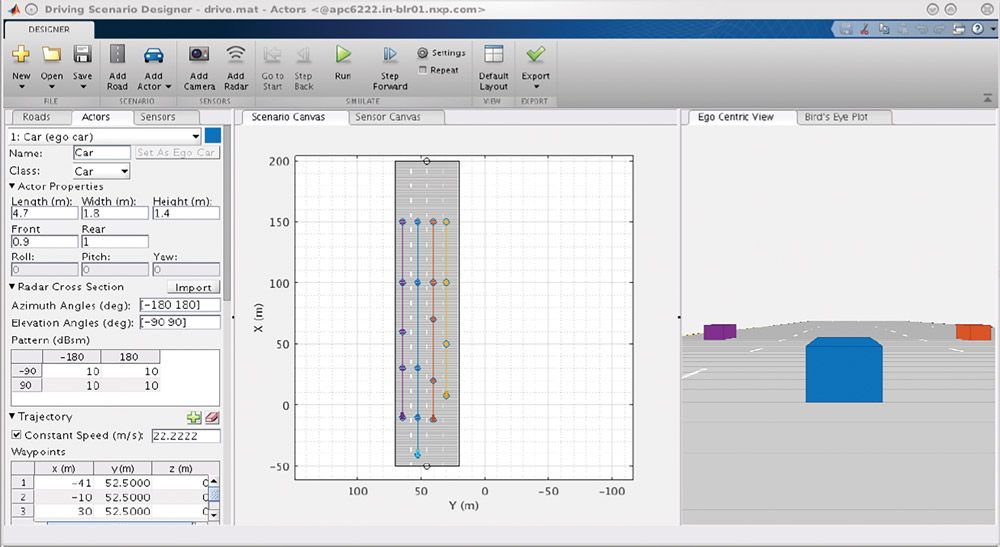

We have extended our methodology to include environment-in-the-loop verification. We now build driving scenarios using the Driving Scenario Designer app in Automated Driving Toolbox™ (Figure 4). Prebuilt scenarios in the app represent the Euro NCAP test protocols, our customers’ benchmark for evaluating radar system performance.

Next, we build a radar sensor model with Phased Array Toolbox™. To match this model with the datasheet specifications of our actual sensor, we adjust parameters for antenna aperture, peak transmit power, the receiver noise figure, and the number of antenna elements. We also adjust parameters that affect the frequency-modulated continuous-wave (FMCW) waveform, including maximum range, chirp duration, sweep bandwidth, and sample rate. We integrate the sensor model into the driving scenario that we created earlier, virtually mounting the radar sensor on the ego vehicle (Figure 5).
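The FMCW waveform parameters listed above are tied together by a few standard relations: the sweep slope is the bandwidth divided by the chirp duration, the range resolution is c/(2B), and a target at range R produces a beat frequency of 2·R·slope/c. The Python sketch below checks one parameter set for consistency. The numeric values are illustrative rather than datasheet values, the function name is hypothetical, and the sample-rate check assumes real-valued sampling at the Nyquist limit.

```python
C = 299_792_458.0  # speed of light in m/s


def fmcw_summary(sweep_bandwidth_hz, chirp_duration_s, sample_rate_hz, max_range_m):
    """Derive basic FMCW quantities and check that the sample rate covers max range."""
    slope = sweep_bandwidth_hz / chirp_duration_s        # Hz per second
    range_resolution = C / (2 * sweep_bandwidth_hz)      # metres
    beat_at_max_range = 2 * max_range_m * slope / C      # Hz
    # Assumption: real sampling, so the largest beat frequency must stay below fs/2.
    max_unambiguous_range = sample_rate_hz * C / (4 * slope)
    return {
        "slope_hz_per_s": slope,
        "range_resolution_m": range_resolution,
        "beat_at_max_range_hz": beat_at_max_range,
        "max_unambiguous_range_m": max_unambiguous_range,
        "sample_rate_ok": sample_rate_hz >= 2 * beat_at_max_range,
    }


# Illustrative values only: 150 MHz sweep, 10 us chirp, 50 MS/s, 200 m maximum range.
summary = fmcw_summary(150e6, 10e-6, 50e6, 200.0)
for name, value in summary.items():
    print(name, value)
```

A check like this makes it easier to spot a sensor model whose waveform settings cannot actually support the maximum range it claims.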

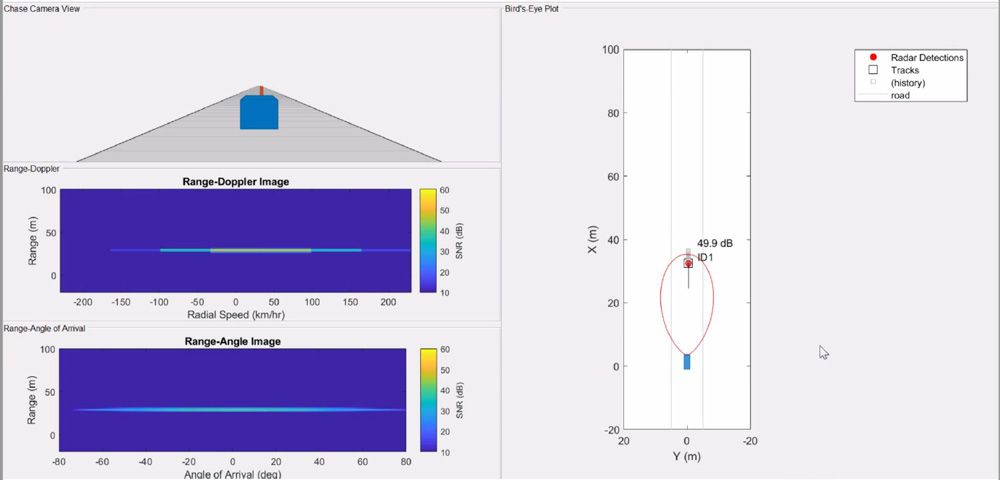

We then execute the driving scenario and capture the mixer output of the sensor, a signal dechirped from the radar reflections of objects in the scenario. We pass this dechirped signal through a Simulink® model of our ADC design to produce digital IQ data, which we feed into our digital baseband processing chain.
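To make the signal path concrete, the following Python sketch walks a single static point target through a heavily simplified version of these steps: an ideal dechirped tone, an ideal quantizer standing in for the ADC, and a DFT peak search standing in for the first stage of baseband processing. It is a toy model, not the Simulink ADC model or the actual processing chain. It ignores noise, path loss, and Doppler, and every name and parameter value is an assumption made for illustration.

```python
import cmath
import math

C = 299_792_458.0  # speed of light in m/s


def quantize(value, bits):
    """Ideal quantizer for inputs in [-1, 1]; values outside that range are clipped."""
    levels = 2 ** (bits - 1) - 1
    clipped = max(-1.0, min(1.0, value))
    return round(clipped * levels) / levels


def dechirped_iq(target_range_m, slope, sample_rate_hz, num_samples, bits):
    """Quantized IQ samples of the beat tone produced by one static point target."""
    beat_hz = 2 * target_range_m * slope / C
    iq = []
    for i in range(num_samples):
        phase = 2 * math.pi * beat_hz * i / sample_rate_hz
        iq.append(complex(quantize(math.cos(phase), bits),
                          quantize(math.sin(phase), bits)))
    return iq


def estimate_range(iq, slope, sample_rate_hz):
    """Locate the strongest positive-frequency DFT bin and convert it to range."""
    n = len(iq)
    best_bin, best_mag = 0, -1.0
    for k in range(n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(iq))
        if abs(acc) > best_mag:
            best_bin, best_mag = k, abs(acc)
    beat_hz = best_bin * sample_rate_hz / n
    return beat_hz * C / (2 * slope)


# Illustrative values: 1.5e13 Hz/s slope, 50 MS/s, 256 samples, 12-bit quantizer.
SLOPE = 150e6 / 10e-6
iq = dechirped_iq(60.0, SLOPE, 50e6, 256, bits=12)
print(f"estimated range: {estimate_range(iq, SLOPE, 50e6):.2f} m")
```

The estimate is limited to the spacing of the DFT bins, which for these values is roughly 2 m. A scenario-driven simulation replaces the single ideal tone with the superposition of reflections from every object in the scene.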

With this setup, we can generate IQ data based on Euro NCAP driving scenarios and conduct virtual field trials of our digital processing chain early in the development phase, potentially a year or more before first silicon (Figure 6).

Future Work

We have extended our use of the new methodologies and workflows to next-generation radar transceivers. For these products, we will incorporate environmental effects into our scenarios so that we can see how the design performs in the presence of rain or fog, for example.

Nothing restricts this verification methodology to the digital components of automotive radar systems. We look forward to applying virtual field trials to analog components and to other applications, such as car-to-car communication systems. Although this article focused on verifying the digital portion of the sensor implementation, the environment-in-the-loop approach can be extended to mixed-signal and RF designs, such as the ADC in the sensor design.

Many thanks to my NXP Semi team member Kaushik Vasanth for implementing our environment-in-the-loop verification methodology, and to Vidya Viswanathan of MathWorks for offering timely technical support.

Published 2020