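Train Network on Image and Feature Data

This example shows how to train a network that classifies handwritten digits using both image and feature input data.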

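Load Training Data

Load the digit images, labels, and angles of clockwise rotation.

load DigitsDataTrain

To train a network with multiple inputs using the trainnet function, create a single datastore that contains the training predictors and responses. To convert numeric arrays to datastores, use arrayDatastore. Then, use the combine function to combine them into a single datastore.

dsX1Train = arrayDatastore(XTrain,IterationDimension=4);
dsX2Train = arrayDatastore(anglesTrain);
dsTTrain = arrayDatastore(labelsTrain);
dsTrain = combine(dsX1Train,dsX2Train,dsTTrain);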
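To check what the combined datastore hands to trainnet, it can help to read a single observation. The following is a minimal sketch that uses small synthetic arrays in place of the digits data; the array sizes and the names X, angles, labels, and sample are assumptions for illustration only. With the default arrayDatastore output type, each read is expected to return a 1-by-3 cell array holding the image, the angle, and the label, in the order passed to combine.

```matlab
% Synthetic stand-ins for XTrain, anglesTrain, and labelsTrain (assumed sizes).
X = rand(28,28,1,10);                     % 10 grayscale images, observations along dim 4
angles = 90*rand(10,1) - 45;              % one angle per observation
labels = categorical(randi([0 9],10,1));  % one class label per observation

% Build one datastore per array; the images iterate over the fourth dimension.
dsX1 = arrayDatastore(X,IterationDimension=4);
dsX2 = arrayDatastore(angles);
dsT = arrayDatastore(labels);
ds = combine(dsX1,dsX2,dsT);

% Read the first observation: {image, angle, label}.
sample = read(ds);
disp(size(sample))
disp(size(sample{1}))
```

The column order of the cell array matters: trainnet treats the leading columns as network inputs and the last column as the target, so the datastores are combined as predictors first, responses last.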

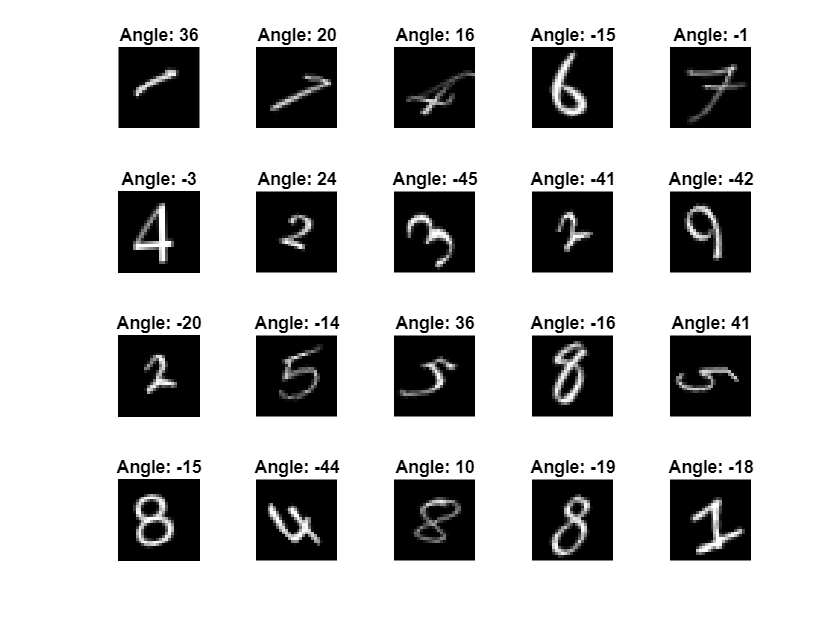

Display 20 random training images.

numObservationsTrain = numel(labelsTrain);
idx = randperm(numObservationsTrain,20);
figure
tiledlayout("flow");
for i = 1:numel(idx)
    nexttile
    imshow(XTrain(:,:,:,idx(i)))
    title("Angle: " + anglesTrain(idx(i)))
end

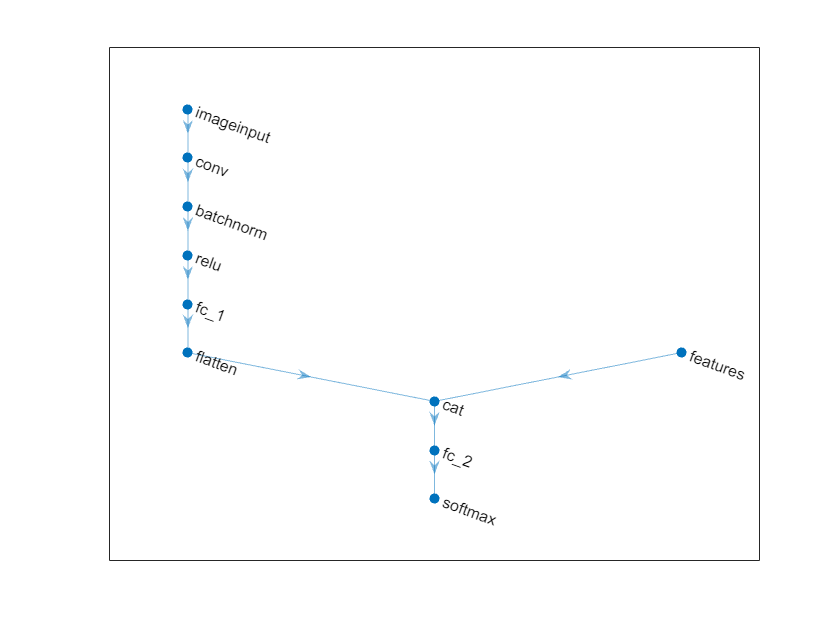

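Define Network Architecture

Define the following network.

For the image input, specify an image input layer with a size matching the input data.

For the feature input, specify a feature input layer with a size matching the number of input features.

For the image input branch, specify a block of convolution, batch normalization, and ReLU layers, where the convolution layer has 16 5-by-5 filters.

To convert the output of this block to a feature vector, include a fully connected layer of size 50.

To concatenate the output of the first fully connected layer with the feature input, use a flatten layer to flatten the "SSCB" (spatial, spatial, channel, batch) output of the fully connected layer so that it has the format "CB". Concatenate the output of the flatten layer with the feature input along the first dimension (the channel dimension).

For the classification output, include a fully connected layer with an output size matching the number of classes, followed by a softmax layer.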
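As a rough check on the image branch, the spatial size after the convolution layer can be worked out by hand. The sketch below assumes 28-by-28 input images (the text does not state the image size at this point) and assumes the convolution uses stride 1 and no padding, which are the convolution2dLayer defaults; the variable names are illustrative.

```matlab
% Assumed input size; in the example these values come from size(XTrain).
h = 28;
w = 28;
filterSize = 5;
numFilters = 16;
stride = 1;      % assumed (layer default)
padding = 0;     % assumed (layer default)

% Output size per spatial dimension: floor((in + 2*padding - filter)/stride) + 1
outH = floor((h + 2*padding - filterSize)/stride) + 1;
outW = floor((w + 2*padding - filterSize)/stride) + 1;
convOutputSize = [outH outW numFilters];
disp(convOutputSize)

% The fully connected layer maps all of these activations to 50 values.
numInputsToFC = prod(convOutputSize);
disp(numInputsToFC)
```

Batch normalization and ReLU do not change the activation size, so this is also the size of the input to the fully connected layer.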

Create an empty neural network.

net = dlnetwork;

Create a layer array containing the main branch of the network and add it to the network.

[h,w,numChannels,numObservations] = size(XTrain);
numFeatures = 1;
classNames = categories(labelsTrain);
numClasses = numel(classNames);
imageInputSize = [h w numChannels];
filterSize = 5;
numFilters = 16;
layers = [
    imageInputLayer(imageInputSize,Normalization="none")
    convolution2dLayer(filterSize,numFilters)
    batchNormalizationLayer
    reluLayer
    fullyConnectedLayer(50)
    flattenLayer
    concatenationLayer(1,2,Name="cat")
    fullyConnectedLayer(numClasses)
    softmaxLayer];

net = addLayers(net,layers);

Add a feature input layer to the network and connect it to the second input of the concatenation layer.

featInput = featureInputLayer(numFeatures,Name="features");
net = addLayers(net,featInput);
net = connectLayers(net,"features","cat/in2");
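The effect of the concatenation layer can be sketched with plain arrays. Here the flattened fully connected output and the feature input are both treated as "CB" matrices (channels in rows, observations in columns); the batch size of 8 and the names fcOut, features, and joined are arbitrary assumptions.

```matlab
batchSize = 8;                    % arbitrary mini-batch size (assumption)
fcOut = rand(50,batchSize);       % stand-in for the flattened fully connected output
features = rand(1,batchSize);     % stand-in for the single angle feature

% concatenationLayer(1,2) joins its two inputs along dimension 1 (channels).
joined = cat(1,fcOut,features);
disp(size(joined))
```

Each observation keeps its own column, so the final fully connected layer sees the 50 image-derived values and the angle together for every digit.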

Visualize the network in a plot.

figure
plot(net)

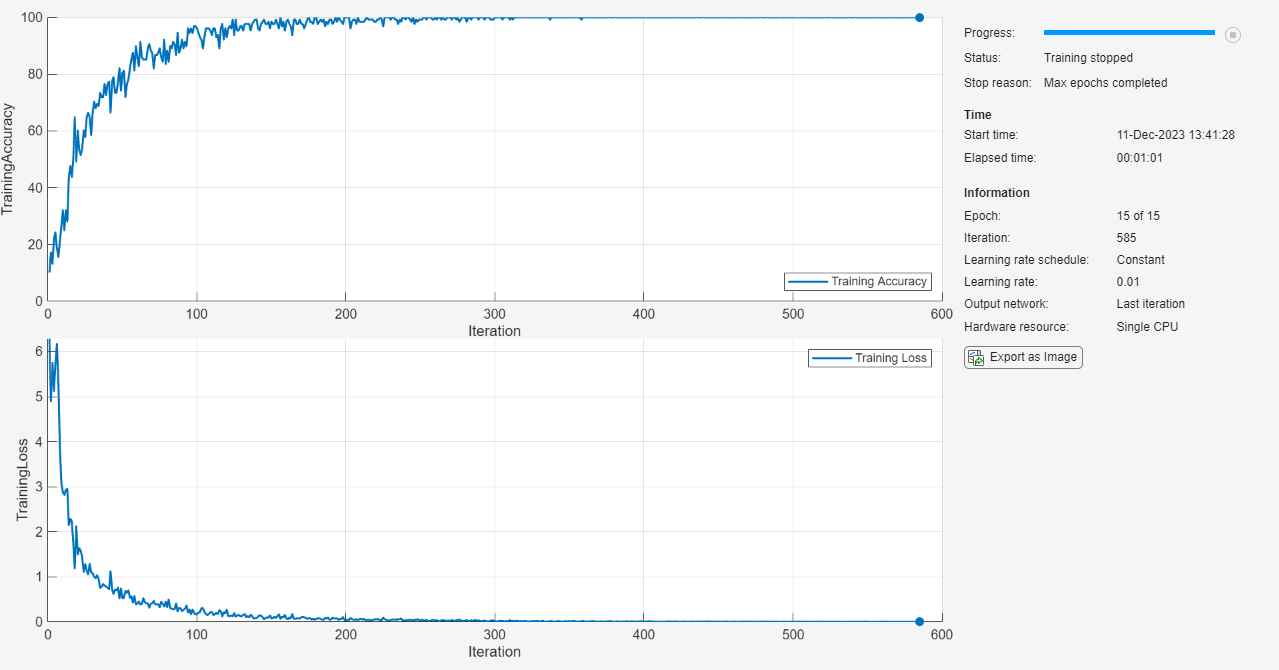

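Specify Training Options

Specify the training options. Choosing among the options requires empirical analysis. To explore different training option configurations by running experiments, you can use the Experiment Manager app.

Train using the SGDM optimizer.

Train for 15 epochs.

Train with a learning rate of 0.01.

Display the training progress in a plot and monitor the accuracy metric.

Suppress the verbose output.

options = trainingOptions("sgdm", ...
    MaxEpochs=15, ...
    InitialLearnRate=0.01, ...
    Plots="training-progress", ...
    Metrics="accuracy", ...
    Verbose=0);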
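For intuition about how the SGDM optimizer uses the learning rate, the following sketch applies one hand-written momentum update to a toy quadratic loss. It is not the trainnet implementation; the momentum value of 0.9, the toy loss, and all variable names are assumptions for illustration.

```matlab
learnRate = 0.01;                 % same value as InitialLearnRate in the options
momentum = 0.9;                   % assumed momentum value
theta = [1; -2];                  % toy parameters
velocity = zeros(size(theta));    % no previous step yet

% Gradient of the toy loss sum(theta.^2) at the current parameters.
grad = 2*theta;

% Momentum update: keep a fraction of the previous step, then step downhill.
velocity = momentum*velocity - learnRate*grad;
theta = theta + velocity;
disp(theta)
```

On later iterations the velocity term carries over part of the previous step, which tends to smooth the path the parameters take compared with plain gradient descent.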

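Train Network

Train the neural network using the trainnet function. For classification, use cross-entropy loss. By default, the trainnet function uses a GPU if one is available. Using a GPU requires a Parallel Computing Toolbox™ license and a supported GPU device. For information on supported devices, see GPU Computing Requirements (Parallel Computing Toolbox). Otherwise, the function uses the CPU. To specify the execution environment, use the ExecutionEnvironment training option.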

net = trainnet(dsTrain,net,"crossentropy",options);

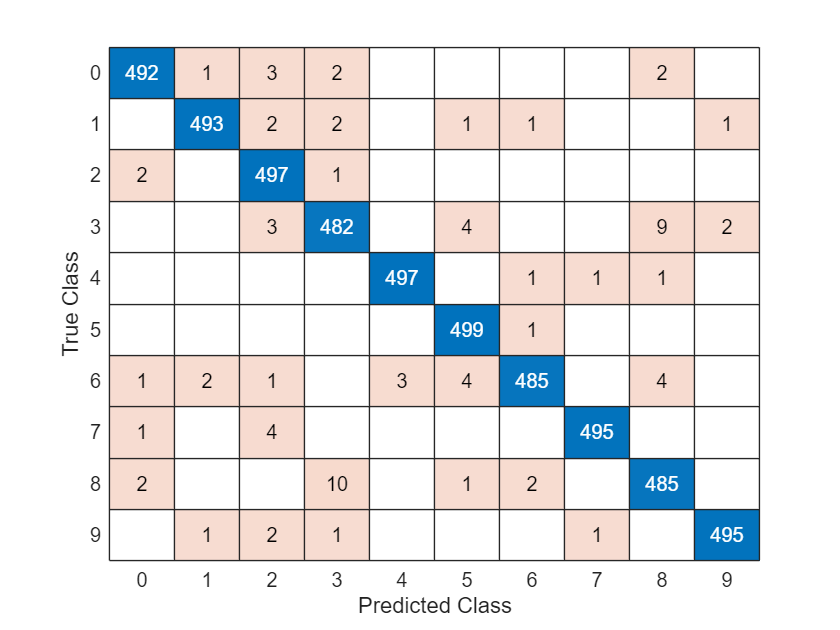
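To set the execution environment explicitly rather than rely on the default, one option is to pass ExecutionEnvironment when creating the training options. The sketch below uses canUseGPU to choose between "gpu" and "cpu"; the variable name env is illustrative, and the remaining options simply repeat the ones used in this example.

```matlab
% Choose the hardware explicitly instead of relying on the default behavior.
if canUseGPU
    env = "gpu";
else
    env = "cpu";
end

% Same options as in the example, plus the explicit execution environment.
options = trainingOptions("sgdm", ...
    MaxEpochs=15, ...
    InitialLearnRate=0.01, ...
    Plots="training-progress", ...
    Metrics="accuracy", ...
    Verbose=0, ...
    ExecutionEnvironment=env);
```

Passing these options to trainnet in place of the earlier ones should make the training hardware predictable across machines, which can help when comparing training times.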

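Test Network

Load the test data and create a datastore using the same steps as for the training data.

load DigitsDataTest
dsX1Test = arrayDatastore(XTest,IterationDimension=4);
dsX2Test = arrayDatastore(anglesTest);
dsTTest = arrayDatastore(labelsTest);
dsTest = combine(dsX1Test,dsX2Test,dsTTest);

Test the neural network using the testnet function. For single-label classification, evaluate the accuracy. The accuracy is the percentage of correct predictions. By default, the testnet function uses a GPU if one is available. To select the execution environment manually, use the ExecutionEnvironment argument of the testnet function.

accuracy = testnet(net,dsTest,"accuracy")

accuracy = 98.4000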
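The accuracy metric can be reproduced by hand as the percentage of predictions that match the true labels. A minimal sketch with made-up labels follows; the values and variable names are illustrative and are not results from this network.

```matlab
classes = 0:9;
trueLabels = categorical([3 1 4 1 5],classes);
predLabels = categorical([3 1 4 7 5],classes);   % one mistake out of five

% Percentage of predictions equal to the true label.
accuracyByHand = 100*mean(predLabels == trueLabels);
disp(accuracyByHand)
```

Both arrays are created with the same set of categories so that the element-wise comparison is well defined.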

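Visualize the predictions in a confusion chart. Make predictions using the minibatchpredict function and convert the scores to labels using the scores2label function. By default, the minibatchpredict function uses a GPU if one is available.

scores = minibatchpredict(net,XTest,anglesTest);
YTest = scores2label(scores,classNames);
figure
confusionchart(labelsTest,YTest)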

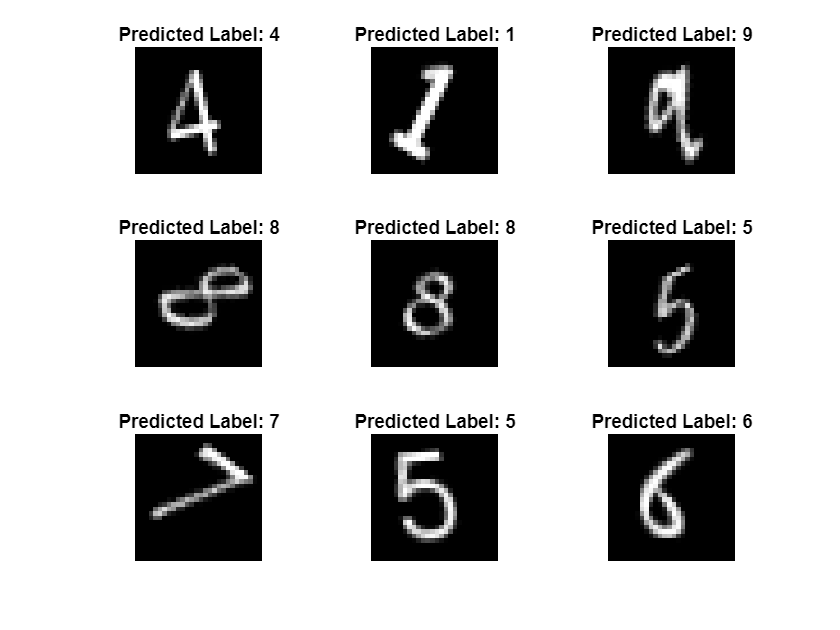
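Conceptually, converting scores to labels amounts to picking the class with the highest score for each observation. The sketch below does this by hand on a made-up score matrix, assuming one row per observation and one column per class; the class names and scores are hypothetical, and the code illustrates the idea rather than replacing scores2label.

```matlab
classNames = ["0" "1" "2"];     % hypothetical class names
scores = [0.7 0.2 0.1;          % made-up scores, one row per observation
          0.1 0.3 0.6;
          0.2 0.5 0.3];

% Index of the largest score in each row, then map the indices to class names.
[~,idx] = max(scores,[],2);
predicted = categorical(classNames(idx),classNames);
disp(predicted)
```

Passing the full list of class names as the second argument to categorical keeps every class as a category even if it is never predicted, which keeps confusion charts aligned across runs.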

View some of the images with their predictions.

idx = randperm(size(XTest,4),9);
figure
tiledlayout(3,3)
for i = 1:9
    nexttile
    I = XTest(:,:,:,idx(i));
    imshow(I)
    label = string(YTest(idx(i)));
    title("Predicted Label: " + label)
end

See Also

dlnetwork | dlfeval | dlarray | fullyConnectedLayer | Deep Network Designer | featureInputLayer | minibatchqueue | onehotencode | onehotdecode