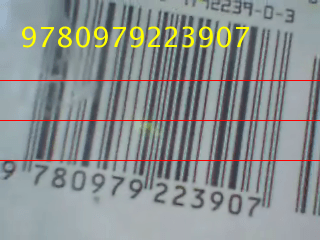

From Video Device

Capture live image data from image acquisition device

Libraries:

Image Acquisition Toolbox

Description

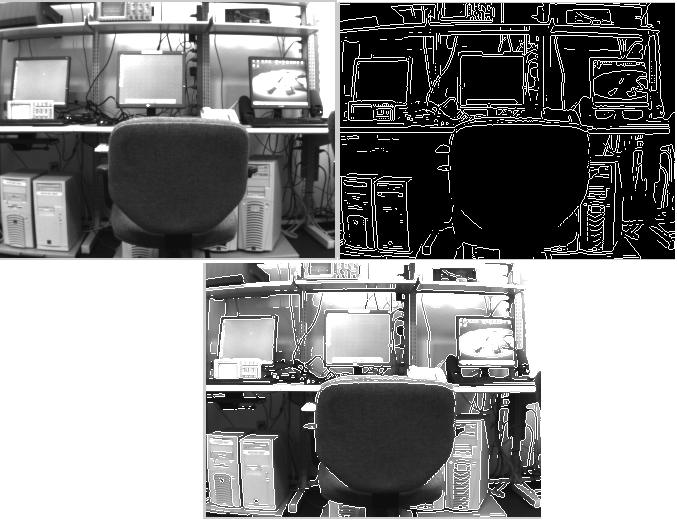

The From Video Device block lets you capture image and video data streams from image acquisition devices, such as cameras and frame grabbers, and bring the image data into a Simulink® model. The block also lets you configure and preview the acquisition directly from Simulink.

The From Video Device block opens, initializes, configures, and controls an acquisition device. The block opens, initializes, and configures the device only once, at the start of model execution. While the Read All Frames option is selected, the block queues incoming image frames in a FIFO (first in, first out) buffer and delivers one image frame for each simulation time step. If the buffer underflows, the block waits for up to 10 seconds until a new frame is in the buffer.
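
The buffering behavior described above can be pictured with a small Python model. This is only a conceptual sketch, not the block's implementation: the `FrameBuffer` class, the `simulate` helper, and the background "camera" thread are all hypothetical names introduced for illustration, and frames are represented by plain integers.

```python
import queue
import threading
import time


class FrameBuffer:
    """Toy FIFO frame buffer (hypothetical; illustrates the queueing idea only)."""

    def __init__(self, underflow_timeout_s=10.0):
        self._frames = queue.Queue()         # FIFO: first frame in is first frame out
        self._timeout = underflow_timeout_s  # longest wait when the buffer is empty

    def push(self, frame):
        """Enqueue one incoming frame (called by the acquisition side)."""
        self._frames.put(frame)

    def next_frame(self):
        """Return the oldest queued frame, waiting up to the timeout on underflow."""
        try:
            return self._frames.get(timeout=self._timeout)
        except queue.Empty:
            raise TimeoutError("no frame arrived before the underflow timeout") from None


def simulate(num_steps, frame_period_s=0.01, underflow_timeout_s=10.0):
    """Deliver one frame per simulated time step from a background 'camera'."""
    buf = FrameBuffer(underflow_timeout_s)

    def camera():
        # Stand-in for a device: produces frames 0, 1, 2, ... at a fixed period.
        for i in range(num_steps):
            time.sleep(frame_period_s)
            buf.push(i)

    threading.Thread(target=camera, daemon=True).start()
    # Each loop iteration plays the role of one simulation time step.
    return [buf.next_frame() for _ in range(num_steps)]


if __name__ == "__main__":
    print(simulate(5))
```

In this model, frames come out in the order they arrived, one per step, and a step that finds the buffer empty blocks until a frame shows up or the timeout elapses.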

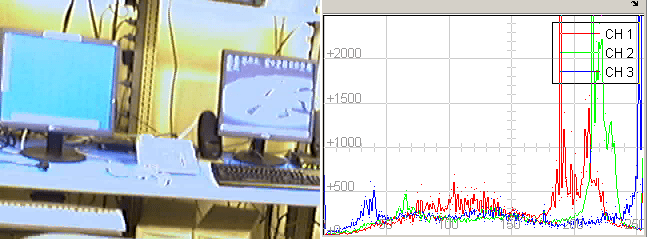

The block has no input ports. You can configure the block to have either one output port or three output ports corresponding to the uncompressed color bands red, green, and blue or Y, Cb, and Cr. For more information about configuring the output ports, see the Output section.
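
To illustrate the difference between the two port configurations, the following Python sketch splits a single three-band frame into three separate band planes. It assumes that the single-port form carries all three bands together as a height-by-width-by-3 array; the `split_bands` helper and the nested-list frame layout are hypothetical and chosen only for illustration.

```python
def split_bands(frame):
    """Split an H x W x 3 frame (nested lists of 3-tuples) into three H x W planes."""
    bands = []
    for b in range(3):
        # Take component b of every pixel, preserving the row/column layout.
        bands.append([[pixel[b] for pixel in row] for row in frame])
    return tuple(bands)


# A 2 x 2 example frame; each pixel is (band 1, band 2, band 3), e.g. (R, G, B).
frame = [
    [(255, 0, 0), (0, 255, 0)],
    [(0, 0, 255), (10, 20, 30)],
]

red, green, blue = split_bands(frame)
```

The same split applies whether the three bands are interpreted as red, green, and blue or as Y, Cb, and Cr; only the meaning of each plane changes.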

For an example of how to use this block, see Save Video Data to a File.

Other Supported Features

The From Video Device block supports the use of Simulink Accelerator mode. This feature speeds up the execution of Simulink models.

The From Video Device block supports the use of model referencing. This feature lets your model include other Simulink models as modular components.

The From Video Device block supports the use of Code Generation along with the packNGo function to group required source code and dependent shared libraries.

Examples

Ports

Output

Parameters

Extended Capabilities

Version History

Introduced in R2007a