imageMetrics

Syntax

imageSummary = imageMetrics(metrics)
[imageSummary,imagePredictions,imageGroundTruth] = imageMetrics(metrics)
[___] = imageMetrics(metrics,imageIndices)
[___] = imageMetrics(___,Name=Value)

Description

imageSummary = imageMetrics(metrics) evaluates the object detection metrics, metrics, for each image in the data set.

[imageSummary,imagePredictions,imageGroundTruth] = imageMetrics(metrics) evaluates the object detection metrics, metrics, for each image in the data set, and returns the performance summary, predictions, and ground truth information for each image.

[___] = imageMetrics(metrics,imageIndices) evaluates the object detection metrics, metrics, for the images in the data set specified by the image indices, imageIndices.

[___] = imageMetrics(___,Name=Value) specifies options that configure per-image metrics using name-value arguments, in addition to the input arguments from the previous syntaxes. For example, ClassNames=["person" "car"] specifies to evaluate per-image metrics for the person and car classes.

Examples

Load Detection Results and Ground Truth Annotations

Load precomputed detection results and ground truth labels. The ground truth data is a subset of the Indoor Object Detection Dataset created by Bishwo Adhikari, and is in the form of a boxLabelDatastore that returns the object bounding boxes and their corresponding class labels for every image [1]. The MAT files containing the precomputed results and annotations are attached to this example as supporting files. To train an object detector on a custom dataset and generate the detection results, see Multiclass Object Detection Using YOLO v2 Deep Learning.

load("yolov2IndoorObjectDetectorAnnotation100.mat"); load("yolov2IndoorObjectDetectorResults100.mat");

Evaluate and Summarize Object Detection Metrics

Evaluate the object detection results against the ground truth using the evaluateObjectDetection function. Compute the metrics summary across the dataset and classes using the summarize function.

evalMetrics = evaluateObjectDetection(results,blds,[0.5,0.75,0.9]); [summaryDataset,summaryClass] = summarize(evalMetrics); disp(summaryDataset)

    NumObjects    mAPOverlapAvg    mAP0.5     mAP0.75    mAP0.9
    __________    _____________    _______    _______    ________

       171           0.40012       0.74165    0.44671    0.012002

disp(summaryClass)

                        NumObjects    APOverlapAvg    AP0.5      AP0.75     AP0.9
                        __________    ____________    _______    _______    __________

    exit                    53          0.60733       0.9619     0.82106    0.039027
    fireextinguisher        49          0.54426       0.93878    0.68903    0.0049712
    chair                   55          0.35992       0.88449    0.19463    0.00062696
    clock                   13          0.4891        0.92308    0.52885    0.015385
    trashbin                 1          0             0          0          0
    screen                   0          NaN           NaN        NaN        NaN
    printer                  0          NaN           NaN        NaN        NaN

The summary across classes shows that the "trashbin" class has only one ground truth object in this evaluation dataset, while the "screen" and "printer" classes do not have any ground truth objects present.

Evaluate Per-Image Metrics

Compute per-image metrics for the data set.

imageSummary = imageMetrics(evalMetrics);

Display the first ten images and their summarized per-image metrics.

disp(imageSummary(1:10,:) )

ImageIndex NumPredictedObjects NumGroundTruthObjects TP FP FN Precision Recall

__________ ___________________ _____________________ __ __ __ _________ _______

1 2 2 2 0 0 1 1

2 2 2 2 0 0 1 1

3 1 1 1 0 0 1 1

4 0 2 0 0 2 NaN 0

5 3 2 2 1 0 0.66667 1

6 2 3 2 0 1 1 0.66667

7 2 2 2 0 0 1 1

8 2 2 2 0 0 1 1

9 2 2 2 0 0 1 1

10 3 2 2 1 0 0.66667 1

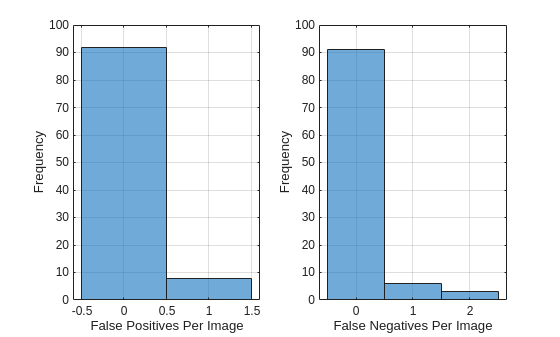

Plot the histogram of false positive (FP) and false negative (FN) counts for each image.

figure
subplot(1,2,1)
histogram(imageSummary.FP)
xlabel("False Positives Per Image")
ylabel("Frequency")
grid on
subplot(1,2,2)
histogram(imageSummary.FN)
xlabel("False Negatives Per Image")
ylabel("Frequency")
grid on

Most images have very few mistakes. The FP histogram shows that 8 images have one false positive detection. The FN histogram shows that 6 images have one false negative, and only 3 images have two false negatives.
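The counts read off the histograms can also be obtained numerically. The following is a minimal sketch that uses only the FP and FN values of the first ten rows of imageSummary displayed above, so its counts cover those ten images rather than the full data set. The variable names (numOneFP, imagesWithErrors) are illustrative.

```matlab
% FP and FN columns for images 1 through 10, copied from the table above.
FP = [0; 0; 0; 0; 1; 0; 0; 0; 0; 1];
FN = [0; 0; 0; 2; 0; 1; 0; 0; 0; 0];

% Number of images with exactly one false positive.
numOneFP = nnz(FP == 1);

% Indices of images with at least one mistake of either kind.
imagesWithErrors = find(FP + FN > 0);

fprintf("Images with one FP: %d\n", numOneFP)
fprintf("Images with any error: %s\n", mat2str(imagesWithErrors'))
```

With the full table, replace the two vectors with imageSummary.FP and imageSummary.FN.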

[1] Adhikari, Bishwo; Peltomaki, Jukka; Huttunen, Heikki. (2019). Indoor Object Detection Dataset [Data set]. 7th European Workshop on Visual Information Processing 2018 (EUVIP), Tampere, Finland.

Load precomputed detection results and ground truth labels. The ground truth data is a subset of the Indoor Object Detection Dataset created by Bishwo Adhikari [1]. The MAT files containing the precomputed results and annotations are attached to this example as supporting files.

load("yolov2IndoorObjectDetectorAnnotation100.mat"); load("yolov2IndoorObjectDetectorResults100.mat");

Evaluate Object Detection Results

Evaluate the object detection results against the ground truth using the evaluateObjectDetection function. Specify three overlap thresholds for the evaluation: 0.5, 0.75, and 0.9.

evalMetrics = evaluateObjectDetection(results,blds,[0.5 0.75 0.9]);

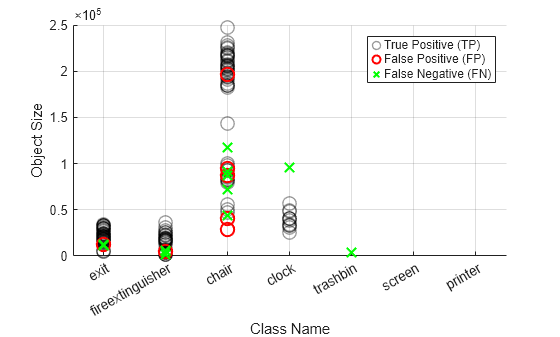

Analyze Detector Performance Across Object Size and Class

Compute per-image metrics for the data set.

[imageSummary,imagePredictions,imageGroundTruths] = imageMetrics(evalMetrics);

To analyze detector performance by object size and class, create a visual representation of the true positives, false positives, and false negatives for the entire data set. First, concatenate the prediction tables from all images into a single table, tblPreds.

tblPreds = vertcat(imagePredictions{:});

Create a logical array indicating which predictions are matched to ground truth objects, that is, which predictions are true positives (TPs).

isMatchedPred = logical(tblPreds.IsMatched);

Extract true positives (TPs) by keeping only the Label (class name) and Area (object size) columns for rows where predictions are matched to ground truth.

tp = tblPreds(isMatchedPred,["Label" "Area"]);

Extract false positives (FPs) by keeping only the Label (class name) and Area (object size) columns for rows where predictions are not matched to ground truth.

fp = tblPreds(~isMatchedPred,["Label" "Area"]);

Concatenate all ground truth tables into tblTruths, and create a logical array indicating which ground truths were matched by predictions. Extract false negatives (FNs) by keeping only Label and Area for ground truths not matched to any prediction.

tblTruths = vertcat(imageGroundTruths{:});

isMatchedGT = logical(tblTruths.IsMatched);

fn = tblTruths(~isMatchedGT,["Label" "Area"]);

Plot TPs as black circles using a swarm chart, with class name on the x-axis and object size on the y-axis. Plot false positives as red circles. Plot false negatives as green 'x' markers.

figure
swarmchart(tp.Label,tp.Area,[],"k",XJitter="none", ...
    LineWidth=1,SizeData=100,MarkerEdgeAlpha=0.4,DisplayName="True Positive (TP)")
hold on
swarmchart(fp.Label,fp.Area,[],"r",XJitter="none", ...
    LineWidth=1.5,SizeData=100,DisplayName="False Positive (FP)")
swarmchart(fn.Label,fn.Area,[],"gx",XJitter="none", ...
    LineWidth=1.5,SizeData=100,DisplayName="False Negative (FN)")
hold off
ylabel("Object Size")
xlabel("Class Name")
grid on
legend()

[1] Adhikari, Bishwo; Peltomaki, Jukka; Huttunen, Heikki. (2019). Indoor Object Detection Dataset [Data set]. 7th European Workshop on Visual Information Processing 2018 (EUVIP), Tampere, Finland.

Load precomputed detection results and ground truth labels. The ground truth data is a subset of the Indoor Object Detection Dataset created by Bishwo Adhikari [1]. The MAT files containing the precomputed results and annotations are attached to this example as supporting files.

load("yolov2IndoorObjectDetectorAnnotation100.mat"); load("yolov2IndoorObjectDetectorResults100.mat");

Evaluate Object Detection Results

Evaluate the object detection results against the ground truth using the evaluateObjectDetection function. Specify three overlap thresholds for the evaluation: 0.5, 0.75, and 0.9.

evalMetrics = evaluateObjectDetection(results,blds,[0.5 0.75 0.9]);

Compute Per-Image Metrics for Subset of Images

Compute the per-image metrics for a subset of images, and display the results.

imageResultsSubset = imageMetrics(evalMetrics,1:2:10); disp(imageResultsSubset)

ImageIndex NumPredictedObjects NumGroundTruthObjects TP FP FN Precision Recall

__________ ___________________ _____________________ __ __ __ _________ ______

1 2 2 2 0 0 1 1

3 1 1 1 0 0 1 1

5 3 2 2 1 0 0.66667 1

7 2 2 2 0 0 1 1

9 2 2 2 0 0 1 1

Compute Image Metrics at Specific Score Threshold

Specify a higher score threshold using the ScoreThreshold name-value argument, and compute the per-image metrics. At a higher score threshold, predictions with low confidence scores are dropped, which leads to fewer detected objects and more misses, or false negatives (FNs).

imageResultsThresh = imageMetrics(evalMetrics,1:2:10,ScoreThreshold=0.7); disp(imageResultsThresh)

ImageIndex NumPredictedObjects NumGroundTruthObjects TP FP FN Precision Recall

__________ ___________________ _____________________ __ __ __ _________ ______

1 2 2 2 0 0 1 1

3 1 1 1 0 0 1 1

5 1 2 1 0 1 1 0.5

7 1 2 1 0 1 1 0.5

9 1 2 1 0 1 1 0.5
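The effect of the score threshold can be reproduced by hand for a single image. The following is a minimal sketch, not the imageMetrics implementation. It uses the scores and IsMatched flags listed for image 39 in the prediction table elsewhere on this page, takes the ground truth count of 4 from that image's summary row, and assumes that predictions above the threshold keep their matches when lower-scoring predictions are dropped. Whether the comparison is inclusive is also an assumption, and the variable names are illustrative.

```matlab
% Scores and match flags for the four predictions of one image
% (values copied from the image 39 prediction table on this page).
score = [0.87222; 0.86277; 0.66098; 0.62006];
isMatched = [true; true; false; true];
numGroundTruth = 4;     % ground truth objects in the image

scoreThreshold = 0.7;   % illustrative threshold value

% Keep predictions at or above the threshold (inclusive by assumption).
keep = score >= scoreThreshold;

TP = nnz(isMatched & keep);     % kept predictions that are matched
FP = nnz(~isMatched & keep);    % kept predictions that are unmatched
FN = numGroundTruth - TP;       % ground truths left without a kept match

fprintf("TP = %d, FP = %d, FN = %d\n", TP, FP, FN)
```

With no threshold applied, the same flags give 3 TPs, 1 FP, and 1 FN, which matches the summary row for image 39. Raising the threshold to 0.7 removes the false positive but also one matched prediction, so the FN count rises to 2.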

Compute Image Metrics at Specific Overlap Threshold

Specify a higher overlap threshold using the OverlapThreshold name-value argument, and compute the per-image metrics. The OverlapThreshold value must be one of the overlap thresholds at which the detection results were evaluated using the evaluateObjectDetection function.

imageResultsThresh = imageMetrics(evalMetrics,1:2:10,ScoreThreshold=0.7,OverlapThreshold=0.75); disp(imageResultsThresh)

ImageIndex NumPredictedObjects NumGroundTruthObjects TP FP FN Precision Recall

__________ ___________________ _____________________ __ __ __ _________ ______

1 2 2 2 0 0 1 1

3 1 1 1 0 0 1 1

5 1 2 0 1 2 0 0

7 1 2 1 0 1 1 0.5

9 1 2 1 0 1 1 0.5

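When you specify a higher overlap threshold, fewer predictions overlap a ground truth box enough to count as a match, so more ground truth objects are missed (more FNs). For example, the prediction for image 5 changes from a TP to an FP.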

[1] Adhikari, Bishwo; Peltomaki, Jukka; Huttunen, Heikki. (2019). Indoor Object Detection Dataset [Data set]. 7th European Workshop on Visual Information Processing 2018 (EUVIP), Tampere, Finland.

Load precomputed detection results and ground truth labels. The ground truth data is a subset of the Indoor Object Detection Dataset created by Bishwo Adhikari [1]. The MAT files containing the precomputed results and annotations are attached to this example as supporting files.

load("yolov2IndoorObjectDetectorAnnotation100.mat"); load("yolov2IndoorObjectDetectorResults100.mat");

Evaluate Object Detection Results

Evaluate the object detection results against the ground truth using the evaluateObjectDetection function. Specify three overlap thresholds for the evaluation: 0.5, 0.75, and 0.9.

evalMetrics = evaluateObjectDetection(results,blds,[0.5 0.75 0.9]);

Identify Challenging Images Across All Classes

To identify images where the object detector performs poorly across all classes, analyze per-image detector performance using the false positive (FP) and false negative (FN) counts. First, compute the per-image metrics.

[summaryError,predsError,truthsError] = imageMetrics(evalMetrics,SortMetric="FP+FN",SortOrder="descend");

Select the 20 images with the highest number of incorrect detections, or the total number of FNs and FPs.

summaryError = summaryError(1:20,:); predsError = predsError(1:20); truthsError = truthsError(1:20);

Display the computed per-image metrics for the five images with the most incorrect detections.

disp(summaryError(1:5,:))

ImageIndex NumPredictedObjects NumGroundTruthObjects TP FP FN Precision Recall

__________ ___________________ _____________________ __ __ __ _________ _______

4 0 2 0 0 2 NaN 0

39 4 4 3 1 1 0.75 0.75

66 0 2 0 0 2 NaN 0

82 1 3 1 0 2 1 0.33333

5 3 2 2 1 0 0.66667 1
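The ordering produced by SortMetric="FP+FN" with SortOrder="descend" can be imitated on any summary table with sortrows. The following is a minimal sketch under stated assumptions: the small table T is built by hand from four rows shown on this page, the Errors variable is a hypothetical helper column, and the code does not claim to reproduce how imageMetrics breaks ties.

```matlab
% Hand-built excerpt of a per-image summary (images 1, 4, 5, and 6).
ImageIndex = [1; 4; 5; 6];
FP = [0; 0; 1; 0];
FN = [0; 2; 0; 1];
T = table(ImageIndex, FP, FN);

% Hypothetical helper column: total number of incorrect detections.
T.Errors = T.FP + T.FN;

% Sort so that the images with the most errors come first.
sorted = sortrows(T, "Errors", "descend");
disp(sorted)
```

Image 4, with two false negatives, moves to the top, and the error-free image 1 moves to the bottom.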

Display the object predictions for the image with the index 39. The third "chair" class detection for this image is a FP, with its IsMatched column field set to false.

disp(predsError{2})

    ImageIndex    Label    Score      OverlapScore    IsMatched    MatchedTruthLabel    MatchedTruthIndex    Area     BoundingBox
    __________    _____    _______    ____________    _________    _________________    _________________    _____    ____________________________

        39        chair    0.87222      0.74144         true          chair                     2            85352     468     61    227    376
        39        chair    0.86277      0.70789         true          chair                     1            98304     656     74    256    384
        39        chair    0.66098      0               false         <undefined>               0            28470    1018      1    146    195
        39        chair    0.62006      0.67055         true          chair                     4            55566     317     71    189    294

Identify Challenging Images for Specific Class

To identify images which are challenging for the object detector in detecting a specified class, analyze per-image detector performance using the FP and FN counts. To identify images where the detector struggled to identify objects of the "exit" class, specify the ClassNames name-value argument as "exit".

[summaryErrorClass,predsErrorClass,truthsErrorClass] = imageMetrics(evalMetrics,ClassNames="exit",OverlapThreshold=0.75, ...
    SortMetric="FP+FN",SortOrder="descend");

Select the 20 images with the highest number of incorrect detections, or the total number of FNs and FPs.

summaryErrorClass = summaryErrorClass(1:20,:); predsErrorClass = predsErrorClass(1:20); truthsErrorClass = truthsErrorClass(1:20,:);

Display the computed per-image metrics for the five images with the most incorrect detections.

disp(summaryErrorClass(1:5,:))

ImageIndex NumPredictedObjects NumGroundTruthObjects TP FP FN Precision Recall

__________ ___________________ _____________________ __ __ __ _________ ______

20 1 1 0 1 1 0 0

26 1 1 0 1 1 0 0

47 2 2 1 1 1 0.5 0.5

55 2 2 1 1 1 0.5 0.5

100 1 1 0 1 1 0 0

Display the object predictions for the image with the image index 47, which has one FP and one FN. The second "exit" class detection for this image is a FP, with its IsMatched column field set to false.

disp(predsErrorClass{3})

    ImageIndex    Label    Score      OverlapScore    IsMatched    MatchedTruthLabel    MatchedTruthIndex    Area     BoundingBox
    __________    _____    _______    ____________    _________    _________________    _________________    _____    ________________________

        47        exit     0.90114      0.91124         true          exit                      1            19902    464    221    186    107
        47        exit     0.68702      0.67807         false         <undefined>               0            29346    785    295    219    134

Display the ground truth information for the image. The second ground truth row for this image indicates a FN, with its IsMatched column field set to false.

disp(truthsErrorClass{3})

    ImageIndex    Label    IsMatched    Area     BoundingBox
    __________    _____    _________    _____    ________________________

        47        exit       true       19890    463    221    195    102
        47        exit       false      27032    746    305    248    109

[1] Adhikari, Bishwo; Peltomaki, Jukka; Huttunen, Heikki. (2019). Indoor Object Detection Dataset [Data set]. 7th European Workshop on Visual Information Processing 2018 (EUVIP), Tampere, Finland.

Load precomputed detection results and ground truth labels. The ground truth data is a subset of the Indoor Object Detection Dataset created by Bishwo Adhikari [1]. The MAT files containing the precomputed results and annotations are attached to this example as supporting files.

load("yolov2IndoorObjectDetectorAnnotation100.mat"); load("yolov2IndoorObjectDetectorResults100.mat");

Evaluate Object Detection Results

Evaluate the object detection results against the ground truth using the evaluateObjectDetection function. Specify three overlap thresholds for the evaluation: 0.5, 0.75, and 0.9.

evalMetrics = evaluateObjectDetection(results,blds,[0.5 0.75 0.9]);

Compute F1 Score Per Image

The F1 score is the harmonic mean of precision and recall, providing a balanced measure of classification performance. An F1 score of 1 indicates perfect precision and recall, while 0 indicates the worst performance.

Compute the image metrics over the data set.

[summaryError,predsError,truthsError] = imageMetrics(evalMetrics);

Display the first five rows of the per-image summary.

disp(summaryError(1:5,:))

ImageIndex NumPredictedObjects NumGroundTruthObjects TP FP FN Precision Recall

__________ ___________________ _____________________ __ __ __ _________ ______

1 2 2 2 0 0 1 1

2 2 2 2 0 0 1 1

3 1 1 1 0 0 1 1

4 0 2 0 0 2 NaN 0

5 3 2 2 1 0 0.66667 1

Note that precision is the ratio of the number of true positives and the total number of predicted positives. The value of precision on the 4th row is NaN, since there are no predictions on this particular image, leading to a division by zero in the ratio calculation. Recall is the ratio of the number of true positives and the number of ground truth objects in that image. The value of recall for this image is zero, since no objects are detected, while there are two ground truth objects actually present in the image.

Compute the F1 score over the data set using the precision and recall values from the summaryError table.

precision = summaryError.Precision; recall = summaryError.Recall; f1Score = 2 * (precision .* recall) ./ (precision + recall);

Organize the precision, recall and F1 score values into a table.

prfTable = table(precision, recall, f1Score, VariableNames={'Precision', 'Recall', 'F1 Score'});

Display the precision, recall, and F1 score values for the first five images.

disp(prfTable(1:5,:))

Precision Recall F1 Score

_________ ______ ________

1 1 1

1 1 1

1 1 1

NaN 0 NaN

0.66667 1 0.8
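The NaN in the fourth row is inherited from the undefined precision of that image. Substituting Precision = TP / (TP + FP) and Recall = TP / (TP + FN) into the harmonic mean gives the algebraically equivalent form F1 = 2*TP / (2*TP + FP + FN), which is defined whenever an image has at least one prediction or one ground truth object. The following is a minimal sketch of that alternative, using the TP, FP, and FN values of the five rows shown above. The variable name f1FromCounts is illustrative, and whether an image with no predictions should score 0 rather than NaN is a judgment call that depends on the analysis.

```matlab
% TP, FP, and FN counts for images 1 through 5, copied from the summary above.
TP = [2; 2; 1; 0; 2];
FP = [0; 0; 0; 0; 1];
FN = [0; 0; 0; 2; 0];

% F1 score computed directly from the counts. This form yields 0 instead of
% NaN for image 4, which has ground truth objects but no predictions.
f1FromCounts = 2*TP ./ (2*TP + FP + FN);

disp(table(TP, FP, FN, f1FromCounts))
```

With the full summary table, the same expression can be applied to summaryError.TP, summaryError.FP, and summaryError.FN. An image with no predictions and no ground truth objects still produces NaN, because the denominator is zero.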

[1] Adhikari, Bishwo; Peltomaki, Jukka; Huttunen, Heikki. (2019). Indoor Object Detection Dataset [Data set]. 7th European Workshop on Visual Information Processing 2018 (EUVIP), Tampere, Finland.

Input Arguments

metrics: Object detection metrics, specified as an objectDetectionMetrics object.

imageIndices: Indices of images for which to compute per-image object detection metrics, specified as an N-element numeric vector. Each element of the vector is a positive integer that represents an image index, and N is the number of images for which to compute metrics. By default, the function returns per-image metrics for all images.

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Example: imageMetrics(metrics,ClassNames=["person" "car"]) specifies to evaluate per-image metrics for the person and car classes.

ClassNames: Names of the classes for which to evaluate per-image metrics, specified as a string scalar or character vector for a single class, or as a string array such as ["person" "car"] for multiple classes. If you specify multiple classes, the function computes averaged metrics across the classes. By default, the function computes metrics for all classes stored in the ClassNames property of the objectDetectionMetrics object metrics.

ScoreThreshold: Confidence score threshold, specified as a non-negative scalar. The imageMetrics function drops detections with a confidence score below this threshold before it computes the per-image metrics. The value of ScoreThreshold must be in the predicted confidence score range specified by the detectionResults argument of the evaluateObjectDetection function. Set the score threshold above the confidence threshold at which the detector was run.

OverlapThreshold: Bounding box overlap threshold, specified as a numeric scalar or numeric vector. Each element of the numeric vector represents a box overlap threshold value over which the mean average precision is computed. The value must be one of the overlap thresholds at which the detection results were evaluated using the evaluateObjectDetection function. By default, the overlap threshold is the first element of the OverlapThreshold property of the objectDetectionMetrics object metrics.

When the intersection over union (IoU) of the pixels in the ground truth bounding box and the predicted bounding box is equal to or greater than the overlap threshold, the detection is a match to the ground truth. The IoU is the number of pixels in the intersection of the bounding boxes divided by the number of pixels in the union of the bounding boxes.
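The IoU test described above can be sketched for a pair of axis-aligned boxes in [x y width height] form. This is a minimal illustration, not the matching code used by evaluateObjectDetection or imageMetrics. The two boxes are the first prediction and the first ground truth of image 47 from the examples on this page, the 0.75 threshold is the one used in that example, and the variable names are illustrative.

```matlab
% Predicted and ground truth boxes, each as [x y width height].
boxPred  = [464 221 186 107];
boxTruth = [463 221 195 102];
overlapThreshold = 0.75;

% Edges of the overlapping rectangle.
xLeft   = max(boxPred(1), boxTruth(1));
yTop    = max(boxPred(2), boxTruth(2));
xRight  = min(boxPred(1) + boxPred(3), boxTruth(1) + boxTruth(3));
yBottom = min(boxPred(2) + boxPred(4), boxTruth(2) + boxTruth(4));

% Intersection area is zero when the boxes do not overlap.
interArea = max(0, xRight - xLeft) * max(0, yBottom - yTop);

% Union area is the sum of both areas minus the shared part.
unionArea = boxPred(3)*boxPred(4) + boxTruth(3)*boxTruth(4) - interArea;

iou = interArea / unionArea;
isMatch = iou >= overlapThreshold;

fprintf("IoU = %.5f, match = %d\n", iou, isMatch)
```

The result, approximately 0.91124, agrees with the OverlapScore listed for this prediction. If Computer Vision Toolbox is available, bboxOverlapRatio(boxPred,boxTruth) should give the same ratio for axis-aligned boxes.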

SortMetric: Sorting metric that sorts the rows of the imageMetrics function output, specified as one of these options:

- "none": Do not sort.
- "FP": Sort by the number of false positive detections.
- "FN": Sort by the number of false negative detections.
- "FP+FN": Sort by the total number of false positive and false negative detections.
- "Precision": Sort by the precision value.
- "Recall": Sort by the recall value.

Tip

Use the SortMetric argument to identify the images with the most false positives or false negatives. This can help determine the types of images for which the detector exhibits the lowest performance.

SortOrder: Sorting order for the rows of the imageMetrics function output, specified as one of these options:

- "descend": Sort in the descending order of the metric specified by the SortMetric name-value argument.
- "ascend": Sort in the ascending order of the metric specified by the SortMetric name-value argument.

Output Arguments

imageSummary: Per-image metrics summary, returned as a table with N rows, where N is the number of evaluated images. The columns are specified by these table variables.

- ImageIndex: Index of the image, based on the image order in the detectionResults argument of the evaluateObjectDetection function, returned as a positive integer.
- NumPredictedObjects: Number of predicted objects in the image, returned as a non-negative integer.
- NumGroundTruthObjects: Number of ground truth objects in the image, returned as a non-negative integer.
- TP: Number of true positive detections, returned as a non-negative integer.
- FP: Number of false positive detections, returned as a non-negative integer.
- FN: Number of false negatives, returned as a non-negative integer.
- Precision: Precision, returned as a numeric scalar in the range [0, 1], or as NaN when the image has no predictions. Precision is the ratio of the number of true positives (TP) to the total number of predicted positives: Precision = TP / (TP + FP), where FP is the number of false positives. Larger precision scores indicate that most detected objects match ground truth objects.
- Recall: Recall, returned as a numeric scalar in the range [0, 1]. Recall is the ratio of the number of true positives (TP) to the number of ground truth objects, which is the sum of true positives (TP) and false negatives (FN): Recall = TP / (TP + FN). Larger recall scores indicate that more of the ground truth objects are detected.
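The precision and recall definitions can be checked against the counts in a summary table. The following is a minimal sketch that uses the TP, FP, and FN values of images 1, 4, 5, and 6 from the examples on this page. It is not the imageMetrics implementation, and the variable names are illustrative.

```matlab
% TP, FP, and FN counts for images 1, 4, 5, and 6 from the examples.
TP = [2; 0; 2; 2];
FP = [0; 0; 1; 0];
FN = [0; 2; 0; 1];

% Element-wise ratios. In MATLAB, 0/0 evaluates to NaN, which is why an
% image with no predictions (TP + FP = 0) has an undefined precision.
precision = TP ./ (TP + FP);
recall    = TP ./ (TP + FN);

disp(table(TP, FP, FN, precision, recall))
```

The second row reproduces the NaN precision and zero recall reported for image 4, which has two ground truth objects and no predictions.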

imagePredictions: Per-image object predictions, returned as an N-by-1 cell array. N is the number of evaluated images.

Each element of the cell array is a table with P rows, where P is the number of predictions in the image, and these columns.

- ImageIndex: Index of the image, based on the image order in the detectionResults argument of the evaluateObjectDetection function, returned as a positive integer.
- Label: Predicted class label, returned as a string scalar or character vector.
- Score: Confidence score of the detector prediction, returned as a positive numeric scalar.
- OverlapScore: Bounding box overlap score between the ground truth and detected bounding boxes, returned as a non-negative numeric scalar.
- IsMatched: Logical that specifies whether a predicted bounding box is a true positive or a false positive, returned as a numeric or logical 1 (true) or 0 (false), respectively.
- MatchedTruthLabel: If the predicted bounding box is a true positive (matched to a ground truth), then MatchedTruthLabel specifies the class of the ground truth box, returned as a categorical array. Otherwise, it is undefined.
- MatchedTruthIndex: If the predicted bounding box is a true positive (matched to a ground truth), then MatchedTruthIndex specifies the row index of the matched ground truth in the imageGroundTruth table, returned as a positive integer. Otherwise, it is 0.
- Area: Area of the predicted bounding box, in pixels, returned as a positive integer.
- BoundingBox: Bounding box, returned as a 4-element or 5-element numeric vector. For an axis-aligned bounding box, the vector is of the form [x y width height], where x and y specify the upper-left corner of the rectangle, and width and height specify its lengths along the x- and y-axes, respectively. For a rotated bounding box, the vector is of the form [xcenter ycenter width height yaw].

If you specify "AOS" as an additional metric to compute using the AdditionalMetrics name-value argument of the evaluateObjectDetection function, each table contains these additional columns.

- Orientation: Orientation angle of the predicted bounding box, returned as a non-negative numeric scalar. The angle specifies the clockwise rotation around the center of the bounding box. Units are in degrees.
- OrientationDifference: Difference between the ground truth and predicted bounding box orientations, returned as a non-negative numeric scalar. Units are in degrees.

imageGroundTruth: Per-image ground truth, returned as an N-by-1 cell array. N is the number of evaluated images.

Each element of the cell array is a table with M rows, where M is the number of ground truth objects in the image, and these columns.

- ImageIndex: Index of the image, based on the image order in the detectionResults argument of the evaluateObjectDetection function, returned as a positive integer.
- Label: Class label of the ground truth object, returned as a string scalar or character vector.
- IsMatched: Logical that specifies whether the ground truth object is matched to a prediction or is a false negative, returned as a numeric or logical 1 (true) or 0 (false), respectively.
- Area: Area of the ground truth bounding box, in pixels, returned as a positive integer.
- BoundingBox: Bounding box, returned as a 4-element or 5-element numeric vector. For an axis-aligned bounding box, the vector is of the form [x y width height], where x and y specify the upper-left corner of the rectangle, and width and height specify its lengths along the x- and y-axes, respectively. For a rotated bounding box, the vector is of the form [xcenter ycenter width height yaw].

If you specify "AOS" as an additional metric to compute using the AdditionalMetrics name-value argument of the evaluateObjectDetection function, each table contains this additional column.

- Orientation: Orientation angle of the ground truth bounding box, returned as a non-negative numeric scalar. The angle specifies the clockwise rotation around the center of the bounding box. Units are in degrees.

Version History

Introduced in R2026a
