Recognize Text Using Optical Character Recognition (OCR)

This example shows how to use the ocr function from the Computer Vision Toolbox™ to perform optical character recognition.

Text Recognition Using the ocr Function

Recognizing text in images is useful in many computer vision applications such as image search, document analysis, and robot navigation. The ocr function provides an easy way to add text recognition functionality to a wide range of applications.

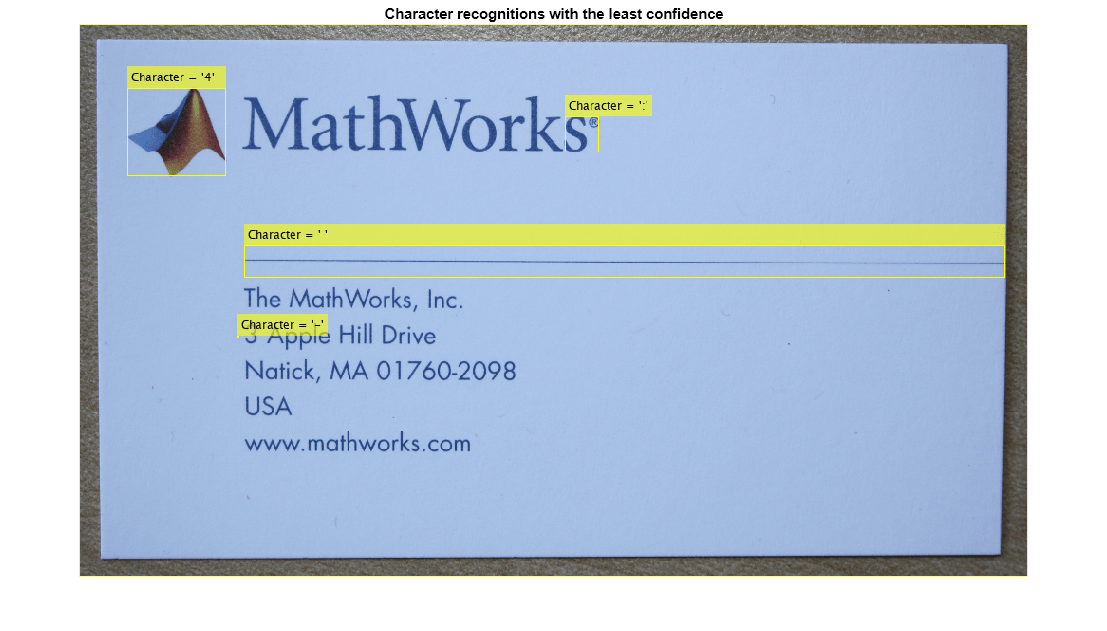

% Load an image. I = imread("businessCard.png"); % Perform OCR. results = ocr(I); % Display one of the recognized words. word = results.Words{2}

word = 'MathWorks:'

% Location of the word in I

wordBBox = results.WordBoundingBoxes(2,:)wordBBox = 1×4

173 66 376 82

% Show the location of the word in the original image. figure Iname = insertObjectAnnotation(I,"rectangle",wordBBox,word); imshow(Iname)

Information Returned by the ocr Function

The ocr functions returns the recognized text, the recognition confidence, and the location of the text in the original image. You can use this information to identify the location of misclassified text within the image.

% Find 5 characters with least confidences. [~ ,idx] = sort(results.CharacterConfidences); lowConfidenceIdx = idx(1:5); % Get the bounding box locations of the low confidence characters. lowConfBBoxes = results.CharacterBoundingBoxes(lowConfidenceIdx,:); % Get recognized characters. lowConfChars = results.Text(lowConfidenceIdx)'; % Annotate image with low confidence characters. str = "Character = '" + lowConfChars + "'"; Ilowconf = insertObjectAnnotation(I,"rectangle",lowConfBBoxes,str); figure imshow(Ilowconf) title("Character recognitions with the least confidence")

Here, the logo in the business card is incorrectly classified as a text character. These kind of OCR errors can be identified using the confidence values before any further processing takes place.

Challenges Obtaining Accurate Results

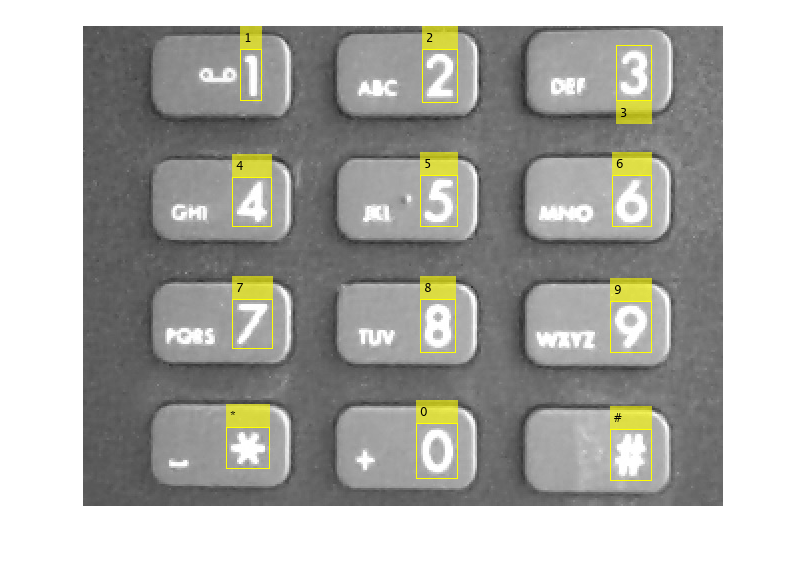

ocr performs best when the text is located on a uniform background and is formatted like a document with dark text on a light background. When the text appears on a non-uniform dark background, additional pre-processing steps are required to get the best OCR results. In this part of the example, you will try to locate the digits on a keypad. Although, the keypad image may appear to be easy for OCR, it is actually quite challenging because the text is on a non-uniform dark background.

I = imread("keypad.jpg");

I = im2gray(I);

figure

imshow(I)

% Run OCR on the image

results = ocr(I);

results.Textans =

'

'

The empty results.Text indicates that no text is recognized. In the keypad image, the text is sparse and located on an irregular background. In this case, the heuristics used for document layout analysis within ocr might be failing to find blocks of text within the image, and, as a result, text recognition fails. In this situation, disabling the automatic layout analysis, using the LayoutAnalysis parameter, may help improve the results.

% Set LayoutAnalysis to "Block" to instruct ocr to assume the image % contains just one block of text. results = ocr(I,LayoutAnalysis="Block"); results.Text

ans = 0×0 empty char array

What Went Wrong?

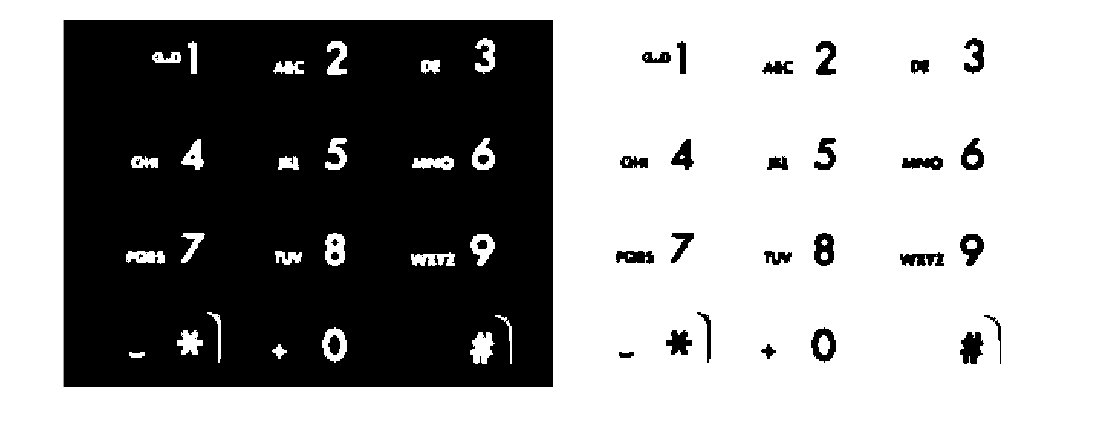

Adjusting the LayoutAnalysis parameter did not help. To understand why OCR continues to fail, you have to investigate the initial binarization step performed within ocr. You can use imbinarize to check this initial binarization step because both ocr and the default "global" method in imbinarize use Otsu's method for image binarization.

BW = imbinarize(I);

figure

imshowpair(I,BW,"montage")

After thresholding, the binary image contains no text. This is why ocr failed to recognize any text in the original image. You can help improve the results by pre-processing the image to improve text segmentation. The next part of the example explores two useful pre-processing techniques.

Image Pre-processing Techniques to Improve Results

The poor text segmentation seen above is caused by the non-uniform background in the image, i.e. the light-gray keys surrounded by dark gray. You can use the following pre-processing technique to remove the background variations and improve the text segmentation. Additional details about this technique are given in the example entitled Correct Nonuniform Illumination and Analyze Foreground Objects.

% Remove keypad background. Icorrected = imtophat(I,strel("disk",15)); BW1 = imbinarize(Icorrected); figure imshowpair(I,BW1,"montage")

After removing the background variation, the digits are now visible in the binary image. However, there are a few artifacts at the edge of the keys and the small text next to the digits that may continue to hinder accurate OCR of the whole image. Additional pre-processing using morphological reconstruction helps to remove these artifacts and produce a cleaner image for OCR.

% Perform morphological reconstruction and show binarized image. marker = imerode(Icorrected,strel("line",10,0)); Iclean = imreconstruct(marker,Icorrected); Ibinary = imbinarize(Iclean); figure imshowpair(Iclean,Ibinary,"montage")

Now invert the clean binarized image to produce an image containing dark text on a light background for OCR.

BW2 = imcomplement(Ibinary);

figure

imshowpair(Ibinary,BW2,"montage")

After these pre-processing steps, the digits are now well segmented from the background and ocr produces some results.

results = ocr(BW2,LayoutAnalysis="block");

results.Textans =

'ww] 2 x 3

md ud wb

on/ wB wm?

-* . 0 #)

'

The results look largely inaccurate except for few characters. This is due to difference in sizes of characters in the keypad which is causing the automatic layout analysis to fail.

One approach to improve the results is to leverage a priori knowledge about the text within the image. In this example, the text you are interested in contains only numeric digits and *# and ' characters. You can improve the results by constraining ocr to only select the best matches from the set "0123456789*#".

% Use the "CharacterSet" parameter to constrain OCR results = ocr(BW2,CharacterSet="0123456789*#"); results.Text

ans =

'4

78

*0

'

The results are now better and contain only characters from the given character set. However, there are still few characters of interest in the image that are missing in the recognition results.

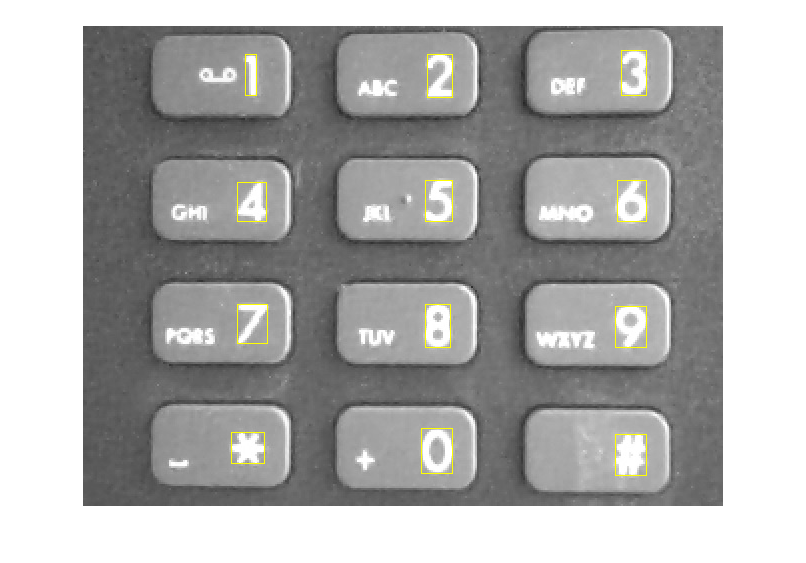

ROI-based Processing to Improve Results

To further improve the recognition results in this situation, identify specific regions in the image that ocr should process. In the keypad example image, these regions would be those that just contain the digits, *, and # characters. You may select the regions manually using imrect, or you can automate the process. For information about how to automatically detect text regions, see Segment and Read Text in Image and Automatically Detect and Recognize Text Using Pretrained CRAFT Network and OCR. In this example, you will use regionprops to find the characters of interest on the keypad.

% Use regionprops to find bounding boxes around text regions and measure their area. cc = bwconncomp(Ibinary); stats = regionprops(cc, ["BoundingBox","Area"]); % Extract bounding boxes and area from the output statistics. roi = vertcat(stats(:).BoundingBox); area = vertcat(stats(:).Area); % Show all the connected regions. img = insertObjectAnnotation(I,"rectangle",roi,area,"LineWidth",3); figure; imshow(img);

The smallest character of interest in this example is the digit "1". Use its area to filter any outliers.

% Define area constraint based on the area of smallest character of interest. areaConstraint = area > 347; % Keep regions that meet the area constraint. roi = double(roi(areaConstraint,:)); % Show remaining bounding boxes after applying the area constraint. img = insertShape(I,"rectangle",roi); figure; imshow(img);

Further processing based on a region's aspect ratio is applied to identify regions that are likely to contain a single character. This helps to remove the smaller text characters that are jumbled together next to the digits. In general, the larger the text the easier it is for ocr to recognize.

% Compute the aspect ratio. width = roi(:,3); height = roi(:,4); aspectRatio = width ./ height; % An aspect ratio between 0.25 and 1.25 is typical for individual characters % as they are usually not very short and wide or very tall and skinny. roi = roi( aspectRatio > 0.25 & aspectRatio < 1.25 ,:); % Show regions after applying the area and aspect ratio constraints. img = insertShape(I,"rectangle",roi); figure; imshow(img);

The remaining regions can be passed into the ocr function, which accepts rectangular regions of interest as input. The size of the regions are increased slightly to include additional background pixels around the text characters. This helps to improve the internal heuristics used to determine the polarity of the text on the background (e.g. light text on a dark background vs. dark text on a light background).

numAdditionalPixels = 5; roi(:,1:2) = roi(:,1:2) - numAdditionalPixels; roi(:,3:4) = roi(:,3:4) + 2*numAdditionalPixels;

Disable the automatic layout analysis by setting LayoutAnalysis to "none". When ROI inputs are provided manually, setting LayoutAnalysis to "block",“word”, “textline”, “character” or “none” may help improve results. Empirical analysis is required to determine the optimal layout analysis value.

results = ocr(BW2,roi,CharacterSet="0123456789*#",LayoutAnalysis="none");

The recognized text can be displayed on the original image using insertObjectAnnotation. The deblank function is used to remove any trailing characters, such as white space or new lines.

text = deblank({results.Text});

img = insertObjectAnnotation(I,"rectangle",roi,text);

figure;

imshow(img)

Although regionprops enabled you to find the digits in the keypad image, it may not work as well for images of natural scenes where there are many objects in addition to the text. For these types of images, the technique shown in the example Automatically Detect and Recognize Text Using Pretrained CRAFT Network and OCR may provide better text detection results.

Summary

This example showed how the ocr function can be used to recognize text in images, and how a seemingly easy image for OCR required extra pre-processing steps to produce good results.

References

[1] Ray Smith. Hybrid Page Layout Analysis via Tab-Stop Detection. Proceedings of the 10th international conference on document analysis and recognition. 2009.