Smile Detection by Using OpenCV Code in Simulink

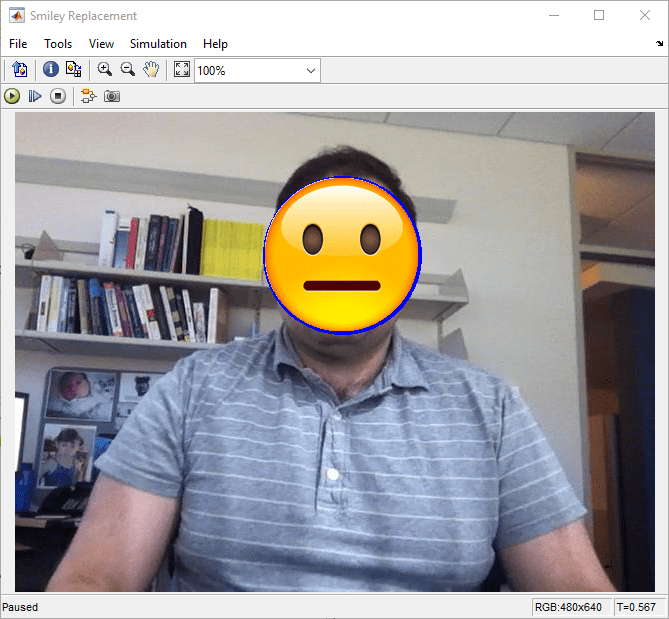

This example shows how to build a smile detector by using the OpenCV Importer app. The detector estimates the intensity of the smile on a face image or a video. Based on the estimated intensity, the detector identifies an appropriate emoji from its database, and then places the emoji on the smiling face.

First, import an OpenCV function into Simulink® by following the steps in Install and Use Computer Vision Toolbox Interface for OpenCV in Simulink. The OpenCV Importer app creates a Simulink library that contains a subsystem and a C Caller block for the specified OpenCV function. The subsystem is then used in a preconfigured Simulink model that accepts a face image or a video for smile detection. You can generate C++ code from the model, and then deploy the code on your target hardware.

You learn how to:

Import an OpenCV function into a Simulink library.

Use blocks from a generated library in a Simulink model.

Generate C++ code from a Simulink model.

Deploy the model on the Raspberry Pi hardware.

Set Up Your C++ Compiler

To build the OpenCV libraries, identify a compatible C++ compiler for your operating system, as described in Portable C Code Generation for Functions That Use OpenCV Library. Configure the identified compiler by using the mex -setup c++ command. For more information, see Choose a C++ Compiler.

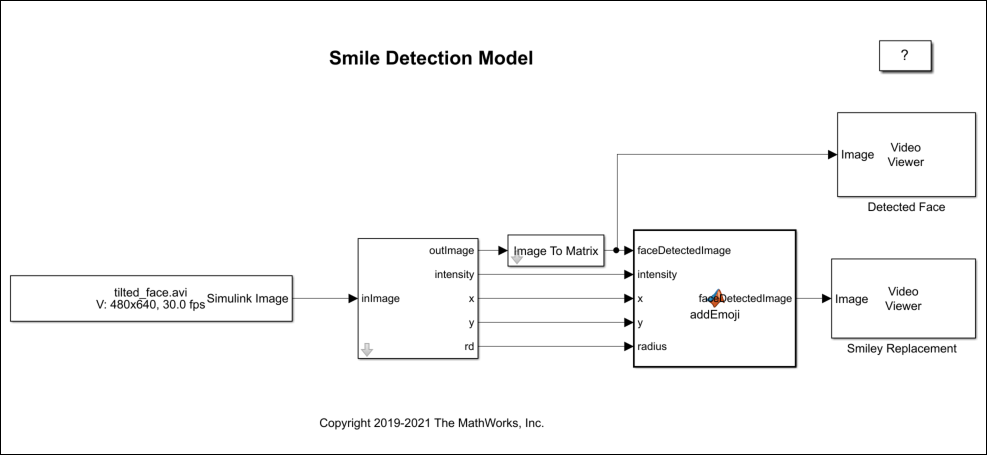

Model Description

In this example, a smile detector is implemented by using the Simulink model smileDetect.slx.

In this model, the subsystem_slwrap_detectAndDraw subsystem resides in the Smile_Detector_Lib library. You create the subsystem_slwrap_detectAndDraw subsystem by using the OpenCV Importer app. The subsystem accepts a face image or a video and provides these output values.

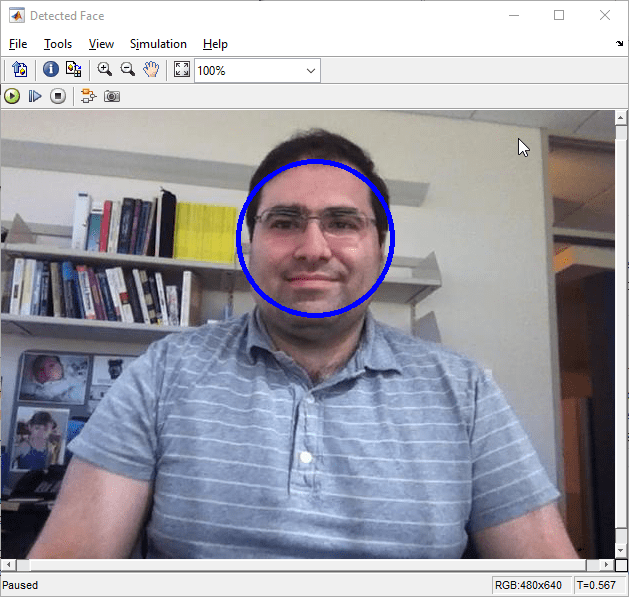

outImage: Face image with a circle

intensity: Intensity of the smile

x: x coordinate of the center of the circle

y: y coordinate of the center of the circle

rd: Radius of the circle
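The example does not state how the intensity output is computed inside smiledetect.cpp. One plausible scheme, similar in spirit to the smile detection sample that ships with OpenCV, counts the overlapping smile detections in each frame and normalizes that count against the range seen so far. The sketch below illustrates only that normalization. The class name SmileIntensityNormalizer and its update method are hypothetical, and the shipped source file may compute intensity differently.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical helper (not part of the example files): maps a per-frame
// detection count to an intensity in [0, 1).
class SmileIntensityNormalizer {
public:
    // neighborCount: number of overlapping smile detections in the current
    // frame (assumed to be non-negative).
    float update(int neighborCount) {
        assert(neighborCount >= 0);
        if (!initialized_) {
            // The first frame defines the baseline count.
            minCount_ = neighborCount;
            maxCount_ = neighborCount;
            initialized_ = true;
        }
        maxCount_ = std::max(maxCount_, neighborCount);
        // The +1 keeps the denominator nonzero while min == max.
        const float span = static_cast<float>(maxCount_ - minCount_ + 1);
        const float value =
            static_cast<float>(neighborCount - minCount_) / span;
        // Counts below the baseline are clamped to zero intensity.
        return std::max(0.0f, value);
    }

private:
    bool initialized_ = false;
    int minCount_ = 0;
    int maxCount_ = 0;
};
```

Because the maximum is tracked over time, the same count can map to a lower intensity later in a video than it did earlier. That is a property of this sketch, not a documented behavior of the subsystem.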

The model is configured to use the Simulink.ImageType data type. The outImage output from the subsystem is of the Simulink.ImageType data type. The Image To Matrix block converts outImage from Simulink.ImageType to a numerical matrix because a MATLAB Function block operates on numerical matrices only.

The MATLAB Function block accepts input from the subsystem_slwrap_detectAndDraw subsystem block. The MATLAB Function block has a set of emoji images. The smile intensity of the emoji in these images ranges from low to high. From the emoji images, the block identifies the most appropriate emoji for the estimated intensity and places it on the face image. The output is then provided to the Detected Face and Smiley Replacement Video Viewer blocks.
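The selection and placement logic described above can be sketched in a few lines. In the model this logic lives in a MATLAB Function block; the C++ below is only an illustration of the idea, not the code in that block. It assumes that the intensity lies in [0, 1], that the emoji images are ordered from low to high intensity, that x is a column index and y is a row index, and that images are interleaved RGB in row-major order. The names RgbImage, selectEmojiIndex, and overlayEmoji are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Minimal interleaved RGB image: row-major, 3 bytes per pixel (assumed layout).
struct RgbImage {
    int rows;
    int cols;
    std::vector<std::uint8_t> data;  // size is rows * cols * 3

    RgbImage(int r, int c)
        : rows(r), cols(c), data(static_cast<std::size_t>(r) * c * 3, 0) {}

    std::uint8_t* pixel(int row, int col) {
        return &data[(static_cast<std::size_t>(row) * cols + col) * 3];
    }
    const std::uint8_t* pixel(int row, int col) const {
        return &data[(static_cast<std::size_t>(row) * cols + col) * 3];
    }
};

// Maps an intensity in [0, 1] to an index into emojiCount images that are
// ordered from the weakest to the strongest smile.
int selectEmojiIndex(float intensity, int emojiCount) {
    assert(emojiCount > 0);
    const float clamped = std::min(1.0f, std::max(0.0f, intensity));
    const int index = static_cast<int>(clamped * emojiCount);
    return std::min(index, emojiCount - 1);  // intensity == 1 maps to the last image
}

// Pastes the emoji over the square that bounds the circle centered at (x, y)
// with radius rd. The emoji is resized by nearest-neighbor sampling, and
// pixels that fall outside the frame are skipped.
void overlayEmoji(RgbImage& frame, const RgbImage& emoji, int x, int y, int rd) {
    assert(rd > 0 && emoji.rows > 0 && emoji.cols > 0);
    const int side = 2 * rd + 1;
    for (int dy = 0; dy < side; ++dy) {
        const int row = y - rd + dy;
        if (row < 0 || row >= frame.rows) continue;
        for (int dx = 0; dx < side; ++dx) {
            const int col = x - rd + dx;
            if (col < 0 || col >= frame.cols) continue;
            const int srcRow = dy * emoji.rows / side;
            const int srcCol = dx * emoji.cols / side;
            const std::uint8_t* src = emoji.pixel(srcRow, srcCol);
            std::copy(src, src + 3, frame.pixel(row, col));
        }
    }
}
```

With five emoji images, for example, selectEmojiIndex(0.5f, 5) picks the third image (index 2). A fuller version would blend the emoji with the frame by using an alpha mask instead of overwriting a square region.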

Copy Example Folder to a Writable Location

To access the path to the example folder, at the MATLAB command line, enter:

OpenCVSimulinkExamples;

Each subfolder contains all the supporting files required to run the example.

Before proceeding with these steps, ensure that you copy the example folder to a writable folder location and change your current working folder to ...example\SmileDetector. All your output files are saved to this folder.

Step 1: Import OpenCV Function to Create a Simulink Library

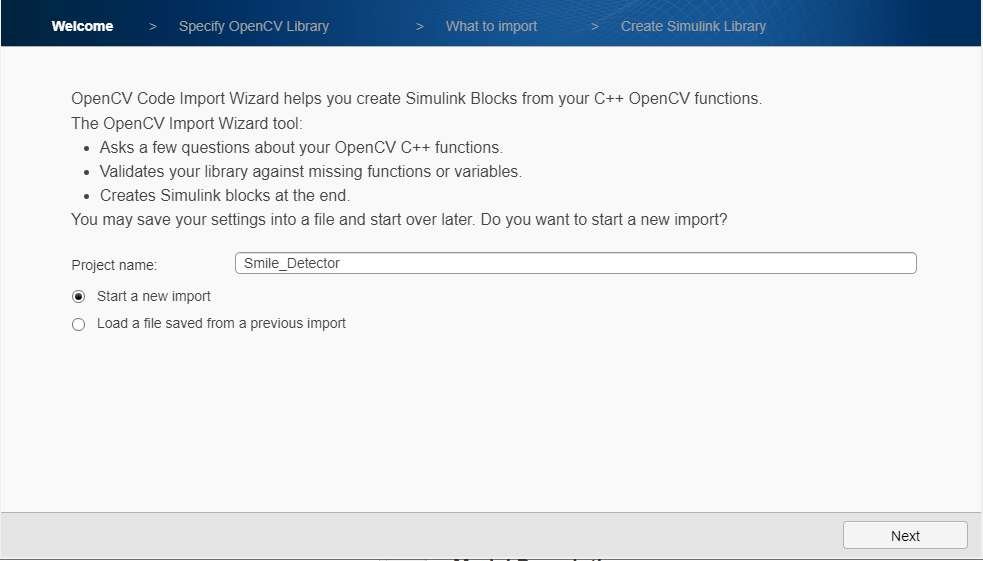

1. To start the OpenCV Importer app, click Apps on the MATLAB Toolstrip. On the Welcome page, specify the Project name as Smile_Detector. Make sure that the project name does not contain any spaces. Click Next.

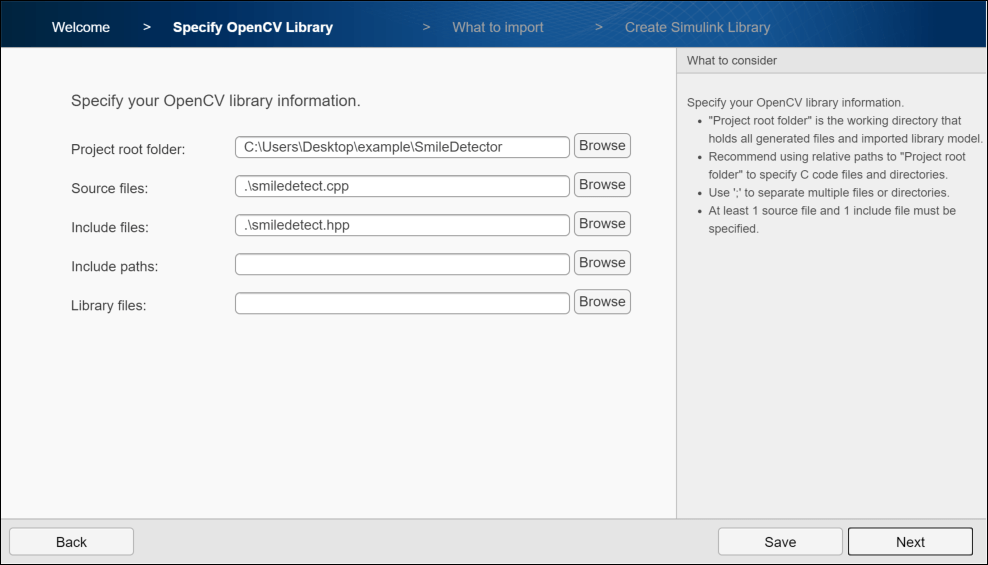

2. In Specify OpenCV Library, specify these file locations, and then click Next.

Project root folder: Specify the path of your example folder. This path is the path to the writable project folder where you have saved your example files. All your output files are saved to this folder.

Source files: Specify the path of the .cpp file located inside your project folder as smiledetect.cpp.

Include files: Specify the path of the .hpp header file located inside your project folder as smiledetect.hpp.

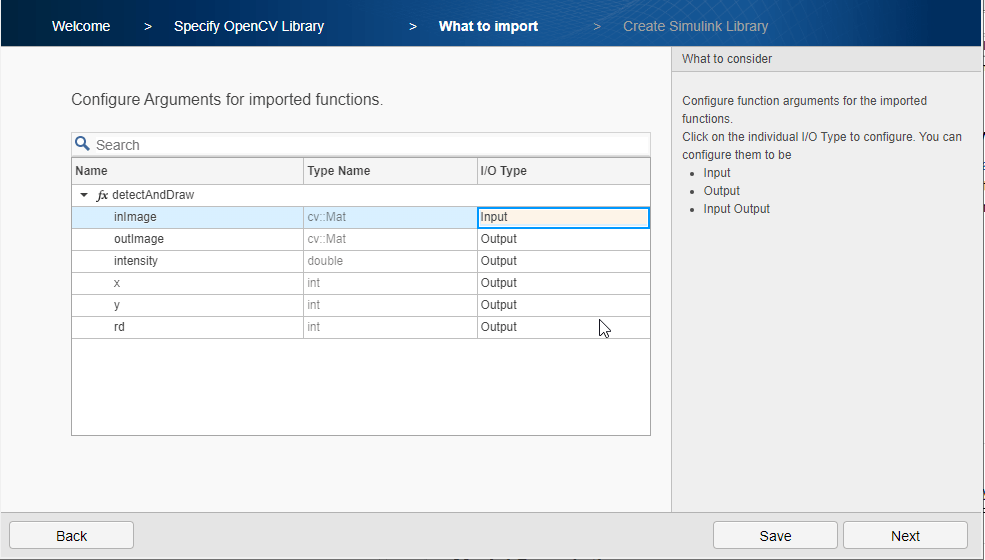

3. Analyze your library to find the functions and types for import. Once the analysis is complete, click Next. Select the detectAndDraw function and click Next.

4. From What to import, select the I/O Type for inImage as Input, and then click Next.

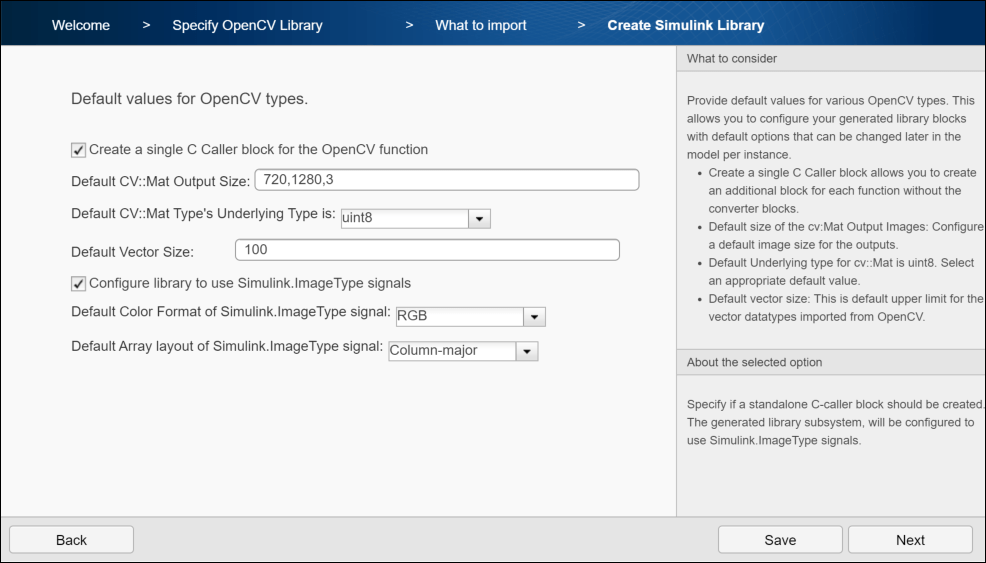

5. In Create Simulink Library, configure the default values of OpenCV types. By default, Create a single C-caller block for the OpenCV function is selected to create a C Caller block along with the subsystem in the generated Simulink library.

6. Select Configure library to use Simulink.ImageType signals to configure the generated library subsystem to use Simulink.ImageType signals.

7. Set Default Color Format of Simulink.ImageType signal to RGB, which is the default color format of the image.

8. Set Default Array layout of Simulink.ImageType signal to Column-major, which is the default array layout of the image.

9. To create a Simulink library, click Next.

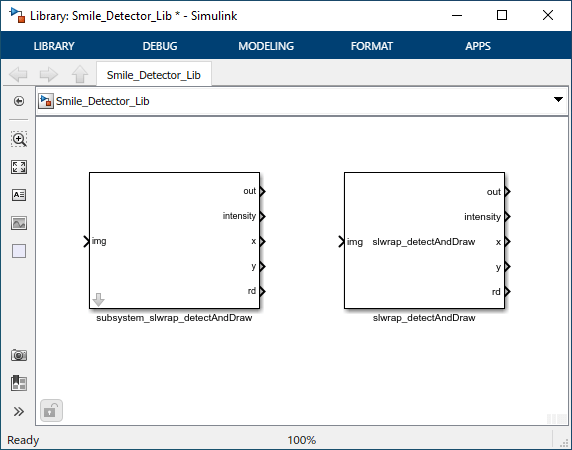

The app creates a Simulink library, Smile_Detector_Lib, from your OpenCV code in the project root folder. The library contains a subsystem and a C Caller block. You can use either of these blocks for model simulation. In this example, the subsystem subsystem_slwrap_detectAndDraw is used.
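The color format setting in step 7 matters because OpenCV functions conventionally work with BGR channel order, while the library here is configured for RGB. The example does not state where, or whether, the generated wrapper reorders channels. The sketch below only illustrates what an RGB-to-BGR (or BGR-to-RGB) reordering does to an interleaved 3-channel buffer. The function name swapRedAndBlue is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Swaps the first and third channel of every pixel in an interleaved
// 3-channel buffer, in place. Applying it twice restores the original order.
void swapRedAndBlue(std::vector<std::uint8_t>& pixels) {
    assert(pixels.size() % 3 == 0);
    for (std::size_t i = 0; i + 2 < pixels.size(); i += 3) {
        std::swap(pixels[i], pixels[i + 2]);
    }
}
```

If the colors in the simulated output look swapped (for example, skin tones appear blue), a mismatch between the configured color format and the order that the OpenCV code expects is a likely cause.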

Step 2: Use Generated Subsystem in Simulink Model

To use the generated subsystem subsystem_slwrap_detectAndDraw with the Simulink model smileDetect.slx:

1. In your MATLAB current folder, right-click the model smileDetect.slx and click Open from the context menu. Drag the generated subsystem from the library to the model. Connect the subsystem to the MATLAB Function block.

2. Double-click the subsystem and configure these parameter values:

Rows: 480

Columns: 640

Channels: 3

Underlying Type: uint8

3. Click Apply, and then click OK.
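These four parameters fix the size of every frame that passes through the subsystem. As a quick check of the arithmetic, the sketch below computes the number of bytes in one frame from the rows, columns, channels, and element size. The function name imageBufferBytes is hypothetical and is not part of the generated library.

```cpp
#include <cassert>
#include <cstddef>

// Number of bytes needed to store one image with the given dimensions.
// bytesPerElement is 1 for uint8 data.
std::size_t imageBufferBytes(std::size_t rows, std::size_t cols,
                             std::size_t channels,
                             std::size_t bytesPerElement) {
    assert(rows > 0 && cols > 0 && channels > 0 && bytesPerElement > 0);
    return rows * cols * channels * bytesPerElement;
}
```

For the values in this step, imageBufferBytes(480, 640, 3, 1) evaluates to 921600, so each 480-by-640 RGB frame of type uint8 occupies 921,600 bytes. An input image or video with different dimensions does not match the configured signal size.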

Step 3: Simulate the Smile Detector

On the Simulink Toolstrip, in the Simulation tab, click Run to simulate the model. After the simulation is complete, the Video Viewer blocks display the detected face. The model overlays an emoji on the face. The emoji represents the intensity of the smile.

Step 4: Generate C++ Code from the Smile Detector Model

Before you generate the code from the model, you must first ensure that you have write permission in your current folder.

To generate C++ code:

1. Open the smileDetect_codegen.slx model from your current MATLAB folder.

2. On the Apps tab on the Simulink toolstrip, select Embedded Coder. On the C++ Code tab, select the Settings list, then click C/C++ Code generation settings to open the Configuration Parameters dialog box. Verify these settings:

Under the Code Generation pane > in the Target selection section > Language is set to C++.

Under the Code Generation pane > in the Target selection section > Language standard is set to C++11 (ISO).

Under the Code Generation pane > Interface > in the Data exchange interface section > Array layout is set to Row-major.

3. If you want to generate production C++ code, where images are represented using the OpenCV class cv::Mat instead of the C++ class images::datatypes::Image implemented by The MathWorks®, under Data Type Replacement pane > select Implement images using OpenCV Mat class.

4. Connect the generated subsystem subsystem_slwrap_detectAndDraw to the MATLAB Function block.

5. To generate C++ code, under the C++ Code tab, click the Build button. After the model finishes building, the generated code opens in the Code view.

6. You can inspect the generated code. When a model contains signals of Simulink.ImageType data type, the code generator produces additional shared utility files. These files declare and define utilities to construct, destruct, and return information about meta attributes of the images:

image_type.h

image_type.cpp

The build process creates a ZIP file called smileDetect_with_ToOpenCV.zip in your current MATLAB working folder.
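The Array layout setting verified in step 2 determines how matrix elements are ordered in memory in the generated code. The sketch below shows the linear index of element (row, col) under each layout and a conversion between the two for a single-channel matrix. It is a general illustration of row-major and column-major storage, not an excerpt from the generated code, and the function names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Row-major: elements of one row are contiguous.
std::size_t rowMajorIndex(std::size_t row, std::size_t col,
                          std::size_t rows, std::size_t cols) {
    assert(row < rows && col < cols);
    return row * cols + col;
}

// Column-major: elements of one column are contiguous.
std::size_t columnMajorIndex(std::size_t row, std::size_t col,
                             std::size_t rows, std::size_t cols) {
    assert(row < rows && col < cols);
    return col * rows + row;
}

// Copies a rows-by-cols matrix from column-major to row-major storage.
std::vector<std::uint8_t> columnMajorToRowMajor(
        const std::vector<std::uint8_t>& src,
        std::size_t rows, std::size_t cols) {
    assert(src.size() == rows * cols);
    std::vector<std::uint8_t> dst(src.size());
    for (std::size_t r = 0; r < rows; ++r) {
        for (std::size_t c = 0; c < cols; ++c) {
            dst[rowMajorIndex(r, c, rows, cols)] =
                src[columnMajorIndex(r, c, rows, cols)];
        }
    }
    return dst;
}
```

For a 480-by-640 matrix, element (1, 2) sits at linear index 642 in row-major storage and at index 961 in column-major storage. OpenCV stores image rows contiguously, which is one reason a row-major layout is convenient when the generated code exchanges images with OpenCV functions.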

Deploy the Smile Detector on the Raspberry Pi Hardware

Before you deploy the model, connect the Raspberry Pi to your computer. Wait until the PWR LED on the hardware starts blinking.

In the Settings drop-down list, click Hardware Implementation to open the Configuration Parameters dialog box and verify these settings:

Set the Hardware board to Raspberry Pi. The Device Vendor is set to ARM Compatible.

In the Code Generation pane, under Target selection, Language is set to C++. Under Build process, Zip file name is set to smileDetect_with_ToOpenCV.zip. Under Toolchain settings, the Toolchain is specified as GNU GCC Raspberry Pi.

To deploy the code to your Raspberry Pi hardware:

1. From the generated zip file, copy these files to your Raspberry Pi hardware.

smiledetect.zip

smileDetect.mk

main.cpp

2. On the Raspberry Pi, go to the location where you saved the files. To generate an ELF file, enter this command:

make -f smileDetect.mk

3. Run the executable on Raspberry Pi. After successful execution, you see the output on Raspberry Pi with an emoji placed on the face image.

smileDetect.elf

See Also

ToOpenCV | FromOpenCV | Simulink.ImageType