Gamma Correction

This example shows how to model pixel-streaming gamma correction for hardware designs. The model compares the results from the Vision HDL Toolbox™ Gamma Corrector block with the results generated by the full-frame Gamma Correction block from Computer Vision Toolbox™.

This example model provides a hardware-compatible algorithm. You can implement this algorithm on a board using an AMD® Zynq® reference design. See Gamma Correction with Zynq-Based Hardware (SoC Blockset).

Structure of the Example

The Computer Vision Toolbox product models at a high level of abstraction. The blocks and objects perform full-frame processing, operating on one image frame at a time. However, FPGA or ASIC systems perform pixel-stream processing, operating on one image pixel at a time. This example simulates full-frame and pixel-streaming algorithms in the same model.

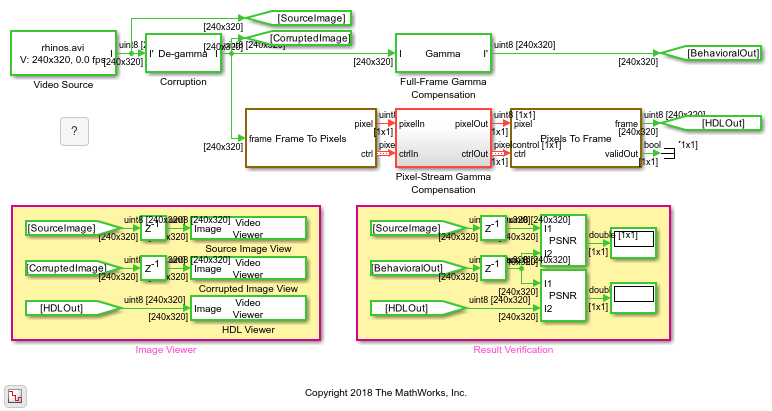

The figure shows the GammaCorrectionHDL.slx system.

The difference in the color of the lines feeding the Full-Frame Gamma Compensation block and the Pixel-Stream Gamma Compensation subsystem indicates a change in sample rate on the streaming branch of the model. This rate transition occurs because the pixel stream must be sent in the same amount of time as a full video frame, so the individual pixels are transmitted at a higher rate.

In this example, gamma correction is used to correct dark images. Darker images are generated by feeding the input video to the Corruption block. The Video Source block outputs a 240p grayscale video, and the Corruption block applies a de-gamma operation to make the source video perceptually darker. Then, the downstream Full-Frame Gamma Compensation block or the Pixel-Stream Gamma Compensation subsystem removes the de-gamma operation from the corrupted video to recover the source video.
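The corrupt-then-recover idea can be illustrated with a minimal Python sketch. It assumes a pure power law on 8-bit pixels with no linear segment, which may differ from how the Corruption and Gamma Corrector blocks are actually configured; the function names `degamma` and `gamma_correct` are hypothetical and not part of either toolbox.

```python
def degamma(pixel, gamma=2.2, peak=255):
    """Darken a pixel: normalize to [0, 1], raise to gamma, rescale and round."""
    return round(peak * (pixel / peak) ** gamma)


def gamma_correct(pixel, gamma=2.2, peak=255):
    """Brighten a pixel with the inverse exponent 1/gamma (assumed power law)."""
    return round(peak * (pixel / peak) ** (1 / gamma))


source = [0, 64, 128, 192, 255]                  # a few sample gray levels
dark = [degamma(p) for p in source]              # "corrupted" values
recovered = [gamma_correct(p) for p in dark]     # values after compensation

print("source   :", source)
print("dark     :", dark)
print("recovered:", recovered)
```

Mid-range values come out darker after `degamma`, while black and white are unchanged. Rounding to integer pixel values means the recovered values are not guaranteed to equal the source exactly.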

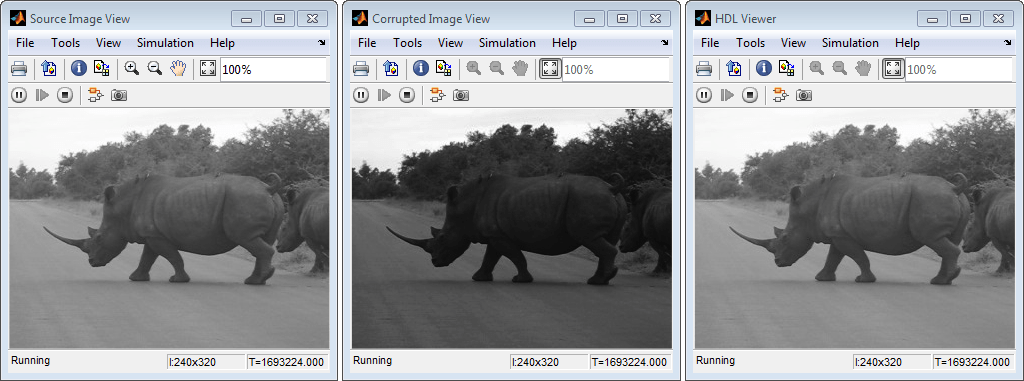

The diagram shows one frame of the source video, its corrupted version, and the recovered version, from left to right.

It is good practice to develop a behavioral system using blocks that process full image frames (the Full-Frame Gamma Compensation block in this example) before working on an FPGA-targeted design. Such a behavioral model helps verify the video processing design, and it can later serve as a reference for verifying the FPGA implementation of the algorithm. Specifically, the lower of the two PSNR (peak signal-to-noise ratio) blocks in the Result Verification section at the top level of the model compares the results from full-frame processing with those from pixel-stream processing.

Frame To Pixels: Generating a Pixel Stream

The Frame To Pixels block converts a full-frame image to a pixel stream. To simulate the horizontal and vertical blanking periods found in real-life hardware video systems, the active image is augmented with non-image data. For more information on the streaming pixel protocol, see Streaming Pixel Interface. The Frame To Pixels block is configured as follows.

The Number of components parameter is set to 1 for grayscale image input, the Number of pixels parameter is set to 1 for scalar streaming, and the Video format parameter is 240p to match that of the video source.

In this example, the Active Video region corresponds to the 240x320 matrix of the dark image from the upstream Corruption block. Six other parameters (Total pixels per line, Total video lines, Starting active line, Ending active line, Front porch, and Back porch) specify how much non-image data is added on the four sides of the Active Video region.
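To make the padding concrete, here is a rough Python sketch of serializing a small frame into a pixel stream with blanking. It is not the Frame To Pixels implementation. The `Ctrl` tuple mirrors the five control signals of the streaming pixel protocol (hStart, hEnd, vStart, vEnd, valid), while the function `frame_to_pixels` and its parameters `blank_before`, `blank_after`, `lines_before`, and `lines_after` are hypothetical stand-ins for the porch and line settings.

```python
from typing import NamedTuple


class Ctrl(NamedTuple):
    """Control signals that accompany every sample in the stream."""
    hStart: bool = False
    hEnd: bool = False
    vStart: bool = False
    vEnd: bool = False
    valid: bool = False


def frame_to_pixels(frame, blank_before=1, blank_after=1,
                    lines_before=1, lines_after=1):
    """Yield (pixel, Ctrl) samples, surrounding the active frame with blanking."""
    rows, cols = len(frame), len(frame[0])
    for line in range(-lines_before, rows + lines_after):
        for col in range(-blank_before, cols + blank_after):
            active = 0 <= line < rows and 0 <= col < cols
            if not active:
                yield 0, Ctrl()  # non-image data: valid stays False
                continue
            first_col, last_col = col == 0, col == cols - 1
            yield frame[line][col], Ctrl(
                hStart=first_col,
                hEnd=last_col,
                vStart=first_col and line == 0,
                vEnd=last_col and line == rows - 1,
                valid=True,
            )


frame = [[10, 20, 30],
         [40, 50, 60]]
stream = list(frame_to_pixels(frame))
active = [pixel for pixel, ctrl in stream if ctrl.valid]
print(len(stream), "samples in total,", len(active), "of them active")
```

With one blanking sample on each side, the 2-by-3 toy frame becomes a 4-by-5 grid of samples, and only the samples marked valid carry image data.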

The sample time of the Video Source block is determined by the product of Total pixels per line and Total video lines.
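The arithmetic behind this relationship is sketched below in Python. The numeric values are assumptions chosen to resemble a 240p format and should be checked against the Frame To Pixels block dialog; the unit pixel sample time is also an assumption for illustration.

```python
# Assumed 240p-like dimensions; verify against the block dialog before relying on them.
ACTIVE_PIXELS_PER_LINE = 320
ACTIVE_VIDEO_LINES = 240
TOTAL_PIXELS_PER_LINE = 402   # active pixels plus horizontal blanking (assumed)
TOTAL_VIDEO_LINES = 324       # active lines plus vertical blanking (assumed)

# One frame on the full-frame branch spans this many samples on the streaming branch.
total_samples_per_frame = TOTAL_PIXELS_PER_LINE * TOTAL_VIDEO_LINES
active_samples_per_frame = ACTIVE_PIXELS_PER_LINE * ACTIVE_VIDEO_LINES

pixel_sample_time = 1  # assumed: one time unit per streamed sample
frame_sample_time = total_samples_per_frame * pixel_sample_time

blanking_fraction = 1 - active_samples_per_frame / total_samples_per_frame

print("samples per frame  :", total_samples_per_frame)
print("frame sample time  :", frame_sample_time)
print("blanking fraction  :", round(blanking_fraction, 3))
```

Under these assumptions, the frame period is the product of the two totals multiplied by the pixel period, which is why the streaming branch runs at a much higher rate than the full-frame branch.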

Gamma Correction

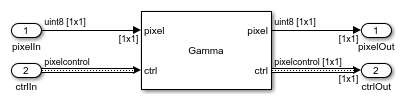

The Pixel-Stream Gamma Compensation subsystem contains only a Gamma Corrector block.

The Gamma Corrector block accepts the pixel stream, along with a bus containing five synchronization signals, from the Frame To Pixels block. It passes the same set of signals to the downstream Pixels To Frame block. Bundling these control signals with the pixel data and carrying them through each block is necessary for pixel-stream processing.
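The per-pixel behavior described above can be approximated in a few lines of Python. This sketch assumes a 256-entry lookup table built from a pure power law, and it ignores the latency and fixed-point details of the real Gamma Corrector block. The names `build_gamma_lut` and `gamma_corrector` are hypothetical, and the control bus is reduced to a plain dictionary.

```python
def build_gamma_lut(gamma=2.2, bits=8):
    """Precompute the corrected value for every possible input code."""
    peak = (1 << bits) - 1
    return [round(peak * (code / peak) ** (1 / gamma)) for code in range(peak + 1)]


def gamma_corrector(stream, lut):
    """Map each pixel through the table and forward its control signals unchanged."""
    for pixel, ctrl in stream:
        yield lut[pixel], ctrl


lut = build_gamma_lut()
stream_in = [
    (0, {"valid": False}),    # blanking sample
    (12, {"valid": True}),
    (56, {"valid": True}),
    (255, {"valid": True}),
]
stream_out = list(gamma_corrector(stream_in, lut))
print([pixel for pixel, _ in stream_out])
```

Because each output depends only on the current input pixel, a table lookup is enough, and the control signals can travel alongside the data without modification.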

Pixels To Frame: Converting Pixel Stream Back to Full Frame

As a companion to the Frame To Pixels block, which converts a full image frame to a pixel stream, the Pixels To Frame block converts the pixel stream back to a full frame by using the synchronization signals. Because the output of the Pixels To Frame block is a 2-D matrix containing a full image, the bus of five synchronization signals does not need to be carried any further.
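As an illustration of how the synchronization signals drive the reconstruction, the following Python sketch rebuilds a 2-D frame from a hand-written stream. It is a simplification rather than the Pixels To Frame implementation, and the helpers `ctrl` and `pixels_to_frame` are hypothetical.

```python
def ctrl(hStart=False, hEnd=False, vStart=False, vEnd=False, valid=False):
    """Bundle the five control signals for one sample."""
    return {"hStart": hStart, "hEnd": hEnd, "vStart": vStart,
            "vEnd": vEnd, "valid": valid}


def pixels_to_frame(stream):
    """Rebuild one frame, using the control signals to find line and frame edges."""
    frame, row, in_frame = [], [], False
    for pixel, c in stream:
        if not c["valid"]:
            continue                      # skip blanking samples
        if c["vStart"]:
            frame, in_frame = [], True    # first pixel of a new frame
        if not in_frame:
            continue                      # ignore pixels before the frame starts
        if c["hStart"]:
            row = []                      # first pixel of a new line
        row.append(pixel)
        if c["hEnd"]:
            frame.append(row)             # line complete
        if c["vEnd"]:
            return frame                  # frame complete
    return frame


stream = [
    (0, ctrl()),
    (1, ctrl(hStart=True, vStart=True, valid=True)),
    (2, ctrl(hEnd=True, valid=True)),
    (0, ctrl()),
    (3, ctrl(hStart=True, valid=True)),
    (4, ctrl(hEnd=True, vEnd=True, valid=True)),
    (0, ctrl()),
]
frame = pixels_to_frame(stream)
print(frame)
```

Once the frame is assembled, the result is an ordinary matrix, so the control signals have served their purpose and can be dropped.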

The Number of components and Video format parameters of both the Frame To Pixels and Pixels To Frame blocks are set to 1 and 240p, respectively, to match the format of the video source.

Image Viewer and Result Verification

When you run the simulation, three images are displayed (see the images in the "Structure of the Example" Section):

The source image given by the Video Source block

The dark image produced by the Corruption block

The HDL output generated by the Pixel-Stream Gamma Compensation subsystem

The four Unit Delay blocks at the top level of the model time-align the 2-D matrices for a fair comparison.

While you build the streaming portion of the design, the PSNR block continuously verifies the HDLOut results against the original full-frame design output, BehavioralOut. Throughout the simulation, this PSNR block should output inf, indicating that the image from the Full-Frame Gamma Compensation block matches the image generated by pixel-stream processing in the Pixel-Stream Gamma Compensation subsystem.

Exploring the Example

The example allows you to experiment with different gamma values to examine their effect on the gamma and de-gamma operation. Specifically, a workspace variable gammaValue with an initial value 2.2 is created upon opening the model. You can modify its value in the MATLAB® workspace.

gammaValue=4

The updated gammaValue will be propagated to the Gamma parameter of the Corruption block, the Full-Frame Gamma Compensation block, and the Gamma Corrector block inside Pixel-Stream Gamma Compensation subsystem. Closing the model clears gammaValue from your workspace.

Although a gamma operation is conceptually the inverse of de-gamma, applying gamma followed by de-gamma (or de-gamma followed by gamma) does not necessarily restore the original image perfectly. Some distortion is expected. To measure this effect, another PSNR block compares the SourceImage and BehavioralOut images. The higher the PSNR, the less distortion has been introduced. Ideally, if the recovered output and the source image are identical, the PSNR block outputs inf. In this example, this happens only when gammaValue equals 1 (that is, when both the gamma and de-gamma blocks pass the source image through unchanged).
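The effect of gammaValue on round-trip distortion can be explored with a small Python sketch. It assumes a pure power law, rounding to 8-bit integers, and a PSNR computed with a peak value of 255. The helper names `power_law`, `psnr`, and `round_trip_psnr` are hypothetical, and the numbers it prints are not expected to match the PSNR blocks in the model exactly.

```python
import math


def power_law(pixel, exponent, peak=255):
    """Apply a power law to a normalized pixel and round back to an integer."""
    return round(peak * (pixel / peak) ** exponent)


def psnr(reference, test, peak=255):
    """Peak signal-to-noise ratio in dB; infinite when the inputs are identical."""
    mse = sum((a - b) ** 2 for a, b in zip(reference, test)) / len(reference)
    return math.inf if mse == 0 else 10 * math.log10(peak ** 2 / mse)


def round_trip_psnr(gamma):
    """Darken a 0..255 ramp with de-gamma, recover it with gamma, and score it."""
    source = list(range(256))
    dark = [power_law(p, gamma) for p in source]
    recovered = [power_law(p, 1 / gamma) for p in dark]
    return psnr(source, recovered)


for gamma in (1, 2.2, 4):
    print("gamma =", gamma, "-> PSNR =", round_trip_psnr(gamma))
```

In this sketch the loss comes from rounding: several dark input levels collapse to the same integer after de-gamma, so the compensation step cannot tell them apart. With gamma equal to 1 nothing is rounded away and the PSNR is infinite.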

You can also use a gamma operation to corrupt a source image by making it brighter, followed by a de-gamma correction to recover the image.

Generate HDL Code and Verify Its Behavior

To check and generate the HDL code referenced in this example, you must have an HDL Coder™ license.

To generate the HDL code, use the following command:

makehdl('GammaCorrectionHDL/Pixel-Stream Gamma Compensation')

To give the synthesis tool the option of implementing the lookup table inside the Gamma Corrector block in RAM, the LUTRegisterResetType property is set to none. To access this property, right-click the Gamma Corrector block inside the Pixel-Stream Gamma Compensation subsystem and, in the HDL Coder app section, click HDL Block Properties.

To generate the test bench, use the following command:

makehdltb('GammaCorrectionHDL/Pixel-Stream Gamma Compensation')

See Also

Frame To Pixels | Pixels To Frame | Gamma Corrector