Code Generation for Semantic Segmentation Network That Uses U-Net

This example shows code generation for an image segmentation application that uses deep learning. It uses the codegen command to generate a MEX function that performs prediction on a DAGNetwork object for U-Net, a deep learning network for image segmentation.

For a similar example covering segmentation of images by using U-Net without the codegen command, see Semantic Segmentation of Multispectral Images Using Deep Learning (Image Processing Toolbox).

Third-Party Prerequisites

Required

This example generates CUDA MEX and has the following third-party requirements.

CUDA® enabled NVIDIA® GPU and compatible driver.

Optional

For non-MEX builds such as static libraries, dynamic libraries, or executables, this example has the following additional requirements.

NVIDIA CUDA toolkit.

NVIDIA cuDNN library.

Environment variables for the compilers and libraries. For more information, see Third-Party Hardware and Setting Up the Prerequisite Products.

Verify GPU Environment

Use the coder.checkGpuInstall function to verify that the compilers and libraries necessary for running this example are set up correctly.

envCfg = coder.gpuEnvConfig('host');
envCfg.DeepLibTarget = 'cudnn';
envCfg.DeepCodegen = 1;
envCfg.Quiet = 1;
coder.checkGpuInstall(envCfg);

Segmentation Network

U-Net [1] is a type of convolutional neural network (CNN) designed for semantic image segmentation. In U-Net, the initial series of convolutional layers is interspersed with max pooling layers, successively decreasing the resolution of the input image. These layers are followed by a series of convolutional layers interspersed with upsampling operators, successively increasing the resolution of the input image. Combining these two paths forms a U-shaped graph. The network was originally trained for, and used to perform prediction in, biomedical image segmentation applications. This example demonstrates the ability of the network to track changes in forest cover over time. Environmental agencies track deforestation to assess and qualify the environmental and ecological health of a region.

Deep-learning-based semantic segmentation can yield a precise measurement of vegetation cover from high-resolution aerial photographs. One challenge is differentiating classes that have similar visual characteristics, such as trying to classify a green pixel as grass, shrubbery, or tree. To increase classification accuracy, some data sets contain multispectral images that provide additional information about each pixel. For example, the Hamlin Beach State Park data set supplements the color images with near-infrared channels that provide a clearer separation of the classes.

This example uses the Hamlin Beach State Park data set [2] along with a pretrained U-Net network to classify each pixel.

The U-Net used is trained to segment pixels belonging to 18 classes, which include:

0. Other Class/Image Border
1. Road Markings
2. Tree
3. Building
4. Vehicle (Car, Truck, or Bus)
5. Person
6. Lifeguard Chair
7. Picnic Table
8. Black Wood Panel
9. White Wood Panel
10. Orange Landing Pad
11. Water Buoy
12. Rocks
13. Other Vegetation
14. Grass
15. Sand
16. Water (Lake)
17. Water (Pond)
18. Asphalt (Parking Lot/Walkway)

The segmentImageUnet Entry-Point Function

The segmentImageUnet.m entry-point function performs patchwise semantic segmentation on the input image by using the multispectralUnet network found in the multispectralUnet.mat file. The function loads the network object from the multispectralUnet.mat file into a persistent variable mynet and reuses the persistent variable on subsequent prediction calls.

type('segmentImageUnet.m')

function out = segmentImageUnet(im,patchSize,trainedNet)
% OUT = segmentImageUnet(IM,patchSize,trainedNet) returns a semantically
% segmented image, segmented using the multi-spectral Unet specified in
% trainedNet. The segmentation is performed over each patch of size
% patchSize.
%
% Copyright 2019-2022 The MathWorks, Inc.

%#codegen

persistent mynet;

if isempty(mynet)
    mynet = coder.loadDeepLearningNetwork(trainedNet);
end

[height, width, nChannel] = size(im);
patch = coder.nullcopy(zeros([patchSize, nChannel-1]));

% Pad image to have dimensions as multiples of patchSize
padSize = zeros(1,2);
padSize(1) = patchSize(1) - mod(height, patchSize(1));
padSize(2) = patchSize(2) - mod(width, patchSize(2));

im_pad = padarray(im, padSize, 0, 'post');
[height_pad, width_pad, ~] = size(im_pad);

out = zeros([size(im_pad,1), size(im_pad,2)], 'uint8');

for i = 1:patchSize(1):height_pad
    for j = 1:patchSize(2):width_pad
        % Copy every channel except the last one (the mask) into the patch
        for p = 1:nChannel-1
            patch(:,:,p) = squeeze( im_pad( i:i+patchSize(1)-1,...
                                            j:j+patchSize(2)-1,...
                                            p));
        end

        % Pass in input
        segmentedLabels = activations(mynet, patch, 'Segmentation-Layer');

        % Take the index of the maximum score along the third (class) dimension
        [~,L] = max(segmentedLabels,[],3);
        patch_seg = uint8(L);

        % Populate section of output
        out(i:i+patchSize(1)-1, j:j+patchSize(2)-1) = patch_seg;
    end
end

% Remove the padding
out = out(1:height, 1:width);
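The pad, patch, classify, and crop flow of the entry-point function can be tried on a toy array without a GPU or the pretrained network. The following is a minimal sketch, not the shipped function: the image size, the 4-by-4 patch size, and the scoreFcn stand-in that replaces the call to activations are all hypothetical, and the zero padding is written out by hand instead of calling padarray.

```matlab
% Toy stand-ins (all hypothetical): a small 2-channel image, a 4x4 patch,
% and a scorer that returns one score plane per class instead of a network.
im        = rand(10, 7, 2);
patchSize = [4 4];
scoreFcn  = @(p) cat(3, p(:,:,1), p(:,:,2), 1 - p(:,:,1));  % H x W x 3 scores

[height, width, nCh] = size(im);
padSize = patchSize - mod([height width], patchSize);

% Zero-pad at the bottom and right (same effect as padarray(...,'post')).
imPad = zeros(height + padSize(1), width + padSize(2), nCh);
imPad(1:height, 1:width, :) = im;

out = zeros(size(imPad,1), size(imPad,2), 'uint8');
for i = 1:patchSize(1):size(imPad,1)
    for j = 1:patchSize(2):size(imPad,2)
        rows = i:i+patchSize(1)-1;
        cols = j:j+patchSize(2)-1;
        scores = scoreFcn(imPad(rows, cols, :));   % per-class scores for the patch
        [~, L] = max(scores, [], 3);               % index of the best class per pixel
        out(rows, cols) = uint8(L);
    end
end

out = out(1:height, 1:width);                      % crop the padding away
```

Because the stand-in scorer works pixel by pixel, the stitched result matches what a single pass over the whole image would give. A real network has a receptive field, so patch borders can differ from a full-image prediction.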

Get Pretrained U-Net Network

This example uses the multispectralUnet MAT-file containing the pretrained U-Net network. This file is approximately 117 MB in size. Download the file from the MathWorks website.

trainedUnetFile = matlab.internal.examples.downloadSupportFile('vision/data','multispectralUnet.mat');

U-Net is a DAG network that contains 58 layers including convolution, max pooling, depth concatenation, and the pixel classification output layers.

load(trainedUnetFile);
disp(net)

DAGNetwork with properties:

Layers: [58×1 nnet.cnn.layer.Layer]

Connections: [61×2 table]

InputNames: {'ImageInputLayer'}

OutputNames: {'Segmentation-Layer'}

To view the network architecture, use the analyzeNetwork (Deep Learning Toolbox) function.

analyzeNetwork(net);

Prepare Data

This example uses the high-resolution multispectral data from [2]. The image set was captured using a drone over the Hamlin Beach State Park, NY. The data contains labeled training, validation, and test sets, with 18 object class labels. The size of the data file is ~3.0 GB.

Download the MAT-file version of the data set using the downloadHamlinBeachMSIData helper function. This function is attached to the example as a supporting file.

if ~exist(fullfile(pwd,'data'),'dir')
    url = 'https://home.cis.rit.edu/~cnspci/other/data/rit18_data.mat';
    downloadHamlinBeachMSIData(url,pwd+"/data/");
end

Load and examine the data in MATLAB.

load(fullfile(pwd,'data','rit18_data','rit18_data.mat'));

% Examine data
whos test_data

  Name           Size                 Bytes  Class     Attributes

  test_data      7x12446x7654    1333663576  uint16

The image has seven channels. The RGB color channels are the third, second, and first image channels. The next three channels correspond to the near-infrared bands and highlight different components of the image based on their heat signatures. Channel 7 is a mask that indicates the valid segmentation region.

The multispectral image data is arranged as numChannels-by-width-by-height arrays. In MATLAB, multichannel images are arranged as width-by-height-by-numChannels arrays. To reshape the data so that the channels are in the third dimension, use the helper function, switchChannelsToThirdPlane.

test_data = switchChannelsToThirdPlane(test_data);

% Confirm data has the correct structure (channels last).
whos test_data

  Name           Size                 Bytes  Class     Attributes

  test_data      12446x7654x7    1333663576  uint16
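The body of switchChannelsToThirdPlane is not listed here. As a rough sketch, and assuming the helper does nothing more than move the first dimension to the end, the same rearrangement can be written with permute. The toy array below is made up for illustration.

```matlab
% Toy channels-first array: 2 channels, 3 rows, 4 columns.
chFirst = reshape(1:24, [2 3 4]);

% Assumption: the helper is equivalent to moving dimension 1 to the end.
chLast = permute(chFirst, [2 3 1]);

disp(size(chLast))                       % 3 4 2

% Each channel plane matches the corresponding slice of the original.
isSame = isequal(chLast(:,:,1), squeeze(chFirst(1,:,:)));
```

permute only reorders dimensions, so the element values and the total number of bytes are unchanged, which agrees with the two whos listings above.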

Run MEX Code Generation

To generate CUDA code for the segmentImageUnet.m entry-point function, create a GPU code configuration object for a MEX target and set the target language to C++. Use the coder.DeepLearningConfig function to create a cuDNN deep learning configuration object and assign it to the DeepLearningConfig property of the GPU code configuration object. Run the codegen command, specifying an input size of 12446-by-7654-by-7 and a patch size of 1024-by-1024. The input size corresponds to the entire test_data array. Smaller patch sizes speed up inference. To see how the patches are calculated, see the segmentImageUnet entry-point function.

cfg = coder.gpuConfig('mex');
cfg.ConstantInputs = 'Remove';
cfg.TargetLang = 'C++';
cfg.DeepLearningConfig = coder.DeepLearningConfig('cudnn');
inputArgs = {ones(size(test_data),'uint16'),...
    coder.Constant([1024 1024]),coder.Constant(trainedUnetFile)};

codegen -config cfg segmentImageUnet -args inputArgs -report

Code generation successful: View report

Run Generated MEX to Predict Results for test_data

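The segmentImageUnet function takes the data to test (test_data), a vector containing the dimensions of the patch size to use, and the name of the network file. Because the code configuration sets ConstantInputs to 'Remove', the generated MEX function takes only test_data; the patch size and the network file name are compiled in as constants. The function takes patches of the image, predicts the pixels in each patch, and then combines all the patches. Because the test data is large (12446-by-7654-by-7), it is easier to process the image in patches.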

segmentedImage = segmentImageUnet_mex(test_data);
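To get a feel for how much work this call does, the padding arithmetic from the entry-point function can be applied to the actual image and patch sizes. This is only a back-of-the-envelope sketch that reuses the formula from segmentImageUnet; the variable names are chosen here for illustration.

```matlab
% Padding arithmetic from segmentImageUnet, applied to the test image size.
imageSize = [12446 7654];   % height and width of test_data
patchSize = [1024 1024];    % patch size passed to codegen

padSize    = patchSize - mod(imageSize, patchSize);  % rows/columns of zeros appended
paddedSize = imageSize + padSize;                    % size the loops iterate over
numPatches = paddedSize ./ patchSize;                % patches along each dimension

fprintf('padSize    = [%d %d]\n', padSize);
fprintf('paddedSize = [%d %d]\n', paddedSize);
fprintf('patches    = %d x %d = %d\n', numPatches, prod(numPatches));

% Edge case: a dimension that is already a multiple of the patch size still
% receives one full extra patch of padding with this formula.
extraPad = 1024 - mod(2048, 1024);                   % 1024, not 0
```

For the test image this gives a padded size of 13312-by-8192, that is, 13-by-8 = 104 patches of 1024-by-1024 pixels.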

To extract only the valid portion of the segmentation, multiply the segmented image by the mask channel of the test data.

segmentedImage = uint8(test_data(:,:,7)~=0) .* segmentedImage;
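The effect of the mask multiplication is easiest to see on a small example. The label map and mask values below are invented for illustration; only the masking expression itself comes from the line above.

```matlab
labels = uint8([ 2  2 14;
                14  3  3;
                 1 14  2]);         % toy label map
maskCh = uint16([ 0 65535 65535;
                  0 65535 65535;
                  0     0 65535]);  % toy mask channel (0 = invalid)

valid  = uint8(maskCh ~= 0);        % 1 inside the valid region, 0 outside
masked = valid .* labels;           % invalid pixels become label 0

disp(masked)
```

Pixels outside the valid region end up with label 0, which the class list reserves for "Other Class/Image Border".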

Because the output of the semantic segmentation is noisy, remove the noise and stray pixels by using the medfilt2 function.

segmentedImage = medfilt2(segmentedImage,[5,5]);

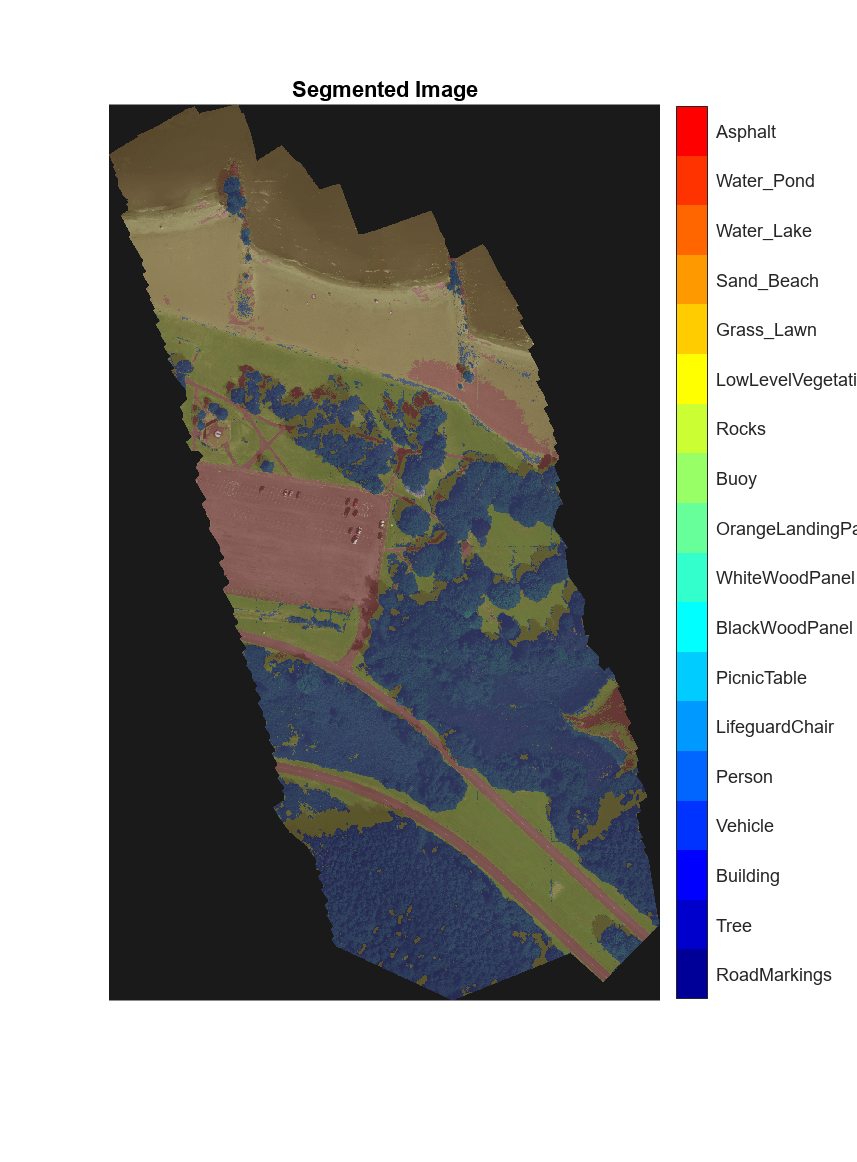

Display U-Net Segmented test_data

The following line of code creates a vector of the class names.

classNames = [ "RoadMarkings","Tree","Building","Vehicle","Person", ...
               "LifeguardChair","PicnicTable","BlackWoodPanel",...
               "WhiteWoodPanel","OrangeLandingPad","Buoy","Rocks",...
               "LowLevelVegetation","Grass_Lawn","Sand_Beach",...
               "Water_Lake","Water_Pond","Asphalt"];

Overlay the labels on the segmented RGB test image and add a color bar to the segmentation image.

cmap = jet(numel(classNames));
B = labeloverlay(imadjust(test_data(:,:,[3,2,1]),[0 0.6],[0.1 0.9],0.55),...
    segmentedImage,'Transparency',0.8,'Colormap',cmap);
figure
imshow(B)

N = numel(classNames);
ticks = 1/(N*2):1/N:1;
colorbar('TickLabels',cellstr(classNames),'Ticks',ticks,'TickLength',0,...
    'TickLabelInterpreter','none');
colormap(cmap)
title('Segmented Image');
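A label map like segmentedImage can also be turned into the kind of vegetation cover measurement mentioned earlier. The sketch below is one possible way to do that, not a step of this example: it uses a made-up toy label map, and it assumes that class IDs 2, 13, and 14 (Tree, Other Vegetation, and Grass in the class list) are the ones to count as vegetation.

```matlab
% Toy label map; 0 marks pixels outside the valid region.
seg = uint8([ 0  2  2 14;
              0 13 16 16;
              3  3 14  2]);

vegetationIds = uint8([2 13 14]);            % Tree, Other Vegetation, Grass
validPixels   = seg ~= 0;                    % ignore masked-out pixels
vegPixels     = ismember(seg, vegetationIds);

coverPct = 100 * nnz(vegPixels) / nnz(validPixels);
fprintf('Vegetation cover: %.1f%%\n', coverPct);
```

In the toy map, 6 of the 10 valid pixels carry a vegetation label, so the reported cover is 60.0%. The same three lines applied to the filtered segmentedImage would give the figure for the test image.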

References

[1] Ronneberger, Olaf, Philipp Fischer, and Thomas Brox. "U-Net: Convolutional Networks for Biomedical Image Segmentation." arXiv preprint arXiv:1505.04597, 2015.

[2] Kemker, Ronald, Carl Salvaggio, and Christopher Kanan. "High-Resolution Multispectral Dataset for Semantic Segmentation." CoRR, abs/1703.01918, 2017.

[3] Kemker, Ronald, Carl Salvaggio, and Christopher Kanan. "Algorithms for Semantic Segmentation of Multispectral Remote Sensing Imagery Using Deep Learning." ISPRS Journal of Photogrammetry and Remote Sensing, Deep Learning RS Data, 145 (November 1, 2018): 60-77. https://doi.org/10.1016/j.isprsjprs.2018.04.014.

See Also

Topics

- Semantic Segmentation of Multispectral Images Using Deep Learning (Image Processing Toolbox)

- Semantic Segmentation Using Deep Learning (Computer Vision Toolbox)

- Semantic Segmentation on NVIDIA DRIVE

- Get Started with Semantic Segmentation Using Deep Learning (Computer Vision Toolbox)