rlIsDoneFunction

Is-done function approximator object for neural network-based environment

Since R2022a

Description

When creating a neural network-based environment using rlNeuralNetworkEnvironment, you can specify the is-done function approximator using an rlIsDoneFunction object. Do so when you do not know a ground-truth termination signal for your environment.

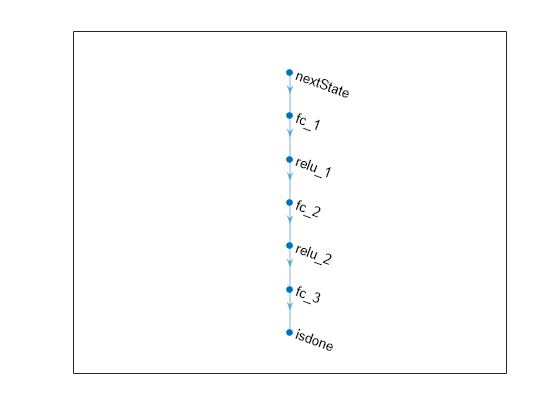

The is-done function approximator object uses a deep neural network as its internal approximation model to predict the termination signal for the environment, given one of the following input combinations:

Observations, actions, and next observations

Observations and actions

Actions and next observations

Next observations

Creation

Description

isdFcnAppx = rlIsDoneFunction(net,observationInfo,actionInfo,Name=Value) creates the is-done function approximator object isdFcnAppx using the deep neural network net and sets the ObservationInfo and ActionInfo properties.

When creating an is-done function approximator, you must specify the names of the deep neural network inputs using one of the following combinations of name-value arguments:

ObservationInputNames, ActionInputNames, and NextObservationInputNames

ObservationInputNames and ActionInputNames

ActionInputNames and NextObservationInputNames

NextObservationInputNames

You can also specify the UseDeterministicPredict and UseDevice properties using optional name-value arguments. For example, to use a GPU for prediction, specify UseDevice="gpu".
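
The creation syntax above can be illustrated with a minimal sketch that uses only the next observation as the network input. The observation and action dimensions, the layer sizes, the two-unit softmax output, and the layer name "nextState" are assumptions chosen for illustration; they are not requirements stated on this page.

```matlab
% Hypothetical specifications: a 4-element observation and a scalar action.
obsInfo = rlNumericSpec([4 1]);
actInfo = rlNumericSpec([1 1]);

% Network that takes only the next observation as input.
% Assumption: the output is a two-unit softmax layer giving the
% probabilities of "not done" and "done".
layers = [
    featureInputLayer(obsInfo.Dimension(1),Name="nextState")
    fullyConnectedLayer(64)
    reluLayer
    fullyConnectedLayer(2)
    softmaxLayer(Name="isdone")
    ];
net = dlnetwork(layers);

% Create the approximator, naming the input layer that receives
% the next observation.
isdFcnAppx = rlIsDoneFunction(net,obsInfo,actInfo, ...
    NextObservationInputNames="nextState");
```

For the other input combinations, the network would need one input layer per channel, each named through the corresponding ObservationInputNames or ActionInputNames argument. Optional properties such as UseDevice="gpu" can be appended as further name-value arguments in the same call.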

Input Arguments

Name-Value Arguments

Properties

Object Functions

rlNeuralNetworkEnvironment | Environment model with deep neural network transition models

Examples

Version History

Introduced in R2022a