risk.validation.areaUnderCurveTest

Syntax

Description

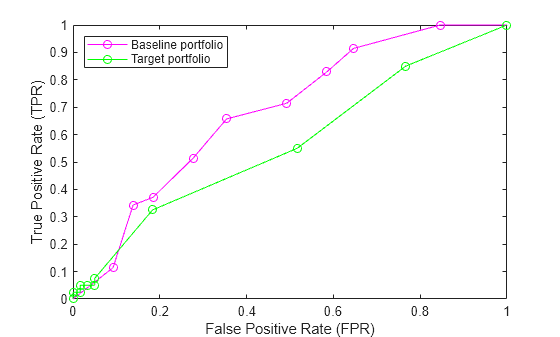

hAUCTest = risk.validation.areaUnderCurveTest(BaselineScore,BaselineBinaryResponse,TargetScore,TargetBinaryResponse) returns 1 if the test rejects the null hypothesis at the 95% confidence level, or 0 otherwise.

hAUCTest = risk.validation.areaUnderCurveTest(BaselineScore,BaselineBinaryResponse,TargetScore,TargetBinaryResponse,Name=Value) specifies options using one or more name-value arguments.

Examples
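
The following is a minimal sketch of how the first syntax might be called, not an official example. It assumes that the scores are numeric column vectors and that the binary responses are 0/1 vectors of matching length. The synthetic data, sample sizes, and variable names are illustrative assumptions and do not come from this page.

```matlab
% Illustrative synthetic data (assumption: numeric scores, 0/1 responses).
rng(0);                                   % fix the seed so the sketch is repeatable
nBase = 500;                              % hypothetical baseline sample size
nTarget = 400;                            % hypothetical target sample size

% Hypothetical baseline sample: binary outcomes, with scores shifted by outcome.
baselineResponse = double(rand(nBase,1) < 0.2);
baselineScore = randn(nBase,1) + 1.0*baselineResponse;

% Hypothetical target sample with weaker separation between the two classes.
targetResponse = double(rand(nTarget,1) < 0.2);
targetScore = randn(nTarget,1) + 0.5*targetResponse;

% First syntax from this page: the output is 1 if the test rejects the
% null hypothesis at the 95% confidence level, or 0 otherwise.
hAUCTest = risk.validation.areaUnderCurveTest(baselineScore,baselineResponse,targetScore,targetResponse)
```

The result depends on the data supplied, so no expected output is shown here.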

Input Arguments

Name-Value Arguments

Output Arguments

More About

References

[1] European Central Bank, “Instructions for reporting the validation results of internal models.” February, 2019. https://www.bankingsupervision.europa.eu/activities/internal_models/shared/pdf/instructions_validation_reporting_credit_risk.en.pdf.

Version History

Introduced in R2026a