Blob Analysis

This example shows how to implement a single-pass 8-way connected-component labeling algorithm and perform blob analysis.

Blob analysis is a computer vision framework for detection and analysis of connected pixels called blobs. This algorithm can be challenging to implement in a streaming design because it usually involves two or more passes through the image. A first pass performs initial labeling, and additional passes connect any blobs not labeled correctly on the first pass. Streaming designs use a single-pass algorithm to apply and merge labels in hardware and store blob statistics in a RAM.

This example has an output stage in software that reads the RAM results and overlays them onto the input video. The example labels blobs and assigns each blob a unique identifier. Each blob is drawn in a different color in the output image. The example also computes the centroid, bounding box, and area of up to 1024 labeled blobs. The model supports video up to 1080p at 60 frames per second.

Overview

The example model supports hardware-software co-design. The BlobDetector subsystem is the hardware part of the design, and supports HDL code generation. In a single pass, this subsystem labels each pixel in the incoming pixel stream, merges connected areas, and computes the centroid, area, and bounding box for each blob. The output of the subsystem is a stream of labeled pixels. The subsystem stores the blob statistics in a RAM. When the blob analysis is complete, the subsystem asserts the data_ready output port to indicate that the blob statistics are ready to be read.

Logic external to the subsystem reads the statistics one at a time from the BlobDetector RAM by using the blobIndex input port as an address. This external logic represents the software part of the design, and does not support HDL code generation. This part of the design reads the centroid, area, and bounding box of each blob, compiles them into vectors for use by the Overlay subsystem, and displays the blob statistics.

The BlobDetector subsystem provides these configuration ports, which can be mapped to AXI registers for real-time software control:

GradThresh: Threshold used to create the intensity image.

AreaThresh: Minimum number of pixels that define a blob. The default setting of 1 means that all blobs are processed.

CloseOp: Whether morphological closing is performed prior to labeling and analysis. Closing can be useful after thresholding to fill any holes that thresholding introduces. By default, this signal is high and enables closing. If you disable closing, the darker coin in the example input image is detected as two blobs rather than a single connected component.

VideoMode: Pixel stream returned by the subsystem. You can select the input video (0), labeled pixels (1), or intensity video after thresholding (2). You can use these different video views for debugging.
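As a rough software illustration of what the CloseOp setting enables, the sketch below applies morphological closing (dilation followed by erosion) to a small binary image. It is a plain Python reference written for this explanation, not the model's implementation: the 3x3 structuring element, the zero padding, and the function names `dilate`, `erode`, and `close_binary` are all assumptions.

```python
def _neighborhood(img, y, x):
    """Return the in-bounds pixels of the 3x3 window centered on (y, x)."""
    h, w = len(img), len(img[0])
    return [img[ny][nx]
            for ny in range(max(0, y - 1), min(h, y + 2))
            for nx in range(max(0, x - 1), min(w, x + 2))]


def dilate(img):
    """Binary dilation: a pixel is set if any pixel in its window is set."""
    return [[int(any(_neighborhood(img, y, x))) for x in range(len(img[0]))]
            for y in range(len(img))]


def erode(img):
    """Binary erosion: a pixel is set only if every in-bounds window pixel is set."""
    return [[int(all(_neighborhood(img, y, x))) for x in range(len(img[0]))]
            for y in range(len(img))]


def close_binary(img):
    """Closing = dilation then erosion (3x3 element is an assumption).

    The image is padded with one background pixel on each side so that the
    dilated shape is not clipped at the border, then cropped back.
    """
    w = len(img[0])
    padded = ([[0] * (w + 2)]
              + [[0] + list(row) + [0] for row in img]
              + [[0] * (w + 2)])
    closed = erode(dilate(padded))
    return [row[1:-1] for row in closed[1:-1]]


# A blob with a one-pixel hole, as thresholding might produce.
ring = [[1, 1, 1],
        [1, 0, 1],
        [1, 1, 1]]
filled = close_binary(ring)  # the hole at the center is filled
```

Filling such holes before labeling helps a single object stay one connected component instead of splitting into several blobs.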

The BlobDetector subsystem returns the output video with associated control signals, and the bounding box, area, and centroid for each requested blobIndex. The subsystem also has these output signals to help with debugging:

index_o: Index of the blob currently returning statistics.

num_o: Number of blobs that meet the area threshold.

totalNum_o: Total number of blobs detected in the current frame. By comparing num_o and totalNum_o, you can fine-tune the input area threshold.

data_ready_o: Indicates when the blob statistics for the current frame are ready to be read from the RAM. In a hardware-software co-design implementation, you can map this signal to an AXI register, and the software can poll the register value to determine when to start reading the statistics.
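The software side of this handshake can be sketched as a polling loop followed by one read per blob. The example below is only an illustration under stated assumptions: `MockBlobDetector`, `BlobStats`, and `read_frame_statistics` are hypothetical names, the mock stands in for whatever register interface a real deployment exposes, and zero-based blob indexing is assumed rather than taken from the model.

```python
import time
from dataclasses import dataclass


@dataclass
class BlobStats:
    centroid: tuple  # (x, y)
    area: int
    bbox: tuple      # (x, y, width, height)


class MockBlobDetector:
    """Hypothetical stand-in for the hardware registers (not a real API)."""

    def __init__(self, blobs, ready_after_polls=3):
        self._blobs = blobs
        self._polls_left = ready_after_polls

    def data_ready(self):
        # Mimics data_ready_o: low for a few polls, then high.
        if self._polls_left > 0:
            self._polls_left -= 1
            return False
        return True

    def num(self):
        # Mimics num_o: number of blobs available to read.
        return len(self._blobs)

    def read(self, blob_index):
        # Mimics driving blobIndex and reading back one set of statistics.
        return self._blobs[blob_index]


def read_frame_statistics(dev, poll_interval_s=0.0, max_polls=1000):
    """Wait for data_ready, then read each blob and compile the vectors."""
    for _ in range(max_polls):
        if dev.data_ready():
            break
        time.sleep(poll_interval_s)
    else:
        raise TimeoutError("data_ready was never asserted")

    centroids, areas, bboxes = [], [], []
    for blob_index in range(dev.num()):
        stats = dev.read(blob_index)
        centroids.append(stats.centroid)
        areas.append(stats.area)
        bboxes.append(stats.bbox)
    return centroids, areas, bboxes
```

The three returned lists correspond to the vectors that the software part of the design compiles for the overlay stage.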

Blob Detector

The BlobDetector subsystem performs connected component labeling and analysis in a single pass over the frame. At the top level, the subsystem contains the CCA_Algorithm subsystem and a cache for the results. The CCA_Algorithm subsystem performs labeling, the calculation of blob statistics, and blob merging.

Labeling Algorithm

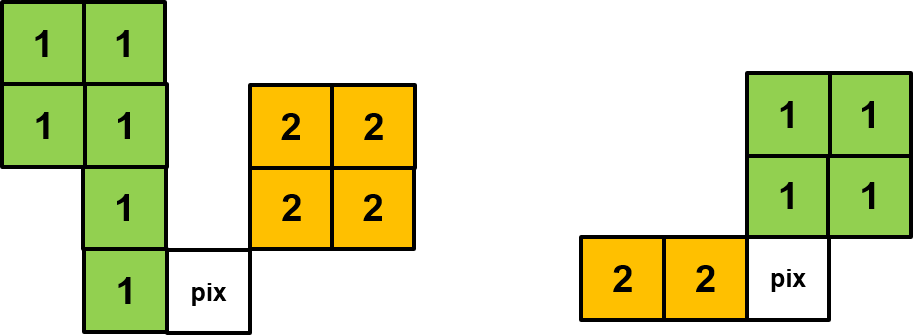

The labelandmerge MATLAB Function block performs 8-way pixel labeling relative to the current pixel. The possible labels are: previous label, top label, top-left label, and top-right label. The function assigns the current pixel an existing label in order of precedence. If no labels exist, and the pixel is a foreground pixel, then the function assigns a new label to the current pixel by incrementing the label counter. The function forms a labeling window as shown in the diagram by streaming in the current pixel, storing the previous label in a register, and storing the previous line of pixel labels in a RAM. The labels identified by labelandmerge are streamed out of the block as they are identified. For details of the merge operation, see the Merge Logic section.
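The labeling window can be illustrated with a short software reference. This Python sketch is not the code inside the labelandmerge block. It assumes the precedence order follows the order in which the labels are listed above (previous, top, top-left, top-right), uses 0 for background, and leaves merging out, so connected regions can still carry more than one label. The function name `label_single_pass` is hypothetical.

```python
def label_single_pass(img):
    """Label foreground pixels in raster order using an 8-way window.

    img is a list of rows of 0/1 values. Returns (label_image, label_count).
    """
    height, width = len(img), len(img[0])
    prev_line = [0] * width  # labels of the previous line (the line RAM)
    next_label = 0           # label counter
    labels = []

    for y in range(height):
        cur_line = [0] * width
        prev_label = 0       # register holding the label of the pixel to the left
        for x in range(width):
            label = 0
            if img[y][x]:
                top = prev_line[x]
                top_left = prev_line[x - 1] if x > 0 else 0
                top_right = prev_line[x + 1] if x + 1 < width else 0
                # Take the first existing label in the assumed precedence order.
                candidates = (prev_label, top, top_left, top_right)
                label = next((c for c in candidates if c), 0)
                if label == 0:
                    # No labeled neighbor: start a new blob.
                    next_label += 1
                    label = next_label
            cur_line[x] = label
            prev_label = label
        labels.append(cur_line)
        prev_line = cur_line
    return labels, next_label


# A U-shaped blob: the two arms get different labels until they meet.
u_shape = [[1, 0, 1],
           [1, 0, 1],
           [1, 1, 1]]
label_image, count = label_single_pass(u_shape)
# label_image == [[1, 0, 2], [1, 0, 2], [1, 1, 1]], count == 2
```

The bottom-right pixel of the U shape takes label 1 from its left neighbor even though the pixel above it carries label 2, which is the kind of situation that the merge step has to resolve.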

Blob Statistics Calculation

The cca subsystem computes the bounding box, area, and centroid of each blob. This operation uses a set of accumulators and RAMs.

The area_accum subsystem tracks the area of each blob. For each detected label, the subsystem increments the value stored at the RAM address that corresponds to that label.

The x_accum and y_accum subsystems accumulate the xpos and ypos values from the input ports. The xpos and ypos values are the coordinates of the pixel in the input frame. Using the area values and the accumulated coordinates, the centroid is calculated from xaccum/area and yaccum/area. This calculation uses a Math Reciprocal block for 1/area and then multiplies that reciprocal by xaccum and yaccum to find the centroid coordinates.

The bbox_store subsystem calculates the bounding box. The subsystem calculates the top-left coordinates, width, and height of the box by comparing the coordinates for each label against the previously cached coordinates.
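The accumulator arithmetic can be sketched in software as follows. This Python example is an illustrative reference only: it uses floating point instead of the fixed-point types of the hardware, assumes zero-based pixel coordinates, and the dictionary field names and the functions `accumulate_statistics` and `summarize` are hypothetical.

```python
def accumulate_statistics(labels):
    """Accumulate area, coordinate sums, and extents for each nonzero label."""
    stats = {}
    for ypos, row in enumerate(labels):
        for xpos, label in enumerate(row):
            if label == 0:
                continue  # background pixel
            s = stats.setdefault(label, {
                "area": 0, "x_accum": 0, "y_accum": 0,
                "x_min": xpos, "x_max": xpos,
                "y_min": ypos, "y_max": ypos,
            })
            s["area"] += 1
            s["x_accum"] += xpos
            s["y_accum"] += ypos
            # Compare against the cached extents, as the bounding box store does.
            s["x_min"] = min(s["x_min"], xpos)
            s["x_max"] = max(s["x_max"], xpos)
            s["y_min"] = min(s["y_min"], ypos)
            s["y_max"] = max(s["y_max"], ypos)
    return stats


def summarize(s):
    """Return (centroid, area, bbox) for one label's accumulators."""
    recip = 1.0 / s["area"]  # one reciprocal, then two multiplications
    centroid = (s["x_accum"] * recip, s["y_accum"] * recip)
    bbox = (s["x_min"], s["y_min"],
            s["x_max"] - s["x_min"] + 1,   # width
            s["y_max"] - s["y_min"] + 1)   # height
    return centroid, s["area"], bbox


labels = [[0, 1, 1],
          [0, 1, 1]]
centroid, area, bbox = summarize(accumulate_statistics(labels)[1])
# centroid == (1.5, 0.5), area == 4, bbox == (1, 0, 2, 2)
```

Computing the reciprocal once and multiplying twice mirrors the structure described above, where a single 1/area result serves both centroid coordinates.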

Merge Logic

During the labeling step, each pixel is examined using only the current line and previous line of label values. This narrow focus means that a label might need correction after further parts of the blob are identified. Label correction can be a challenge for both frame-based and pixel-streaming implementations. The diagrams show two examples of when initial labeling requires correction.

The diagram on the left shows the current pixel connecting two regions through the previous label and top-right label. The diagram on the right shows the current pixel connecting two regions through the previous label and top label. The current pixel is the first location at which the algorithm detects that a merge is required. When the algorithm detects a merge, that pixel is flagged for correction. In both diagrams, the pixels are all part of the same blob and so each pixel must be assigned the same label, 1.

The labelandmerge MATLAB Function block checks for merges and returns a uint32 value that contains the two merged labels. The MergeQueue subsystem stores any merges that occur on the current line. At the end of each line, the cca subsystem reads the MergeQueue values and corrects the area, centroid, and bounding box values in the accumulators. The accumulated values for the two merged labels are added together and assigned to a single label. The input to each accumulator subsystem has a 2:1 multiplexer that enables the accumulator to be incremented either when a new label is found, or when a merge occurs.
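The end-of-line correction can be sketched in software as follows. This Python example is a hedged illustration, not the model's logic: the bit layout of the merge word (kept label in the upper 16 bits, dropped label in the lower 16 bits) is an assumption, as are the accumulator field names and the functions `pack_merge`, `unpack_merge`, and `apply_merges`.

```python
def pack_merge(keep_label, drop_label):
    """Pack two labels into one 32-bit word (16 bits each; layout assumed)."""
    for label in (keep_label, drop_label):
        if not 0 <= label <= 0xFFFF:
            raise ValueError("label does not fit in 16 bits")
    return (keep_label << 16) | drop_label


def unpack_merge(word):
    """Return (keep_label, drop_label) from a packed merge word."""
    return (word >> 16) & 0xFFFF, word & 0xFFFF


def apply_merges(stats, merge_queue):
    """Fold the accumulators of each dropped label into the kept label.

    stats maps label -> dict with area, x_accum, y_accum, x_min, x_max,
    y_min, y_max. The queue is emptied once every merge is processed.
    """
    for word in merge_queue:
        keep, drop = unpack_merge(word)
        if keep == drop:
            continue
        src = stats.pop(drop, None)
        if src is None:
            continue  # nothing accumulated under the dropped label
        dst = stats.get(keep)
        if dst is None:
            stats[keep] = src
            continue
        # Sums add; bounding box extents take the union of both boxes.
        for key in ("area", "x_accum", "y_accum"):
            dst[key] += src[key]
        dst["x_min"] = min(dst["x_min"], src["x_min"])
        dst["y_min"] = min(dst["y_min"], src["y_min"])
        dst["x_max"] = max(dst["x_max"], src["x_max"])
        dst["y_max"] = max(dst["y_max"], src["y_max"])
    merge_queue.clear()
    return stats
```

Because area and the coordinate sums are additive, the centroid of the merged blob follows directly from the combined accumulators. The sketch does not model how later pixels that still carry the dropped label are redirected to the kept label.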

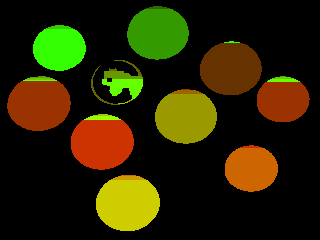

Output Display

At the end of each frame, the model updates two video displays. The Results On Image video display shows the input image with the bounding boxes (green rectangles) and centroids (red crosses) overlaid. The Label Image video display shows the results of the labeling stage before merging. In the Label Image display, the top of each coin has a different label than the rest of the coin. The merge stage corrects this behavior by merging the two labels into one. The bounding box returned for each blob shows that each coin was detected as a single label.

Implementation Results

To check and generate the HDL code referenced in this example, you must have the HDL Coder™ product. To generate the HDL code, use this command.

makehdl('BlobAnalysisHDL/BlobDetector')

The generated code was synthesized for a target of AMD® ZC706 SoC. The design met a 200 MHz timing constraint. The design uses less than 5% of each resource type, as shown in the table.

| Resource | Usage |
| --- | --- |
| DSP48 | 7 (0.78%) |
| Register | 4827 (1.1%) |
| LUT | 3800 (1.74%) |
| Slice | 1507 (2.67%) |
| BRAM | 25.5 (4.68%) |