lloyds

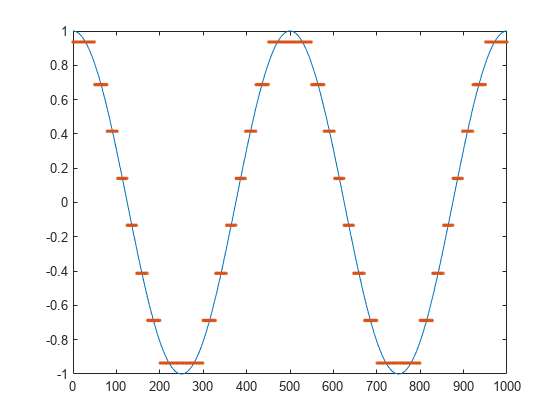

Optimize quantization parameters using Lloyd algorithm

Syntax

[partition,codebook] = lloyds(training_set,initcodebook)

Description

[partition,codebook] = lloyds(training_set,initcodebook) optimizes the scalar quantization parameters partition and codebook for the training data in the vector training_set. initcodebook is the initial guess of the codebook values.

Examples
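
The following is a minimal usage sketch of the two-input syntax, not an official example. The sine-wave training data and the four initial codebook values are illustrative assumptions. The final step assumes that the Communications Toolbox function quantiz is available to apply the optimized parameters.

```matlab
% Training data: one period of a sine wave (illustrative choice).
training_set = sin((0:1000) * pi / 500);

% Initial guess for a four-level codebook (illustrative values).
initcodebook = [-0.75 -0.25 0.25 0.75];

% Optimize the partition and codebook for the training data.
[partition, codebook] = lloyds(training_set, initcodebook);

% Apply the optimized quantizer and report its mean square distortion.
% quantiz is assumed to be available from the Communications Toolbox.
[index, quants, distor] = quantiz(training_set, partition, codebook);
fprintf('Mean square distortion: %g\n', distor);
```

With a codebook of N values, partition holds the N-1 boundaries between adjacent quantization cells.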

Input Arguments

Output Arguments

Algorithms

The lloyds function uses an iterative process to minimize the mean square distortion. Optimization processing ends when either of two stopping conditions is met.
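
To illustrate the kind of iterative process this section refers to, here is a rough sketch of a textbook Lloyd iteration for a scalar quantizer. It is not the toolbox implementation. The function name lloyd_sketch, the parameters tol and max_iter, and the relative-change stopping rule are all assumptions made for illustration.

```matlab
function [partition, codebook, distor] = lloyd_sketch(training_set, initcodebook, tol, max_iter)
% LLOYD_SKETCH  Illustrative Lloyd iteration for a scalar quantizer.
% Hypothetical helper for illustration; not the toolbox implementation.
    x = training_set(:);                  % samples as a column vector
    codebook = sort(initcodebook(:)).';   % codewords as an ascending row vector
    K = numel(codebook);
    prev_distor = inf;
    for iter = 1:max_iter
        % Nearest-neighbor step: boundaries at midpoints between codewords.
        partition = (codebook(1:end-1) + codebook(2:end)) / 2;
        % Cell index = 1 + number of boundaries below the sample.
        idx = sum(bsxfun(@gt, x, partition), 2) + 1;
        % Mean square distortion for the current codebook.
        distor = mean((x - codebook(idx).').^2);
        % Assumed stopping rule: small relative change in distortion.
        if abs(prev_distor - distor) <= tol * max(distor, eps)
            break;
        end
        prev_distor = distor;
        % Centroid step: move each codeword to the mean of its cell.
        sums = accumarray(idx, x, [K 1]);
        counts = accumarray(idx, 1, [K 1]);
        used = counts > 0;                % leave codewords of empty cells unchanged
        codebook(used) = sums(used) ./ counts(used);
    end
    % Keep the returned boundaries consistent with the final codebook.
    % distor is the value from the last evaluated iteration.
    partition = (codebook(1:end-1) + codebook(2:end)) / 2;
end
```

Saved as lloyd_sketch.m, the sketch could be called as [p,c,d] = lloyd_sketch(sin((0:1000)*pi/500), [-0.5 0.5], 1e-7, 100). Each pass alternates a nearest-neighbor step and a centroid step, neither of which can increase the mean square distortion on the training data, so the distortion sequence is non-increasing.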

References

[1] Lloyd, S.P., “Least Squares Quantization in PCM,” IEEE Transactions on Information Theory, Vol. IT-28, March, 1982, pp. 129–137.

[2] Max, J., “Quantizing for Minimum Distortion,” IRE Transactions on Information Theory, Vol. IT-6, March, 1960, pp. 7–12.

Version History

Introduced before R2006a