Pick Standard PVC Fittings of Different Shapes Using Semi-Structured Intelligent Bin Picking for UR5e

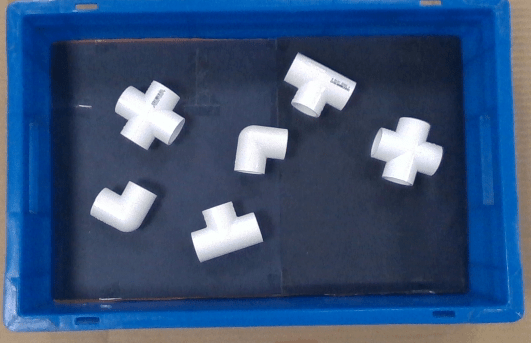

This example shows how to implement semi-structured intelligent bin picking of standard PVC fittings in four different shapes. It uses a Universal Robots UR5e cobot to perform the bin picking task: you detect and classify the objects, and then use the suction gripper attached to the cobot to sort the picked items into four separate bins.

Open the Project

To get started, open the example live script and access the supporting files by either clicking Open Live Script in the documentation or using the openExample function.

openExample('urseries/PickPVCFittingsDifferentShapesSemiStructuredUR5eExample');

Then, open the Simulink™ project file.

prj = openProject('PVCBinPickingUR5e/PVCBinPickingApplicationWithUR5eHW.prj');

Software Requirements

This example uses:

MATLAB

Robotics System Toolbox

Computer Vision Toolbox

Deep Learning Toolbox

Image Processing Toolbox

ROS Toolbox

Optimization Toolbox

Statistics and Machine Learning Toolbox

MATLAB Coder (Required if you want to use a MEX function for the motion planner)

Robotics System Toolbox Support Package for Universal Robots UR Series Manipulators

Computer Vision Toolbox Model for YOLO v4 Object Detection support package (Required if you want to train a detector model)

Prerequisites

To get an overview of the bin picking application workflow, refer to these examples:

At a high level, the bin picking task can be divided into two major modules:

Vision processing / Perception module

Motion planning module

Vision Processing or Perception Module

This workflow can be further divided into two areas:

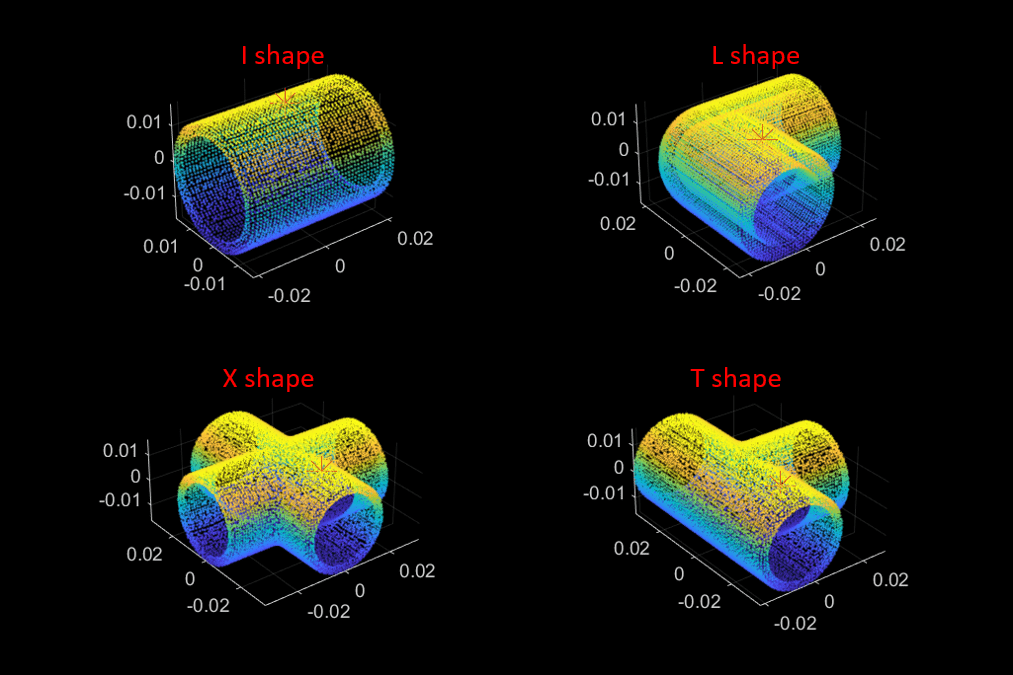

Object detection and classification using RGB data, which uses a deep learning network to locate and label the objects in the color images from the RGB and depth (RGBD) camera

Object pose estimation using 3D point cloud data, which estimates the pose of each identified object for use in motion planning
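To make the pose estimation step more concrete, here is a minimal base-MATLAB sketch of Principal Component Analysis (PCA) on a segmented point cloud: the centroid gives the object position, and the principal directions give an orientation frame. The point cloud, its dimensions, and the 30-degree yaw are synthetic assumptions for illustration only; the example's own implementation lives in its perception Live Script and may differ.

```matlab
% Synthetic point cloud of an elongated part (hypothetical data, in meters):
% longest extent along x, shorter extents along y and z
rng(0);
n = 2000;
pts = [0.10*(rand(n,1) - 0.5), 0.03*(rand(n,1) - 0.5), 0.01*(rand(n,1) - 0.5)];

% Rotate by a known yaw of 30 degrees and translate, to mimic a part lying in the bin
yaw = 30*pi/180;
Rz = [cos(yaw) -sin(yaw) 0; sin(yaw) cos(yaw) 0; 0 0 1];
pts = pts*Rz.' + [0.40, -0.10, 0.05];

% PCA: the centroid gives the position; the right singular vectors of the
% centered points give the principal directions (largest variance first)
centroid = mean(pts, 1);
[~, ~, V] = svd(pts - centroid, 'econ');

% Flip the third axis if needed so that the frame is right-handed
if det(V) < 0
    V(:, 3) = -V(:, 3);
end

% Yaw of the long axis; an axis has no preferred sign, so fold into [-90, 90)
estYawDeg = atan2(V(2, 1), V(1, 1))*180/pi;
estYawDeg = mod(estYawDeg + 90, 180) - 90;

% Homogeneous transform of the estimated object frame
T = [V, centroid.'; 0 0 0 1];
fprintf('Estimated yaw: %.1f deg\n', estYawDeg);
```

Because the sign of a principal direction is arbitrary, the long-axis yaw is only defined modulo 180 degrees, and for parts whose extents are similar along two axes the principal directions become poorly defined. A refinement step against a model of the part helps in both cases.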

The perception process involves two main steps:

Training and validation - Training the RGB-based object detection and classification network (YOLO v4) and validating it against the test dataset.

Online object detection - Detecting objects in real-time raw RGBD data using the pre-trained YOLO v4 network, and estimating object poses using Principal Component Analysis (PCA) and the Iterative Closest Point (ICP) algorithm.
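PCA gives a coarse pose; ICP then refines it by alternating between nearest-neighbor matching and a closed-form rigid fit. The sketch below is a minimal point-to-point ICP in base MATLAB that uses brute-force matching and the SVD-based (Kabsch) solution for the rigid fit. The L-shaped model points and the 10-degree offset are made-up test data. Computer Vision Toolbox also provides ICP registration through pcregistericp; this sketch does not claim to reproduce how the example itself performs the refinement.

```matlab
% Hypothetical model points of an L-shaped part (meters)
model = [0 0 0; 0.02 0 0; 0.04 0 0; 0.06 0 0;
         0 0.02 0; 0 0.04 0; 0 0.06 0; 0.03 0.03 0.01];

% Synthetic "scene": the model moved by a small, known rigid transform
ang = 10*pi/180;
Rtrue = [cos(ang) -sin(ang) 0; sin(ang) cos(ang) 0; 0 0 1];
ttrue = [0.005, -0.003, 0.002];
scene = model*Rtrue.' + ttrue;

% Point-to-point ICP, starting from the identity (in practice, from the PCA estimate)
R = eye(3);
t = zeros(1, 3);
for iter = 1:30
    moved = model*R.' + t;

    % Brute-force nearest neighbor in the scene for every transformed model point
    d2 = sum(moved.^2, 2) + sum(scene.^2, 2).' - 2*(moved*scene.');
    [~, idx] = min(d2, [], 2);
    matched = scene(idx, :);

    % Closed-form rigid fit (Kabsch/SVD) of the model onto its matched points
    muM = mean(model, 1);
    muS = mean(matched, 1);
    H = (model - muM).'*(matched - muS);
    [U, ~, W] = svd(H);
    D = diag([1, 1, sign(det(W*U.'))]);   % guards against a reflection
    R = W*D*U.';
    t = muS - muM*R.';
end
```

A fixed iteration count keeps the sketch short; a practical implementation would stop once the change in R and t falls below a tolerance and would reject outlier matches.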

Motion Planning Module

This workflow can be divided into two areas:

Smart motion planning, which selects the part to be picked for motion planning from the detected parts

Goal execution, which plans and executes the pick and place trajectories on the UR5e cobot
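The text does not state the rule that smart motion planning uses to choose the next part. As one plausible, hypothetical heuristic, the sketch below discards low-confidence detections and then picks the part lying highest in the bin, which is the one least likely to be occluded. The scores, class names, positions, and threshold are all made up for illustration.

```matlab
% Hypothetical detection results: one row per detected part
scores    = [0.91; 0.55; 0.88; 0.97];
labels    = {'Elbow'; 'Tee'; 'Cross'; 'Coupler'};   % hypothetical class names
centroids = [0.42 -0.10 0.061;                       % x, y, z in the robot base frame (m)
             0.38  0.05 0.080;
             0.45  0.02 0.074;
             0.40 -0.02 0.058];

minScore = 0.7;                          % hypothetical confidence threshold
candidates = find(scores >= minScore);   % keep only confident detections

if isempty(candidates)
    disp('No part meets the confidence threshold.');
else
    % Among the confident detections, pick the one lying highest in the bin
    [~, k] = max(centroids(candidates, 3));
    pickIdx = candidates(k);
    fprintf('Next part to pick: %s (score %.2f)\n', labels{pickIdx}, scores(pickIdx));
end
```

Here the second detection is rejected for its low score even though it lies highest, so the third detection is selected.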

In this example, the motion planning algorithm uses the manipulatorCHOMP planner. This planner optimizes trajectories for smoothness and collision avoidance by minimizing a cost function composed of a smoothness cost and a collision cost.
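To show what the two cost terms measure, the following sketch evaluates a textbook CHOMP-style cost for a short 2-D point trajectory near one circular obstacle: a smoothness term (half the sum of squared finite differences between waypoints) plus an obstacle term that is linear inside the obstacle, quadratic within a margin epsilon of its surface, and zero farther away. The trajectory, obstacle, epsilon, and lambda values are assumptions, and the internal cost definition of manipulatorCHOMP (for example, how it models the robot body and weights each term) may differ.

```matlab
% Hypothetical 2-D point trajectory (rows are waypoints), in meters
traj = [0 0; 0.1 0.05; 0.2 0.08; 0.3 0.05; 0.4 0];

% Hypothetical circular obstacle and cost parameters
obsCenter = [0.2 0.15];
obsRadius = 0.05;
epsilon   = 0.05;   % margin within which the obstacle cost is nonzero
lambda    = 1.0;    % weight on the smoothness term

% Smoothness cost: half the sum of squared finite-difference steps
steps = diff(traj, 1, 1);
smoothCost = 0.5*sum(steps(:).^2);

% Signed distance from each waypoint to the obstacle surface
dist = sqrt(sum((traj - obsCenter).^2, 2)) - obsRadius;

% CHOMP-style obstacle cost: linear inside, quadratic within epsilon, zero beyond
c = zeros(size(dist));
inside = dist < 0;
near   = dist >= 0 & dist <= epsilon;
c(inside) = -dist(inside) + epsilon/2;
c(near)   = (dist(near) - epsilon).^2/(2*epsilon);
collisionCost = sum(c);

totalCost = collisionCost + lambda*smoothCost;
```

Only the middle waypoint falls within the margin, so the obstacle term is (0.02 - 0.05)^2 / (2 * 0.05) = 0.009, and the smoothness term is 0.5 * 0.0468 = 0.0234. The original CHOMP formulation also weights the obstacle cost by the workspace speed of each body point; that factor is omitted here to keep the sketch short.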

The Live Script in the Motion Planning folder of this example's MATLAB project shows how to generate a MEX function for the bin picking motion planner by using C/C++ code generation. The MEX function is created from the motion planning algorithm described in the Pick-And-Place Workflow Using CHOMP for Manipulators example.

Generating a MEX function through C/C++ code generation reduces the planner's computation time, which in turn reduces the pick and place cycle time.

For more information on creating MEX functions for planners based on manipulatorRRT and manipulatorCHOMP, refer to the Generate Code for Manipulator Motion Planning in Perceived Environment example.

To learn more about generating MEX functions to accelerate the execution of your MATLAB programs, also refer to this example.

Hardware Requirements

Universal Robots UR5e

Robotiq Epick suction gripper

Intel® RealSense™ D415 camera

Interface used for Universal Robots UR5e

This example uses the functionality of the Robotics System Toolbox Support Package for Universal Robots UR Series Manipulators for trajectory and joint control of the Universal Robots UR5e hardware. The support package provides the urROSNode object, which enables control of the robot over the ROS interface.

Interface used for Robotiq Epick suction gripper

Most real-world cobot applications involve operations such as pick and place or dispensing, which require attaching and using an external end-effector tool suited to the application. The urROSNode object provides the handBackControl method, which lets you perform end-effector operations using standard URCaps alongside ROS external control.

Interface used for Intel® RealSense™ D415

For perception, this application uses the Intel® RealSense™ D415 depth camera. MATLAB connects to the camera over ROS by using the Intel RealSense ROS driver.
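Pose estimation needs 3D points, which come from the D415 depth image. As an illustration only, the sketch below back-projects the depth pixels inside a detector bounding box into a camera-frame point cloud with the standard pinhole model. It assumes 16-bit depth in millimeters and uses made-up intrinsics (fx, fy, cx, cy), a synthetic depth image, and a hypothetical bounding box; the real intrinsics come from the camera driver, and the example's own scripts may build the point cloud differently.

```matlab
% Hypothetical pinhole intrinsics (pixels); real values come from the camera driver
fx = 615; fy = 615; cx = 320; cy = 240;

% Synthetic 480-by-640 depth image in millimeters: flat bin floor at 600 mm
depthMM = 600*ones(480, 640, 'uint16');
depthMM(200:260, 300:360) = 550;   % a part sitting 50 mm above the floor
depthMM(230, 330) = 0;             % a missing (invalid) depth reading

% Hypothetical detector bounding box [x y width height], 1-based pixel indices
bbox = [300 200 61 61];
cols = bbox(1):(bbox(1) + bbox(3) - 1);
rows = bbox(2):(bbox(2) + bbox(4) - 1);
[u, v] = meshgrid(cols, rows);

% Convert depth to meters and drop invalid (zero) readings
z = double(depthMM(rows, cols))/1000;
valid = z > 0;

% Pinhole back-projection: X = (u - cx) Z / fx, Y = (v - cy) Z / fy
% (subtracting 1 converts MATLAB 1-based indices to 0-based pixel coordinates)
X = ((u(valid) - 1) - cx).*z(valid)/fx;
Y = ((v(valid) - 1) - cy).*z(valid)/fy;
points = [X, Y, z(valid)];   % N-by-3 point cloud in the camera frame (meters)
```

The resulting points are expressed in the camera frame; using them for motion planning also requires the transform from the camera to the robot base.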

The dataset used to train the YOLO v4 deep learning network was captured with this sensor.

To set up this connection, follow the detailed steps for installing the required ROS drivers.

Physical Setup Used in this Example

This example demonstrates the intelligent bin picking workflow on the Universal Robots UR5e using standard PVC fittings.

Five rectangular bins (one bin for keeping the assorted PVC fittings, and four bins for placing the sorted PVC fittings) are used in this example.

The dimensions of the PVC fittings and bins are provided in the initializeParametersForPVCBinPickingHardware.m script.
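Sorting into the four destination bins comes down to mapping each detected class to a place location. A minimal lookup sketch follows; the class names and coordinates are hypothetical placeholders, and the actual bin dimensions for this setup are defined in initializeParametersForPVCBinPickingHardware.m.

```matlab
% Hypothetical class names and place positions (x, y, z in the robot base frame, meters)
classNames = {'Elbow', 'Tee', 'Cross', 'Coupler'};
placePositions = [ 0.30  0.45 0.20;
                   0.10  0.45 0.20;
                  -0.10  0.45 0.20;
                  -0.30  0.45 0.20];

% Look up the destination bin for a detected part
detectedLabel = 'Cross';
binIdx = find(strcmp(classNames, detectedLabel));
if isempty(binIdx)
    error('No sorting bin is defined for class "%s".', detectedLabel);
end
placePos = placePositions(binIdx, :);
```

Failing loudly on an unknown label avoids silently dropping a part into the wrong bin if the detector's class list and the bin table ever diverge.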

RigidBodyTree Environment Setup for Motion Planning

In this example, you create a RigidBodyTree environment for the manipulatorCHOMP motion planning algorithm.

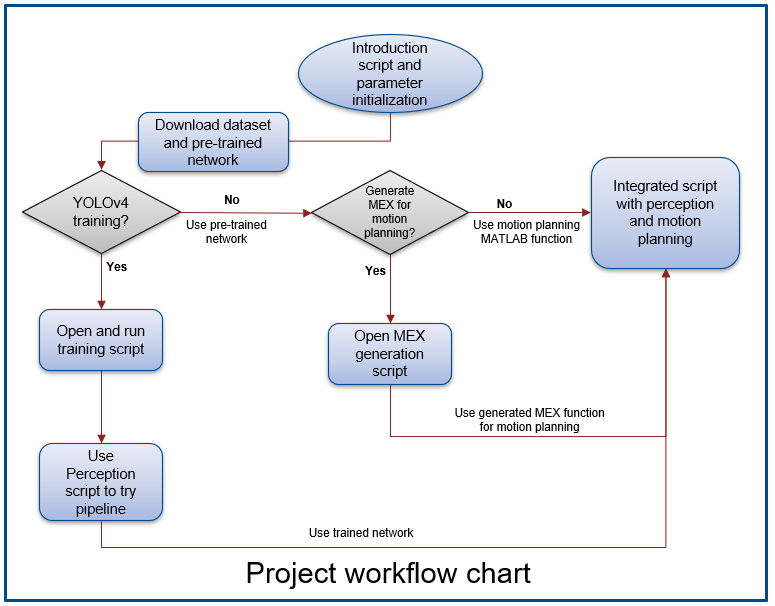

This flowchart shows the complete workflow, which uses the Live Scripts available in the Perception, Motion Planning, and Integration folders of the MATLAB project.

Parameter Initialization

The initialization script initializeParametersForPVCBinPickingHardware.m runs automatically when you open this MATLAB project. This script defines important parameters used by the perception, motion planning, and integration modules of the project. You can find this script in the Initialize folder, or run this command to open it. If your setup differs from the one used in this example, change the parameters accordingly.

open("initializeParametersForPVCBinPickingHardware.m");

Open Perception Module Script

Download the pre-trained YOLO v4 network and dataset

Download the dataset and the pre-trained network from this link. The perception script uses the pre-trained network to demonstrate the perception pipeline.

Note: Downloading the content from this link is necessary to run this example, because it includes the pre-trained network and the dataset for training the network.

Run this command to open the perception workflow script. This script covers the full perception pipeline, from training to object pose estimation.

open("DetectionAndPoseEstimationforPVCFittings.mlx");

Note: The following script demonstrates how to train a YOLO v4 object detector to identify PVC fittings.

open("trainYoloV4ForPVCObject.mlx");

Open Motion Planning Module Script

Run this command to open the motion planning workflow script. This script covers the RigidBodyTree simulation workflow and the MEX function generation steps for the motion planning module.

open("PVCBinPickingMotionPlanningMEXGeneration.mlx");

Open Integration Script

Run this command to open the main script of the integrated workflow. This script shows how to use the perception and motion planning modules to create a complete bin picking application using the Universal Robots UR5e hardware.

open("UR5eIntelligentBinPickingHWForPVCFittings.mlx");