Automate PIL Testing for Forward Vehicle Sensor Fusion

This example shows how to generate embedded code for the Forward Vehicle Sensor Fusion algorithm and verify it using processor-in-the-loop (PIL) simulation on NVIDIA® Jetson® hardware. It also shows how to automate the testing of the algorithm on this board using Simulink® Test™. In this example, you:

Configure the model to run a PIL simulation, and verify the results against a normal simulation.

Perform automated PIL testing for the forward vehicle sensor fusion algorithm using Simulink Test.

Introduction

The forward vehicle sensor fusion component of an automated driving system fuses information from different sensors to perceive the surrounding environment in front of an autonomous vehicle. This component is central to the decision-making process in various automated driving applications, such as highway lane following and forward collision warning. This component is commonly deployed to the target hardware.

This example shows how you can test a forward vehicle sensor fusion algorithm on NVIDIA Jetson hardware using PIL simulation. A PIL simulation cross-compiles generated source code, and then downloads and runs object code on your target hardware. By comparing the normal and PIL simulation results, you can test the numerical equivalence of your model and the generated code. For more information, see SIL and PIL Simulations (Embedded Coder).

This example builds upon the Forward Vehicle Sensor Fusion example.

In this example, you:

Review the test bench model — The model contains sensors, a sensor fusion and tracking algorithm, and metrics to assess functionality.

Simulate the model — Configure the test bench model for a test scenario. Simulate the model and visualize the results.

Generate C code — Configure the reference model to generate C code.

Run PIL simulation — Perform PIL simulation on NVIDIA Jetson hardware and assess the results.

Automate the PIL testing — Configure the Test Manager to simulate each test scenario, assess success criteria, and report results. Explore the results dynamically in the Test Manager, and export them to a PDF for external review.

Review Test Bench Model

To explore the test bench model, load the forward vehicle sensor fusion project.

openProject("FVSensorFusion");

This example reuses the ForwardVehicleSensorFusionTestBench model from the Forward Vehicle Sensor Fusion example.

Open the test bench model.

open_system("ForwardVehicleSensorFusionTestBench")

Opening this model runs the helperSLForwardVehicleSensorFusionSetup script, which initializes the scenario using the drivingScenario object in the base workspace. It also configures the sensor fusion and tracking parameters, vehicle parameters, and the Simulink bus signals required for defining the inputs and outputs for the ForwardVehicleSensorFusionTestBench model. The test bench model contains these subsystems:

Sensors and Environment: Specifies the scene, vehicles, and sensors used for simulation.

Forward Vehicle Sensor Fusion: Implements the radar clustering, detection concatenation, and object tracking algorithms.

Evaluate Tracker Metrics: Assesses the tracker performance using the generalized optimal subpattern assignment (GOSPA) metric between a set of tracks and their ground truths.

For more information about these subsystems, see the Forward Vehicle Sensor Fusion example.

Simulate Model

Configure the ForwardVehicleSensorFusionTestBench model to simulate the scenario_LFACC_03_Curve_StopnGo scenario. This scenario contains six vehicles, including the ego vehicle. The scenario function defines their trajectories. In this scenario, the ego vehicle has a lead vehicle in its lane. In the lane to the right of the ego vehicle, target vehicles indicated in green and blue are traveling in the same direction. In the lane to the left of the ego vehicle, target vehicles indicated in yellow and purple are traveling in the opposite direction.

helperSLForwardVehicleSensorFusionSetup(scenarioFcnName="scenario_LFACC_03_Curve_StopnGo")

Simulate the test bench model.

sim("ForwardVehicleSensorFusionTestBench")

Simulation opens the 3D Simulation window, which displays the scenario, but does not display detections or sensor coverage. Use the Bird's-Eye Scope to visualize the ego actor, target actors, sensor coverage and detections, and confirmed tracks. To visualize only the sensor data, turn off the 3D Simulation window during simulation by clearing the Display 3D simulation window parameter in the Simulation 3D Scene Configuration block.

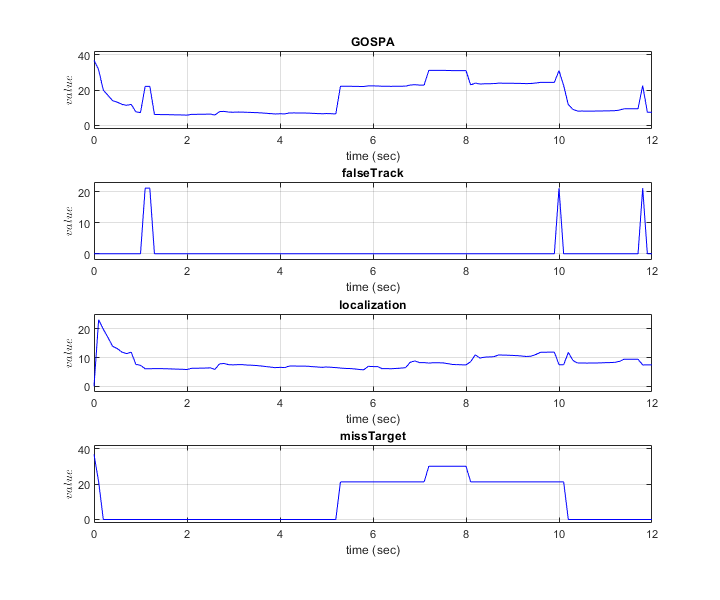

During the simulation, the model outputs the GOSPA metric and its components. The model logs the metrics, with the confirmed tracks and ground truth information, to the base workspace variable logsout. You can plot the values in logsout by using the helperPlotForwardVehicleSensorFusionResults function.

hFigResults = helperPlotForwardVehicleSensorFusionResults(logsout);

The plots show that the localization error accounts for most of the GOSPA metric values. Notice that the missed target component starts from a high value, due to the establishment delay of the tracker, and goes down to zero after some time. The other peaks in the missed target curve have two causes. First, the vehicles traveling in the opposite direction are initially occluded by the lead vehicle, so the sensors produce no detections for them. Second, once those vehicles approach the ego vehicle, the tracker again needs some time to establish tracks for them.

Close the figure.

close(hFigResults)
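The logged data described above can also be inspected directly, without the helper plot. The following is a minimal sketch, assuming that logsout is the Simulink.SimulationData.Dataset object that the simulation wrote to the base workspace. The element names it prints depend on the model and are not listed in this example.

```matlab
% Sketch: list the signals logged by the last simulation.
% Assumes logsout exists in the base workspace as a
% Simulink.SimulationData.Dataset object.
signalNames = getElementNames(logsout);
fprintf("logsout contains %d elements:\n",numElements(logsout));
disp(signalNames)
```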

Generate C Code

You can now generate C code for the algorithm, apply common optimizations, and generate a report to facilitate exploring the generated code. Configure the Forward Vehicle Sensor Fusion model to generate C code for real-time implementation of the algorithm. Set the model parameters to enable code generation and display the configuration values.

Set the model parameters to enable C code generation and save the model.

helperSetModelParametersForPIL("ForwardVehicleSensorFusion")
save_system("ForwardVehicleSensorFusion")

Generate code for the reference model.

slbuild("ForwardVehicleSensorFusion");

Review the code generation report. Notice that the "Memory Information" metric shows that the algorithm requires less than 1 MB of memory during execution. As such, you can deploy and run the model on any hardware with 1 MB or more of RAM.

Run PIL Simulation

After generating the C code, you can now verify the code using PIL simulation on an NVIDIA Jetson board. The PIL simulation enables you to test the functional equivalence of the compiled, generated code on the intended hardware. For more information about PIL simulation, see SIL and PIL Simulations (Embedded Coder).

Follow these steps to perform a PIL simulation.

1. Create a hardware object for an NVIDIA Jetson board.

hwObj = jetson("jetson-name","ubuntu","ubuntu");

2. Set up the model configuration parameters for the Forward Vehicle Sensor Fusion reference model.

% Set the hardware board.
set_param("ForwardVehicleSensorFusion",HardwareBoard="NVIDIA Jetson")
save_system("ForwardVehicleSensorFusion")

% Set model parameters for PIL simulation.
set_param("ForwardVehicleSensorFusionTestBench/Forward Vehicle Sensor Fusion",SimulationMode="Processor-in-the-loop")
helperSetModelParametersForPIL("ForwardVehicleSensorFusionTestBench")

3. Simulate the model and observe the behavior.

sim("ForwardVehicleSensorFusionTestBench")
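Before launching the simulation in step 3, it can be worth confirming that the settings from step 2 took effect and that the board is reachable. This is a sketch only. It assumes that hwObj from step 1 is still in the workspace and that the jetson object supports running shell commands on the board through its system function. The exact strings that get_param returns can vary by release, so the sketch prints them rather than comparing them.

```matlab
% Sketch: confirm the PIL configuration before simulating.
% The expected values are the ones set in step 2.
boardName = get_param("ForwardVehicleSensorFusion","HardwareBoard");
simMode = get_param("ForwardVehicleSensorFusionTestBench/Forward Vehicle Sensor Fusion", ...
    "SimulationMode");
fprintf("Hardware board: %s\nSimulation mode: %s\n",boardName,simMode);

% Assumption: system(hwObj,command) runs a shell command on the board.
% uname -m only reports the processor architecture.
disp(system(hwObj,'uname -m'))
```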

Compare the outputs from normal simulation mode and PIL simulation mode.

runIDs = Simulink.sdi.getAllRunIDs;
normalSimRunID = runIDs(end - 1);
PilSimRunID = runIDs(end);
diffResult = Simulink.sdi.compareRuns(normalSimRunID,PilSimRunID);

Plot the differences in the sensor fusion metrics computed from normal mode and PIL mode.

hFigDiffResults = helperPlotFVSFDiffSignals(diffResult);

Note that the GOSPA metrics captured during both runs are the same, which confirms that the PIL mode simulation produces the same results as the normal mode simulation.

Close the figure.

close(hFigDiffResults)
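The comparison can also be checked without a plot. The following sketch assumes that diffResult is the Simulink.sdi.DiffRunResult object returned by Simulink.sdi.compareRuns above, and that your release provides its Count property and the Name and Status properties of each signal result. It prints one line per compared signal, and makes no claim about which signals appear.

```matlab
% Sketch: print the comparison status of each signal in diffResult.
% Assumes diffResult was returned by Simulink.sdi.compareRuns.
for idx = 1:diffResult.Count
    signalResult = getResultByIndex(diffResult,idx);
    fprintf("%s: %s\n",signalResult.Name,string(signalResult.Status));
end
```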

Assess Execution Time

During the PIL simulation, the host computer logs the execution-time metrics for the deployed code to the executionProfile variable in the MATLAB base workspace. You can use the execution-time metrics to determine whether the generated code meets the requirements for real-time deployment on your target hardware. These times indicate the performance of the generated code on the target hardware. For more information, see Create Execution-Time Profile for Generated Code (Embedded Coder).

Plot the execution time for the _step function.

hFigExecProfile = figure;
plot(1e3*executionProfile.Sections(2).ExecutionTimeInSeconds)
xlabel("Time step")
ylabel("Time (ms)")

Notice that the run time of the tracker for this scenario is less than two milliseconds, which is much shorter than the 100-millisecond update time of the camera and radar sensors. This short run time indicates that the algorithm is capable of real-time computation on this board.

Close the figure.

close(hFigExecProfile)
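The comparison between the step execution time and the sensor update time can also be expressed numerically. This is a minimal sketch, assuming that executionProfile is still in the base workspace and that Sections(2) corresponds to the step function, as in the plot above. The 100-millisecond value is the sensor update time stated in this section.

```matlab
% Sketch: compare the measured step execution time with the sensor
% update time. Assumes executionProfile is in the base workspace and
% that Sections(2) is the step function, as in the plot above.
stepTimesMs = 1e3*executionProfile.Sections(2).ExecutionTimeInSeconds;
sensorUpdateMs = 100; % Update time of the camera and radar sensors
fprintf("Mean step time: %.3f ms\n",mean(stepTimesMs));
fprintf("Max step time: %.3f ms\n",max(stepTimesMs));
fprintf("Worst-case share of update time: %.1f%%\n", ...
    100*max(stepTimesMs)/sensorUpdateMs);
```

Looking at the maximum rather than the mean is a deliberate choice here, because a real-time deadline has to hold at every time step.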

Automate PIL Testing

Simulink Test includes the Test Manager, which you can use to author test cases for Simulink models. After authoring your test cases, you can group them and execute them individually or in a batch. Open the ForwardVehicleSensorFusionPILTests.mldatx test file in the Test Manager. The Test Manager is configured to automate the PIL testing of the sensor fusion and tracking algorithm by using equivalence tests. Equivalence tests compare the normal and PIL simulation results. For more information, see Back-to-Back Equivalence Testing (Simulink Test).

This example reuses the test scenarios from the Automate Testing for Forward Vehicle Sensor Fusion example, which demonstrates the SIL verification of a forward vehicle sensor fusion algorithm.

sltestmgr

testFile = sltest.testmanager.load("ForwardVehicleSensorFusionPILTests.mldatx");

Each test case contains two sections: SIMULATION1 and SIMULATION2. The SIMULATION1 section uses its POST-LOAD callback to run the setup script with appropriate inputs, and configures the ForwardVehicleSensorFusion model to run in normal simulation mode, as shown in this figure.

The SIMULATION2 section uses its POST-LOAD callback to set up the hardware, and configures the ForwardVehicleSensorFusion model to run in PIL simulation mode, as shown in this figure.

Running the test scenarios from a test file requires a hardware object. If you have not already created one, execute the first step in the Run PIL Simulation section.
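One way to make this requirement safe to rerun is to create the hardware object only when it does not already exist. The sketch below reuses the placeholder host name, user name, and password from the Run PIL Simulation section. Replace them with the values for your board.

```matlab
% Sketch: create the hardware object only if it is missing.
% "jetson-name", "ubuntu", and "ubuntu" are the placeholder host name,
% user name, and password from the Run PIL Simulation section.
if ~exist("hwObj","var")
    hwObj = jetson("jetson-name","ubuntu","ubuntu");
end
```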

Run and Explore Results for Single Test Scenario

Test the system-level model on the scenario_LFACC_03_Curve_StopnGo scenario.

testSuite = getTestSuiteByName(testFile,"PIL Equivalence Tests");
testCase = getTestCaseByName(testSuite,"scenario_LFACC_03_Curve_StopnGo");
resultObj = run(testCase);

Generate a report of the test results after the simulation.

sltest.testmanager.report(resultObj,"Report.pdf", ...
    Title="Forward Vehicle Sensor Fusion PIL Simulation Results", ...
    IncludeMATLABFigures=true, ...
    IncludeErrorMessages=true, ...
    IncludeTestResults=false, ...
    LaunchReport=true);

Examine Report.pdf. The Test environment section shows the platform on which the test is run and the MATLAB version used for testing. The Summary section shows the outcome of the test and the duration of the simulation, in seconds. The Results section shows pass or fail results based on the assessment criteria.

Run and Explore Results for All Test Scenarios

Run a simulation of the system for all the tests by using the run(testFile) command. Alternatively, you can click Play in the Test Manager app.

View the results in the Results and Artifacts tab of the Test Manager. You can visualize the overall pass or fail results.

You can find the generated report in your current working directory. This report contains a detailed summary of the pass or fail statuses and plots for each test case.
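The batch run and its report can be scripted in the same way as the single-scenario case. The following is a sketch, assuming that testFile was loaded as shown earlier and that the hardware object exists. The report file name AllScenariosReport.pdf is hypothetical, and the report options are a subset of those used for the single-scenario report.

```matlab
% Sketch: run every test case in the test file and summarize the outcome.
resultSet = run(testFile);
fprintf("Passed: %d, failed: %d, total: %d\n", ...
    resultSet.NumPassed,resultSet.NumFailed,resultSet.NumTotal);

% Hypothetical file name; the options mirror a subset of those used for
% the single-scenario report.
sltest.testmanager.report(resultSet,"AllScenariosReport.pdf", ...
    Title="Forward Vehicle Sensor Fusion PIL Simulation Results", ...
    IncludeMATLABFigures=true, ...
    IncludeErrorMessages=true);
```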

Conclusion

This example has shown you how to automate the PIL testing of the forward vehicle sensor fusion algorithm on an NVIDIA Jetson hardware board. The generated code for this algorithm requires less than 1 MB of memory during execution, which makes it suitable for testing on any hardware with at least 1 MB of RAM.

See Also

Blocks

- Scenario Reader | Vehicle To World | Simulation 3D Scene Configuration | Cuboid To 3D Simulation | Multi-Object Tracker

Topics

- Forward Vehicle Sensor Fusion

- Integrate and Verify C++ Code of Sensor Fusion Algorithm in Simulink

- Automate Testing for Forward Vehicle Sensor Fusion

- Automate Real-Time Testing for Forward Vehicle Sensor Fusion

- Automate Real-Time Testing of Highway Lane Following Controller Using ASAM XIL

- Automate Testing for Lane Marker Detector

- Automate Testing for Vision Vehicle Detector

- Automate Real-Time Testing for Highway Lane Following Controller

- Highway Lane Following