Automate Testing for Vision Vehicle Detector

This example shows how to automate testing of a YOLOv2-based vehicle detector algorithm and the generated code by using Simulink® Test™. In this example, you:

Assess the behavior of the YOLOv2-based vehicle detector algorithm on different test scenarios with different test requirements.

Automate testing of the YOLOv2-based vehicle detector algorithm and the generated Math Kernel Library for Deep Neural Networks (MKL-DNN) code or CUDA code.

Introduction

A vehicle detector is a fundamental perception component of an automated driving application. The detector analyzes images captured using a monocular camera sensor and returns information about the vehicles present in the image. You can design and simulate a vehicle detector using MATLAB® or Simulink, and then assess its accuracy using known ground truth. You can define system requirements to configure test scenarios for simulation. You can integrate the detector into an external software environment and deploy it to a vehicle through generated code. Code generation and verification of the Simulink model ensures functional equivalence between simulation and real-time implementation.

For information about how to design and generate code for a vehicle detector, see Generate Code for Vision Vehicle Detector.

This example shows how to automate testing the vehicle detector and the code generation models against multiple scenarios using Simulink Test. The scenarios are based on system-level requirements. In this example, you:

Review requirements — Explore the test scenarios and review requirements that describe test conditions.

Review the test bench model — Review the vision vehicle detector test bench model, which contains metric assessments. These metric assessments integrate the test bench model with Simulink Test for automated testing.

Disable runtime visualizations — Disable runtime visualizations to reduce execution time for automated testing.

Automate testing — Configure a test manager to simulate each test scenario with the YOLOv2 vehicle detector, assess success criteria, and report the results. You can explore the results dynamically using the test manager and export them to a PDF for external review.

Automate testing with generated code — Configure the model to generate either MKL-DNN or CUDA code from the YOLOv2 vehicle detector, run automated tests on the generated code, and get coverage analysis results.

Automate testing in parallel — Reduce overall execution time for the tests by using parallel computing on a multicore computer.

In this example, you enable system-level simulation through integration with the Unreal Engine™ from Epic Games®.

if ~ispc
    error(['This example is supported only on Microsoft', char(174), ' Windows', char(174), '.']);
end

Review Requirements

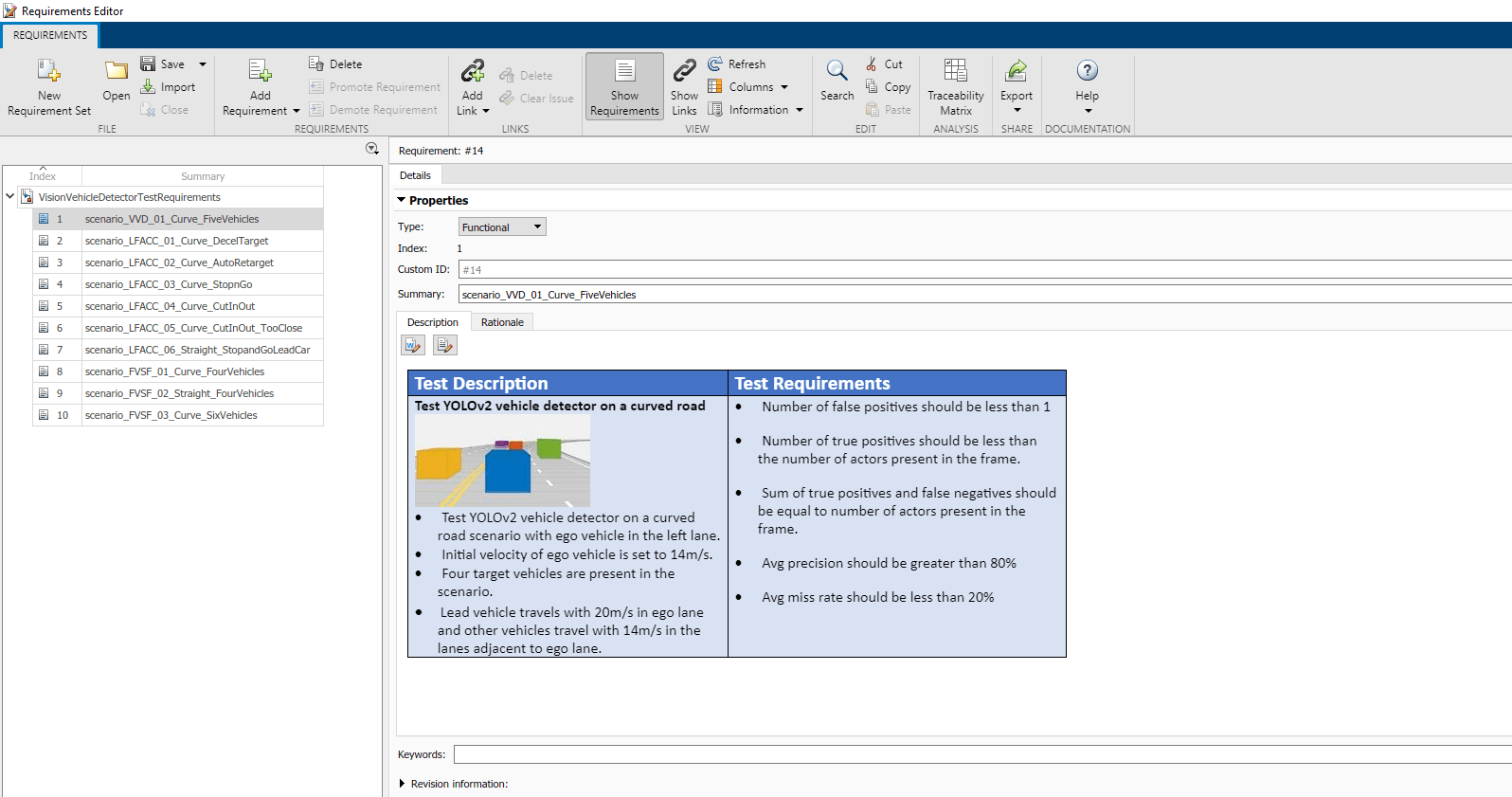

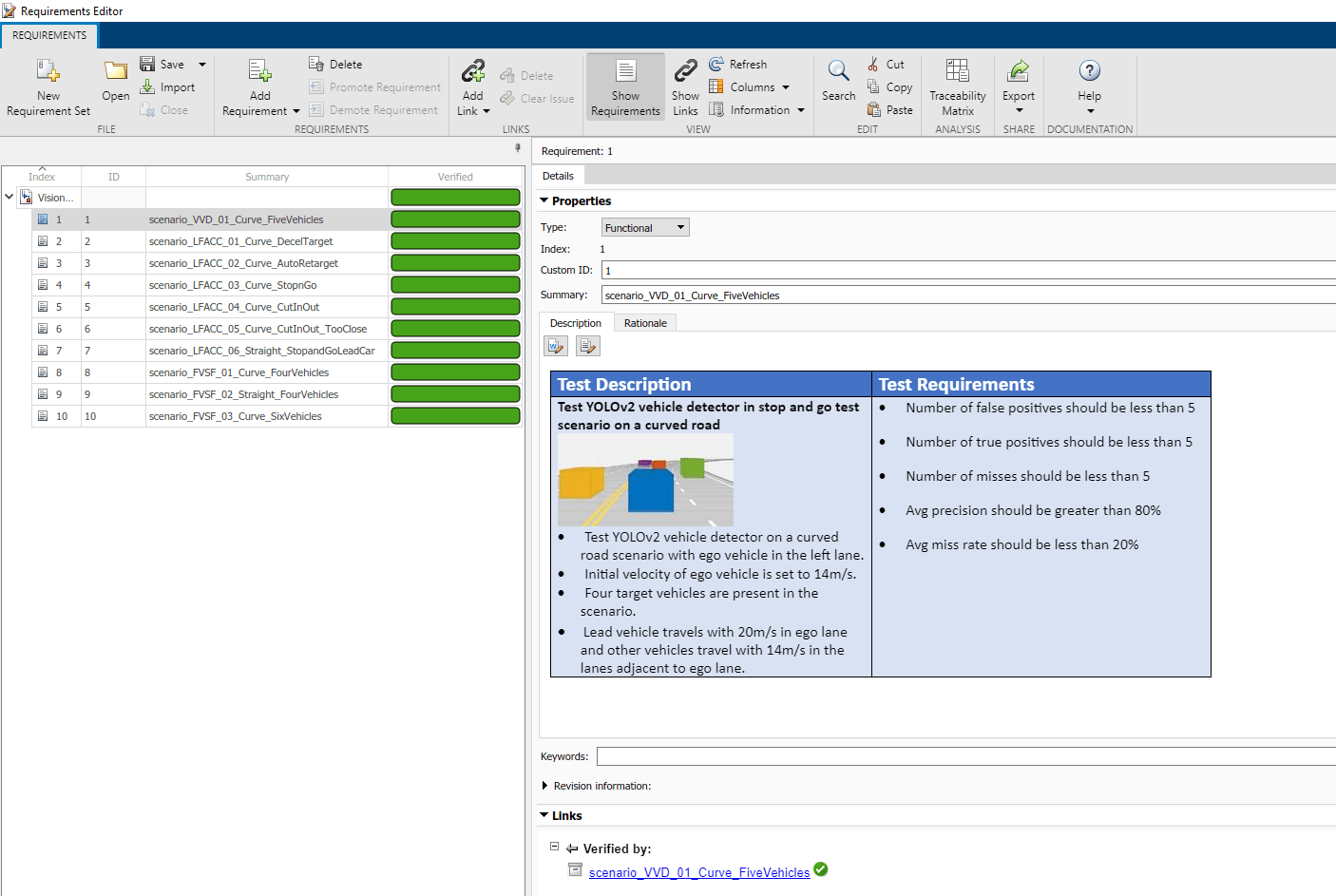

This example contains 10 test scenarios for evaluating the model. To define the high-level testing requirements for each scenario, use Requirements Toolbox™.

To explore the test bench model, load the vision vehicle detector project.

openProject("VisionVehicleDetector");

Open the test requirements file.

open("VisionVehicleDetectorTestRequirements.slreqx")

You can also open the file using the Requirements tab of the Requirements Manager app in Simulink.

The panel displays information about the test scenarios and the test requirements in textual and graphical format.

scenario_VVD_01_Curve_FiveVehicles: Curved road scenario with the ego vehicle in the left lane and four target vehicles traveling in adjacent lanes.

scenario_LFACC_01_Curve_DecelTarget: Curved road scenario with a decelerating lead vehicle in the ego lane.

scenario_LFACC_02_Curve_AutoRetarget: Curved road scenario with changing lead vehicles in the ego lane.

scenario_LFACC_03_Curve_StopnGo: Curved road scenario with a lead vehicle slowing down in the ego lane.

scenario_LFACC_04_Curve_CutInOut: Curved road scenario with a lead vehicle cutting into the ego lane to overtake a slow-moving vehicle in the adjacent lane, and then cutting out of the ego lane.

scenario_LFACC_05_Curve_CutInOut_TooClose: Curved road scenario with a lead vehicle cutting aggressively into the ego lane to overtake a slow-moving vehicle in the adjacent lane, and then cutting out of the ego lane.

scenario_LFACC_06_Straight_StopandGoLeadCar: Straight road scenario with a lead vehicle that breaks down in the ego lane.

scenario_FVSF_01_Curve_FourVehicles: Curved road scenario with a lead vehicle cutting out of the ego lane to overtake a slow-moving vehicle.

scenario_FVSF_02_Straight_FourVehicles: Straight road scenario where non-ego vehicles vary their velocities.

scenario_FVSF_03_Curve_SixVehicles: Curved road scenario where the ego car varies its velocity.

Review Test Bench Model

The example reuses the model from Generate Code for Vision Vehicle Detector.

Open the test bench model.

open_system("VisionVehicleDetectorTestBench")

To configure the test bench model, use the helperSLVisionVehicleDetectorSetup script. Specify a test scenario as input to the setup script by using the scenarioFcnName input argument. The value for scenarioFcnName must be one of the scenario names specified in the test requirements. Specify the detector variant name as YOLOv2 to use the YOLOv2 vehicle detector in normal mode and in software-in-the-loop (SIL) mode.
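Because the setup script accepts only scenario names from the test requirements, it can help to check a name before passing it on. The following is a minimal sketch, not part of the shipped example: the variables validScenarios and isValid are illustrative names, and the list is assumed to be a complete copy of the scenario names in the requirements.

```matlab
% Scenario names copied from the test requirements (assumed complete).
validScenarios = [
    "scenario_VVD_01_Curve_FiveVehicles"
    "scenario_LFACC_01_Curve_DecelTarget"
    "scenario_LFACC_02_Curve_AutoRetarget"
    "scenario_LFACC_03_Curve_StopnGo"
    "scenario_LFACC_04_Curve_CutInOut"
    "scenario_LFACC_05_Curve_CutInOut_TooClose"
    "scenario_LFACC_06_Straight_StopandGoLeadCar"
    "scenario_FVSF_01_Curve_FourVehicles"
    "scenario_FVSF_02_Straight_FourVehicles"
    "scenario_FVSF_03_Curve_SixVehicles"
    ];

% Name you intend to pass as the scenarioFcnName argument.
scenarioFcnName = "scenario_VVD_01_Curve_FiveVehicles";

% Stop early with a readable message if the name is not in the list.
isValid = ismember(scenarioFcnName, validScenarios);
if ~isValid
    error("Unknown scenario name: %s", scenarioFcnName);
end
```

A check like this surfaces a mistyped scenario name immediately rather than partway through model setup.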

Run the setup script.

detectorVariantName = "YOLOv2";
helperSLVisionVehicleDetectorSetup(scenarioFcnName="scenario_VVD_01_Curve_FiveVehicles", ...
    detectorVariantName=detectorVariantName)

You can now simulate the model and visualize the results. For more details on the simulation and analysis of the simulation results, see the Generate Code for Vision Vehicle Detector example.

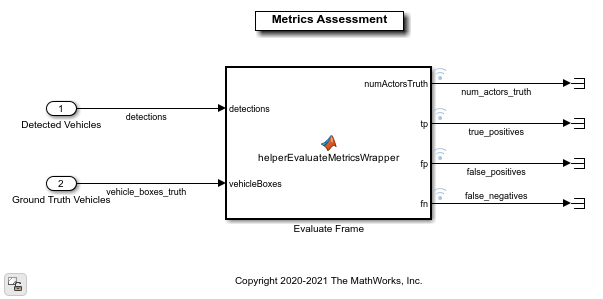

This example focuses on automating the simulation runs to test the YOLOv2 vehicle detector on different driving scenarios by using Simulink Test. The Metrics Assessment subsystem outputs the required signals to compute the metrics.

Open the Metrics Assessment subsystem.

open_system("VisionVehicleDetectorTestBench/Metrics Assessment");

The Metrics Assessment subsystem outputs these values:

Number of actors — The number of vehicles in range of the camera sensor at any given time.

True positives — The number of vehicles that the algorithm detects correctly.

False negatives — The number of vehicles that are present, but that the algorithm does not detect.

False positives — The number of vehicles that the algorithm detects when the vehicles are not present in reality.

The model logs the output results from the Metrics Assessment subsystem to the base workspace variable logsout. You can verify the performance of the YOLOv2 vehicle detector algorithm by validating and plotting the computed metrics. After the simulation, you can also compute the average precision and average miss rate from these logged metrics and use them to verify the performance of the YOLOv2 vehicle detector.
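One way to derive summary metrics from the logged counts is sketched below. The vectors are made-up stand-ins for the logged true positive, false positive, and false negative signals, not simulation output. The variable names and the choice to aggregate counts over the whole run are assumptions for illustration; the helper functions in the example may compute these metrics differently.

```matlab
% Illustrative per-time-step counts (made-up values, not simulation output).
truePositives  = [2 3 3 4 4];
falsePositives = [0 1 0 0 1];
falseNegatives = [1 0 1 0 0];

% Aggregate the counts over the whole run.
tp = sum(truePositives);
fp = sum(falsePositives);
fn = sum(falseNegatives);

% Precision: fraction of reported detections that are real vehicles.
precision = tp / (tp + fp);

% Sensitivity: fraction of real vehicles that the detector found.
sensitivity = tp / (tp + fn);

% Miss rate: fraction of real vehicles that the detector missed.
missRate = fn / (tp + fn);

fprintf("Precision: %.3f, sensitivity: %.3f, miss rate: %.3f\n", ...
    precision, sensitivity, missRate);
```

Sensitivity and miss rate always sum to 1 under these definitions. If a run contains no detections or no vehicles, the denominators are zero and the results are NaN, so a real check would need to handle that case.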

Disable Runtime Visualizations

The test bench model visualizes intermediate outputs during the simulation. These visualizations are not required when the tests are automated. You can reduce the execution time for automated testing by disabling them.

Disable runtime visualizations for the Vision Vehicle Detector subsystem.

load_system("VisionVehicleDetector")
blk = "VisionVehicleDetector/Pack Detections/Pack Vehicle Detections";
set_param(blk,EnableDisplay="off");

Configure the Simulation 3D Scene Configuration block to disable the 3D simulation window and run Unreal Engine in headless mode.

blk = "VisionVehicleDetectorTestBench/Sensors and Environment/Simulation 3D Scene Configuration";
set_param(blk,EnableWindow="off");

Automate Testing

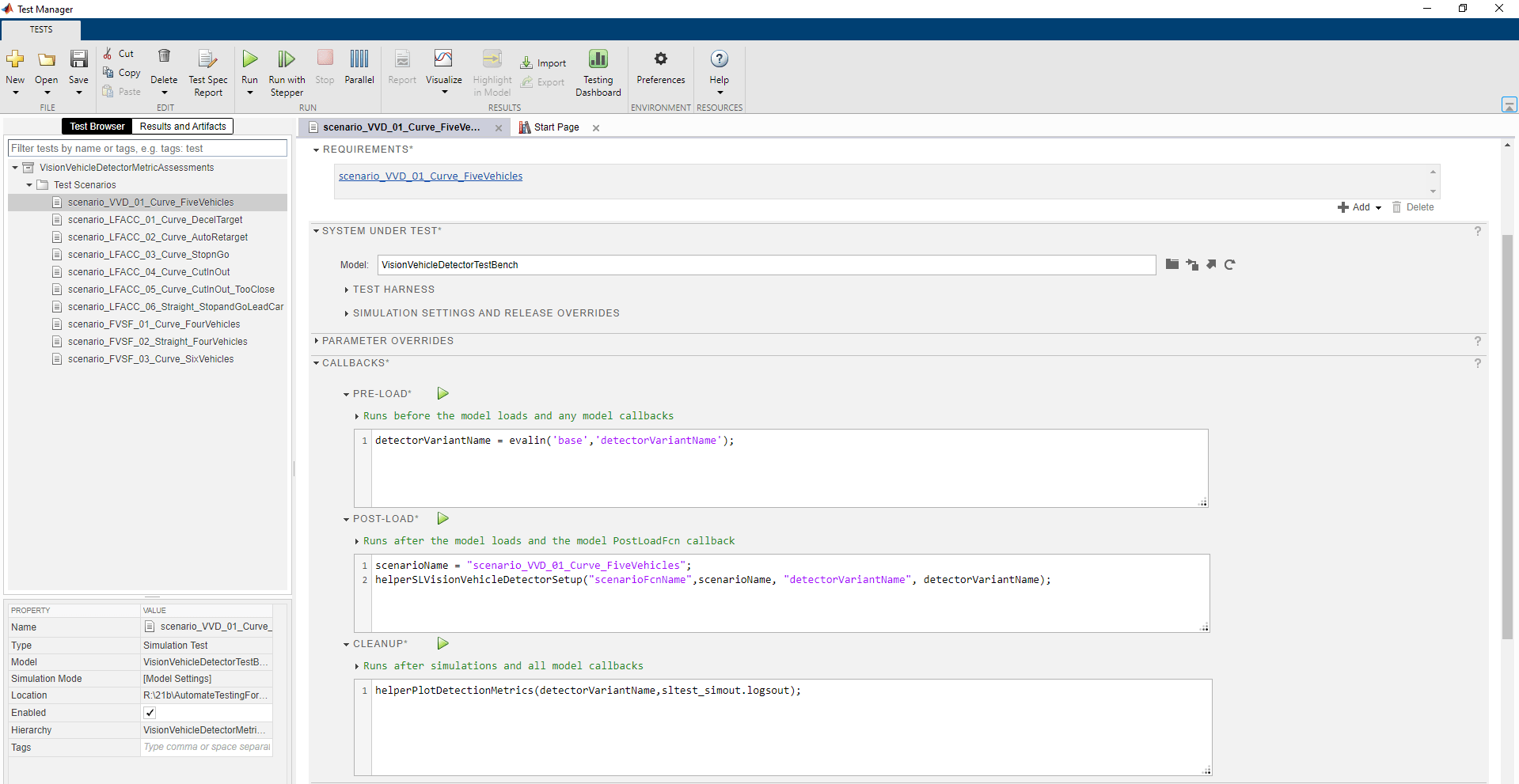

Open the VisionVehicleDetectorTestAssessments.mldatx test file in the Test Manager. The Test Manager is configured to automate testing of the YOLOv2 vehicle detector algorithm.

sltestmgr

testFile = sltest.testmanager.load("VisionVehicleDetectorTestAssessments.mldatx");

The test cases in the Test Manager are linked to the test requirements in the Requirements Editor. Each test case uses the POST-LOAD callback to run the setup script with appropriate inputs. After simulating the test cases, the Test Manager invokes these assessments to evaluate the performance of the algorithm:

CLEANUP: Invokes the helperPlotDetectionMetrics function to plot detection results from the Metrics Assessment subsystem. For more information about these plots, see the Generate Code for Vision Vehicle Detector example.

LOGICAL AND TEMPORAL ASSESSMENTS: Invokes custom conditions to evaluate the algorithm.

CUSTOM CRITERIA: Invokes the helperVerifyPrecisionAndSensitivity function to evaluate the precision and sensitivity metrics.

Run and Explore Results for Single Test Scenario

Test the system-level model on the scenario_VVD_01_Curve_FiveVehicles scenario.

testSuite = getTestSuiteByName(testFile,"Test Scenarios");
testCase = getTestCaseByName(testSuite,"scenario_VVD_01_Curve_FiveVehicles");
resultObj = run(testCase);

Generate the test reports obtained after simulation.

sltest.testmanager.report(resultObj,"Report.pdf", ...
    Title="YOLOv2 Vehicle Detector", ...
    IncludeMATLABFigures=true,IncludeErrorMessages=true, ...
    IncludeTestResults=false,LaunchReport=true);

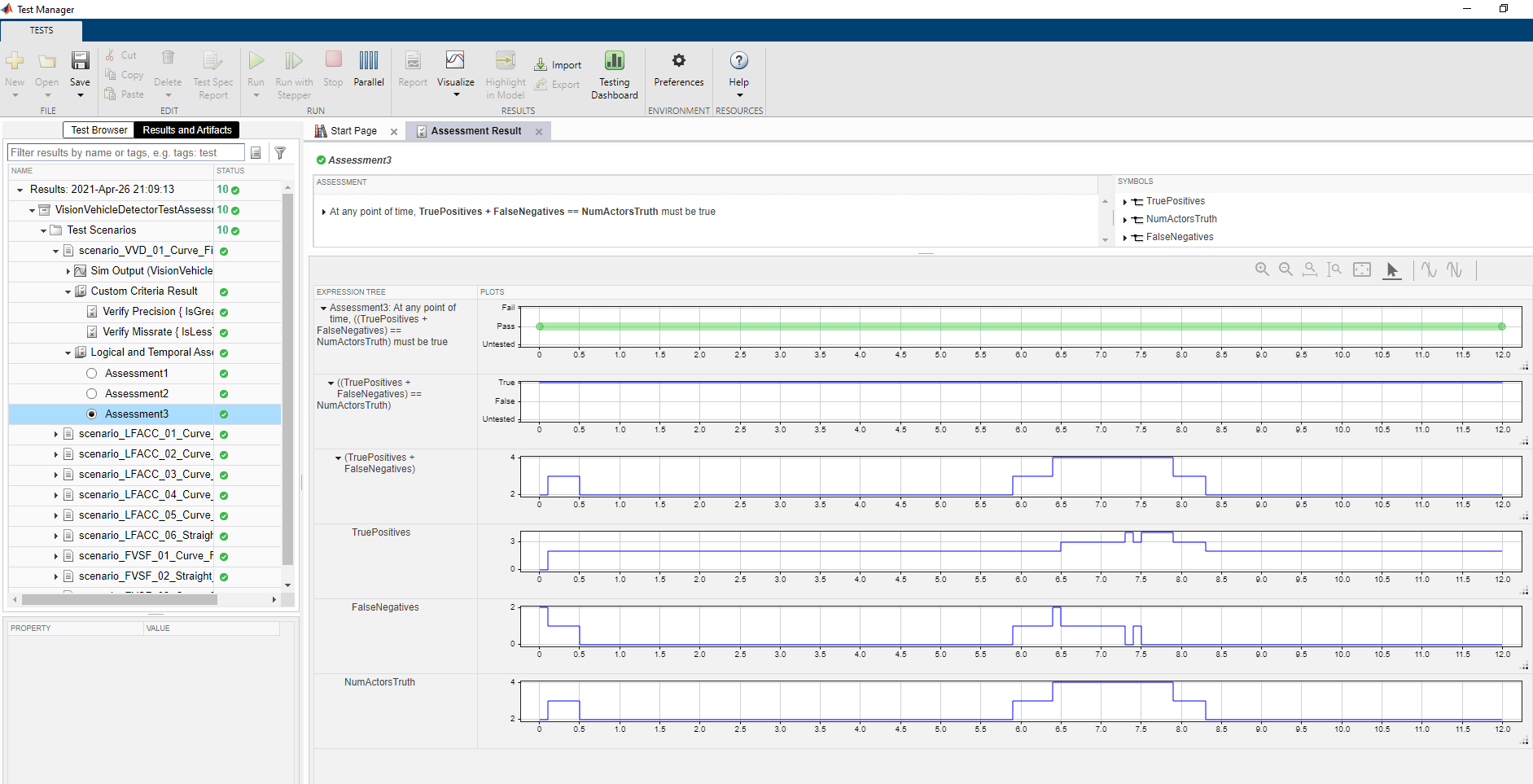

Examine Report.pdf. The Test environment section shows the platform on which the test is run and the MATLAB version used for testing. The Summary section shows the outcome of the test and the duration of the simulation in seconds. The Results section shows pass or fail results based on the logical and temporal assessment criteria. The customized criteria used to assess the algorithm for this test case are:

At any point of time, TruePositives <= NumActorsTruth

At any point of time, FalsePositives <= 1

At any point of time, (TruePositives + FalseNegatives) == NumActorsTruth
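The three conditions can be pictured as element-wise checks over the logged signals. The sketch below uses made-up sample vectors rather than simulation output, and the variable names cond1, cond2, cond3, and passed are illustrative. In the example itself, these checks are defined as logical and temporal assessments in the Test Manager, not as a script.

```matlab
% Illustrative per-time-step signals (made-up values, not simulation output).
numActorsTruth = [3 3 4 4 4];
truePositives  = [2 3 3 4 4];
falseNegatives = [1 0 1 0 0];
falsePositives = [0 1 0 0 1];

% Correct detections never exceed the number of vehicles actually present.
cond1 = all(truePositives <= numActorsTruth);

% At most one false detection at any time step.
cond2 = all(falsePositives <= 1);

% Every actual vehicle is counted as either detected or missed.
cond3 = all(truePositives + falseNegatives == numActorsTruth);

% The sample run passes only if all three conditions hold at every step.
passed = cond1 && cond2 && cond3;
disp(passed)
```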

The report also displays the plots logged from the helperPlotDetectionMetrics function.

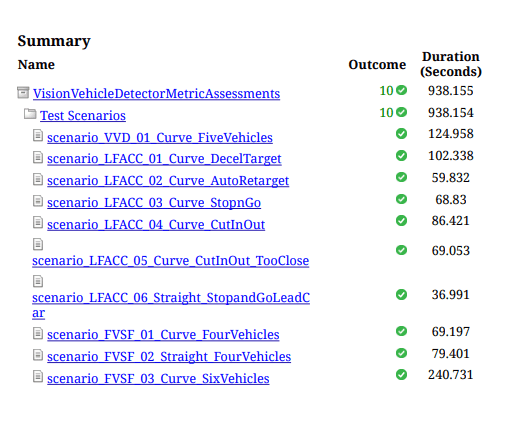

Run and Explore Results for All Test Scenarios

Run a simulation of the system for all the tests.

run(testFile)

Alternatively, you can select Play in the Test Manager app.

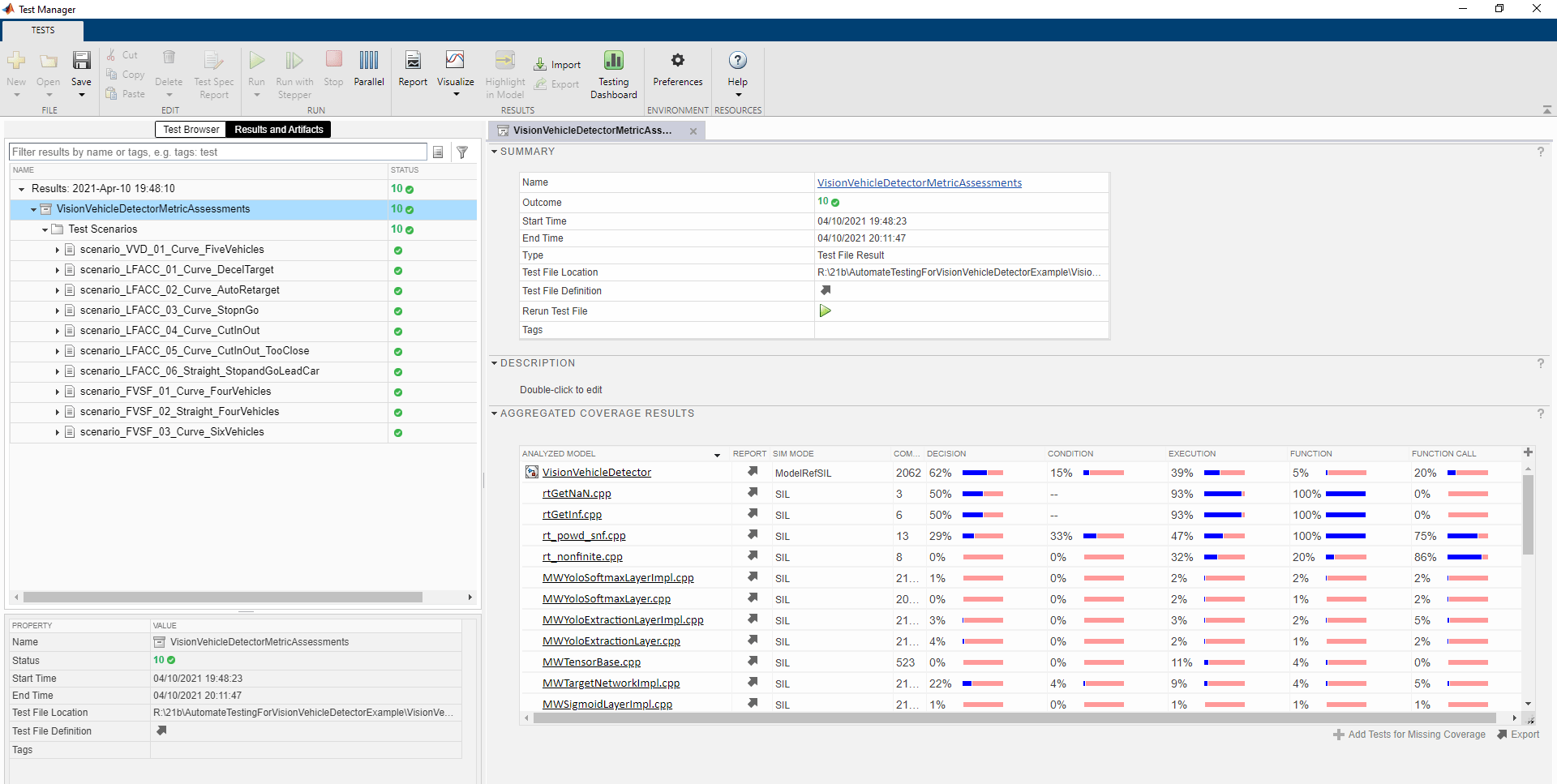

After completion of the test simulations, you can view the results for all the tests in the Results and Artifacts tab of the Test Manager. For each test case, check Custom Criteria Result and Logical And Temporal Assessments. You can visualize the overall pass or fail results.

You can find the generated report in the current working directory. This report contains a detailed summary of the pass or fail statuses and plots for each test case.

Verify Test Status in Requirements Editor

Open the Requirements Editor and select Display. Then, select Verification Status to see a verification status summary for each requirement. Green and red bars indicate the pass and fail status, respectively, for each simulation test result.

Automate Testing with Generated Code

The VisionVehicleDetectorTestBench model enables you to generate either MKL-DNN code or CUDA code from the YOLOv2 vehicle detector component and to perform regression testing of this component using SIL verification.

Configure YOLOv2 Detector to Generate MKL-DNN Code

If you have Embedded Coder™ and Simulink Coder™ licenses, you can generate MKL-DNN code for the YOLOv2 vehicle detector. Set DLTargetLibrary to "MKL-DNN".

Configure the YOLOv2 vehicle detector to generate the MKL-DNN code.

set_param("VisionVehicleDetector",TargetLang="C++")
set_param("VisionVehicleDetector",GenerateGPUCode="None")
set_param("VisionVehicleDetector",DLTargetLibrary="MKL-DNN")

Configure YOLOv2 Detector to Generate CUDA Code

If you have GPU Coder™ and Simulink Coder licenses, you can generate CUDA code for the YOLOv2 vehicle detector. To verify that the compilers and libraries necessary for running this section are set up correctly, use the coder.checkGpuInstall function. Set DLTargetLibrary to either "cuDNN" or "TensorRT", based on the availability of the relevant libraries on the target. For more details on how to verify the GPU environment, see the Generate Code for Vision Vehicle Detector example.

Configure the model to generate the CUDA code.

set_param("VisionVehicleDetector",TargetLang="C++")
set_param("VisionVehicleDetector",GenerateGPUCode="CUDA")
set_param("VisionVehicleDetector",DLTargetLibrary="cuDNN")

Save the configured system using save_system("VisionVehicleDetector").

Configure and Simulate Model in SIL Mode for All Test Scenarios

Set the detector variant name to YOLOv2 and the simulation mode of the model to SIL mode.

detectorVariantName = "YOLOv2";
model = "VisionVehicleDetectorTestBench/Vision Vehicle Detector";
set_param(model,SimulationMode="Software-in-the-loop")

Simulate the system for all the test scenarios and generate the test report by using the MATLAB command run(testFile). Review the plots and results in the generated report.

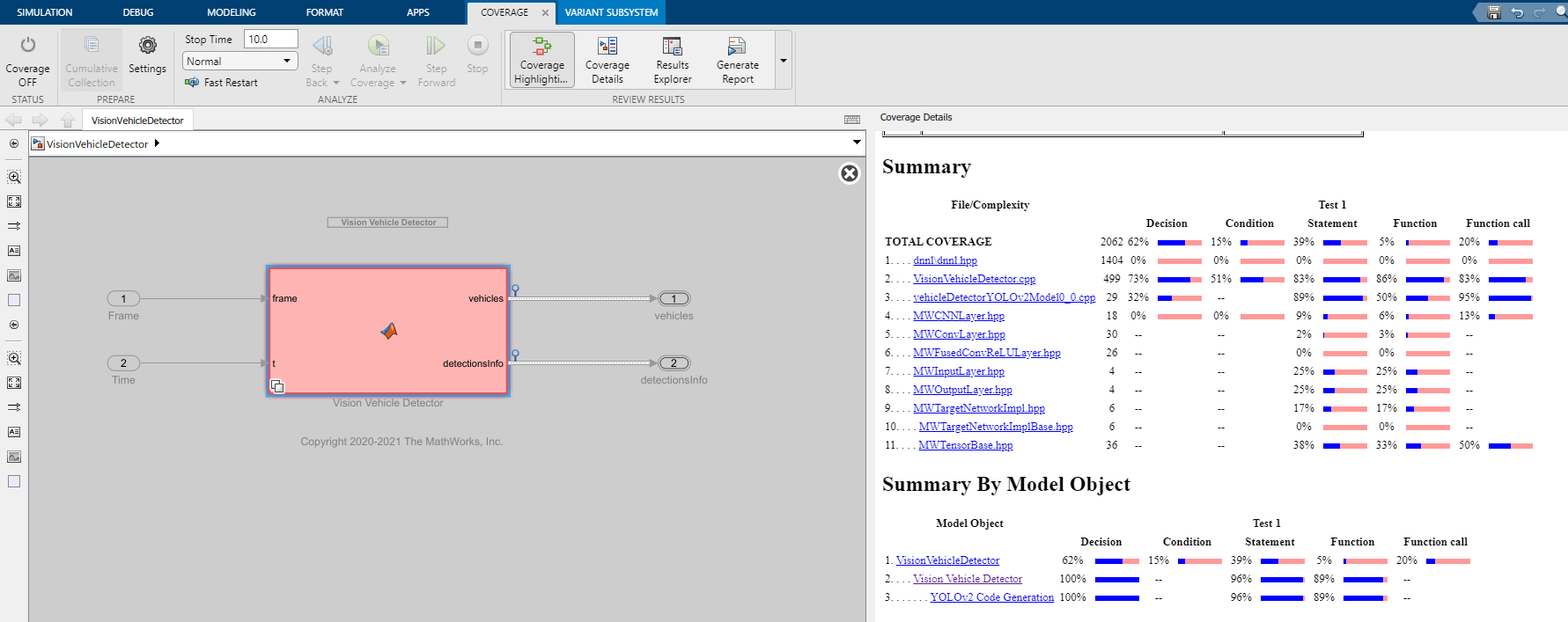

Capture Coverage Results

If you have a Simulink Coverage™ license, you can get the code coverage analysis for the generated code to measure testing completeness. You can use coverage data to find gaps in testing, missing requirements, or unintended functionality. You can visualize the coverage results for individual test cases, as well as aggregated coverage results.

Click the VisionVehicleDetector link in Test Manager to view a detailed report of the coverage results.

Automate Testing in Parallel

If you have a Parallel Computing Toolbox™ license, you can configure the test manager to execute tests in parallel using a parallel pool. To run the tests in parallel, disable the runtime visualizations and save the models using save_system("VisionVehicleDetector") and save_system("VisionVehicleDetectorTestBench"). Test Manager uses the default Parallel Computing Toolbox cluster and executes tests on only the local machine. Running tests in parallel speeds up execution and decreases the amount of time required for testing. For more information on how to configure tests in parallel using the Test Manager, see Run Tests Using Parallel Execution (Simulink Test).

See Also

Topics

- Generate Code for Vision Vehicle Detector

- Automate Testing for Lane Marker Detector

- Automate Testing for Forward Vehicle Sensor Fusion

- Automate Testing for Highway Lane Following Controller

- Automate Real-Time Testing for Highway Lane Following Controller

- Automate Real-Time Testing for Forward Vehicle Sensor Fusion

- Highway Lane Following