Lidar Labeler

Label ground truth data in lidar point clouds

Description

The Lidar Labeler app enables you to label objects in a point cloud or a point cloud sequence. The app reads point cloud data from PLY, PCAP, LAS, LAZ, ROS, PCD, and E57 files. Using the app, you can:

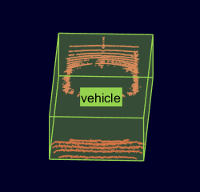

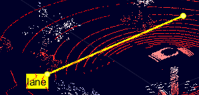

Define cuboid region of interest (ROI) labels, line labels, voxel ROI labels, and scene labels. Use them to interactively label your ground truth data.

Define attributes for the labels and use them to provide further detail about the labels.

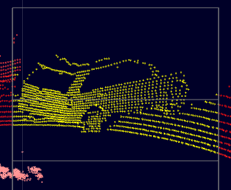

Use built-in algorithms for clustering, ground plane segmentation, automated labeling, and tracking.

Save label definitions, point cloud data, and ground truth data to a session file for future use.

Use the Projected View option to view the labels in top, front, and side views simultaneously.

Use the Camera View option to create and reuse custom views of the point cloud data.

Use the Auto Align option to rotate the cuboid and fit it to the cluster.

Use the lidar.syncImageViewer.SyncImageViewer class to sync the app to an external visualization or analysis tool.

Write, import, and use a custom automation algorithm for automated labeling.

Evaluate the performance of your label automation algorithms with a visual summary.

Export the labeled ground truth as a groundTruthLidar object. This object can be used for system verification and training an object detector.

To learn more about this app, see Get Started with the Lidar Labeler.

Open the Lidar Labeler App

MATLAB® Toolstrip: On the Apps tab, under Image Processing and Computer Vision, click the app icon.

MATLAB command prompt: Enter lidarLabeler.

Examples

Programmatic Use

Limitations

The labels do not support sublabels.

The Label Summary window does not support sublabels.

More About

Tips

Use the lidar.syncImageViewer.SyncImageViewer class to create a tool for viewing the image corresponding to the point cloud data.

Remove the ground plane to clearly view the created object labels.

Use the rotate, translate, expand, and shrink options to edit the cuboids after drawing them.

Use the Camera View option to save a view of the data from the current angle and direction.

To avoid having to relabel ground truth with new labels, organize the labeling scheme you want to use before you begin marking your ground truth.

You can copy and paste labels between signals of the same type.

Version History

Introduced in R2020b