Build Occupancy Map from 3-D Lidar Data Using SLAM

This example demonstrates how to build a 2-D occupancy map from 3-D Lidar data using a simultaneous localization and mapping (SLAM) algorithm. This occupancy map is useful for localization and path planning for vehicle navigation. You can also use this map as a prebuilt map to incorporate sensor information.

In this example, you process point clouds incrementally to estimate the trajectory of a vehicle mounted with a lidar sensor. You then use these estimates to build and visualize an occupancy map. The lidar point cloud data in this example has been collected from a scene in a simulation environment built using Unreal Engine® from Epic Games®.

In this example you learn how to:

Set up a scenario in the simulation environment with different scenes, vehicles, sensor configurations, and collect data.

Estimate the trajectory of a vehicle using the phase correlation registration technique.

Use the estimated poses to build and visualize an occupancy map.

Set Up Scenario in Simulation Environment

Load the prebuilt Large Parking Lot (Automated Driving Toolbox) scene. The Select Waypoints for Unreal Engine Simulation (Automated Driving Toolbox) example describes how to interactively select a sequence of waypoints from a scene and how to generate a reference vehicle trajectory. Use this approach to select a sequence of waypoints and generate a reference trajectory for the ego vehicle. Add additional vehicles by specifying the parking poses of the vehicles. Visualize the reference path and the parked vehicles on a 2-D bird's-eye view of the scene.

% Load reference path data = load("parkingLotReferenceData.mat"); % Set reference trajectory of the ego vehicle refPosesX = data.refPosesX; refPosesY = data.refPosesY; refPosesT = data.refPosesT; % Set poses of the parked vehicles parkedPoses = data.parkedPoses([12 1],:); % Display the reference path and the parked vehicle locations sceneName = "LargeParkingLot"; hScene = figure(Name="Large Parking Lot",NumberTitle="off"); helperShowSceneImage(sceneName); hold on plot(refPosesX(:,2),refPosesY(:,2),LineWidth=2,DisplayName="Reference Path"); scatter(parkedPoses(:,1),parkedPoses(:,2),[],"filled",DisplayName="Parked Vehicles"); xlim([-60 40]) ylim([10 60]) hScene.Position = [100, 100, 1000, 500]; % Resize figure legend hold off

Open the model and add parked vehicles using the helperAddParkedVehicle function.

modelName = 'GenerateLidarDataOfParkingLot';

open_system(modelName);

snapnow;

helperAddParkedVehicles(modelName,parkedPoses);Record and Visualize Data

Set up a simple model with a hatchback vehicle moving along the specified reference path by using the Simulation 3D Vehicle with Ground Following (Automated Driving Toolbox) block. Mount a lidar on the roof center of a vehicle using the Simulation 3D Lidar (Automated Driving Toolbox) block. Record and visualize the sensor data. The recorded data is used to develop the localization algorithm*.*

close(hScene) if ismac error(['3D Simulation is supported only on Microsoft' char(174) ' Windows' char(174) ' and Linux' char(174) '.']); end % Run simulation simOut = sim(modelName); close_system(modelName,0); % Extract lidar data ptCloudArr = helperGetPointClouds(simOut); % Extract ground truth for the lidar data as an array of rigidtform3d objects groundTruthPosesLidar = helperGetLidarGroundTruth(simOut);

Vehicle Odometry Using Phase Correlation Algorithm

In order to build a map using the collected point clouds, you must estimate the relative poses between successive point clouds. You then use these poses to estimate the trajectory of the vehicle. This approach of incrementally estimating the trajectory is called odometry.

To build a 2-D occupancy grid map, 2-D pose estimations are sufficient. Hence, convert the point clouds into 2-D lidarScan objects representative of a top down view of the point cloud. Use a phase correlation registration algorithm to calculate the 2-D relative transformation between two images. By successively composing these transformations, you transform each point cloud back to the reference frame of the first point cloud. This technique is also used in pcregistercorr [1].

In summary, these are the steps used to calculate the vehicle odometry:

Preprocess the point cloud.

Convert the point cloud to a 2-D lidar scan, then rasterize the scan to format it as an occupancy grid.

Register two occupancy grid images created from point clouds that correspond to the same scene. Use the

imregcorrfunction to register the grid images and estimate the pose.Use this estimated pose as an initial pose estimate for

matchScansto improve estimation results.Repeat steps on point clouds successively to estimate the relative poses between them.

Preprocess Point Cloud

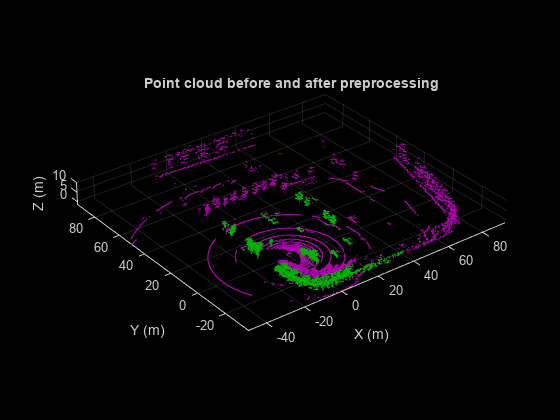

Point cloud preprocessing involves these steps:

Remove the outliers.

Clip the point cloud to concentrate on the area of interest.

Segment and remove the ego vehicle.

ptCloud = ptCloudArr(1); % Use the helper function to preprocess the point cloud ptCloudProcessed = helperPreprocessPtCloud(ptCloud); % Visualize and compare the point cloud before and after preprocessing. figure(Name="Processed Point Clouds",NumberTitle="off"); pcViewAxes = pcshowpair(ptCloud, ptCloudProcessed); title("Point cloud before and after preprocessing"); xlabel(pcViewAxes,"X (m)"); ylabel(pcViewAxes,"Y (m)"); zlabel(pcViewAxes,"Z (m)");

Create 2-D Lidar Scan

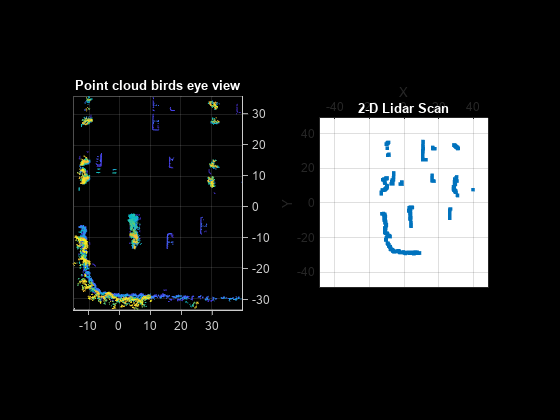

Follow the steps below to create a 2-D lidarScan object representative of a top-down view of the point cloud:

Determine the position of the sensor relative to the point cloud origin and convert it to a

rigidtform3dtransformationGenerate a 2-D representation of the top-down view of the point cloud based on the sensor position using

pc2scanVisualize a top-down view of the point cloud and compare the results to the 2-D lidar scan

% Set the occupancy grid size to 100 m with a resolution of 0.2 m gridSize = 100; gridStep = 0.2; % Set the sensor height. This information can be obtained from the lidar % point cloud sensorHeight = groundTruthPosesLidar(1).Translation(3); % Set pc2scan parameters sensorPosition = rigidtform3d([0 0 0], [0, 0, sensorHeight]); rangeLimits = [eps, inf]; angleLimits = [-180 180]; angleResolution = 0.2; elevationTolerance = 10; % Use pc2scan to generate a 2-D lidar scan representative of the same point cloud % contained in occupancyGrid scan = pc2scan(ptCloudProcessed, sensorPosition, ScanRangeLimits=rangeLimits, ... ScanAngleLimits=angleLimits, ElevationAngleTolerance=elevationTolerance, ... ScanAngleResolution=angleResolution); % Visualize top-down view of the point cloud figure; subplot(1,2,1); pcshow(ptCloudProcessed); view(2); tHandle1 = title("Point cloud birds eye view"); tHandle1.Color = [1 1 1]; % Compare with 2-D representation generated with pc2scan subplot(1,2,2); plot(scan); camroll(-90) tHandle3 = title("2-D Lidar Scan"); tHandle3.Color = [1 1 1];

Projecting the points onto the ground plane works well if the ground plane is flat. The data collected in this example has a relatively flat ground plane. If the ground plane is not flat, transform the ground plane so that it is parallel to the X-Y plane. For more information, see the Register Lidar Moving Point Cloud to Fixed Point Cloud example and the Tips section of pcregistercorr.

Register and Estimate Poses

Use a poseGraph (Navigation Toolbox) object to store the estimated poses.

% Pose graph for registration poses

occGridRegEstPoseGraph = poseGraph;

scanRegEstPoseGraph = poseGraph;

relTrans = groundTruthPosesLidar(1).T(4, 1:2);

relYaw = -atan2(groundTruthPosesLidar(1).T(2,1),groundTruthPosesLidar(1).T(1,1));1.) Rasterize the 2-D Lidar scan to convert the scan to an occupancy grid.

2.) Use imregcorr to estimate the relative transformation for the grid.

3.) Use the relative pose found from imregcorr as a pose estimate for matchScans to estimate the relative transformation for the 2-D scan.

4.) Add the poses obtained to the pose graphs using addRelativePose (Navigation Toolbox) method.

5.) Visualize the poses that are stored in the pose graphs as the algorithm progresses.

% Get ground truth pose graph gTPoseGraph = helperGetGTPoseGraph(groundTruthPosesLidar); % Obtain the nodes from the pose graphs gTNodes = gTPoseGraph.nodes(); % Plot the ground truth trajectory figure(Name="Vehicle Trajectory",NumberTitle="off"); set(gcf, Visible="on") compareTrajAxes = axes; compareTrajAxes.XLim = [-10 60]; compareTrajAxes.YLim = [-20 10]; compareTrajAxes.XGrid = "on"; compareTrajAxes.YGrid = "on"; title("Compare Trajectories") hold on; plot(gTNodes(:,1),gTNodes(:,2),Color="blue",LineWidth=3,DisplayName="Ground Truth Trajectory"); % Visualize the estimated trajectory and the location of the vehicle when % running the algorithm occGridEstimatedTraj = plot(gTNodes(1,1),gTNodes(1,2),Color="green",LineWidth=3,DisplayName="Estimated Trajectory (imregcorr)"); scanEstimatedTraj = plot(gTNodes(1,1),gTNodes(1,2),Color="magenta",LineStyle="--",LineWidth=3,DisplayName="Estimated Trajectory (matchScans)"); currentLoc = scatter(gTNodes(1,1),gTNodes(1,2),50,"red","filled",DisplayName="Vehicle Location"); legend; % Process every fourth frame. This is done to speed up the processing % without affecting much the accuracy. skipFrames = 3; numFrames = numel(groundTruthPosesLidar); scanFixed = scan; occGridFixed = helperRasterizeScan(scanFixed, gridSize, gridStep); %Preallocate relPoseHolder to store relative poses for use in future processing relPoseHolder = zeros(ceil(numFrames/skipFrames), 3); for frameIdx = 1+skipFrames:skipFrames:numFrames ptCloud = ptCloudArr(frameIdx); ptCloudProcessed = helperPreprocessPtCloud(ptCloud); scanMoving = pc2scan(ptCloudProcessed, sensorPosition, ScanRangeLimits=rangeLimits, ... ScanAngleLimits=angleLimits,ElevationAngleTolerance=elevationTolerance, ... ScanAngleResolution=angleResolution); % Use helper function to convert the lidarScan to a grid for use with % imregcorr occGridMoving = helperRasterizeScan(scanMoving, gridSize, gridStep); % Use helper function to estimate the relative pose between occGridMoving % and occGridFixed using imregcorr relPose = helperEstimateRelativePose(occGridMoving, occGridFixed, gridStep); % Add pose to occupancy grid registration estimate pose graph relTrans = relPose.Translation(1:2); relYaw = -atan2(relPose.T(2,1), relPose.T(1,1)); occGridRegEstPoseGraph.addRelativePose([relTrans relYaw]); occGridFixed = occGridMoving; % Estimate the relative pose between scanMoving and scanFixed updatedRelPose = matchScans(scanMoving, scanFixed, InitialPose=[relTrans relYaw], MaxIterations=500); % Add pose to scan registration estimate pose graph scanRegEstPoseGraph.addRelativePose(updatedRelPose); relPoseHolder(ceil(frameIdx/skipFrames), :) = updatedRelPose; scanFixed = scanMoving; % Update the estimated trajectory for the occupancy grid occGridEstimatedNode = occGridRegEstPoseGraph.nodes(occGridRegEstPoseGraph.NumNodes); occGridEstimatedTraj.XData(end+1) = occGridEstimatedNode(1); occGridEstimatedTraj.YData(end+1) = occGridEstimatedNode(2); % Update the estimated trajectory and vehicle location for the % scan scanEstimatedNode = scanRegEstPoseGraph.nodes(scanRegEstPoseGraph.NumNodes); scanEstimatedTraj.XData(end+1) = scanEstimatedNode(1); scanEstimatedTraj.YData(end+1) = scanEstimatedNode(2); set(currentLoc,XData=scanEstimatedNode(1),YData=scanEstimatedNode(2)); drawnow; end hold off;

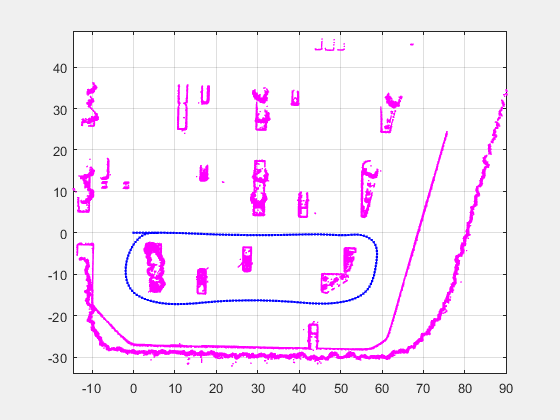

Build Lidar Scan Map

Use the lidarscanmap object to build the map using simultaneous localization and mapping (SLAM). Add successive lidar scans and their associated poses to the lidarscanmap object using its addScan object function. Use these steps to build a map from lidar scans:

Estimate relative pose of each scan by registering it with the first scan using

imregcorrormatchScansfunctions.Add each scan to the

lidarscanmapobject to build the map and store the poses.Correct drifts by detecting loop closures within the

lidarscanmapobject. You can then use these loop closures to perform optimization and correct drift. For this example, the estimated pose has minimal drift so loop closure detection is not necessary. For more information, see the Build Map from 2-D Lidar Scans Using SLAM example.Visualize the resulting lidar scan map.

% Set lidarscanmap parameters mapResolution = 10; maxLidarRange = 50; % Initialize lidarscanmap object scanMapObj = lidarscanmap(mapResolution, maxLidarRange); % Create figure to show the lidarsscanmap object figure(Name="Lidar Scan Map",NumberTitle="off"); set(gcf, Visible="on") title("Lidar Scan Map") for frameIdx = 1:skipFrames:numFrames ptCloud = ptCloudArr(frameIdx); ptCloudProcessed = helperPreprocessPtCloud(ptCloud); scanMoving = pc2scan(ptCloudProcessed, sensorPosition, ScanRangeLimits=rangeLimits, ... ScanAngleLimits=angleLimits, ElevationAngleTolerance=elevationTolerance, ... ScanAngleResolution=angleResolution); scanMoving = lidarScan(double(scanMoving.Ranges), double(scanMoving.Angles)); if frameIdx == 1 isScanAdded = addScan(scanMapObj, scanMoving); else % Add scan and associated relative pose found during registration % and estimation isScanAdded = addScan(scanMapObj, scanMoving, relPoseHolder(ceil(frameIdx/skipFrames), :)); end % Update the figure show(scanMapObj); hold on; drawnow; end hold off;

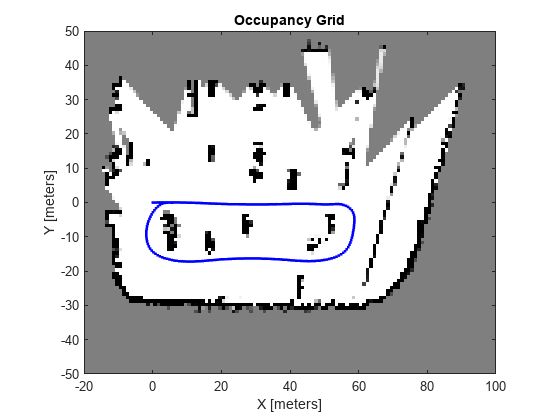

Build and Visualize Occupancy Grid Map

Use the buildMap (Navigation Toolbox) function to build the occupancy grid map. Obtain the required scans and poses using the ScanAttributes property of the lidarscanmap object.

map = buildMap(scanMapObj.ScanAttributes.LidarScan, scanMapObj.ScanAttributes.AbsolutePose, 1, 50); figure(Name="Occupancy Map", NumberTitle="off"); show(map); hold on pGraph = poseGraph(scanMapObj); show(pGraph, IDs="off"); xlim([-20 100]); ylim([-50 50]); hold off;

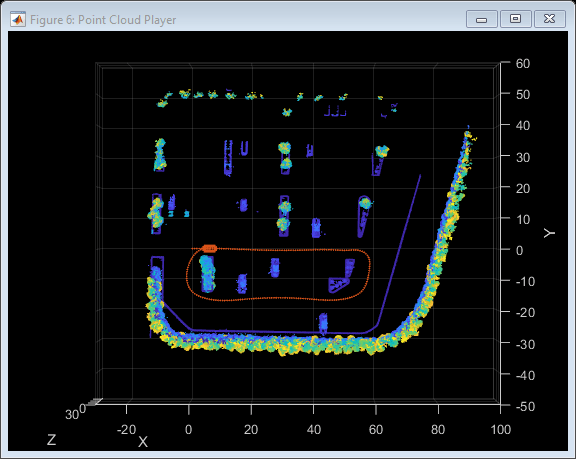

Stitch the point clouds together using the relative pose information from the pose graph to create a point cloud map.

sensorHeight = groundTruthPosesLidar(1).Translation(3); ptCloudAccum = ptCloud.empty; % Configure display xlimits = [ -30 100]; ylimits = [-50 60]; zlimits = [-3 30]; player = pcplayer(xlimits, ylimits, zlimits); % Define rigid transformation between the lidar sensor mounting position % and the vehicle reference point. lidarToVehicleTform = rigidtform3d([0 0 0],[0 0 -1.57]); % Specify vehicle dimensions centerToFront = 1.104; centerToRear = 1.343; frontOverhang = 0.828; rearOverhang = 0.589; vehicleWidth = 1.653; vehicleHeight = 1.513; vehicleLength = centerToFront + centerToRear + frontOverhang + rearOverhang; hatchbackDims = vehicleDimensions(vehicleLength,vehicleWidth,vehicleHeight, ... FrontOverhang=frontOverhang,RearOverhang=rearOverhang); vehicleDims = [hatchbackDims.Length,hatchbackDims.Width,hatchbackDims.Height]; vehicleColor = [0.85 0.325 0.098]; % Estimation trajectory handle markerSize = 3; hold(player.Axes,"on"); estTrajHandle = scatter3(player.Axes,NaN,NaN,NaN,markerSize,vehicleColor,"filled"); hold(player.Axes,"off"); for frameIdx = 1:skipFrames:numFrames % Obtain the point clouds ptCloud = ptCloudArr(frameIdx); ptCloudProcessed = helperPreprocessPtCloud(ptCloud); % Obtain the pose poseIdx = floor((frameIdx-1)/skipFrames) + 1; pose = scanMapObj.ScanAttributes.AbsolutePose(poseIdx, :); % Construct the rigidtform3d object required to concatenate point clouds. % This object holds the rigid transformation yaw = pose(3); rot = [cos(yaw) -sin(yaw) 0;... sin(yaw) cos(yaw) 0;... 0 0 1]; trans = [pose(1:2) sensorHeight]; absPose = rigidtform3d(rot,trans); % Accumulated point cloud map ptCloudAccum = pccat([ptCloudAccum,pctransform(ptCloudProcessed,absPose)]); % Update accumulated point cloud map view(player,ptCloudAccum); % Set viewing angle to top view view(player.Axes,2); % Convert current absolute pose of sensor to vehicle frame absVehiclePose = rigidtform3d( absPose.A * lidarToVehicleTform.A ); % Draw vehicle at current absolute pose helperDrawVehicle(player.Axes,absVehiclePose,vehicleDims,Color=vehicleColor); % Update the estimation trajectory handle estTrajHandle.XData(end+1) = trans(1); estTrajHandle.YData(end+1) = trans(2); estTrajHandle.ZData(end+1) = trans(3); end hold(player.Axes, "off");

References

[1] Dimitrievski, Martin, David Van Hamme, Peter Veelaert, and Wilfried Philips. “Robust Matching of Occupancy Maps for Odometry in Autonomous Vehicles.” In Proceedings of the 11th Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications, 626–33. Rome, Italy: SCITEPRESS - Science and Technology Publications, 2016.

Supporting Functions

helperShowSceneImage displays scene image.

helperAddParkedVehicles adds parked vehicles to the parking lot scene.

helperPreprocessPtCloud preprocesses the point cloud.

helperDrawVehicle draws a vehicle in the map.

helperGetSceneImage retrieves scene image and spatial reference.

helperGetPointClouds extracts an array of pointCloud objects that contain lidar sensor data.

function ptCloudArr = helperGetPointClouds(simOut) % Extract signal ptCloudData = simOut.ptCloudData.signals.values; % Create a pointCloud array ptCloudArr = pointCloud(ptCloudData(:,:,:,2)); % Ignore first frame for n = 3 : size(ptCloudData,4) ptCloudArr(end+1) = pointCloud(ptCloudData(:,:,:,n)); %#ok<AGROW> end end

helperGetLidarGroundTruth Extract ground truth location and orientation.

function gTruth = helperGetLidarGroundTruth(simOut) numFrames = size(simOut.lidarLocation.time,1); gTruth = repmat(rigidtform3d, numFrames-1, 1); for i = 2:numFrames gTruth(i-1).Translation = squeeze(simOut.lidarLocation.signals.values(:, :, i)); % Ignore the roll and the pitch rotations since the ground is flat yaw = double(simOut.lidarOrientation.signals.values(:, 3, i)); gTruth(i-1).R = [cos(yaw), -sin(yaw), 0; ... sin(yaw), cos(yaw), 0; ... 0, 0, 1]; end end

helperGetGTPoseGraph Obtain pose graph from Lidar ground truth

function gTPoseGraph = helperGetGTPoseGraph(gTruthLidar) gTPoseGraph = poseGraph; gTPoseGraph.addRelativePose([0 0 0]); for idx = 3:numel(gTruthLidar) relTform = gTruthLidar(idx).T * inv(gTruthLidar(idx-1).T); relTrans = relTform(4, 1:2); relYaw = -atan2(relTform(2,1),relTform(1,1)); gTPoseGraph.addRelativePose([relTrans relYaw]); end end

helperEstimateRelativePose performs registration using imregcorr to estimate the relative pose between two occupancy grids

function relPose = helperEstimateRelativePose(occGridMoving, occGridFixed, gridStep) %imregcorr reports the transformation from the origin. Hence provide a % referencing such that the sensor is at the center of the images Rgrid = imref2d(size(occGridMoving)); offsetX = mean(Rgrid.XWorldLimits); Rgrid.XWorldLimits = Rgrid.XWorldLimits - offsetX; offsetY = mean(Rgrid.YWorldLimits); Rgrid.YWorldLimits = Rgrid.YWorldLimits - offsetY; % Find relative pose in image coordinates relPoseImage = imregcorr(occGridMoving,Rgrid,occGridFixed,Rgrid); % Convert translation to grid coordinates transInGrid = relPoseImage.T(3,1:2) .* gridStep; % The tranformation is a rigid transformation. Since relative pose is % an affine2d object, convert to rigid2d object rotations = relPoseImage.T(1:2,1:2); [u,~,v] = svd(rotations); relPose = rigid2d(u*v', transInGrid); end

helperRasterizeScan creates a grid object from a lidarScan for use with imregcorr

function grid = helperRasterizeScan(scan, gridSize, gridStep) %rasterizeScan convert 2D lidar scan into grid. Grid cell % values are true when at least one scan point lies within the % cell boundaries. xyPoints = scan.Cartesian; gridCount = zeros(gridSize/gridStep); % Calculate x-y indices of the points in scan xIndices = discretize(xyPoints(:,2), -(gridSize/2):gridStep:(gridSize/2)); yIndices = discretize(xyPoints(:,1), -(gridSize/2):gridStep:(gridSize/2)); numPoints = scan.Count; for i = 1:numPoints xIdx = xIndices(i); yIdx = yIndices(i); if ~isnan(xIdx) && ~isnan(yIdx) gridCount(xIdx, yIdx) = gridCount(xIdx, yIdx) + 1; end end grid = gridCount>0; end