Feature Selection and Feature Transformation Using Regression Learner App

Investigate Features in the Response Plot

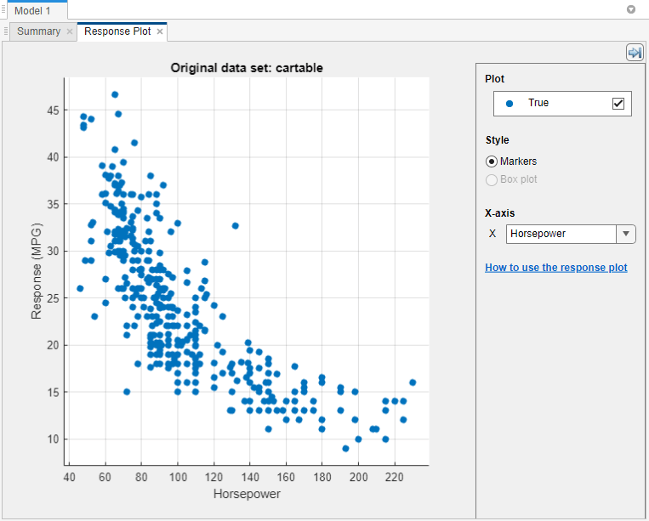

In Regression Learner, use the response plot to try to identify predictors that are useful for predicting the response. To visualize the relation between different predictors and the response, under X-axis, select different variables in the X list.

Before you train a regression model, the response plot shows the training data. If you have trained a regression model, then the response plot also shows the model predictions.

Observe which variables are associated most clearly with the response. When you

plot the carbig data set, the predictor

Horsepower shows a clear negative association with the

response.

Look for features that do not seem to have any association with the response and use Feature Selection to remove those features from the set of used predictors. See Select Features to Include.

You can export the response plots you create in the app to figures. See Export Plots in Regression Learner App.

Select Features to Include

In Regression Learner, you can specify different features (or predictors) to include in the model. See if you can improve models by removing features with low predictive power. If data collection is expensive or difficult, you might prefer a model that performs satisfactorily with fewer predictors.

You can determine which important predictors to include by using different feature

ranking algorithms. After you select a feature ranking algorithm, the app displays a

plot of the sorted feature importance scores, where larger scores (including

Infs) indicate greater feature importance. The app also

displays the ranked features and their scores in a table.

To use feature ranking algorithms in Regression Learner, click Feature Selection in the Options section of the Learn tab. The app opens a Default Feature Selection tab, where you can choose a feature ranking algorithm.

| Feature Ranking Algorithm | Supported Data Types | Description |

|---|---|---|

| MRMR | Categorical and continuous features | Rank features sequentially using the Minimum Redundancy Maximum Relevance (MRMR) Algorithm. For more information, see |

| F Test | Categorical and continuous features | Examine the importance of each predictor individually using an F-test, and then rank features using the p-values of the F-test statistics. Each F-test tests the hypothesis that the response values grouped by predictor variable values are drawn from populations with the same mean against the alternative hypothesis that the population means are not all the same. Scores correspond to –log(p). For more information, see

|

| RReliefF | Either all categorical features or all continuous features. ReliefF is not supported when either of the following is true:

| Rank features using the RReliefF algorithm with 10 nearest neighbors. This algorithm works best for estimating feature importance for distance-based supervised models that use pairwise distances between observations to predict the response. For more

information, see |

Note

When you select ReliefF, the app calculates feature

importance scores using predictor z-score values (see

normalize) instead of the actual predictor values.

Choose between selecting the highest ranked features and selecting individual features.

Choose Select highest ranked features to avoid bias in validation metrics. For example, if you use a cross-validation scheme, then for each training fold, the app performs feature selection before training a model. Different folds can select different predictors as the highest ranked features.

Choose Select individual features to include specific features in model training. If you use a cross-validation scheme, then the app uses the same features across all training folds.

When you are done selecting features, click Save and Apply. Your selections affect all draft models in the Models pane and will be applied to new draft models that you create using the gallery in the Models section of the Learn tab.

To select features for a single draft model, open and edit the model summary. Click the model in the Models pane, and then click the model Summary tab (if necessary). The Summary tab includes an editable Feature Selection section.

After you train a model, the Feature Selection section of the model Summary tab lists the features used to train the model. To learn more about how Regression Learner applies feature selection to your data, generate code for your trained regression model. For more information, see Generate MATLAB Code to Train Model with New Data.

You can export the feature ranking plots you create in the app to figures. See Export Plots in Regression Learner App.

For an example using feature selection, see Train Regression Trees Using Regression Learner App.

Transform Features with PCA in Regression Learner

Use principal component analysis (PCA) to reduce the dimensionality of the predictor space. Reducing the dimensionality can create regression models in Regression Learner that help prevent overfitting. PCA linearly transforms predictors to remove redundant dimensions, and generates a new set of variables called principal components.

On the Learn tab, in the Options section, select PCA.

In the Default PCA Options dialog box, select the Enable PCA check box, and then click Save and Apply.

The app applies the changes to all existing draft models in the Models pane and to new draft models that you create using the gallery in the Models section of the Learn tab.

When you next train a model using the Train All button, the

pcafunction transforms your selected features before training the model.By default, PCA keeps only the components that explain 95% of the variance. In the Default PCA Options dialog box, you can change the percentage of variance to explain by selecting the Explained variance value. A higher value risks overfitting, while a lower value risks removing useful dimensions.

If you want to limit the number of PCA components manually, select

Specify number of componentsin the Component reduction criterion list. Select the Number of numeric components value. The number of components cannot be larger than the number of numeric predictors. PCA is not applied to categorical predictors.

You can check PCA options for trained models in the PCA section of the Summary tab. Click a trained model in the Models pane, and then click the model Summary tab (if necessary). For example:

PCA is keeping enough components to explain 95% variance. After training, 2 components were kept. Explained variance per component (in order): 92.5%, 5.3%, 1.7%, 0.5%

To learn more about how Regression Learner applies PCA to your data, generate code

for your trained regression model. For more information on PCA, see the pca function.