fsulaplacian

Rank features for unsupervised learning using Laplacian scores

Description

idx = fsulaplacian(X) ranks the features (variables) in X using the Laplacian scores. The function returns idx, which contains the indices of features ordered by feature importance. You can use idx to select important features for unsupervised learning.

idx = fsulaplacian(X,Name,Value) specifies additional options using one or more name-value arguments. For example, you can specify 'NumNeighbors',10 to create a similarity graph using 10 nearest neighbors.

[idx,scores] = fsulaplacian(___) also returns the feature scores in scores, using any of the input argument combinations in the previous syntaxes. A large score value indicates that the corresponding feature is important.

Examples

Load the sample data.

load ionosphere

Rank the features based on importance.

[idx,scores] = fsulaplacian(X);

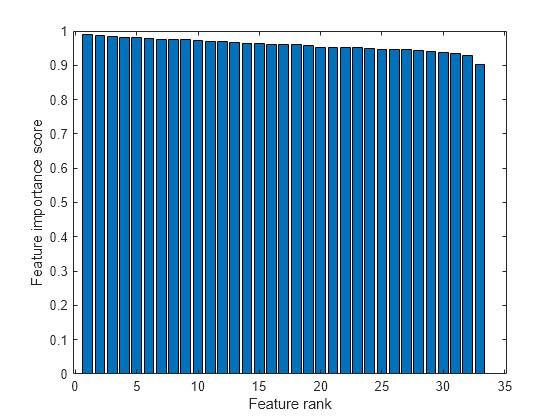

Create a bar plot of the feature importance scores.

bar(scores(idx))
xlabel('Feature rank')
ylabel('Feature importance score')

Select the top five most important features. Find the columns of these features in X.

idx(1:5)

ans = 1×5

15 13 17 21 19

The 15th column of X is the most important feature.
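
As a minimal sketch of how idx might be used downstream, the following keeps only the five top-ranked columns of X. The variable names topFeatures and XTop are illustrative assumptions and are not part of the original example.

```matlab
load ionosphere                     % provides the 351-by-34 predictor matrix X
[idx,scores] = fsulaplacian(X);     % rank all features

topFeatures = idx(1:5);             % column indices of the five top-ranked features
XTop = X(:,topFeatures);            % reduced data set containing only those columns
size(XTop)                          % number of observations by 5
```

The reduced matrix XTop can then be passed to an unsupervised method, such as clustering, in place of X.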

Compute a similarity matrix from Fisher's iris data set and rank the features using the similarity matrix.

Load Fisher's iris data set.

load fisheriris

Find the distance between each pair of observations in meas by using the pdist and squareform functions with the default Euclidean distance metric.

D = pdist(meas); Z = squareform(D);

Construct the similarity matrix and confirm that it is symmetric.

S = exp(-Z.^2); issymmetric(S)

ans = logical

1

Rank the features.

idx = fsulaplacian(meas,'Similarity',S)

idx = 1×4

3 4 1 2

Ranking using the similarity matrix S is the same as ranking by specifying 'NumNeighbors' as size(meas,1).

idx2 = fsulaplacian(meas,'NumNeighbors',size(meas,1))

idx2 = 1×4

3 4 1 2

Input Arguments

Input data, specified as an n-by-p numeric matrix. The rows of X correspond to observations (or points), and the columns correspond to features.

The software treats NaNs in X as missing data and ignores any row of X containing at least one NaN.

Data Types: single | double
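
The row-dropping behavior described above can be reproduced by hand. The following sketch removes every row that contains a NaN before ranking; under the documented behavior, the two rankings are expected to agree. The injected NaN and all variable names are assumptions made for illustration.

```matlab
load fisheriris
XMissing = meas;
XMissing(3,2) = NaN;                      % inject one missing value (illustrative)

hasNaN = any(isnan(XMissing),2);          % rows with at least one NaN
XComplete = XMissing(~hasNaN,:);          % rows that remain after filtering

idxMissing  = fsulaplacian(XMissing);     % row 3 is ignored by the function
idxComplete = fsulaplacian(XComplete);    % same rows, filtered explicitly
```

Filtering explicitly is useful when you need to know which observations contributed to the ranking.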

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Before R2021a, use commas to separate each name and value, and enclose Name in quotes.

Example: 'NumNeighbors',10,'KernelScale','auto' specifies the number of nearest neighbors as 10 and the kernel scale factor as 'auto'.
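
The two calling conventions described above can be compared side by side. This sketch assumes R2021a or later for the Name=Value form; the choice of the fisheriris data and the 'cityblock' metric is illustrative only.

```matlab
load fisheriris

% R2021a and later: Name=Value syntax
idxNew = fsulaplacian(meas,NumNeighbors=10,Distance="cityblock");

% Any release since R2019b: quoted names separated by commas
idxOld = fsulaplacian(meas,'NumNeighbors',10,'Distance','cityblock');

isequal(idxNew,idxOld)   % both forms pass the same options
```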

Similarity matrix, specified as the comma-separated pair consisting of 'Similarity' and an n-by-n symmetric matrix, where n is the number of observations. The similarity matrix (or adjacency matrix) represents the input data by modeling local neighborhood relationships among the data points. The values in a similarity matrix represent the edges (or connections) between nodes (data points) that are connected in a similarity graph. For more information, see Similarity Matrix.

If you specify the 'Similarity' value, then you cannot specify any other name-value pair argument. If you do not specify the 'Similarity' value, then the software computes a similarity matrix using the options specified by the other name-value pair arguments.

Data Types: single | double

Distance metric, specified as the comma-separated pair consisting of 'Distance' and a character vector, string scalar, or function handle, as described in this table.

| Value | Description |
|---|---|
| 'euclidean' | Euclidean distance (default) |
| 'seuclidean' | Standardized Euclidean distance. Each coordinate difference between observations is scaled by dividing by the corresponding element of the standard deviation computed from X. Use the 'Scale' name-value argument to specify a different scaling factor. |
| 'mahalanobis' | Mahalanobis distance using the sample covariance of X. Use the 'Cov' name-value argument to specify a different covariance matrix. |
| 'cityblock' | City block distance |
| 'minkowski' | Minkowski distance. The default exponent is 2. Use the 'P' name-value argument to specify a different exponent. |
| 'chebychev' | Chebychev distance (maximum coordinate difference) |
| 'cosine' | One minus the cosine of the included angle between observations (treated as vectors) |
| 'correlation' | One minus the sample correlation between observations (treated as sequences of values) |
| 'hamming' | Hamming distance, which is the percentage of coordinates that differ |
| 'jaccard' | One minus the Jaccard coefficient, which is the percentage of nonzero coordinates that differ |
| 'spearman' | One minus the sample Spearman's rank correlation between observations (treated as sequences of values) |
| @distfun | Custom distance function handle. A distance function has the form function D2 = distfun(ZI,ZJ) % calculation of distance ... If your data is not sparse, you can generally compute distance more quickly by using a built-in distance instead of a function handle. |

For more information, see Distance Metrics.

When you use the 'seuclidean', 'minkowski', or 'mahalanobis' distance metric, you can specify the additional name-value pair argument 'Scale', 'P', or 'Cov', respectively, to control the distance metrics.

Example: 'Distance','minkowski','P',3 specifies to use the Minkowski distance metric with an exponent of 3.
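
A custom distance handle can be sketched as follows. The input and output shapes used here (ZI as a single 1-by-p observation, ZJ as an m-by-p matrix, and an m-by-1 result) follow the general convention for custom distance functions in Statistics and Machine Learning Toolbox and are assumed to apply to fsulaplacian as well. The handle reimplements city block distance purely for illustration.

```matlab
load fisheriris

% Hypothetical custom distance: city block (L1) distance written by hand.
% ZI is one observation (1-by-p); ZJ holds m observations (m-by-p).
% The result is an m-by-1 column of distances from ZI to each row of ZJ.
distfun = @(ZI,ZJ) sum(abs(ZJ - ZI),2);

idxCustom  = fsulaplacian(meas,'Distance',distfun);
idxBuiltin = fsulaplacian(meas,'Distance','cityblock');   % built-in equivalent
```

As the table notes, the built-in metric is generally faster for data that is not sparse, so a handle is mainly worthwhile for distances that have no built-in counterpart.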

Exponent for the Minkowski distance metric, specified as a positive scalar.

This argument is valid only if Distance is "minkowski".

Example: P=3

Data Types: single | double

Covariance matrix for the Mahalanobis distance metric, specified as the comma-separated pair consisting of 'Cov' and a positive definite matrix.

This argument is valid only if 'Distance' is 'mahalanobis'.

Example: 'Cov',eye(4)

Data Types: single | double

Scaling factors for the standardized Euclidean distance metric, specified as the comma-separated pair consisting of 'Scale' and a numeric vector of nonnegative values.

Scale has length p (the number of columns in X), because each dimension (column) of X has a corresponding value in Scale. For each dimension of X, fsulaplacian uses the corresponding value in Scale to standardize the difference between observations.

This argument is valid only if 'Distance' is 'seuclidean'.

Data Types: single | double
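
As a hedged illustration of supplying your own scaling factors, the following sketch standardizes each column by its interquartile range instead of its standard deviation. The choice of iqr and the variable names are assumptions made for the example.

```matlab
load fisheriris

scaleValues = iqr(meas);   % one nonnegative value per column of meas (1-by-4)

idxScaled = fsulaplacian(meas,'Distance','seuclidean','Scale',scaleValues);
```

Any vector of p nonnegative values can be used in the same way, as long as its length matches the number of columns in the input data.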

Number of nearest neighbors used to construct the similarity graph, specified as the comma-separated pair consisting of 'NumNeighbors' and a positive integer.

Example: 'NumNeighbors',10

Data Types: single | double

Scale factor for the kernel, specified as the comma-separated pair consisting of 'KernelScale' and 'auto' or a positive scalar. The software uses the scale factor to transform distances to similarity measures. For more information, see Similarity Graph.

The 'auto' option is supported only for the 'euclidean' and 'seuclidean' distance metrics.

If you specify 'auto', then the software selects an appropriate scale factor using a heuristic procedure. This heuristic procedure uses subsampling, so estimates can vary from one call to another. To reproduce results, set a random number seed using rng before calling fsulaplacian.

Example: 'KernelScale','auto'
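
The reproducibility advice above can be sketched as follows. The seed value 1 is an arbitrary assumption; any fixed seed serves the same purpose.

```matlab
load fisheriris

rng(1)                                          % fix the seed before the heuristic runs
idxA = fsulaplacian(meas,'KernelScale','auto');

rng(1)                                          % reset to the same seed
idxB = fsulaplacian(meas,'KernelScale','auto');

isequal(idxA,idxB)                              % same seed, same subsample, same ranking
```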

Output Arguments

Indices of the features in X ordered by feature importance, returned as a numeric vector. For example, if idx(3) is 5, then the third most important feature is the fifth column in X.

Feature scores, returned as a numeric vector. A large score value in scores indicates that the corresponding feature is important. The values in scores have the same order as the features in X.

More About

A similarity graph models the local neighborhood relationships between data points in X as an undirected graph. The nodes in the graph represent data points, and the edges, which are directionless, represent the connections between the data points.

If the pairwise distance $Dist_{i,j}$ between any two nodes i and j is positive (or larger than a certain threshold), then the similarity graph connects the two nodes using an edge [2]. The edge between the two nodes is weighted by the pairwise similarity $S_{i,j}$, where $S_{i,j} = \exp\left(-\left(\frac{Dist_{i,j}}{\sigma}\right)^2\right)$, for a specified kernel scale σ value.

fsulaplacian constructs a similarity graph using the nearest neighbor method. The function connects points in X that are nearest neighbors. Use 'NumNeighbors' to specify the number of nearest neighbors.

A similarity matrix is a matrix representation of a similarity graph. The n-by-n matrix contains pairwise similarity values between connected nodes in the similarity graph. The similarity matrix of a graph is also called an adjacency matrix.

The similarity matrix is symmetric because the edges of the similarity graph are directionless. A value of $S_{i,j} = 0$ means that nodes i and j of the similarity graph are not connected.

A degree matrix $D_g$ is an n-by-n diagonal matrix obtained by summing the rows of the similarity matrix S. That is, the ith diagonal element of $D_g$ is $D_g(i,i) = \sum_{j=1}^{n} S_{i,j}$.

A Laplacian matrix, which is one way of representing a similarity graph, is defined as the difference between the degree matrix $D_g$ and the similarity matrix S: $L = D_g - S$.
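
The three matrices defined above can be built directly. This sketch uses a fully connected graph over Fisher's iris data with an assumed kernel scale of 1, as in the similarity matrix example, rather than the nearest neighbor graph that fsulaplacian constructs by default.

```matlab
load fisheriris

sigma = 1;                            % kernel scale (assumed value for illustration)
Dist = squareform(pdist(meas));       % n-by-n pairwise Euclidean distances
S  = exp(-(Dist./sigma).^2);          % similarity matrix
Dg = diag(sum(S,2));                  % degree matrix: row sums of S on the diagonal
L  = Dg - S;                          % Laplacian matrix

issymmetric(L)                        % L inherits symmetry from S
max(abs(sum(L,2)))                    % each row of L sums to zero up to round-off
```

The zero row sums follow from the definition: each diagonal entry of the degree matrix equals the sum of the corresponding row of S.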

Algorithms

The fsulaplacian function ranks features using Laplacian scores [1] obtained from a nearest neighbor similarity graph.

fsulaplacian computes the values in scores as follows:

1. For each data point in X, define a local neighborhood using the nearest neighbor method, and find the pairwise distances $Dist_{i,j}$ for all points i and j in the neighborhood.

2. Convert the distances to the similarity matrix S using the kernel transformation $S_{i,j} = \exp\left(-\left(\frac{Dist_{i,j}}{\sigma}\right)^2\right)$, where σ is the scale factor for the kernel as specified by the 'KernelScale' name-value pair argument.

3. Center each feature by removing its mean.

   $$\tilde{x}_r = x_r - \frac{x_r^T D_g \mathbf{1}}{\mathbf{1}^T D_g \mathbf{1}} \mathbf{1},$$

   where $x_r$ is the rth feature, $D_g$ is the degree matrix, and $\mathbf{1} = [1,\dots,1]^T$.

4. Compute the score $s_r$ for each feature.

   $$s_r = \frac{\tilde{x}_r^T S \tilde{x}_r}{\tilde{x}_r^T D_g \tilde{x}_r}.$$

Note that [1] defines the Laplacian score as

$$L_r = \frac{\tilde{x}_r^T L \tilde{x}_r}{\tilde{x}_r^T D_g \tilde{x}_r} = 1 - \frac{\tilde{x}_r^T S \tilde{x}_r}{\tilde{x}_r^T D_g \tilde{x}_r},$$

where L is the Laplacian matrix, defined as the difference between $D_g$ and S. The fsulaplacian function uses only the second term of this equation for the score value of scores so that a large score value indicates an important feature.

Selecting features using the Laplacian score is consistent with minimizing the value

$$\frac{\sum_{i,j} (x_{ir} - x_{jr})^2 S_{i,j}}{\mathrm{Var}(x_r)},$$

where $x_{ir}$ represents the ith observation of the rth feature. Minimizing this value implies that the algorithm prefers features with large variance. Also, the algorithm assumes that two data points of an important feature are close if and only if the similarity graph has an edge between the two data points.
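
The centering and scoring steps can be sketched by hand for a given similarity matrix. This is an illustration of the formulas in [1] under the assumption of a fully connected graph with kernel scale 1; it is not the function's internal implementation, and the variable names are hypothetical.

```matlab
load fisheriris
X = meas;

S = exp(-squareform(pdist(X)).^2);   % similarity matrix (kernel scale 1, all pairs)
d = sum(S,2);                        % diagonal of the degree matrix Dg (n-by-1)

% Center each feature with the degree-weighted mean: (x_r' * Dg * 1)/(1' * Dg * 1)
Xc = X - (d'*X)./sum(d);

% Score per feature: (xc' * S * xc) / (xc' * Dg * xc), computed column by column
num = sum(Xc .* (S*Xc),1);
den = sum(Xc .* (d.*Xc),1);
s = num ./ den;

[~,idxManual] = sort(s,'descend');   % larger score means more important feature

% For comparison, rank with the same similarity matrix
idxFunction = fsulaplacian(X,'Similarity',S);
```

Because the Laplacian matrix is positive semidefinite, the Laplacian score in [1] is nonnegative, so each value in s is at most 1.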

References

[1] He, X., D. Cai, and P. Niyogi. "Laplacian Score for Feature Selection." NIPS Proceedings. 2005.

[2] Von Luxburg, U. "A Tutorial on Spectral Clustering." Statistics and Computing Journal. Vol. 17, Number 4, 2007, pp. 395–416.

Version History

Introduced in R2019b
