Classify Images on FPGA Using Quantized Neural Network

This example shows how to use Deep Learning HDL Toolbox™ to deploy a quantized deep convolutional neural network (CNN) to an FPGA. In the example you use the pretrained ResNet-18 CNN to perform transfer learning and quantization. You then deploy the quantized network and use MATLAB® to retrieve the prediction results.

ResNet-18 has been trained on over a million images and can classify images into 1000 object categories, such as keyboard, coffee mug, pencil, and many animals. The network has learned rich feature representations for a wide range of images. The network takes an image as input and outputs a label for the object in the image together with the probabilities for each of the object categories.

To perform classification on a new set of images, you fine-tune a pretrained ResNet-18 CNN by transfer learning. In transfer learning, you can take a pretrained network and use it as a starting point to learn a new task. Fine-tuning a network with transfer learning is usually much faster and easier than training a network with randomly initialized weights. You can quickly transfer learned features to a new task using a smaller number of training images.

Load Pretrained Network

Load the pretrained ResNet-18 network.

net = imagePretrainedNetwork("resnet18");View the layers of the pretrained network.

deepNetworkDesigner(net);

The first layer, the image input layer, requires input images of size 227-by-227-by-3, where three is the number of color channels.

inputSize = net.Layers(1).InputSize;

Load Data

This example uses the MathWorks® MerchData data set. This is a small data set containing 75 images of MathWorks merchandise, belonging to five different classes (cap, cube, playing cards, screwdriver, and torch).

curDir = pwd; unzip('MerchData.zip'); imds = imageDatastore('MerchData', ... 'IncludeSubfolders',true, ... 'LabelSource','foldernames');

Specify Training and Validation Sets

Divide the data into training and validation data sets, so that 30% percent of the images go to the training data set and 70% of the images to the validation data set. splitEachLabel splits the datastore imds into two new datastores, imdsTrain and imdsValidation.

[imdsTrain,imdsValidation] = splitEachLabel(imds,0.7,'randomized');Replace Final layers

To retrain ResNet-18 to classify new images, replace the last fully connected layer of the network. In ResNet-18 , this layer has the name 'fc1000'. The fully connected layer of the pretrained network net is configured for 1000 classes. This layer, fc1000 in ResNet-18, contains information on how to combine the features that the network extracts into class probabilities. The layer must be fine-tuned for the new classification problem. Extract all the layers, except the last layer, from the pretrained network.

numClasses = numel(categories(imdsTrain.Labels))

numClasses = 5

newLearnableLayer = fullyConnectedLayer(numClasses, ... 'Name','new_fc', ... 'WeightLearnRateFactor',10, ... 'BiasLearnRateFactor',10); net = replaceLayer(net,'fc1000',newLearnableLayer);

Prepare Data for Training

The network requires input images of size 224-by-224-by-3, but the images in the image datastores have different sizes. Use an augmented image datastore to automatically resize the training images. Specify additional augmentation operations to perform on the training images, such as randomly flipping the training images along the vertical axis and randomly translating them up to 30 pixels horizontally and vertically. Data augmentation helps prevent the network from overfitting and memorizing the exact details of the training images.

pixelRange = [-30 30]; imageAugmenter = imageDataAugmenter( ... 'RandXReflection',true, ... 'RandXTranslation',pixelRange, ... 'RandYTranslation',pixelRange);

To automatically resize the validation images without performing further data augmentation, use an augmented image datastore without specifying any additional preprocessing operations.

augimdsTrain = augmentedImageDatastore(inputSize(1:2),imdsTrain, ... 'DataAugmentation',imageAugmenter); augimdsValidation = augmentedImageDatastore(inputSize(1:2),imdsValidation);

Specify Training Options

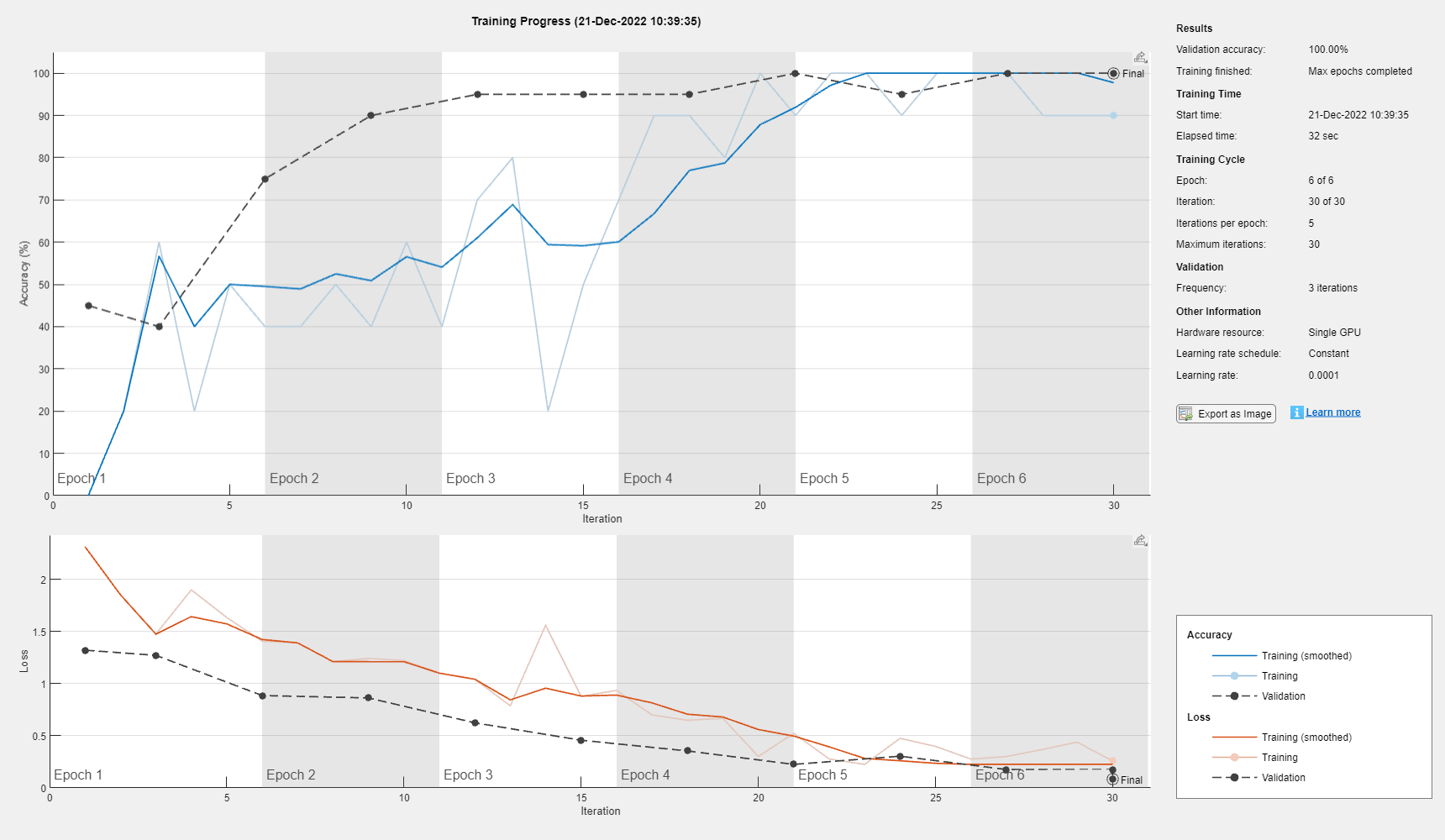

Specify the training options. For transfer learning, keep the features from the early layers of the pretrained network (the transferred layer weights). To slow down learning in the transferred layers, set the initial learning rate to a small value. Specify the mini-batch size and validation data. The software validates the network every ValidationFrequency iterations during training.

options = trainingOptions('sgdm', ... 'MiniBatchSize',10, ... 'MaxEpochs',6, ... 'InitialLearnRate',1e-4, ... 'Shuffle','every-epoch', ... 'ValidationData',augimdsValidation, ... 'ValidationFrequency',3, ... 'Verbose',false, ... 'Plots','training-progress');

Train Network

Train the network that consists of the transferred and new layers. By default, trainnet uses a GPU if one is available. Using this function on a GPU requires Parallel Computing Toolbox™ and a supported GPU device. For more information, see GPU Computing Requirements (Parallel Computing Toolbox). If a GPU is not available, the network uses a CPU (requires MATLAB Coder™ Interface for Deep Learning). You can also specify the execution environment by using the ExecutionEnvironment name-value argument of trainingOptions.

netTransfer = trainnet(augimdsTrain, net, 'crossentropy', options)

netTransfer =

dlnetwork with properties:

Layers: [70×1 nnet.cnn.layer.Layer]

Connections: [77×2 table]

Learnables: [82×3 table]

State: [40×3 table]

InputNames: {'data'}

OutputNames: {'prob'}

Initialized: 1

View summary with summary.

Quantize Network

Quantize the network using the dlquantizer object. Set the target execution environment to FPGA.

dlquantObj = dlquantizer(netTransfer,'ExecutionEnvironment','FPGA');

Calibrate Quantized Network

Use the calibrate function to exercise the network with sample inputs and collect the range information. The calibrate function collects the dynamic ranges of the weights and biases in the convolution and fully connected layers of the network and the dynamic ranges of the activations in all layers of the network. The function returns the information as a table, in which each row contains range information for a learnable parameter of the quantized network.

calibrate(dlquantObj,augimdsTrain)

ans=94×5 table

'conv1_Weights' 'conv1' "Weights" -0.6453 0.8982

'conv1_Bias' 'conv1' "Bias" -0.6403 0.6878

'res2a_branch2a_Weights' 'res2a_branch2a' "Weights" -0.3902 0.3393

'res2a_branch2a_Bias' 'res2a_branch2a' "Bias" -0.7996 1.2763

'res2a_branch2b_Weights' 'res2a_branch2b' "Weights" -0.7563 0.5779

'res2a_branch2b_Bias' 'res2a_branch2b' "Bias" -1.3255 1.7421

'res2b_branch2a_Weights' 'res2b_branch2a' "Weights" -0.3105 0.3347

'res2b_branch2a_Bias' 'res2b_branch2a' "Bias" -1.1135 1.4752

'res2b_branch2b_Weights' 'res2b_branch2b' "Weights" -1.1498 0.9335

'res2b_branch2b_Bias' 'res2b_branch2b' "Bias" -0.8447 1.2549

'res3a_branch2a_Weights' 'res3a_branch2a' "Weights" -0.1905 0.2458

'res3a_branch2a_Bias' 'res3a_branch2a' "Bias" -0.5382 0.6865

'res3a_branch2b_Weights' 'res3a_branch2b' "Weights" -0.5418 0.7319

'res3a_branch2b_Bias' 'res3a_branch2b' "Bias" -0.6842 1.1596

⋮

Define FPGA Board Interface

Define the target FPGA board programming interface by using the dlhdl.Target object. Create a programming interface with custom name for your target device and an Ethernet interface to connect the target device to the host computer.

hTarget = dlhdl.Target('Xilinx','Interface','Ethernet');

Prepare Network for Deployment

Prepare the network for deployment by creating a dlhdl.Workflow object. Specify the network and bitstream name. Ensure that the bitstream name matches the data type and the FPGA board that you are targeting. In this example, the target FPGA board is the Xilinx® Zynq® UltraScale+™ MPSoC ZCU102 board and the bitstream uses the int8 data type.

hW = dlhdl.Workflow(Network=dlquantObj,Bitstream='zcu102_int8',Target=hTarget);Compile Network

Run the compile method of the dlhdl.Workflow object to compile the network and generate the instructions, weights, and biases for deployment.

dn = compile(hW,'InputFrameNumberLimit',15)### Compiling network for Deep Learning FPGA prototyping ...

### Targeting FPGA bitstream zcu102_int8.

### An output layer called 'Output1_prob' of type 'nnet.cnn.layer.RegressionOutputLayer' has been added to the provided network. This layer performs no operation during prediction and thus does not affect the output of the network.

### Optimizing network: Fused 'nnet.cnn.layer.BatchNormalizationLayer' into 'nnet.cnn.layer.Convolution2DLayer'

### The network includes the following layers:

### Notice: The layer 'data' with type 'nnet.cnn.layer.ImageInputLayer' is implemented in software.

### Notice: The layer 'prob' with type 'nnet.cnn.layer.SoftmaxLayer' is implemented in software.

### Notice: The layer 'Output1_prob' with type 'nnet.cnn.layer.RegressionOutputLayer' is implemented in software.

### Compiling layer group: conv1>>pool1 ...

### Compiling layer group: conv1>>pool1 ... complete.

### Compiling layer group: res2a_branch2a>>res2a_branch2b ...

### Compiling layer group: res2a_branch2a>>res2a_branch2b ... complete.

### Compiling layer group: res2b_branch2a>>res2b_branch2b ...

### Compiling layer group: res2b_branch2a>>res2b_branch2b ... complete.

### Compiling layer group: res3a_branch1 ...

### Compiling layer group: res3a_branch1 ... complete.

### Compiling layer group: res3a_branch2a>>res3a_branch2b ...

### Compiling layer group: res3a_branch2a>>res3a_branch2b ... complete.

### Compiling layer group: res3b_branch2a>>res3b_branch2b ...

### Compiling layer group: res3b_branch2a>>res3b_branch2b ... complete.

### Compiling layer group: res4a_branch1 ...

### Compiling layer group: res4a_branch1 ... complete.

### Compiling layer group: res4a_branch2a>>res4a_branch2b ...

### Compiling layer group: res4a_branch2a>>res4a_branch2b ... complete.

### Compiling layer group: res4b_branch2a>>res4b_branch2b ...

### Compiling layer group: res4b_branch2a>>res4b_branch2b ... complete.

### Compiling layer group: res5a_branch1 ...

### Compiling layer group: res5a_branch1 ... complete.

### Compiling layer group: res5a_branch2a>>res5a_branch2b ...

### Compiling layer group: res5a_branch2a>>res5a_branch2b ... complete.

### Compiling layer group: res5b_branch2a>>res5b_branch2b ...

### Compiling layer group: res5b_branch2a>>res5b_branch2b ... complete.

### Compiling layer group: pool5 ...

### Compiling layer group: pool5 ... complete.

### Compiling layer group: new_fc ...

### Compiling layer group: new_fc ... complete.

### Allocating external memory buffers:

offset_name offset_address allocated_space

_______________________ ______________ ________________

"InputDataOffset" "0x00000000" "5.7 MB"

"OutputResultOffset" "0x005be000" "4.0 kB"

"SchedulerDataOffset" "0x005bf000" "712.0 kB"

"SystemBufferOffset" "0x00671000" "1.6 MB"

"InstructionDataOffset" "0x007fe000" "1.2 MB"

"ConvWeightDataOffset" "0x00936000" "13.5 MB"

"FCWeightDataOffset" "0x016ab000" "12.0 kB"

"EndOffset" "0x016ae000" "Total: 22.7 MB"

### Network compilation complete.

dn = struct with fields:

weights: [1×1 struct]

instructions: [1×1 struct]

registers: [1×1 struct]

syncInstructions: [1×1 struct]

constantData: {}

ddrInfo: [1×1 struct]

resourceTable: [6×2 table]

Program Bitstream onto FPGA and Download Network Weights

To deploy the network on the Xilinx ZCU102 hardware, run the deploy function of the dlhdl.Workflow object. This function uses the output of the compile function to program the FPGA board by using the programming file. It also downloads the network weights and biases. The deploy function starts programming the FPGA device, displays progress messages, and the time it takes to deploy the network.

deploy(hW)

### Programming FPGA Bitstream using Ethernet... ### Attempting to connect to the hardware board at 172.21.88.150... ### Connection successful ### Programming FPGA device on Xilinx SoC hardware board at 172.21.88.150... ### Attempting to connect to the hardware board at 172.21.88.150... ### Connection successful ### Copying FPGA programming files to SD card... ### Setting FPGA bitstream and devicetree for boot... # Copying Bitstream zcu102_int8.bit to /mnt/hdlcoder_rd # Set Bitstream to hdlcoder_rd/zcu102_int8.bit # Copying Devicetree devicetree_dlhdl.dtb to /mnt/hdlcoder_rd # Set Devicetree to hdlcoder_rd/devicetree_dlhdl.dtb # Set up boot for Reference Design: 'AXI-Stream DDR Memory Access : 3-AXIM' ### Programming done. The system will now reboot for persistent changes to take effect. ### Rebooting Xilinx SoC at 172.21.88.150... ### Reboot may take several seconds... ### Attempting to connect to the hardware board at 172.21.88.150... ### Connection successful ### Programming the FPGA bitstream has been completed successfully. ### Loading weights to Conv Processor. ### Conv Weights loaded. Current time is 30-Aug-2024 11:31:45 ### Loading weights to FC Processor. ### FC Weights loaded. Current time is 30-Aug-2024 11:31:45

Test Network

Load the example image.

imgFile = fullfile(pwd,'MathWorks_cube_0.jpg');

inputImg = imresize(imread(imgFile),[224 224]);

imshow(inputImg)

Classify the image on the FPGA by using the predict method of the dlhdl.Workflow object and display the results.

inputImg = dlarray(single(inputImg), 'SSCB'); [prediction,speed] = predict(hW,inputImg,'Profile','on');

### Finished writing input activations.

### Running single input activation.

Deep Learning Processor Profiler Performance Results

LastFrameLatency(cycles) LastFrameLatency(seconds) FramesNum Total Latency Frames/s

------------- ------------- --------- --------- ---------

Network 7405555 0.02962 1 7408123 33.7

conv1 1115422 0.00446

pool1 200956 0.00080

res2a_branch2a 270504 0.00108

res2a_branch2b 270422 0.00108

res2a 109005 0.00044

res2b_branch2a 270206 0.00108

res2b_branch2b 270217 0.00108

res2b 109675 0.00044

res3a_branch1 155444 0.00062

res3a_branch2a 156720 0.00063

res3a_branch2b 245246 0.00098

res3a 54876 0.00022

res3b_branch2a 245640 0.00098

res3b_branch2b 245443 0.00098

res3b 55736 0.00022

res4a_branch1 135533 0.00054

res4a_branch2a 136768 0.00055

res4a_branch2b 238039 0.00095

res4a 27602 0.00011

res4b_branch2a 237950 0.00095

res4b_branch2b 238645 0.00095

res4b 27792 0.00011

res5a_branch1 324200 0.00130

res5a_branch2a 326074 0.00130

res5a_branch2b 623097 0.00249

res5a 13961 0.00006

res5b_branch2a 623607 0.00249

res5b_branch2b 624000 0.00250

res5b 13621 0.00005

pool5 36826 0.00015

new_fc 2141 0.00001

* The clock frequency of the DL processor is: 250MHz

scores2label(prediction,categories(imdsTrain.Labels))

ans = categorical

MathWorks Cube

Performance Comparison

Compare the performance of the quantized network to the performance of the single data type network.

optionsFPGA = dlquantizationOptions('Bitstream','zcu102_int8','Target',hTarget, 'MetricFcn', {@(x)computeClassificationAccuracy(x,imdsValidation)}); predictionFPGA = validate(dlquantObj,imdsValidation,optionsFPGA)

### Compiling network for Deep Learning FPGA prototyping ...

### Targeting FPGA bitstream zcu102_int8.

### An output layer called 'Output1_prob' of type 'nnet.cnn.layer.RegressionOutputLayer' has been added to the provided network. This layer performs no operation during prediction and thus does not affect the output of the network.

### Optimizing network: Fused 'nnet.cnn.layer.BatchNormalizationLayer' into 'nnet.cnn.layer.Convolution2DLayer'

### The network includes the following layers:

### Notice: The layer 'data' with type 'nnet.cnn.layer.ImageInputLayer' is implemented in software.

### Notice: The layer 'prob' with type 'nnet.cnn.layer.SoftmaxLayer' is implemented in software.

### Notice: The layer 'Output1_prob' with type 'nnet.cnn.layer.RegressionOutputLayer' is implemented in software.

### Compiling layer group: conv1>>pool1 ...

### Compiling layer group: conv1>>pool1 ... complete.

### Compiling layer group: res2a_branch2a>>res2a_branch2b ...

### Compiling layer group: res2a_branch2a>>res2a_branch2b ... complete.

### Compiling layer group: res2b_branch2a>>res2b_branch2b ...

### Compiling layer group: res2b_branch2a>>res2b_branch2b ... complete.

### Compiling layer group: res3a_branch1 ...

### Compiling layer group: res3a_branch1 ... complete.

### Compiling layer group: res3a_branch2a>>res3a_branch2b ...

### Compiling layer group: res3a_branch2a>>res3a_branch2b ... complete.

### Compiling layer group: res3b_branch2a>>res3b_branch2b ...

### Compiling layer group: res3b_branch2a>>res3b_branch2b ... complete.

### Compiling layer group: res4a_branch1 ...

### Compiling layer group: res4a_branch1 ... complete.

### Compiling layer group: res4a_branch2a>>res4a_branch2b ...

### Compiling layer group: res4a_branch2a>>res4a_branch2b ... complete.

### Compiling layer group: res4b_branch2a>>res4b_branch2b ...

### Compiling layer group: res4b_branch2a>>res4b_branch2b ... complete.

### Compiling layer group: res5a_branch1 ...

### Compiling layer group: res5a_branch1 ... complete.

### Compiling layer group: res5a_branch2a>>res5a_branch2b ...

### Compiling layer group: res5a_branch2a>>res5a_branch2b ... complete.

### Compiling layer group: res5b_branch2a>>res5b_branch2b ...

### Compiling layer group: res5b_branch2a>>res5b_branch2b ... complete.

### Compiling layer group: pool5 ...

### Compiling layer group: pool5 ... complete.

### Compiling layer group: new_fc ...

### Compiling layer group: new_fc ... complete.

### Allocating external memory buffers:

offset_name offset_address allocated_space

_______________________ ______________ ________________

"InputDataOffset" "0x00000000" "11.5 MB"

"OutputResultOffset" "0x00b7c000" "4.0 kB"

"SchedulerDataOffset" "0x00b7d000" "720.0 kB"

"SystemBufferOffset" "0x00c31000" "1.6 MB"

"InstructionDataOffset" "0x00dbe000" "1.2 MB"

"ConvWeightDataOffset" "0x00ef6000" "13.5 MB"

"FCWeightDataOffset" "0x01c6b000" "12.0 kB"

"EndOffset" "0x01c6e000" "Total: 28.4 MB"

### Network compilation complete.

### FPGA bitstream programming has been skipped as the same bitstream is already loaded on the target FPGA.

### Loading weights to Conv Processor.

### Conv Weights loaded. Current time is 30-Aug-2024 11:33:06

### Loading weights to FC Processor.

### FC Weights loaded. Current time is 30-Aug-2024 11:33:06

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### Finished writing input activations.

### Running single input activation.

### An output layer called 'Output1_prob' of type 'nnet.cnn.layer.RegressionOutputLayer' has been added to the provided network. This layer performs no operation during prediction and thus does not affect the output of the network.

### Optimizing network: Fused 'nnet.cnn.layer.BatchNormalizationLayer' into 'nnet.cnn.layer.Convolution2DLayer'

### Notice: The layer 'data' of type 'ImageInputLayer' is split into an image input layer 'data', an addition layer 'data_norm_add', and a multiplication layer 'data_norm' for hardware normalization.

### The network includes the following layers:

### Notice: The layer 'prob' with type 'nnet.cnn.layer.SoftmaxLayer' is implemented in software.

### Notice: The layer 'Output1_prob' with type 'nnet.cnn.layer.RegressionOutputLayer' is implemented in software.

Deep Learning Processor Estimator Performance Results

LastFrameLatency(cycles) LastFrameLatency(seconds) FramesNum Total Latency Frames/s

------------- ------------- --------- --------- ---------

Network 23781318 0.10810 1 23781318 9.3

data_norm_add 268453 0.00122

data_norm 163081 0.00074

conv1 2164700 0.00984

pool1 515128 0.00234

res2a_branch2a 966477 0.00439

res2a_branch2b 966477 0.00439

res2a 268453 0.00122

res2b_branch2a 966477 0.00439

res2b_branch2b 966477 0.00439

res2b 268453 0.00122

res3a_branch1 541373 0.00246

res3a_branch2a 541261 0.00246

res3a_branch2b 920141 0.00418

res3a 134257 0.00061

res3b_branch2a 920141 0.00418

res3b_branch2b 920141 0.00418

res3b 134257 0.00061

res4a_branch1 505453 0.00230

res4a_branch2a 511309 0.00232

res4a_branch2b 909517 0.00413

res4a 67152 0.00031

res4b_branch2a 909517 0.00413

res4b_branch2b 909517 0.00413

res4b 67152 0.00031

res5a_branch1 1045581 0.00475

res5a_branch2a 1052749 0.00479

res5a_branch2b 2017485 0.00917

res5a 33582 0.00015

res5b_branch2a 2017485 0.00917

res5b_branch2b 2017485 0.00917

res5b 33582 0.00015

pool5 55746 0.00025

new_fc 2259 0.00001

* The clock frequency of the DL processor is: 220MHz

### Finished writing input activations.

### Running single input activation.

predictionFPGA = struct with fields:

NumSamples: 20

MetricResults: [1×1 struct]

Statistics: [2×7 table]

View the frames per second performance for the quantized network and single-data-type network. The quantized network has a performance of 33.8 frames per second compared to 9.3 frames per second for the single-data-type network. You can use quantization to improve your frames per second performance, however you could lose accuracy when you quantize your networks.

predictionFPGA.Statistics.FramesPerSecond

ans = 2×1

9.2510

33.7498

However, in this case you can observe the accuracy to be the same for both networks.

predictionFPGA.MetricResults.Result

ans=2×2 table

'Floating-Point' 0.9000

'Quantized' 0.9000

Helper Functions

function accuracy = computeClassificationAccuracy(fpgaOutput, validationData) % Compute accuracy of FPGA result compared to the Deep Learning Toolbox. % Copyright 2024 The MathWorks, Inc. fpgaClassifications = scores2label(fpgaOutput, categories(validationData.Labels)); fpgaClassifications = reshape(fpgaClassifications, size(validationData.Labels)); accuracy = sum(fpgaClassifications == validationData.Labels)./numel(fpgaClassifications); end

See Also

dlhdl.Workflow | dlhdl.Target | compile | deploy | predict | dlquantizer | dlquantizationOptions | calibrate | validate