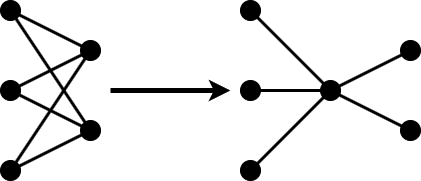

Projection

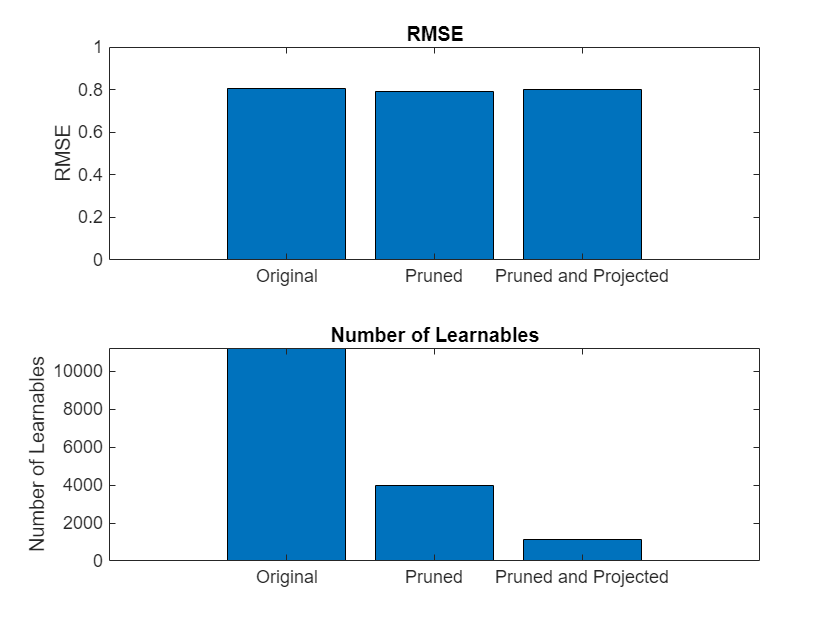

Project network layers using principal component analysis (PCA) to reduce the number of learnable parameters

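To project a layer, first perform principal component analysis (PCA) on the layer activations using a data set representative of the training data, and then apply linear projections to the layer learnable parameters. Forward passes of a projected deep neural network are typically faster when you deploy the network to embedded hardware using library-free C/C++ code generation.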
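As a rough illustration of why projection reduces the number of learnable parameters, consider a single fully connected operation. This is a simplified sketch under stated assumptions, not a description of the exact algorithm the toolbox uses: the symbols $W$, $x$, $C$, $Q$, $\Lambda$, $m$, $n$, $k$, and $N$ are introduced here for illustration only, the sketch projects only the layer input, and it ignores biases.

```latex
% Assumed setup: a fully connected operation y = W x with
% W \in \mathbb{R}^{m \times n} and input activations x \in \mathbb{R}^{n}.
\begin{align*}
C &= \frac{1}{N} \sum_{i=1}^{N} x_i x_i^{\top}
  && \text{second-moment matrix of activations over } N \text{ representative samples} \\
C &\approx Q \Lambda Q^{\top}, \quad Q \in \mathbb{R}^{n \times k}
  && \text{keep the } k \text{ leading principal directions} \\
y = W x &\approx (W Q)\,(Q^{\top} x)
  && \text{project the input, then apply the reduced weights} \\
mn &\;\longrightarrow\; mk + nk = k(m + n)
  && \text{learnable parameters before and after}
\end{align*}
% Worked example with assumed sizes m = n = 512 and k = 64:
%   before: 512 * 512        = 262144 parameters
%   after:  64 * (512 + 512) = 65536 parameters (a 75% reduction)
```

In this sketch the factorization saves parameters only when $k < mn/(m+n)$. A smaller $k$ compresses more but discards more of the variance in the activations, which is why the PCA step needs data that is representative of the training data.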

For a detailed overview of the compression techniques available in Deep Learning Toolbox™ Model Compression Library, see Reduce Memory Footprint of Deep Neural Networks.

Functions

| Function | Description |
| --- | --- |
| compressNetworkUsingProjection | Compress neural network using projection (Since R2022b) |
| neuronPCA | Principal component analysis of neuron activations (Since R2022b) |
| unpackProjectedLayers | Unpack projected layers of neural network (Since R2023b) |
| ProjectedLayer | Compressed neural network layer using projection (Since R2023b) |
| gruProjectedLayer | Gated recurrent unit (GRU) projected layer for recurrent neural network (RNN) (Since R2023b) |
| lstmProjectedLayer | Long short-term memory (LSTM) projected layer for recurrent neural network (RNN) (Since R2022b) |

Topics

- Compress Neural Network Using Projection

Compress a neural network using projection and principal component analysis (PCA).

- Train Smaller Neural Network Using Knowledge Distillation

Reduce the memory footprint of a deep neural network using knowledge distillation. (Since R2023b)