Pruning

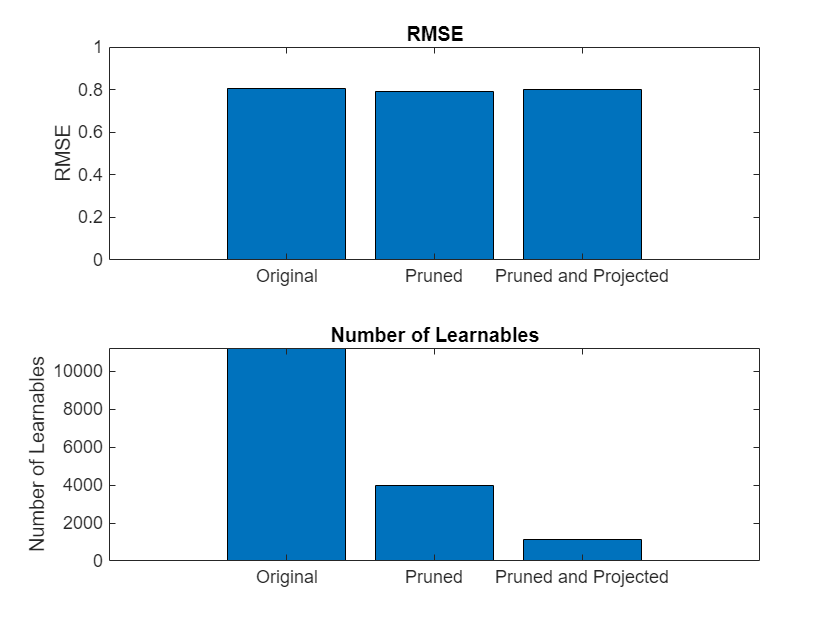

Prune network filters using a first-order Taylor approximation; reduce the number of learnable parameters

Prune filters from convolutional layers using a first-order Taylor approximation. You can then generate C/C++ or CUDA® code from the pruned network.
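As a rough illustration of what a first-order Taylor importance score measures, the plain-Python sketch below uses the formulation popularized by Molchanov et al. Zeroing the output channel of filter k changes the loss by approximately minus the sum of activation times gradient over that channel, so the score is the absolute mean of that product. This is an assumed scoring rule for illustration only: the function name `taylor_filter_scores` is hypothetical, and MATLAB's `updateScore` may normalize or aggregate scores differently.

```python
def taylor_filter_scores(activations, gradients):
    """Hypothetical helper: first-order Taylor importance per filter (output channel).

    activations[k] and gradients[k] are flat lists holding the feature-map
    values z_{k,m} of channel k and the loss gradients dL/dz_{k,m} at the
    same positions m. Setting channel k to zero changes the loss by roughly
    -sum_m(dL/dz_{k,m} * z_{k,m}) (first-order Taylor expansion), so the
    score is |mean_m(z_{k,m} * dL/dz_{k,m})|.
    """
    scores = []
    for acts, grads in zip(activations, gradients):
        if len(acts) != len(grads) or not acts:
            raise ValueError("activations and gradients must be non-empty and equal length")
        products = [a * g for a, g in zip(acts, grads)]
        scores.append(abs(sum(products) / len(products)))
    return scores


# Two made-up channels with four spatial positions each.
acts = [[1.0, 2.0, 0.0, 1.0], [0.5, 0.5, 0.5, 0.5]]
grads = [[0.1, -0.2, 0.3, 0.1], [0.0, 0.01, 0.0, -0.01]]
print(taylor_filter_scores(acts, grads))
```

Channel 1 scores zero here because its gradient contributions cancel, which would make it the first candidate for removal. Averaging over positions rather than summing does not change the ranking within a layer, since every channel of a layer has the same number of positions.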

For a detailed overview of the compression techniques available in Deep Learning Toolbox™ Model Compression Library, see Reduce Memory Footprint of Deep Neural Networks.

Functions

| Function | Description |
| --- | --- |
| taylorPrunableNetwork | Neural network suitable for compression using Taylor pruning (since R2022a) |
| forward | Compute deep learning network output for training |
| predict | Compute deep learning network output for inference |
| updatePrunables | Remove filters from prunable layers based on importance scores (since R2022a) |
| updateScore | Compute and accumulate Taylor-based importance scores for pruning (since R2022a) |
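The split between `updateScore` (accumulate scores over mini-batches) and `updatePrunables` (remove the lowest-scoring filters) suggests an accumulate-then-prune loop. The plain-Python sketch below models that loop for a single layer. It is not the MATLAB API: the class `TaylorScoreAccumulator`, its methods, the `max_to_prune` parameter, and the choice to reset scores after each pruning step are all hypothetical. In MATLAB, the real functions operate on a `taylorPrunableNetwork` object.

```python
class TaylorScoreAccumulator:
    """Hypothetical single-layer model of an accumulate-then-prune workflow."""

    def __init__(self, num_filters):
        self.alive = list(range(num_filters))  # original indices of filters still in the layer
        self.scores = [0.0] * num_filters      # accumulated score per surviving filter

    def update_score(self, activations, gradients):
        # Accumulate step: add this mini-batch's |mean(z * dL/dz)| for each surviving filter.
        for k, (acts, grads) in enumerate(zip(activations, gradients)):
            products = [a * g for a, g in zip(acts, grads)]
            self.scores[k] += abs(sum(products) / len(products))

    def update_prunables(self, max_to_prune):
        # Prune step: drop the lowest-scoring filters and return their original indices.
        order = sorted(range(len(self.alive)), key=lambda k: self.scores[k])
        drop = set(order[:max_to_prune])
        removed = [self.alive[k] for k in sorted(drop)]
        self.alive = [f for k, f in enumerate(self.alive) if k not in drop]
        # Assumption: start a fresh scoring round for the smaller layer.
        self.scores = [0.0] * len(self.alive)
        return removed


# Made-up data: 3 filters, 2 positions each, 2 mini-batches.
acc = TaylorScoreAccumulator(num_filters=3)
batches = [
    ([[1.0, 1.0], [0.1, 0.1], [2.0, 0.0]], [[0.5, 0.5], [0.1, 0.1], [1.0, 1.0]]),
    ([[1.0, 0.0], [0.2, 0.0], [1.0, 1.0]], [[1.0, 1.0], [0.1, 0.0], [0.5, 0.5]]),
]
for acts, grads in batches:
    acc.update_score(acts, grads)
print(acc.update_prunables(max_to_prune=1), acc.alive)  # prints: [1] [0, 2]
```

Accumulating over several mini-batches before pruning smooths out noise in any single batch's gradients. Filter 1 contributes the smallest activation-gradient products in both batches, so it is removed first.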

Topics

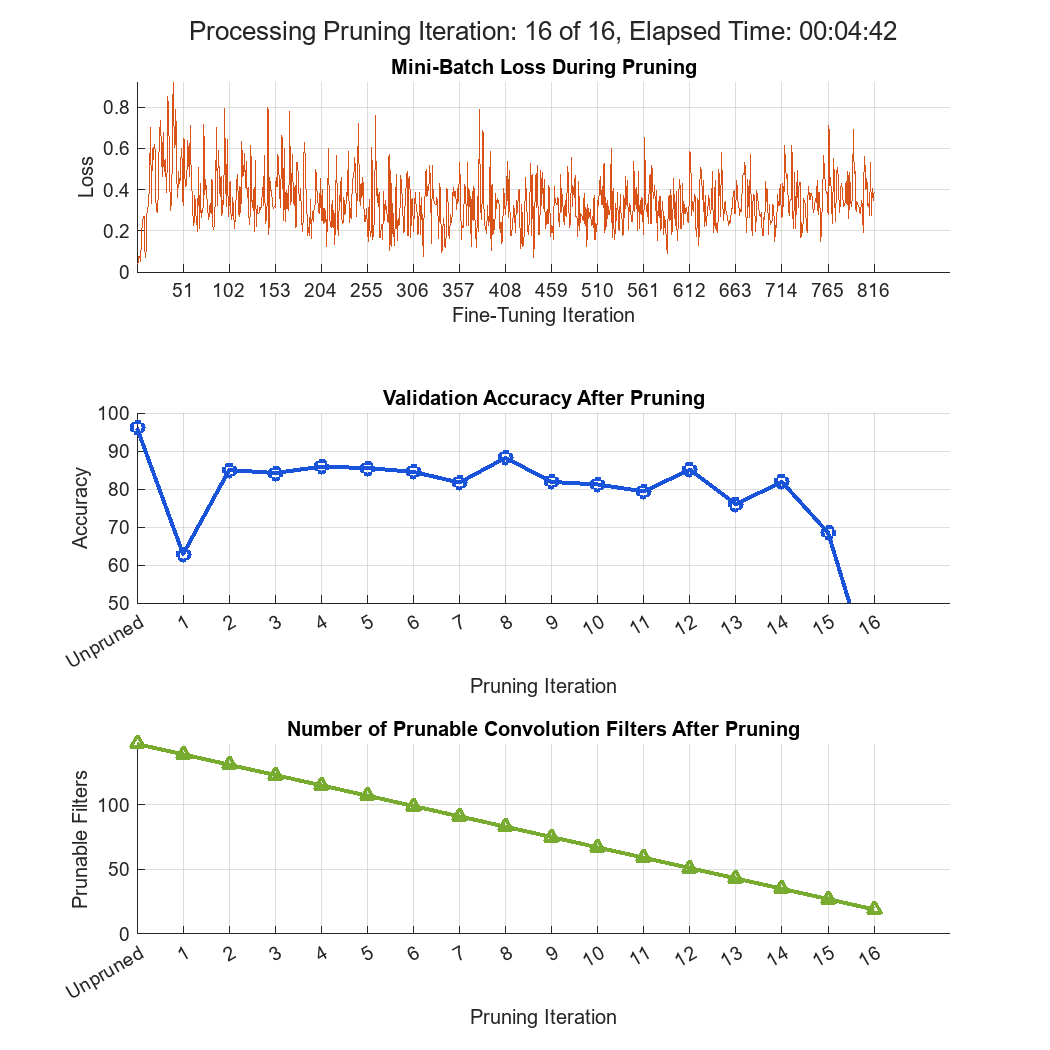

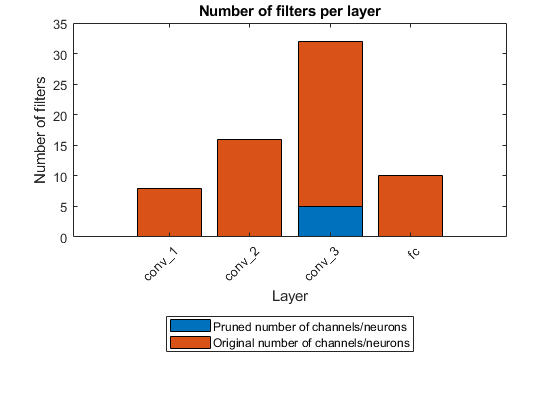

- Prune Image Classification Network Using Taylor Scores

  Reduce the size of a deep neural network using Taylor pruning.

- Prune Filters in a Detection Network Using Taylor Scores

  Reduce network size and increase inference speed by pruning convolutional filters in a You Only Look Once (YOLO) v3 object detection network.