Understand Network Predictions Using LIME

This example shows how to use local interpretable model-agnostic explanations (LIME) to understand why a deep neural network makes a classification decision.

Deep neural networks are very complex and their decisions can be hard to interpret. The LIME technique approximates the classification behavior of a deep neural network using a simpler, more interpretable model, such as a regression tree. Interpreting the decisions of this simpler model provides insight into the decisions of the neural network [1]. The simple model is used to determine the importance of features of the input data, as a proxy for the importance of the features to the deep neural network.

When a particular feature is very important to a deep network's classification decision, removing that feature significantly affects the classification score. That feature is therefore important to the simple model too.

Deep Learning Toolbox provides the imageLIME function to compute maps of the feature importance determined by the LIME technique. The LIME algorithm for images works by:

Segmenting an image into features.

Generating many synthetic images by randomly including or excluding features. Excluded features have every pixel replaced with the value of the image average, so they no longer contain information useful for the network.

Classifying the synthetic images with the deep network.

Fitting a simpler regression model using the presence or absence of image features for each synthetic image as binary regression predictors for the scores of the target class. The model approximates the behavior of the complex deep neural network in the region of the observation.

Computing the importance of features using the simple model, and converting this feature importance into a map that indicates the parts of the image that are most important to the model.
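The steps above can be sketched on toy data using only base MATLAB. This is a minimal illustration under stated assumptions, not the imageLIME implementation: the image is synthetic, scoreFcn is a hypothetical stand-in for a network's class score, the features are a fixed 4-by-4 grid, and the simple model is an ordinary least-squares fit rather than a regression tree or lasso model.

```matlab
% Toy LIME sketch. scoreFcn is a hypothetical stand-in for a class score;
% it is not a real network and not part of Deep Learning Toolbox.
rng(0);                                      % reproducible sampling
img = zeros(40,40);                          % toy grayscale image
img(1:10,31:40) = 1;                         % bright patch in the top-right cell
scoreFcn = @(x) mean(x(1:10,31:40),"all");   % score depends only on that patch

% 1. Segment the image into a 4-by-4 grid of square features.
gridSize = 4;
cellSize = size(img,1)/gridSize;
[cols,rows] = meshgrid(1:size(img,2),1:size(img,1));
featureMap = (ceil(rows/cellSize)-1)*gridSize + ceil(cols/cellSize);
numFeatures = gridSize^2;

% 2. Generate synthetic images by randomly including or excluding features.
numSamples = 500;
included = rand(numSamples,numFeatures) > 0.5;   % binary predictors
avgValue = mean(img,"all");                      % fill value for excluded features

% 3. Score each synthetic image with the stand-in model.
scores = zeros(numSamples,1);
for k = 1:numSamples
    row = included(k,:);
    keep = row(featureMap);          % pixel-level inclusion mask
    synthetic = img;
    synthetic(~keep) = avgValue;     % replace excluded features with the average
    scores(k) = scoreFcn(synthetic);
end

% 4. Fit a simple linear model (least squares with an intercept term).
X = [ones(numSamples,1) double(included)];
coeffs = X \ scores;
featureImportance = coeffs(2:end);

% 5. Convert the per-feature importance into a pixel-level map.
map = featureImportance(featureMap);
[~,topFeature] = max(featureImportance);
```

In this toy setup the score depends only on the top-right grid cell (feature 4), so the fitted importance is largest for that feature and close to zero elsewhere.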

You can compare results from the LIME technique to other explainability techniques, such as occlusion sensitivity or Grad-CAM. For more information about these related techniques, see the occlusionSensitivity and gradCAM functions.

Load Pretrained Network and Image

Load a pretrained GoogLeNet network and the corresponding class names. This requires the Deep Learning Toolbox™ Model for GoogLeNet Network support package. If this support package is not installed, then the software provides a download link. For a list of all available networks, see Pretrained Deep Neural Networks.

[net,classNames] = imagePretrainedNetwork("googlenet");

Extract the image input size of the network.

inputSize = net.Layers(1).InputSize(1:2);

Load the image. The image is of a retriever called Sherlock. Resize the image to the network input size.

img = imread("sherlock.jpg");

img = imresize(img,inputSize);

Classify the image. To make a prediction with a single observation, use the predict function. To convert the prediction scores to labels, use the scores2label function. To use a GPU, first convert the data to gpuArray. Using a GPU requires a Parallel Computing Toolbox™ license and a supported GPU device. For information on supported devices, see GPU Computing Requirements (Parallel Computing Toolbox).

if canUseGPU
    img = gpuArray(img);
end
scores = predict(net,single(img));
[label,score] = scores2label(scores,classNames);
[topScores,topIdx] = maxk(scores, 3);
topClasses = classNames(topIdx);
imshow(img)
titleString = compose("%s (%.2f)",topClasses,gather(topScores)');
title(sprintf(join(titleString, "; ")));

GoogLeNet classifies Sherlock as a golden retriever. Understandably, the network also assigns a high probability to the Labrador retriever class. You can use imageLIME to understand which parts of the image the network is using to make these classification decisions.

Identify Areas of an Image the Network Uses for Classification

You can use LIME to find out which parts of the image are important for a class. First, look at the predicted class of golden retriever. What parts of the image suggest this class?

By default, imageLIME identifies features in the input image by segmenting the image into superpixels. This method of segmentation requires Image Processing Toolbox; however, if you do not have Image Processing Toolbox, you can use the option "Segmentation","grid" to segment the image into square features.

Use the imageLIME function to map the importance of different superpixel features. By default, the simple model is a regression tree.

channel = find(label == categorical(classNames));
map = imageLIME(net,img,channel);

Display the image of Sherlock with the LIME map overlaid.

figure
imshow(img)
hold on
imagesc(map,AlphaData=0.5)
colormap jet
colorbar
title(sprintf("Image LIME (%s)", ...
    label))
hold off

The map shows which areas of the image are important to the classification of golden retriever. Red areas of the map have a higher importance: when these areas are removed, the score for the golden retriever class goes down. The network focuses on the dog's face and ear to make its prediction of golden retriever. This is consistent with other explainability techniques like occlusion sensitivity or Grad-CAM.

Compare to Results of a Different Class

GoogLeNet predicts a score of 55% for the golden retriever class, and 40% for the Labrador retriever class. These classes are very similar. You can determine which parts of the dog are more important for each class by comparing the LIME maps computed for the two classes.

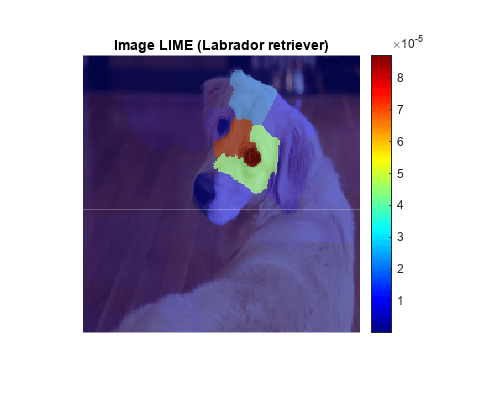

Using the same settings, compute the LIME map for the Labrador retriever class.

secondClass = topClasses(2);
channel = find(secondClass == categorical(classNames));
map = imageLIME(net,img,channel);
figure;
imshow(img)
hold on
imagesc(map,AlphaData=0.5)
colormap jet
colorbar
title(sprintf("Image LIME (%s)",secondClass))
hold off

For the Labrador retriever class, the network is more focused on the dog's nose and eyes, rather than the ear. While both maps highlight the dog's forehead, the network has decided that the dog's ear and neck indicate the golden retriever class, while the dog's eye and nose indicate the Labrador retriever class.

Compare LIME with Grad-CAM

Other image interpretability techniques such as Grad-CAM upsample the resulting map to produce a smooth heatmap of the important areas of the image. You can produce similar-looking maps with imageLIME, by calculating the importance of square or rectangular features and upsampling the resulting map.

To segment the image into a grid of square features instead of irregular superpixels, use the "Segmentation","grid" name-value pair. Upsample the computed map to match the image resolution using bicubic interpolation, by setting "OutputUpsampling","bicubic".

To increase the resolution of the initially computed map, increase the number of features to 100 by specifying the "NumFeatures",100 name-value pair. As the image is square, this produces a 10-by-10 grid of features.

The LIME technique generates synthetic images based on the original observation by randomly choosing some features and replacing all the pixels in those features with the average image pixel, effectively removing that feature. Increase the number of random samples to 6000 by setting "NumSamples",6000. When you increase the number of features, increasing the number of samples usually gives better results.

By default, the imageLIME function uses a regression tree as its simple model. Instead, fit a linear regression model with lasso regularization by setting "Model","linear".

channel = find(label == categorical(classNames));
map = imageLIME(net,img,channel, ...
    Segmentation="grid", ...
    OutputUpsampling="bicubic", ...
    NumFeatures=100, ...
    NumSamples=6000, ...
    Model="linear");
imshow(img)
hold on
imagesc(map,AlphaData=0.5)
colormap jet
title(sprintf("Image LIME (%s - linear model)", ...
    label))
hold off

Similar to the gradient map computed by Grad-CAM, the LIME technique also strongly identifies the dog's ear as significant to the prediction of golden retriever.
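To see why upsampling a grid-based map gives a smoother, Grad-CAM-like appearance, the following sketch resizes a small map of per-cell importance values in two ways. It is only an illustration: the values in coarseMap are made up, the 224-by-224 output size is assumed for the example, and the sketch does not claim to reproduce how imageLIME performs its upsampling internally.

```matlab
% Hypothetical 4-by-4 grid of per-feature importance values (made up).
coarseMap = [0.1 0.2 0.0 0.9
             0.0 0.3 0.1 0.6
             0.0 0.1 0.0 0.2
             0.0 0.0 0.1 0.0];

outputSize = [224 224];   % assumed target resolution for this illustration

% Nearest-neighbor resizing keeps the blocky grid structure.
blockyMap = imresize(coarseMap,outputSize,"nearest");

% Bicubic interpolation blends neighboring cells into a smooth heatmap.
smoothMap = imresize(coarseMap,outputSize,"bicubic");
```

Plotting blockyMap and smoothMap side by side with imagesc shows the difference: the first keeps hard cell boundaries, while the second varies gradually between cells.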

Display Only the Most Important Features

LIME results are often plotted by showing only the most important few features. When you use the imageLIME function, you can also obtain a map of the features used in the computation and the calculated importance of each feature. Use these results to determine the four most important superpixel features and display only the four most important features in an image.

Compute the LIME map and obtain the feature map and the calculated importance of each feature.

[map,featureMap,featureImportance] = imageLIME(net,img,channel);

Find the indices of the top four features.

numTopFeatures = 4;
[~,idx] = maxk(featureImportance,numTopFeatures);

Next, mask out the image using the feature map so that only pixels in the four most important superpixels are visible. Display the masked image.

mask = ismember(featureMap,idx);
maskedImg = uint8(mask).*img;
figure
imshow(maskedImg);
title(sprintf("Image LIME (%s - top %i features)", ...
    label, numTopFeatures))
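The masking step can be checked on a small hand-made case. In this sketch the feature map, importance values, and image are all made up for illustration; only maxk, ismember, and element-wise multiplication are used, as in the code above.

```matlab
% Hypothetical 4-by-4 feature map with four square features.
featureMap = [1 1 2 2
              1 1 2 2
              3 3 4 4
              3 3 4 4];
featureImportance = [0.1; 0.7; -0.2; 0.4];   % made-up importance values
img = uint8(200*ones(4,4,3));                % uniform toy RGB image

% Keep the two most important features (here, features 2 and 4).
numTopFeatures = 2;
[~,idx] = maxk(featureImportance,numTopFeatures);

% Pixels outside the selected features are multiplied by zero. The 2-D
% mask expands across the three color channels automatically.
mask = ismember(featureMap,idx);
maskedImg = uint8(mask).*img;
```

Here features 2 and 4 occupy the right half of the image, so the left two columns of maskedImg are black and the right two columns are unchanged.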

References

[1] Ribeiro, Marco Tulio, Sameer Singh, and Carlos Guestrin. “‘Why Should I Trust You?’: Explaining the Predictions of Any Classifier.” In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 1135–44. San Francisco, California, USA: ACM, 2016. https://doi.org/10.1145/2939672.2939778.

See Also

imagePretrainedNetwork | dlnetwork | trainingOptions | trainnet | occlusionSensitivity | imageLIME | gradCAM