rlQValueFunction

Q-Value function approximator object for reinforcement learning agents

Since R2022a

Description

This object implements a Q-value function approximator that you can use as a critic for a reinforcement learning agent. A Q-value function (also known as action-value function) is a mapping from an environment observation-action pair to the value of a policy. Specifically, its output is a scalar that represents the expected discounted cumulative long-term reward when an agent starts from the state corresponding to the given observation, executes the given action, and keeps on taking actions according to the given policy afterwards. A Q-value function critic therefore needs both the environment state and an action as inputs. After you create an rlQValueFunction critic, use it to create an agent such as rlQAgent, rlDQNAgent, rlSARSAAgent, rlDDPGAgent, or rlTD3Agent. For more information on creating actors and critics, see Create Policies and Value Functions.
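The verbal definition above can be written compactly as follows. This is the standard textbook form rather than notation taken from this page; the symbols (policy $\pi$, state $s$, action $a$, discount factor $\gamma$, reward $r$) are introduced here as assumptions for illustration.

$$
Q^{\pi}(s,a) = \mathbb{E}_{\pi}\left[\sum_{k=0}^{\infty} \gamma^{k}\, r_{t+k+1} \,\middle|\, s_t = s,\ a_t = a\right]
$$

The critic approximates this scalar for a given observation-action pair.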

Creation

Syntax

Description

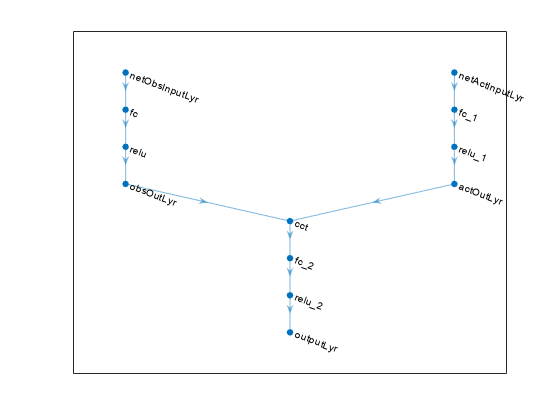

critic = rlQValueFunction(net,observationInfo,actionInfo) creates the Q-value function object critic. Here, net is the deep neural network used as an approximation model; it must have both observation and action as inputs and a single scalar output. The network input layers are automatically associated with the environment observation and action channels according to the dimension specifications in observationInfo and actionInfo. This function sets the ObservationInfo and ActionInfo properties of critic to the observationInfo and actionInfo input arguments, respectively.
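As a minimal sketch of the network-based syntax, the following builds a small two-input network and wraps it in a critic. The layer names, layer sizes, and specification dimensions are illustrative assumptions, and the code assumes Deep Learning Toolbox is available alongside Reinforcement Learning Toolbox.

```matlab
% Illustrative specifications (assumed): 4-element observation, 2-element action
obsInfo = rlNumericSpec([4 1]);
actInfo = rlNumericSpec([2 1]);

% Observation input path
obsPath = [featureInputLayer(prod(obsInfo.Dimension),Name="netObsInput")
           fullyConnectedLayer(16)
           reluLayer
           fullyConnectedLayer(5,Name="obsOut")];

% Action input path
actPath = [featureInputLayer(prod(actInfo.Dimension),Name="netActInput")
           fullyConnectedLayer(16)
           reluLayer
           fullyConnectedLayer(5,Name="actOut")];

% Common path: concatenate both branches and reduce to one scalar output
comPath = [concatenationLayer(1,2,Name="cct")
           fullyConnectedLayer(8)
           reluLayer
           fullyConnectedLayer(1,Name="qValue")];

% Assemble and connect the layer graph
net = addLayers(layerGraph(obsPath),actPath);
net = addLayers(net,comPath);
net = connectLayers(net,"obsOut","cct/in1");
net = connectLayers(net,"actOut","cct/in2");

% Convert to a dlnetwork object and create the critic
net = dlnetwork(net);
critic = rlQValueFunction(net,obsInfo,actInfo);

% Query the critic with a random observation-action pair
v = getValue(critic,{rand(obsInfo.Dimension)},{rand(actInfo.Dimension)});
```

Because the observation and action channels have different dimensions here, the input layers can be matched to the channels without naming them explicitly.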

critic = rlQValueFunction(tab,observationInfo,actionInfo) creates the Q-value function object critic with discrete action and observation spaces from the Q-value table tab. tab is an rlTable object containing a table with as many rows as the number of possible observations and as many columns as the number of possible actions. The function sets the ObservationInfo and ActionInfo properties of critic to the observationInfo and actionInfo input arguments, respectively, which in this case must be scalar rlFiniteSetSpec objects.
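The table-based syntax might be used as sketched below. The element values in the two rlFiniteSetSpec objects are arbitrary choices for illustration; the sketch also shows one way such a critic could then be passed to a Q-learning agent.

```matlab
% Illustrative discrete spaces (assumed): 4 possible observations, 2 possible actions
obsInfo = rlFiniteSetSpec([7 5 3 1]);
actInfo = rlFiniteSetSpec([4 8]);

% Create the Q-value table; one row per observation, one column per action
qTable = rlTable(obsInfo,actInfo);
tableSize = size(qTable.Table);

% Create the critic from the table
critic = rlQValueFunction(qTable,obsInfo,actInfo);

% Value of taking action 4 when the observation is 7
v = getValue(critic,{7},{4});

% Use the critic to create a Q-learning agent and query an action
agent = rlQAgent(critic);
act = getAction(agent,{7});
```

getAction returns a cell array, so the selected action is in act{1}.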

critic = rlQValueFunction({basisFcn,W0},observationInfo,actionInfo) creates a Q-value function object critic using a custom basis function as underlying approximator. The first input argument is a two-element cell array whose first element is the handle basisFcn to a custom basis function and whose second element is the initial weight vector W0. Here the basis function must have both observation and action as inputs and W0 must be a column vector. The function sets the ObservationInfo and ActionInfo properties of critic to the observationInfo and actionInfo input arguments, respectively.
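A minimal sketch of the basis-function syntax follows. The particular basis function, weights, and specification dimensions are assumptions chosen for illustration. The sketch relies on the critic output being the inner product of the weight vector with the basis function output, which is why W0 has one element per basis feature.

```matlab
% Illustrative specifications (assumed): 3-element observation, 2-element action
obsInfo = rlNumericSpec([3 1]);
actInfo = rlNumericSpec([2 1]);

% Custom basis function of observation and action; returns a 4-element column
myBasisFcn = @(myobs,myact) [ ...
    myobs(2)^2; ...
    myobs(1) + exp(myact(1)); ...
    abs(myact(2)); ...
    myobs(3)];

% Initial weights: a column vector with one element per basis feature
W0 = [1; 4; 4; 2];

% Create the critic from the {basisFcn,W0} cell array
critic = rlQValueFunction({myBasisFcn,W0},obsInfo,actInfo);

% Evaluate the critic and compare with the direct inner product
obs = [1 2 3]';
act = [4 5]';
v = getValue(critic,{obs},{act});
vDirect = W0' * myBasisFcn(obs,act);
```

Comparing v with vDirect is a quick sanity check that the basis function and weights are wired up as intended.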

critic = rlQValueFunction(___,Name=Value) specifies the names of the observation and action input layers (for network-based approximators) or sets the UseDevice property of critic using one or more name-value arguments. Specifying the input layer names allows you to explicitly associate the layers of your network approximator with specific environment channels. For all types of approximators, you can specify the device where computations for critic are executed, for example UseDevice="gpu".
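The name-value syntax could look like the sketch below, which names the input layers explicitly through the ObservationInputNames and ActionInputNames arguments and selects the computation device. The network layout and layer names are illustrative assumptions, and UseDevice is set to "cpu" so the sketch runs on a machine without a supported GPU.

```matlab
% Illustrative specifications (assumed)
obsInfo = rlNumericSpec([4 1]);
actInfo = rlNumericSpec([2 1]);

% Two named input paths merged by an addition layer
obsPath = [featureInputLayer(4,Name="netObsInput")
           fullyConnectedLayer(8,Name="obsOut")];
actPath = [featureInputLayer(2,Name="netActInput")
           fullyConnectedLayer(8,Name="actOut")];
comPath = [additionLayer(2,Name="add")
           reluLayer
           fullyConnectedLayer(1,Name="qValue")];

net = addLayers(layerGraph(obsPath),actPath);
net = addLayers(net,comPath);
net = connectLayers(net,"obsOut","add/in1");
net = connectLayers(net,"actOut","add/in2");
net = dlnetwork(net);

% Associate each input layer with its channel by name and pick the device
critic = rlQValueFunction(net,obsInfo,actInfo, ...
    ObservationInputNames="netObsInput", ...
    ActionInputNames="netActInput", ...
    UseDevice="cpu");

device = critic.UseDevice;
v = getValue(critic,{rand(obsInfo.Dimension)},{rand(actInfo.Dimension)});
```

Naming the layers matters most when the observation and action channels share the same dimensions, since the dimension-based association described earlier can no longer tell them apart.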

Input Arguments

Properties

Object Functions

| Name | Description |
| --- | --- |
| rlDDPGAgent | Deep deterministic policy gradient (DDPG) reinforcement learning agent |
| rlTD3Agent | Twin-delayed deep deterministic (TD3) policy gradient reinforcement learning agent |
| rlDQNAgent | Deep Q-network (DQN) reinforcement learning agent |
| rlQAgent | Q-learning reinforcement learning agent |
| rlSARSAAgent | SARSA reinforcement learning agent |
| rlSACAgent | Soft actor-critic (SAC) reinforcement learning agent |
| getValue | Obtain estimated value from a critic given environment observations and actions |
| getMaxQValue | Obtain maximum estimated value over all possible actions from a Q-value function critic with discrete action space, given environment observations |
| evaluate | Evaluate function approximator object given observation (or observation-action) input data |
| getLearnableParameters | Obtain learnable parameter values from agent, function approximator, or policy object |
| setLearnableParameters | Set learnable parameter values of agent, function approximator, or policy object |
| setModel | Set approximation model in function approximator object |
| getModel | Get approximation model from function approximator object |
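As a rough sketch of how some of these functions fit together, the following creates a small basis-function critic, evaluates it, and then rescales its learnable parameters. The basis function, weights, and input values are hypothetical and chosen only so the effect is easy to check: because this approximator is linear in its weights, doubling the weights should double the estimated value.

```matlab
% Hypothetical linear critic: features are the two observations and the action
obsInfo = rlNumericSpec([2 1]);
actInfo = rlNumericSpec([1 1]);
basisFcn = @(obs,act) [obs(1); obs(2); act(1)];
W0 = [1; 2; 3];
critic = rlQValueFunction({basisFcn,W0},obsInfo,actInfo);

obs = [0.5; -1];
act = 2;

% getValue takes observations and actions as separate cell arrays
v1 = getValue(critic,{obs},{act});

% evaluate takes one cell array with all inputs and returns a cell array
out = evaluate(critic,{obs,act});

% Read the learnable parameters, scale them by 2, and write them back
params = getLearnableParameters(critic);
scaledParams = cellfun(@(p) 2*p,params,UniformOutput=false);
critic = setLearnableParameters(critic,scaledParams);

% Value after the update
v2 = getValue(critic,{obs},{act});
```

setLearnableParameters returns the updated object, so the result has to be assigned back to critic for the change to take effect.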

Examples

Version History

Introduced in R2022a