Simulation 3D Camera

Libraries:

- Offroad Autonomy Library / Simulation 3D
- Automated Driving Toolbox / Simulation 3D
- Robotics System Toolbox / Simulation 3D
- Simulink 3D Animation / Simulation 3D / Sensors
- UAV Toolbox / Simulation 3D

Description

Note

Simulating models with the Simulation 3D Camera block requires Simulink® 3D Animation™.

The Simulation 3D Camera block provides an interface to a camera with a lens in a 3D simulation environment. This environment is rendered using the Unreal Engine® from Epic Games®. The sensor is based on the ideal pinhole camera model, with a lens added to represent a full camera model, including lens distortion. This camera model supports a field of view of up to 150 degrees without distortions. For more details, see Algorithms.
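The pinhole-plus-lens model described above can be sketched in Python. This is a minimal illustration that assumes the widely used radial/tangential (Brown-Conrady) distortion model from the calibration literature cited in [1]-[3]. The block's exact implementation is documented under Algorithms and may differ. The names `Intrinsics`, `Distortion`, `project_point`, and `horizontal_fov_deg` are hypothetical. The code also assumes a camera frame whose Z axis points along the optical axis, which is not necessarily the frame selected by the Coordinate system parameter.

```python
import math
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Hypothetical container for pinhole intrinsics, in pixels."""
    fx: float
    fy: float
    cx: float
    cy: float
    skew: float = 0.0


@dataclass
class Distortion:
    """Hypothetical container for radial (k1, k2, k3) and tangential (p1, p2) coefficients."""
    k1: float = 0.0
    k2: float = 0.0
    k3: float = 0.0
    p1: float = 0.0
    p2: float = 0.0


def project_point(point, K, D):
    """Project a 3D point (X, Y, Z) in camera coordinates to pixel coordinates (u, v).

    Assumes Z points along the optical axis, so visible points have Z > 0.
    """
    X, Y, Z = point
    if Z <= 0:
        raise ValueError("point must lie in front of the camera (Z > 0)")
    # Ideal pinhole: perspective division onto the normalized image plane.
    x, y = X / Z, Y / Z
    r2 = x * x + y * y
    # Radial distortion scales the point by a polynomial in r^2.
    radial = 1 + D.k1 * r2 + D.k2 * r2**2 + D.k3 * r2**3
    # Tangential distortion models a lens that is not exactly parallel to the sensor.
    x_d = x * radial + 2 * D.p1 * x * y + D.p2 * (r2 + 2 * x * x)
    y_d = y * radial + D.p1 * (r2 + 2 * y * y) + 2 * D.p2 * x * y
    # The intrinsic parameters map normalized coordinates to pixels.
    u = K.fx * x_d + K.skew * y_d + K.cx
    v = K.fy * y_d + K.cy
    return u, v


def horizontal_fov_deg(fx, width):
    """Horizontal field of view, in degrees, of an undistorted pinhole camera."""
    return math.degrees(2 * math.atan(width / (2 * fx)))


if __name__ == "__main__":
    K = Intrinsics(fx=1109.0, fy=1109.0, cx=640.0, cy=360.0)
    print(project_point((1.0, 0.5, 10.0), K, Distortion()))
    print(project_point((1.0, 0.5, 10.0), K, Distortion(k1=-0.1)))
    print(horizontal_fov_deg(K.fx, 1280))
```

For an undistorted pinhole camera, a 150-degree horizontal field of view on a 1280-pixel-wide image corresponds to fx = 640 / tan(75°), roughly 171 pixels. This shows why very wide views require short focal lengths.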

If you set Sample time to -1, the block uses the sample time specified in the Simulation 3D Scene Configuration block. To use this sensor, you must include a Simulation 3D Scene Configuration block in your model.

The Coordinate system parameter of the block specifies how the actor transformations are applied in the 3D environment. The output of the block also follows the specified coordinate system.

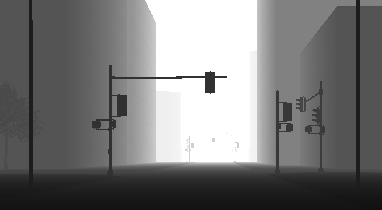

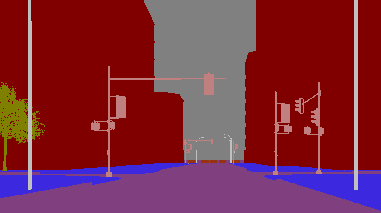

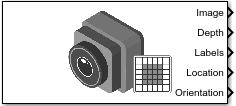

The block outputs images captured by the camera during simulation. You can use these images to visualize and verify your driving algorithms. In addition, on the Ground Truth tab, you can select options to output the ground truth data for developing depth estimation and semantic segmentation algorithms. You can also output the location and orientation of the camera in the world coordinate system of the scene. The image shows the block with all ports enabled.

The table summarizes the ports and how to enable them.

| Port | Description | Parameter for Enabling Port | Sample Visualization |
|---|---|---|---|
| Image | Outputs an RGB image captured by the camera | n/a | |
| Depth | Outputs a depth map with values from 0 to 1000 meters | Output depth | |
| Labels | Outputs a semantic segmentation map of label IDs that correspond to objects in the scene | Output semantic segmentation | |
| Translation | Outputs the location of the camera in the world coordinate system | Output location and orientation | n/a |
| Rotation | Outputs the orientation of the camera in the world coordinate system | Output location and orientation | n/a |
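When both ground truth ports are enabled, a simple first step in algorithm development is to summarize depth per semantic class. The following Python sketch assumes that the Depth and Labels outputs are pixel-aligned maps of the same size. It also assumes that they have been logged and exported as 2D arrays (here, nested lists), with depth in meters and labels as integer IDs. The function name `depth_by_label` and the example label IDs are hypothetical and do not correspond to the block's actual label table.

```python
from collections import defaultdict
from statistics import mean

# Upper bound of the Depth port range listed in the table above.
MAX_DEPTH_M = 1000.0


def depth_by_label(depth, labels):
    """Summarize depth (meters) for each semantic label ID.

    depth  -- 2D list of depth values in meters
    labels -- 2D list of integer label IDs, same shape as depth
    Returns {label_id: (min_depth_m, mean_depth_m, pixel_count)}.
    """
    if len(depth) != len(labels) or any(
        len(d_row) != len(l_row) for d_row, l_row in zip(depth, labels)
    ):
        raise ValueError("depth and label maps must have the same size")

    groups = defaultdict(list)
    for d_row, l_row in zip(depth, labels):
        for z, label in zip(d_row, l_row):
            # Reject values outside the documented 0-1000 m output range.
            if not 0.0 <= z <= MAX_DEPTH_M:
                raise ValueError(f"depth {z} m is outside 0-{MAX_DEPTH_M} m")
            groups[label].append(z)

    return {label: (min(zs), mean(zs), len(zs)) for label, zs in groups.items()}


if __name__ == "__main__":
    # Tiny hypothetical 2x3 maps; the label IDs are placeholders.
    depth = [[5.0, 5.5, 1000.0],
             [4.0, 6.0, 1000.0]]
    labels = [[1, 1, 0],
              [2, 1, 0]]
    for label, (zmin, zmean, n) in sorted(depth_by_label(depth, labels).items()):
        print(label, zmin, zmean, n)
```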

Note

The Simulation 3D Scene Configuration block must execute before the Simulation 3D Camera block. That way, the Unreal Engine 3D visualization environment prepares the data before the Simulation 3D Camera block receives it. To check the block execution order, right-click each block and then click Properties. On the General tab, confirm these Priority settings:

- Simulation 3D Scene Configuration: 0
- Simulation 3D Camera: 1

For more information about execution order, see How Unreal Engine Simulation for Robots Works.

Ports

Input

Output

Parameters

Tips

To visualize the camera images that are output by the Image port, use a Video Viewer (Computer Vision Toolbox) or To Video Display (Computer Vision Toolbox) block.

Because the Unreal Engine can take a long time to start between simulations, consider logging the signals that the sensors output. You can then use this data to develop perception algorithms in MATLAB. See Mark Signals for Logging (Simulink).

Algorithms

References

[1] Bouguet, J. Y. Camera Calibration Toolbox for Matlab. http://www.vision.caltech.edu/bouguetj/calib_doc

[2] Zhang, Z. "A Flexible New Technique for Camera Calibration." IEEE Transactions on Pattern Analysis and Machine Intelligence. Vol. 22, No. 11, 2000, pp. 1330–1334.

[3] Heikkila, J., and O. Silven. "A Four-step Camera Calibration Procedure with Implicit Image Correction." IEEE International Conference on Computer Vision and Pattern Recognition. 1997.

Version History

Introduced in R2024a

See Also

Blocks

Apps

- Camera Calibrator (Computer Vision Toolbox)

Objects

- cameraIntrinsics (Computer Vision Toolbox)

- sim3d.sensors.Camera (Simulink 3D Animation)

Topics

- What Is Camera Calibration? (Computer Vision Toolbox)

- Fisheye Calibration Basics (Computer Vision Toolbox)

- Depth Estimation from Stereo Video (Computer Vision Toolbox)

- Semantic Segmentation Using Deep Learning (Computer Vision Toolbox)

- Prepare Camera and Capture Images for Camera Calibration (Computer Vision Toolbox)

- Evaluating the Accuracy of Single Camera Calibration (Computer Vision Toolbox)