fitcdiscr

Fit discriminant analysis classifier

Syntax

Description

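Mdl = fitcdiscr(Tbl,ResponseVarName) returns a fitted discriminant analysis model based on the input variables (predictors) contained in the table Tbl and the output (response or labels) contained in ResponseVarName.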

Mdl = fitcdiscr(___,Name=Value)

[Mdl,AggregateOptimizationResults] = fitcdiscr(___) also returns AggregateOptimizationResults, which contains hyperparameter optimization results when you specify the OptimizeHyperparameters and HyperparameterOptimizationOptions name-value arguments. You must also specify the ConstraintType and ConstraintBounds options of HyperparameterOptimizationOptions. You can use this syntax to optimize on compact model size instead of cross-validation loss, and to perform a set of multiple optimization problems that have the same options but different constraint bounds.

Note

For a list of supported syntaxes when the input variables are tall arrays, see Tall Arrays.

Examples

Load Fisher's iris data set.

load fisheriris

Train a discriminant analysis model using the entire data set.

Mdl = fitcdiscr(meas,species)

Mdl =

ClassificationDiscriminant

ResponseName: 'Y'

CategoricalPredictors: []

ClassNames: {'setosa' 'versicolor' 'virginica'}

ScoreTransform: 'none'

NumObservations: 150

DiscrimType: 'linear'

Mu: [3×4 double]

Coeffs: [3×3 struct]

Properties, Methods

Mdl is a ClassificationDiscriminant model. To access its properties, use dot notation. For example, display the group means for each predictor.

Mdl.Mu

ans = 3×4

5.0060 3.4280 1.4620 0.2460

5.9360 2.7700 4.2600 1.3260

6.5880 2.9740 5.5520 2.0260

To predict labels for new observations, pass Mdl and predictor data to predict.
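As a minimal sketch of that last step, the following code trains the same model and classifies one new observation. The variable Xnew is a hypothetical observation (the column means of meas), chosen only for illustration.

```matlab
load fisheriris
Mdl = fitcdiscr(meas,species);

% Hypothetical new observation: the mean of each predictor column (1-by-4)
Xnew = mean(meas);

% predict returns the label and, optionally, the posterior probabilities
[label,score] = predict(Mdl,Xnew)
```

The columns of score follow the class order in Mdl.ClassNames.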

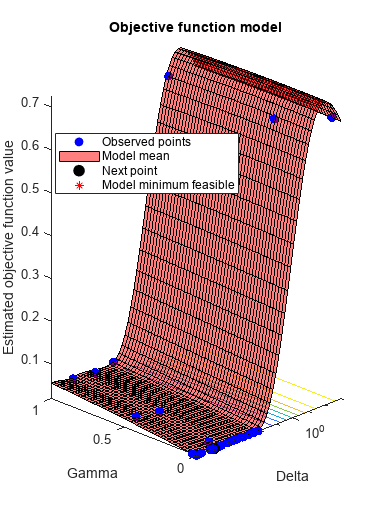

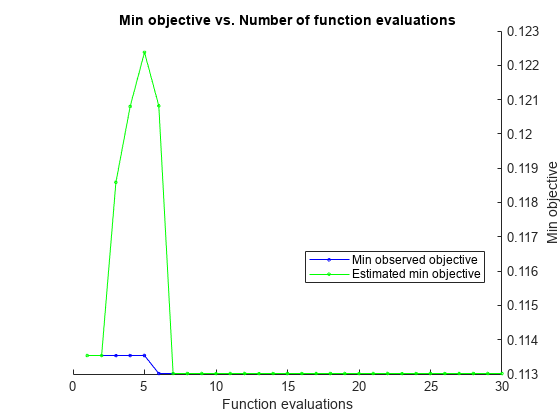

This example shows how to optimize hyperparameters automatically using fitcdiscr. The example uses Fisher's iris data.

Load the data.

load fisheriris

Find hyperparameters that minimize five-fold cross-validation loss by using automatic hyperparameter optimization.

For reproducibility, set the random seed and use the 'expected-improvement-plus' acquisition function.

rng(1)
Mdl = fitcdiscr(meas,species,'OptimizeHyperparameters','auto',...
    'HyperparameterOptimizationOptions',...
    struct('AcquisitionFunctionName','expected-improvement-plus'))

|=====================================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | Delta | Gamma |

| | result | | runtime | (observed) | (estim.) | | |

|=====================================================================================================|

| 1 | Best | 0.66667 | 0.39142 | 0.66667 | 0.66667 | 13.261 | 0.25218 |

| 2 | Best | 0.02 | 0.22086 | 0.02 | 0.064227 | 2.7404e-05 | 0.073264 |

| 3 | Accept | 0.04 | 0.12791 | 0.02 | 0.020084 | 3.2455e-06 | 0.46974 |

| 4 | Accept | 0.66667 | 0.066479 | 0.02 | 0.020118 | 14.879 | 0.98622 |

| 5 | Accept | 0.046667 | 0.043152 | 0.02 | 0.019907 | 0.00031449 | 0.97362 |

| 6 | Accept | 0.04 | 0.040999 | 0.02 | 0.028438 | 4.5092e-05 | 0.43616 |

| 7 | Accept | 0.046667 | 0.026823 | 0.02 | 0.031424 | 2.0973e-05 | 0.9942 |

| 8 | Accept | 0.02 | 0.037954 | 0.02 | 0.022424 | 1.0554e-06 | 0.0024286 |

| 9 | Accept | 0.02 | 0.031919 | 0.02 | 0.021105 | 1.1232e-06 | 0.00014039 |

| 10 | Accept | 0.02 | 0.028462 | 0.02 | 0.020948 | 0.00011837 | 0.0032994 |

| 11 | Accept | 0.02 | 0.045765 | 0.02 | 0.020172 | 1.0292e-06 | 0.027725 |

| 12 | Accept | 0.02 | 0.038594 | 0.02 | 0.020105 | 9.7792e-05 | 0.0022817 |

| 13 | Accept | 0.02 | 0.026919 | 0.02 | 0.020038 | 0.00036014 | 0.0015136 |

| 14 | Accept | 0.02 | 0.029418 | 0.02 | 0.019597 | 0.00021059 | 0.0044789 |

| 15 | Accept | 0.02 | 0.034802 | 0.02 | 0.019461 | 1.1911e-05 | 0.0010135 |

| 16 | Accept | 0.02 | 0.02442 | 0.02 | 0.01993 | 0.0017896 | 0.00071115 |

| 17 | Accept | 0.02 | 0.025781 | 0.02 | 0.019551 | 0.00073745 | 0.0066899 |

| 18 | Accept | 0.02 | 0.040556 | 0.02 | 0.019776 | 0.00079304 | 0.00011509 |

| 19 | Accept | 0.02 | 0.027135 | 0.02 | 0.019678 | 0.007292 | 0.0007911 |

| 20 | Accept | 0.046667 | 0.028511 | 0.02 | 0.019785 | 0.0074408 | 0.99945 |

|=====================================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | Delta | Gamma |

| | result | | runtime | (observed) | (estim.) | | |

|=====================================================================================================|

| 21 | Accept | 0.02 | 0.025091 | 0.02 | 0.019043 | 0.0036004 | 0.0024547 |

| 22 | Accept | 0.02 | 0.02548 | 0.02 | 0.019755 | 2.5238e-05 | 0.0015542 |

| 23 | Accept | 0.02 | 0.025436 | 0.02 | 0.0191 | 1.5478e-05 | 0.0026899 |

| 24 | Accept | 0.02 | 0.026248 | 0.02 | 0.019081 | 0.0040557 | 0.00046815 |

| 25 | Accept | 0.02 | 0.040511 | 0.02 | 0.019333 | 2.959e-05 | 0.0011358 |

| 26 | Accept | 0.02 | 0.03883 | 0.02 | 0.019369 | 2.3111e-06 | 0.0029205 |

| 27 | Accept | 0.02 | 0.083116 | 0.02 | 0.019455 | 3.8898e-05 | 0.0011665 |

| 28 | Accept | 0.02 | 0.027187 | 0.02 | 0.019449 | 0.0035925 | 0.0020278 |

| 29 | Accept | 0.66667 | 0.027722 | 0.02 | 0.019479 | 998.93 | 0.064276 |

| 30 | Accept | 0.02 | 0.024449 | 0.02 | 0.01947 | 8.1557e-06 | 0.0008004 |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 17.608 seconds

Total objective function evaluation time: 1.682

Best observed feasible point:

Delta Gamma

__________ ________

2.7404e-05 0.073264

Observed objective function value = 0.02

Estimated objective function value = 0.022693

Function evaluation time = 0.22086

Best estimated feasible point (according to models):

Delta Gamma

__________ _________

2.5238e-05 0.0015542

Estimated objective function value = 0.01947

Estimated function evaluation time = 0.03347

Mdl =

ClassificationDiscriminant

ResponseName: 'Y'

CategoricalPredictors: []

ClassNames: {'setosa' 'versicolor' 'virginica'}

ScoreTransform: 'none'

NumObservations: 150

HyperparameterOptimizationResults: [1×1 classreg.learning.paramoptim.SupervisedLearningBayesianOptimization]

DiscrimType: 'linear'

Mu: [3×4 double]

Coeffs: [3×3 struct]

Properties, Methods

The fit achieves about 2% loss for the default 5-fold cross validation.
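The optimization results stay attached to the returned model. The sketch below repeats the optimization quietly and reads two properties of the results object. The Verbose and ShowPlots option values are choices made here to suppress the iteration table and plots, not part of the example above, and the exact numbers can vary by release and platform.

```matlab
load fisheriris
rng(1)

% Verbose=0 and ShowPlots=false suppress the iteration table and plots
opts = struct('AcquisitionFunctionName','expected-improvement-plus', ...
    'Verbose',0,'ShowPlots',false);
Mdl = fitcdiscr(meas,species,'OptimizeHyperparameters','auto', ...
    'HyperparameterOptimizationOptions',opts);

results = Mdl.HyperparameterOptimizationResults;
bestObserved = results.XAtMinObjective   % table with the Delta and Gamma values
minLoss = results.MinObjective           % smallest observed cross-validation loss
```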

This example shows how to optimize hyperparameters of a discriminant analysis model automatically using a tall array. The sample data set airlinesmall.csv is a large data set that contains a tabular file of airline flight data. This example creates a tall table containing the data and uses it to run the optimization procedure.

When you perform calculations on tall arrays, MATLAB® uses either a parallel pool (default if you have Parallel Computing Toolbox™) or the local MATLAB session. If you want to run the example using the local MATLAB session when you have Parallel Computing Toolbox, you can change the global execution environment by using the mapreducer function.

Create a datastore that references the folder location with the data. Select a subset of the variables to work with, and treat NA values as missing data so that datastore replaces them with NaN values. Create a tall table that contains the data in the datastore.

ds = datastore("airlinesmall.csv");
ds.SelectedVariableNames = ["Month","DayofMonth","DayOfWeek", ...
    "DepTime","ArrDelay","Distance","DepDelay"];
ds.TreatAsMissing = "NA";
tt = tall(ds) % Tall table

Starting parallel pool (parpool) using the 'Processes' profile ...

06-Nov-2025 13:50:13: Job Queued. Waiting for parallel pool job with ID 1 to start ...

06-Nov-2025 13:51:13: Job Queued. Waiting for parallel pool job with ID 1 to start ...

06-Nov-2025 13:52:13: Job Queued. Waiting for parallel pool job with ID 1 to start ...

06-Nov-2025 13:53:14: Job Queued. Waiting for parallel pool job with ID 1 to start ...

Connected to parallel pool with 6 workers.

tt =

M×7 tall table

Month DayofMonth DayOfWeek DepTime ArrDelay Distance DepDelay

_____ __________ _________ _______ ________ ________ ________

10 21 3 642 8 308 12

10 26 1 1021 8 296 1

10 23 5 2055 21 480 20

10 23 5 1332 13 296 12

10 22 4 629 4 373 -1

10 28 3 1446 59 308 63

10 8 4 928 3 447 -2

10 10 6 859 11 954 -1

: : : : : : :

: : : : : : :

Determine the flights that are late by more than 10 minutes by defining a logical variable that is true for a late flight. This variable contains the class labels. A preview of this variable includes the first few rows.

Y = tt.DepDelay > 10 % Class labels

Y =

M×1 tall logical array

1
0
1
1
0
1
0
0
:
:

Create a tall array for the predictor data.

X = tt{:,1:end-1} % Predictor data

X =

M×6 tall double matrix

10 21 3 642 8 308

10 26 1 1021 8 296

10 23 5 2055 21 480

10 23 5 1332 13 296

10 22 4 629 4 373

10 28 3 1446 59 308

10 8 4 928 3 447

10 10 6 859 11 954

: : : : : :

: : : : : :

Remove rows in X and Y that contain missing data.

R = rmmissing([X Y]); % Data with missing entries removed

X = R(:,1:end-1);

Y = R(:,end);

Standardize the predictor variables.

Z = zscore(X);

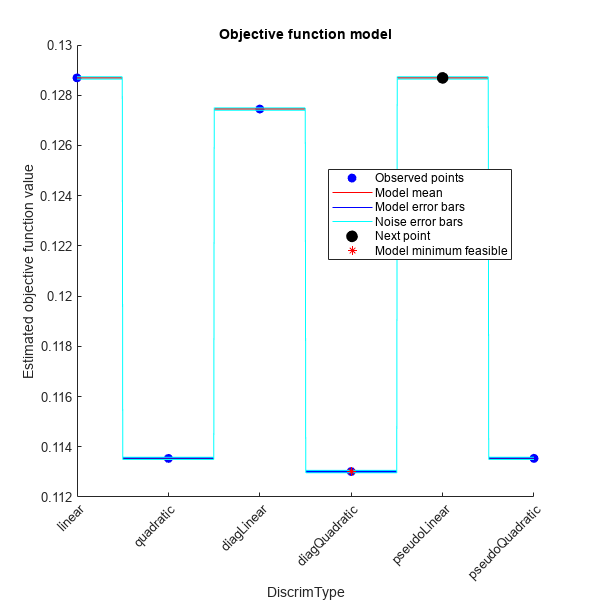

Optimize hyperparameters automatically using the OptimizeHyperparameters name-value argument. Note that when you use tall arrays, DiscrimType is the only hyperparameter you can optimize, regardless of whether you specify "auto" or "all". Find the optimal DiscrimType value that minimizes holdout cross-validation loss. For reproducibility, use the "expected-improvement-plus" acquisition function and set the seeds of the random number generators using rng and tallrng. The results can vary depending on the number of workers and the execution environment for the tall arrays. For details, see Control Where Your Code Runs.

rng("default")
tallrng("default")
[Mdl,FitInfo,HyperparameterOptimizationResults] = fitcdiscr(Z,Y, ...
    OptimizeHyperparameters="auto", ...
    HyperparameterOptimizationOptions=struct(Holdout=0.3, ...
    AcquisitionFunctionName="expected-improvement-plus"))

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 2: Completed in 6 sec

- Pass 2 of 2: Completed in 7.3 sec

Evaluation completed in 22 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 2.5 sec

Evaluation completed in 2.7 sec

|======================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | DiscrimType |

| | result | | runtime | (observed) | (estim.) | |

|======================================================================================|

| 1 | Best | 0.11354 | 29.665 | 0.11354 | 0.11354 | quadratic |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.3 sec

Evaluation completed in 2.4 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.84 sec

Evaluation completed in 0.94 sec

| 2 | Accept | 0.11354 | 5.0412 | 0.11354 | 0.11354 | pseudoQuadra |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1 sec

Evaluation completed in 2.2 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.4 sec

Evaluation completed in 1.5 sec

| 3 | Accept | 0.12869 | 5.2654 | 0.11354 | 0.11859 | pseudoLinear |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.3 sec

Evaluation completed in 1.9 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.74 sec

Evaluation completed in 0.85 sec

| 4 | Accept | 0.12745 | 3.8881 | 0.11354 | 0.1208 | diagLinear |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.88 sec

Evaluation completed in 1.5 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.74 sec

Evaluation completed in 0.84 sec

| 5 | Accept | 0.12869 | 3.5151 | 0.11354 | 0.12238 | linear |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.85 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.78 sec

Evaluation completed in 0.87 sec

| 6 | Best | 0.11301 | 3.1481 | 0.11301 | 0.12082 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.85 sec

Evaluation completed in 1.4 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.1 sec

Evaluation completed in 1.2 sec

| 7 | Accept | 0.11301 | 3.5265 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.86 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.74 sec

Evaluation completed in 0.83 sec

| 8 | Accept | 0.11301 | 3.0978 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.9 sec

Evaluation completed in 1.4 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.73 sec

Evaluation completed in 0.81 sec

| 9 | Accept | 0.11301 | 3.144 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.1 sec

Evaluation completed in 2.1 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.73 sec

Evaluation completed in 0.84 sec

| 10 | Accept | 0.11301 | 3.823 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.3 sec

Evaluation completed in 1.8 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.72 sec

Evaluation completed in 0.8 sec

| 11 | Accept | 0.11301 | 3.4762 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.92 sec

Evaluation completed in 1.4 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.84 sec

Evaluation completed in 0.94 sec

| 12 | Accept | 0.11301 | 3.2704 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.83 sec

Evaluation completed in 1.4 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.71 sec

Evaluation completed in 0.81 sec

| 13 | Accept | 0.11301 | 3.1015 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.83 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.2 sec

Evaluation completed in 1.3 sec

| 14 | Accept | 0.11301 | 3.4993 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.96 sec

Evaluation completed in 1.4 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.72 sec

Evaluation completed in 0.82 sec

| 15 | Accept | 0.11301 | 3.1564 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.82 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.71 sec

Evaluation completed in 0.8 sec

| 16 | Accept | 0.11354 | 2.9859 | 0.11301 | 0.11301 | pseudoQuadra |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.83 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.71 sec

Evaluation completed in 0.8 sec

| 17 | Accept | 0.11354 | 2.9703 | 0.11301 | 0.11301 | quadratic |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.3 sec

Evaluation completed in 1.8 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.73 sec

Evaluation completed in 0.82 sec

| 18 | Accept | 0.11301 | 3.4992 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.3 sec

Evaluation completed in 1.8 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.71 sec

Evaluation completed in 0.8 sec

| 19 | Accept | 0.11301 | 3.5022 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.83 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.73 sec

Evaluation completed in 0.82 sec

| 20 | Accept | 0.11301 | 3.019 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.83 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.74 sec

Evaluation completed in 0.82 sec

|======================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | DiscrimType |

| | result | | runtime | (observed) | (estim.) | |

|======================================================================================|

| 21 | Accept | 0.11301 | 3.016 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.92 sec

Evaluation completed in 1.4 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.7 sec

Evaluation completed in 0.79 sec

| 22 | Accept | 0.11301 | 3.1365 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.93 sec

Evaluation completed in 1.4 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.76 sec

Evaluation completed in 0.85 sec

| 23 | Accept | 0.11354 | 3.1681 | 0.11301 | 0.11301 | quadratic |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.84 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.72 sec

Evaluation completed in 0.81 sec

| 24 | Accept | 0.11301 | 2.9795 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.8 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.8 sec

Evaluation completed in 1.2 sec

| 25 | Accept | 0.11301 | 3.4289 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.1 sec

Evaluation completed in 1.6 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.68 sec

Evaluation completed in 0.78 sec

| 26 | Accept | 0.11301 | 3.2509 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.83 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.71 sec

Evaluation completed in 0.8 sec

| 27 | Accept | 0.11354 | 3.0354 | 0.11301 | 0.11301 | pseudoQuadra |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.81 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.74 sec

Evaluation completed in 0.82 sec

| 28 | Accept | 0.11301 | 2.9747 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 1.9 sec

Evaluation completed in 2.4 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.71 sec

Evaluation completed in 0.8 sec

| 29 | Accept | 0.11301 | 4.0534 | 0.11301 | 0.11301 | diagQuadrati |

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.8 sec

Evaluation completed in 1.3 sec

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.7 sec

Evaluation completed in 0.79 sec

| 30 | Accept | 0.11301 | 2.9555 | 0.11301 | 0.11301 | diagQuadrati |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 155.2869 seconds

Total objective function evaluation time: 128.5936

Best observed feasible point:

DiscrimType

_____________

diagQuadratic

Observed objective function value = 0.11301

Estimated objective function value = 0.11301

Function evaluation time = 3.1481

Best estimated feasible point (according to models):

DiscrimType

_____________

diagQuadratic

Estimated objective function value = 0.11301

Estimated function evaluation time = 3.3967

Evaluating tall expression using the Parallel Pool 'Processes':

- Pass 1 of 1: Completed in 0.69 sec

Evaluation completed in 1.2 sec

Mdl =

CompactClassificationDiscriminant

PredictorNames: {'x1' 'x2' 'x3' 'x4' 'x5' 'x6'}

ResponseName: 'Y'

CategoricalPredictors: []

ClassNames: [0 1]

ScoreTransform: 'none'

DiscrimType: 'diagQuadratic'

Mu: [2×6 double]

Coeffs: [2×2 struct]

Properties, Methods

FitInfo = struct with no fields.

HyperparameterOptimizationResults =

SupervisedLearningBayesianOptimization

ObjectiveFcn: @createObjFcn/tallObjFcn

VariableDescriptions: [1×1 optimizableVariable]

Options: [1×1 struct]

MinObjective: 0.1130

XAtMinObjective: [1×1 table]

MinEstimatedObjective: 0.1130

XAtMinEstimatedObjective: [1×1 table]

NumObjectiveEvaluations: 30

TotalElapsedTime: 155.2869

NextPoint: [1×1 table]

XTrace: [30×1 table]

ObjectiveTrace: [30×1 double]

LossFun: 'mincost'

LossTrace: [30×1 double]

ConstraintsTrace: []

UserDataTrace: {30×1 cell}

ObjectiveEvaluationTimeTrace: [30×1 double]

IterationTimeTrace: [30×1 double]

ErrorTrace: [30×1 double]

FeasibilityTrace: [30×1 logical]

FeasibilityProbabilityTrace: [30×1 double]

IndexOfMinimumTrace: [30×1 double]

ObjectiveMinimumTrace: [30×1 double]

EstimatedObjectiveMinimumTrace: [30×1 double]

Input Arguments

Sample data used to train the model, specified as a table. Each row of

Tbl corresponds to one observation, and each column

corresponds to one predictor variable. Categorical predictor variables are

not supported. Optionally, Tbl can contain one additional

column for the response variable, which can be categorical. Multicolumn

variables and cell arrays other than cell arrays of character vectors are

not allowed.

If Tbl contains the response variable, and you want to use all remaining variables in Tbl as predictors, then specify the response variable by using ResponseVarName.

If Tbl contains the response variable, and you want to use only a subset of the remaining variables in Tbl as predictors, then specify a formula by using formula.

If Tbl does not contain the response variable, then specify a response variable by using Y. The length of the response variable and the number of rows in Tbl must be equal.
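The three ways of supplying table data can be sketched as follows. The table and its variable names are built here from the fisheriris data purely for illustration.

```matlab
load fisheriris

% Hypothetical variable names, chosen for readability
Tbl = array2table(meas, ...
    'VariableNames',{'SepalLength','SepalWidth','PetalLength','PetalWidth'});
Tbl.Species = species;

% 1) Response stored in Tbl; all other variables are predictors
Mdl1 = fitcdiscr(Tbl,"Species");

% 2) Response stored in Tbl; a formula selects a subset of the predictors
Mdl2 = fitcdiscr(Tbl,"Species~PetalLength+PetalWidth");

% 3) Response supplied separately; the table holds predictors only
Mdl3 = fitcdiscr(Tbl(:,1:4),species);
```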

Response variable name, specified as the name of a variable in

Tbl.

You must specify ResponseVarName as a character vector or string scalar.

For example, if the response variable Y is

stored as Tbl.Y, then specify it as

"Y". Otherwise, the software

treats all columns of Tbl, including

Y, as predictors when training

the model.

The response variable must be a categorical, character, or string array; a logical or numeric

vector; or a cell array of character vectors. If

Y is a character array, then each

element of the response variable must correspond to one row of

the array.

A good practice is to specify the order of the classes by using the

ClassNames name-value

argument.

Data Types: char | string

Explanatory model of the response variable and a subset of the predictor variables,

specified as a character vector or string scalar in the form

"Y~x1+x2+x3". In this form, Y represents the

response variable, and x1, x2, and

x3 represent the predictor variables.

To specify a subset of variables in Tbl as predictors for

training the model, use a formula. If you specify a formula, then the software does not

use any variables in Tbl that do not appear in

formula.

The variable names in the formula must be both variable names in Tbl

(Tbl.Properties.VariableNames) and valid MATLAB® identifiers. You can verify the variable names in Tbl by

using the isvarname function. If the variable names

are not valid, then you can convert them by using the matlab.lang.makeValidName function.

Data Types: char | string
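A short sketch of the name check described above. The names are hypothetical; the first contains a space, so it is not a valid MATLAB identifier.

```matlab
% Hypothetical variable names; the first one is not a valid identifier
names = ["Sepal Length","PetalWidth"];

isValid = arrayfun(@isvarname,names)        % one logical value per name
fixed = matlab.lang.makeValidName(names)    % valid replacements for all names
```

If you rename table variables this way, use the converted names in the formula as well.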

Class labels, specified as a categorical, character, or string array, a logical or numeric

vector, or a cell array of character vectors. Each row of Y

represents the classification of the corresponding row of X.

The software considers NaN, '' (empty character vector),

"" (empty string), <missing>, and

<undefined> values in Y to be missing

values. Consequently, the software does not train using observations with a missing

response.

Data Types: categorical | char | string | logical | single | double | cell

Predictor values, specified as a numeric matrix. Each column of

X represents one variable, and each row represents

one observation. Categorical predictor variables are not supported.

fitcdiscr considers NaN values in

X as missing values. fitcdiscr

does not use observations with missing values for X in

the fit.

Data Types: single | double

Name-Value Arguments

Specify optional pairs of arguments as

Name1=Value1,...,NameN=ValueN, where Name is

the argument name and Value is the corresponding value.

Name-value arguments must appear after other arguments, but the order of the

pairs does not matter.

Before R2021a, use commas to separate each name and value, and enclose

Name in quotes.

Example: 'DiscrimType','quadratic','SaveMemory','on' specifies a

quadratic discriminant classifier and does not store the covariance matrix in the

output object.
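The two calling conventions are interchangeable. A minimal sketch using the fisheriris data, which is not part of the syntax description itself:

```matlab
load fisheriris

% R2021a and later: Name=Value syntax
Mdl1 = fitcdiscr(meas,species,DiscrimType="quadratic",SaveMemory="on");

% Any release: comma-separated pairs with each name in quotes
Mdl2 = fitcdiscr(meas,species,'DiscrimType','quadratic','SaveMemory','on');

% Both calls request the same discriminant type
sameType = isequal(Mdl1.DiscrimType,Mdl2.DiscrimType)
```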

Note

You cannot use any cross-validation name-value argument together with the

OptimizeHyperparameters name-value argument. You can modify the

cross-validation for OptimizeHyperparameters only by using the

HyperparameterOptimizationOptions name-value argument.

Model Parameters

Names of classes to use for training, specified as a categorical, character, or string

array; a logical or numeric vector; or a cell array of character vectors.

ClassNames must have the same data type as the response variable

in Tbl or Y.

If ClassNames is a character array, then each element must correspond to one row of the array.

Use ClassNames to:

Specify the order of the classes during training.

Specify the order of any input or output argument dimension that corresponds to the class order. For example, use ClassNames to specify the order of the dimensions of Cost or the column order of classification scores returned by predict.

Select a subset of classes for training. For example, suppose that the set of all distinct class names in Y is ["a","b","c"]. To train the model using observations from classes "a" and "c" only, specify ClassNames=["a","c"].

The default value for ClassNames is the set of all distinct class names in the response variable in Tbl or Y.
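As an illustration of both uses, this sketch trains on two of the three iris classes and fixes their order. The choice of classes is arbitrary.

```matlab
load fisheriris

% species is a cell array of character vectors, so ClassNames is one too
Mdl = fitcdiscr(meas,species,ClassNames={'virginica','setosa'});

Mdl.ClassNames                        % classes in the order given above
[~,score] = predict(Mdl,meas(1,:));
size(score)                           % one score column per class in ClassNames
```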

Example: ClassNames=["b","g"]

Data Types: categorical | char | string | logical | single | double | cell

Cost of misclassification of a point, specified as one of the following:

Square matrix, where Cost(i,j) is the cost of classifying a point into class j if its true class is i (that is, the rows correspond to the true class and the columns correspond to the predicted class). To specify the class order for the corresponding rows and columns of Cost, additionally specify the ClassNames name-value argument.

Structure S having two fields: S.ClassNames containing the group names as a variable of the same type as Y, and S.ClassificationCosts containing the cost matrix.

The default is Cost(i,j)=1 if i~=j,

and Cost(i,j)=0 if i=j.

Data Types: single | double | struct
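A sketch of the square-matrix form, with ClassNames fixing the row and column order. The cost values are hypothetical: they make it five times as costly to predict versicolor for a flower that is truly virginica.

```matlab
load fisheriris
classes = {'setosa','versicolor','virginica'};

% Rows are true classes and columns are predicted classes, both in the
% order given by classes. C(3,2) is the cost of predicting versicolor
% when the true class is virginica.
C = [0 1 1
     1 0 1
     1 5 0];

Mdl = fitcdiscr(meas,species,ClassNames=classes,Cost=C);
Mdl.Cost
```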

Linear coefficient threshold, specified as the comma-separated

pair consisting of 'Delta' and a nonnegative scalar

value. If a coefficient of Mdl has magnitude smaller

than Delta, Mdl sets this coefficient

to 0, and you can eliminate the corresponding predictor

from the model. Set Delta to a higher value to

eliminate more predictors.

Delta must be 0 for quadratic

discriminant models.

Data Types: single | double
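One way to pick a Delta value is to look at the DeltaPredictor property of a model trained with the default threshold. This is a sketch under the assumption that DeltaPredictor(i) is the threshold above which the coefficients of predictor i are set to 0; using the median as the threshold is an arbitrary choice for illustration.

```matlab
load fisheriris
Mdl = fitcdiscr(meas,species);       % linear discriminant, default Delta

% One threshold per predictor
thresholds = Mdl.DeltaPredictor

% Hypothetical choice: use the median threshold as Delta
d = median(thresholds);
MdlSparse = fitcdiscr(meas,species,Delta=d);
MdlSparse.Delta
```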

Discriminant type, specified as the comma-separated pair consisting of

'DiscrimType' and a character vector or string scalar in this

table.

| Value | Description | Predictor Covariance Treatment |
|---|---|---|
| 'linear' | Regularized linear discriminant analysis (LDA) | All classes have the same covariance matrix. The Gamma name-value argument sets the amount of regularization. |
| 'diaglinear' | LDA | All classes have the same, diagonal covariance matrix. |
| 'pseudolinear' | LDA | All classes have the same covariance matrix. The software inverts the covariance matrix using the pseudo inverse. |
| 'quadratic' | Quadratic discriminant analysis (QDA) | The covariance matrices can vary among classes. |
| 'diagquadratic' | QDA | The covariance matrices are diagonal and can vary among classes. |
| 'pseudoquadratic' | QDA | The covariance matrices can vary among classes. The software inverts the covariance matrix using the pseudo inverse. |

Note

To use regularization, you must specify 'linear'.

To specify the amount of regularization, use the Gamma name-value

pair argument.

Example: 'DiscrimType','quadratic'
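To get a rough feel for how the discriminant types differ on a given data set, you can compare their training-set losses. The sketch below uses resubLoss on the fisheriris data; resubstitution loss is optimistic, so treat it as a quick comparison rather than an estimate of generalization error.

```matlab
load fisheriris
types = ["linear","diaglinear","quadratic","diagquadratic"];
err = zeros(size(types));

for k = 1:numel(types)
    Mdl = fitcdiscr(meas,species,DiscrimType=types(k));
    err(k) = resubLoss(Mdl);   % classification loss on the training data
end

table(types',err','VariableNames',{'DiscrimType','ResubLoss'})
```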

Coeffs property flag, specified as the comma-separated pair consisting of

'FillCoeffs' and 'on' or

'off'. Setting the flag to 'on' populates the

Coeffs property in the classifier object. This can be

computationally intensive, especially when cross-validating. The default is

'on', unless you specify a cross-validation name-value pair, in

which case the flag is set to 'off' by default.

Example: 'FillCoeffs','off'
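A brief sketch of the effect of the flag, assuming that Coeffs is left empty when FillCoeffs is 'off':

```matlab
load fisheriris
MdlOn  = fitcdiscr(meas,species);                     % FillCoeffs is 'on' by default
MdlOff = fitcdiscr(meas,species,FillCoeffs="off");

size(MdlOn.Coeffs)       % struct array with one entry per pair of classes
isempty(MdlOff.Coeffs)   % Coeffs is not populated
```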

Amount of regularization to apply when estimating the covariance

matrix of the predictors, specified as the comma-separated pair consisting

of 'Gamma' and a scalar value in the interval [0,1]. Gamma provides

finer control over the covariance matrix structure than DiscrimType.

If you specify 0, then the software does not use regularization to adjust the covariance matrix. That is, the software estimates and uses the unrestricted, empirical covariance matrix.

For linear discriminant analysis, if the empirical covariance matrix is singular, then the software automatically applies the minimal regularization required to invert the covariance matrix. You can display the chosen regularization amount by entering Mdl.Gamma at the command line.

For quadratic discriminant analysis, if at least one class has an empirical covariance matrix that is singular, then the software throws an error.

If you specify a value in the interval (0,1), then you must implement linear discriminant analysis, otherwise the software throws an error. Consequently, the software sets DiscrimType to 'linear'.

If you specify 1, then the software uses maximum regularization for covariance matrix estimation. That is, the software restricts the covariance matrix to be diagonal. Alternatively, you can set DiscrimType to 'diagLinear' or 'diagQuadratic' for diagonal covariance matrices.

Example: 'Gamma',1

Data Types: single | double
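A minimal sketch of the first two cases on the fisheriris data. The value 0.5 is an arbitrary choice inside the interval (0,1).

```matlab
load fisheriris
Mdl0    = fitcdiscr(meas,species,Gamma=0);     % no regularization
MdlHalf = fitcdiscr(meas,species,Gamma=0.5);   % partial regularization, LDA only

Mdl0.Gamma            % stays 0 unless the covariance matrix is singular
MdlHalf.Gamma
MdlHalf.DiscrimType
```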

Predictor variable names, specified as a string array of unique names or cell array of unique

character vectors. The functionality of PredictorNames depends on the

way you supply the training data.

- If you supply X and Y, then you can use PredictorNames to assign names to the predictor variables in X.
  - The order of the names in PredictorNames must correspond to the column order of X. That is, PredictorNames{1} is the name of X(:,1), PredictorNames{2} is the name of X(:,2), and so on. Also, size(X,2) and numel(PredictorNames) must be equal.
  - By default, PredictorNames is {'x1','x2',...}.
- If you supply Tbl, then you can use PredictorNames to choose which predictor variables to use in training. That is, fitcdiscr uses only the predictor variables in PredictorNames and the response variable during training.
  - PredictorNames must be a subset of Tbl.Properties.VariableNames and cannot include the name of the response variable.
  - By default, PredictorNames contains the names of all predictor variables.
  - A good practice is to specify the predictors for training using either PredictorNames or formula, but not both.

Example: PredictorNames=["SepalLength","SepalWidth","PetalLength","PetalWidth"]

Data Types: string | cell
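As a rough sketch of both uses of PredictorNames, the following names the columns of a numeric matrix and then selects a subset of table variables. It assumes the Fisher iris data and the Name=Value syntax (R2021a or later); the table Tbl and its variable names are created here only for illustration.

```matlab
load fisheriris
names = ["SepalLength","SepalWidth","PetalLength","PetalWidth"];

% Matrix input: one name per column of meas, in column order.
Mdl = fitcdiscr(meas,species,PredictorNames=names);
disp(Mdl.PredictorNames)

% Table input: PredictorNames selects which table variables to train on.
Tbl = array2table(meas,VariableNames=names);
Tbl.Species = species;
MdlSub = fitcdiscr(Tbl,"Species",PredictorNames=["PetalLength","PetalWidth"]);
disp(MdlSub.PredictorNames)
```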

Prior probabilities for each class, specified as a value in this table.

| Value | Description |
|---|---|
| "empirical" | The class prior probabilities are the class relative frequencies in Y. |
| "uniform" | All class prior probabilities are equal to 1/K, where K is the number of classes. |
| numeric vector | Each element is a class prior probability. Order the elements according to Mdl.ClassNames or specify the order using the ClassNames name-value pair argument. The software normalizes the elements such that they sum to 1. |
| structure | A structure |

If you set values for both Weights and Prior, the

weights are renormalized to add up to the value of

the prior probability in the respective

class.

Example: Prior="uniform"

Data Types: char | string | single | double | struct
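To illustrate the numeric-vector form of Prior, the sketch below passes unnormalized values together with an explicit class order. It assumes the Fisher iris data; the prior values [1 2 1] are arbitrary and chosen only to show the normalization.

```matlab
load fisheriris
classOrder = {'setosa','versicolor','virginica'};

% The elements of Prior follow the order given in ClassNames.
% The software rescales the elements so that they sum to 1.
Mdl = fitcdiscr(meas,species,ClassNames=classOrder,Prior=[1 2 1]);

disp(Mdl.Prior)      % normalized prior probabilities stored in the model
```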

Response variable name, specified as a character vector or string scalar.

- If you supply Y, then you can use ResponseName to specify a name for the response variable.
- If you supply ResponseVarName or formula, then you cannot use ResponseName.

Example: ResponseName="response"

Data Types: char | string

Flag to save covariance matrix, specified as the comma-separated

pair consisting of 'SaveMemory' and either 'on' or 'off'.

If you specify 'on', then fitcdiscr does

not store the full covariance matrix, but instead stores enough information

to compute the matrix. The predict method computes the full covariance

matrix for prediction, and does not store the matrix. If you specify 'off',

then fitcdiscr computes and stores the full covariance

matrix in Mdl.

Specify SaveMemory as 'on' when

the input matrix contains thousands of predictors.

Example: 'SaveMemory','on'

Score transformation, specified as a character vector, string scalar, or function handle.

This table summarizes the available character vectors and string scalars.

| Value | Description |
|---|---|
| "doublelogit" | 1/(1 + e^(–2x)) |
| "invlogit" | log(x / (1 – x)) |
| "ismax" | Sets the score for the class with the largest score to 1, and sets the scores for all other classes to 0 |
| "logit" | 1/(1 + e^(–x)) |
| "none" or "identity" | x (no transformation) |
| "sign" | –1 for x < 0; 0 for x = 0; 1 for x > 0 |
| "symmetric" | 2x – 1 |
| "symmetricismax" | Sets the score for the class with the largest score to 1, and sets the scores for all other classes to –1 |
| "symmetriclogit" | 2/(1 + e^(–x)) – 1 |

For a MATLAB function or a function you define, use its function handle for the score transform. The function handle must accept a matrix (the original scores) and return a matrix of the same size (the transformed scores).

Example: ScoreTransform="logit"

Data Types: char | string | function_handle
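A function handle for ScoreTransform might look like the following sketch. The clipping transform is a hypothetical example, not a built-in option; it accepts the score matrix and returns a matrix of the same size, as required. The Fisher iris data is assumed.

```matlab
load fisheriris

% Hypothetical custom transform: clip every score to the range [0.01, 0.99].
clipScores = @(s) min(max(s,0.01),0.99);

Mdl = fitcdiscr(meas,species,ScoreTransform=clipScores);

% The second output of predict contains the transformed scores.
[~,score] = predict(Mdl,meas(1:5,:));
disp(score)
```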

Observation weights, specified as a numeric vector of positive values or name of a variable in

Tbl. The software weighs the observations in each row of

X or Tbl with the corresponding value in

Weights. The size of Weights must equal the

number of rows of X or Tbl.

If you specify the input data as a table Tbl, then

Weights can be the name of a variable in Tbl

that contains a numeric vector. In this case, you must specify

Weights as a character vector or string scalar. For example, if

the weights vector W is stored as Tbl.W, then

specify it as "W". Otherwise, the software treats all columns of

Tbl, including W, as predictors or the

response when training the model.

By default, Weights is ones(n,1), where n is the number of observations in X or Tbl.

The software normalizes Weights to sum up to the value of the prior

probability in the respective class. Inf weights are not supported.

Data Types: double | single | char | string
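The table-based form of Weights can be sketched as follows. The table Tbl, its short variable names, and the choice to double the weight of one class are all illustrative assumptions built on the Fisher iris data.

```matlab
load fisheriris
Tbl = array2table(meas,VariableNames=["SL","SW","PL","PW"]);
Tbl.Species = species;

% Store the observation weights as a table variable named W.
Tbl.W = ones(height(Tbl),1);
Tbl.W(strcmp(species,'virginica')) = 2;   % illustrative weighting only

% Passing the variable name keeps W out of the predictor set.
Mdl = fitcdiscr(Tbl,"Species",Weights="W");
disp(Mdl.PredictorNames)
```

If you omit Weights="W" here, the software treats W as an additional predictor.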

Cross-Validation Options

Cross-validation flag, specified as the comma-separated pair consisting of 'CrossVal' and 'on' or 'off'.

If you specify 'on', then the software implements 10-fold cross-validation.

To override this cross-validation setting, use one of these name-value pair arguments: CVPartition, Holdout, KFold, or Leaveout. To create a cross-validated model, you can use only one cross-validation name-value pair argument at a time.

Alternatively, cross-validate later by passing Mdl to crossval.

Example: 'CrossVal','on'
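The two routes to a cross-validated model mentioned above can be sketched like this, assuming the Fisher iris data. The random seed is set only so that the partition is repeatable.

```matlab
load fisheriris
rng(1)                                   % repeatable random partition

% Route 1: cross-validate during training (10 folds by default).
CVMdl = fitcdiscr(meas,species,'CrossVal','on');
err = kfoldLoss(CVMdl);                  % cross-validated classification loss

% Route 2: train on all data first, then cross-validate the trained model.
Mdl = fitcdiscr(meas,species);
CVMdl2 = crossval(Mdl);
err2 = kfoldLoss(CVMdl2);
```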

Cross-validation partition, specified as a cvpartition object that specifies the type of cross-validation and the

indexing for the training and validation sets.

To create a cross-validated model, you can specify only one of these four name-value

arguments: CVPartition, Holdout,

KFold, or Leaveout.

Example: Suppose you create a random partition for 5-fold cross-validation on 500

observations by using cvp = cvpartition(500,KFold=5). Then, you can

specify the cross-validation partition by setting

CVPartition=cvp.
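As a small sketch of the CVPartition argument, the following builds a partition from the class labels, which stratifies the folds by class, and passes it to fitcdiscr. The Fisher iris data is assumed.

```matlab
load fisheriris
rng(1)                                   % repeatable random partition

% Passing the labels (rather than a count) stratifies the folds by class.
cvp = cvpartition(species,KFold=5);

CVMdl = fitcdiscr(meas,species,CVPartition=cvp);
err = kfoldLoss(CVMdl);
```

Reusing the same cvp object across several fit functions lets you compare models on identical folds.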

Fraction of the data used for holdout validation, specified as a scalar value in the range

(0,1). If you specify Holdout=p, then the software completes these

steps:

1. Randomly select and reserve p*100% of the data as validation data, and train the model using the rest of the data.
2. Store the compact trained model in the Trained property of the cross-validated model.

To create a cross-validated model, you can specify only one of these four name-value

arguments: CVPartition, Holdout,

KFold, or Leaveout.

Example: Holdout=0.1

Data Types: double | single
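The holdout steps above can be sketched as follows, assuming the Fisher iris data and a 20% holdout fraction chosen for illustration.

```matlab
load fisheriris
rng(1)                                   % repeatable random split

CVMdl = fitcdiscr(meas,species,Holdout=0.2);

% The single compact model trained on the non-held-out data.
CompactMdl = CVMdl.Trained{1};

% Logical index of the held-out observations, and the loss on them.
valIdx = test(CVMdl.Partition);
valLoss = loss(CompactMdl,meas(valIdx,:),species(valIdx));
```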

Number of folds to use in the cross-validated model, specified as a positive integer value

greater than 1. If you specify KFold=k, then the software completes

these steps:

1. Randomly partition the data into k sets.
2. For each set, reserve the set as validation data, and train the model using the other k – 1 sets.
3. Store the k compact trained models in a k-by-1 cell vector in the Trained property of the cross-validated model.

To create a cross-validated model, you can specify only one of these four name-value

arguments: CVPartition, Holdout,

KFold, or Leaveout.

Example: KFold=5

Data Types: single | double
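To see the k stored models and their individual validation losses, a sketch along these lines may help. It assumes the Fisher iris data and k = 5.

```matlab
load fisheriris
rng(1)                                   % repeatable random partition

CVMdl = fitcdiscr(meas,species,KFold=5);

% One compact model per fold, stored in a 5-by-1 cell vector.
nModels = numel(CVMdl.Trained);

% Loss of each fold's model on that fold's validation set.
foldLoss = kfoldLoss(CVMdl,Mode="individual");
disp(foldLoss)
```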

Leave-one-out cross-validation flag, specified as "on" or

"off". If you specify Leaveout="on", then for

each of the n observations (where n is the number

of observations, excluding missing observations, specified in the

NumObservations property of the model), the software completes

these steps:

1. Reserve the one observation as validation data, and train the model using the other n – 1 observations.
2. Store the n compact trained models in an n-by-1 cell vector in the Trained property of the cross-validated model.

To create a cross-validated model, you can specify only one of these four name-value

arguments: CVPartition, Holdout,

KFold, or Leaveout.

Example: Leaveout="on"

Data Types: char | string

Hyperparameter Optimization Options

Parameters to optimize, specified as the comma-separated pair

consisting of 'OptimizeHyperparameters' and one of

the following:

- 'none': Do not optimize.
- 'auto': Use {'Delta','Gamma'}.
- 'all': Optimize all eligible parameters.
- String array or cell array of eligible parameter names.
- Vector of optimizableVariable objects, typically the output of hyperparameters.

The optimization attempts to minimize the cross-validation loss

(error) for fitcdiscr by varying the parameters. To control the

cross-validation type and other aspects of the optimization, use the

HyperparameterOptimizationOptions name-value argument. When you use

HyperparameterOptimizationOptions, you can use the (compact) model size

instead of the cross-validation loss as the optimization objective by setting the

ConstraintType and ConstraintBounds options.

Note

The values of OptimizeHyperparameters override any values you

specify using other name-value arguments. For example, setting

OptimizeHyperparameters to "auto" causes

fitcdiscr to optimize hyperparameters corresponding to the

"auto" option and to ignore any specified values for the

hyperparameters.

The eligible parameters for fitcdiscr are:

- Delta: fitcdiscr searches among positive values, by default log-scaled in the range [1e-6,1e3].
- DiscrimType: fitcdiscr searches among 'linear', 'quadratic', 'diagLinear', 'diagQuadratic', 'pseudoLinear', and 'pseudoQuadratic'.
- Gamma: fitcdiscr searches among real values in the range [0,1].

Set nondefault parameters by passing a vector of

optimizableVariable objects that have nondefault

values. For example,

load fisheriris
params = hyperparameters('fitcdiscr',meas,species);
params(1).Range = [1e-4,1e6];

Pass params as the value of

OptimizeHyperparameters.

By default, the iterative display appears at the command line,

and plots appear according to the number of hyperparameters in the optimization. For the

optimization and plots, the objective function is the misclassification rate. To control the

iterative display, set the Verbose option of the

HyperparameterOptimizationOptions name-value argument. To control the

plots, set the ShowPlots field of the

HyperparameterOptimizationOptions name-value argument.

For an example, see Optimize Discriminant Analysis Model.

Example: 'auto'
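As a minimal sketch of passing a cell array of eligible parameter names, the following optimizes only Gamma. It assumes the Fisher iris data; the reduced evaluation count and the suppressed display are choices made here to keep the run short, not recommended settings.

```matlab
load fisheriris
rng(1)                                   % for reproducibility

% Keep the run short and quiet; these option values are illustrative.
opts = struct(MaxObjectiveEvaluations=10, ...
              ShowPlots=false,Verbose=0, ...
              AcquisitionFunctionName="expected-improvement-plus");

Mdl = fitcdiscr(meas,species, ...
    OptimizeHyperparameters={'Gamma'}, ...
    HyperparameterOptimizationOptions=opts);

% The returned model uses the selected Gamma value.
disp(Mdl.Gamma)
results = Mdl.HyperparameterOptimizationResults;
```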

Options for optimization, specified as a HyperparameterOptimizationOptions object or a structure. This argument

modifies the effect of the OptimizeHyperparameters name-value

argument. If you specify HyperparameterOptimizationOptions, you must

also specify OptimizeHyperparameters. All the options are optional.

However, you must set ConstraintBounds and

ConstraintType to return

AggregateOptimizationResults. The options that you can set in a

structure are the same as those in the

HyperparameterOptimizationOptions object.

| Option | Values | Default |
|---|---|---|
| Optimizer | | "bayesopt" |
| ConstraintBounds | Constraint bounds for N optimization problems, specified as an N-by-2 numeric matrix or | [] |
| ConstraintTarget | Constraint target for the optimization problems, specified as | If you specify ConstraintBounds and ConstraintType, then the default value is "matlab". Otherwise, the default value is []. |
| ConstraintType | Constraint type for the optimization problems, specified as | [] |
| AcquisitionFunctionName | Type of acquisition function: Acquisition functions whose names include | "expected-improvement-per-second-plus" |
| LossFun | Type of validation loss to optimize, specified as "auto", "classifcost", "classiferror", or "mincost". In the case of fitcdiscr, the "auto" and "mincost" options are equivalent, and the software uses the minimal expected misclassification cost. "classifcost" indicates to use the observed misclassification cost, and "classiferror" indicates to use the misclassified rate in decimal. | "auto" |
| MaxObjectiveEvaluations | Maximum number of objective function evaluations. If you specify multiple optimization problems using ConstraintBounds, the value of MaxObjectiveEvaluations applies to each optimization problem individually. | 30 for "bayesopt" and "randomsearch", and the entire grid for "gridsearch" |
| MaxTime | Time limit for the optimization, specified as a nonnegative real scalar. The time limit is in seconds, as measured by | Inf |
| NumGridDivisions | For Optimizer="gridsearch", the number of values in each dimension. The value can be a vector of positive integers giving the number of values for each dimension, or a scalar that applies to all dimensions. The software ignores this option for categorical variables. | 10 |
| ShowPlots | Logical value indicating whether to show plots of the optimization progress. If this option is true, the software plots the best observed objective function value against the iteration number. If you use Bayesian optimization (Optimizer="bayesopt"), the software also plots the best estimated objective function value. The best observed objective function values and best estimated objective function values correspond to the values in the BestSoFar (observed) and BestSoFar (estim.) columns of the iterative display, respectively. You can find these values in the properties ObjectiveMinimumTrace and EstimatedObjectiveMinimumTrace of the SupervisedLearningBayesianOptimization object. If the problem includes one or two optimization parameters for Bayesian optimization, then ShowPlots also plots a model of the objective function against the parameters. | true |
| SaveIntermediateResults | Logical value indicating whether to save the optimization results. If this option is true, the software overwrites a workspace variable named SupervisedLearningBayesoptResults at each iteration. The variable is a SupervisedLearningBayesianOptimization object. If you specify multiple optimization problems using ConstraintBounds, the workspace variable is an AggregateBayesianOptimization object named AggregateBayesoptResults. | false |
| Verbose | Display level at the command line: For details, see the | 1 |
| UseParallel | Logical value indicating whether to run the Bayesian optimization in parallel, which requires Parallel Computing Toolbox™. Due to the nonreproducibility of parallel timing, parallel Bayesian optimization does not necessarily yield reproducible results. For details, see Parallel Bayesian Optimization. | false |
| Repartition | Logical value indicating whether to repartition the cross-validation at every iteration. If this option is A value of | false |
| Specify only one of the following three options. | | |
| CVPartition | cvpartition object created by cvpartition | KFold=5 if you do not specify a cross-validation option |
| Holdout | Scalar in the range (0,1) representing the holdout fraction | |
| KFold | Integer greater than 1 | |

Example: HyperparameterOptimizationOptions=struct(UseParallel=true)
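The following sketch combines several of the options in the table by using a structure. It assumes the Fisher iris data and uses grid search with a coarse grid purely to keep the example small; the option values are not recommendations.

```matlab
load fisheriris
rng(1)                                   % for reproducibility

% Grid search over the "auto" hyperparameters with 5 values per dimension,
% evaluated by 5-fold cross-validation, with plots and display suppressed.
opts = struct(Optimizer="gridsearch",NumGridDivisions=5, ...
              KFold=5,ShowPlots=false,Verbose=0);

Mdl = fitcdiscr(meas,species, ...
    OptimizeHyperparameters="auto", ...
    HyperparameterOptimizationOptions=opts);

% For non-Bayesian optimizers, the results are returned as a table.
results = Mdl.HyperparameterOptimizationResults;
```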

Output Arguments

Trained discriminant analysis classification model, returned as a ClassificationDiscriminant

object, a ClassificationPartitionedModel

object, or a cell array of model objects.

- If you set any of the name-value arguments CrossVal, CVPartition, Holdout, KFold, or Leaveout, then Mdl is a ClassificationPartitionedModel object.
- If you specify OptimizeHyperparameters and set the ConstraintType and ConstraintBounds options of HyperparameterOptimizationOptions, then Mdl is an N-by-1 cell array of model objects, where N is equal to the number of rows in ConstraintBounds. If none of the optimization problems yields a feasible model, then each cell array value is [].
- Otherwise, Mdl is a ClassificationDiscriminant model object.

To reference properties of a model object, use dot notation.

Aggregate optimization results for multiple optimization problems, returned as an AggregateBayesianOptimization object. To return

AggregateOptimizationResults, you must specify

OptimizeHyperparameters and

HyperparameterOptimizationOptions. You must also specify the

ConstraintType and ConstraintBounds

options of HyperparameterOptimizationOptions. For an example that

shows how to produce this output, see Hyperparameter Optimization with Multiple Constraint Bounds.

More About

The model for discriminant analysis is:

- Each class (Y) generates data (X) using a multivariate normal distribution. That is, the model assumes X has a Gaussian mixture distribution (gmdistribution).
  - For linear discriminant analysis, the model has the same covariance matrix for each class, only the means vary.
  - For quadratic discriminant analysis, both means and covariances of each class vary.

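predict classifies so as to minimize the expected classification cost:

$$\hat{y} = \underset{y=1,\ldots,K}{\arg\min} \sum_{k=1}^{K} \hat{P}(k \mid x)\, C(y \mid k),$$

where

- $\hat{y}$ is the predicted classification.
- $K$ is the number of classes.
- $\hat{P}(k \mid x)$ is the posterior probability of class k for observation x.
- $C(y \mid k)$ is the cost of classifying an observation as y when its true class is k.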

For details, see Prediction Using Discriminant Analysis Models.
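The minimum-expected-cost rule can be checked by hand. The sketch below, which assumes the Fisher iris data and the default score transformation, computes the sum over true classes k of the posterior probability of k times the cost of predicting y, and compares the resulting labels with those from predict.

```matlab
load fisheriris
Mdl = fitcdiscr(meas,species);

x = meas([1 51 101],:);                  % one observation from each class
[label,posterior,cost] = predict(Mdl,x);

% Mdl.Cost(k,y) is the cost of predicting class y when the true class is k,
% so posterior*Mdl.Cost gives the expected cost of each candidate label y.
expectedCost = posterior*Mdl.Cost;

% Choose the label with the smallest expected cost in each row.
[~,yhat] = min(expectedCost,[],2);
manualLabel = Mdl.ClassNames(yhat);
```

With the default cost matrix (0 on the diagonal, 1 elsewhere), this rule reduces to choosing the class with the largest posterior probability.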

Tips

After training a model, you can generate C/C++ code that predicts labels for new data. Generating C/C++ code requires MATLAB Coder™. For details, see Introduction to Code Generation for Statistics and Machine Learning Functions.

Algorithms

- If you specify the Cost, Prior, and Weights name-value arguments, the output model object stores the specified values in the Cost, Prior, and W properties, respectively. The Cost property stores the user-specified cost matrix as is. The Prior and W properties store the prior probabilities and observation weights, respectively, after normalization. For details, see Misclassification Cost Matrix, Prior Probabilities, and Observation Weights.
- The software uses the Cost property for prediction, but not training. Therefore, Cost is not read-only; you can change the property value by using dot notation after creating the trained model.
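Because Cost affects only prediction, you can change it on a trained model without refitting. The following sketch assumes the Fisher iris data; the cost matrix is an arbitrary illustration.

```matlab
load fisheriris
Mdl = fitcdiscr(meas,species);
labelsBefore = predict(Mdl,meas);

% Rows are true classes and columns are predicted classes, in the order of
% Mdl.ClassNames. Here, predicting versicolor (column 2) for a true
% virginica (row 3) costs 10 instead of 1.
Mdl.Cost = [0 1 1; 1 0 1; 1 10 0];

labelsAfter = predict(Mdl,meas);         % uses the new costs, no retraining
nChanged = sum(~strcmp(labelsBefore,labelsAfter));
disp(nChanged)
```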

Alternative Functionality

Functions

The classify function also performs

discriminant analysis. classify is usually more awkward to

use.

- classify requires you to fit the classifier every time you make a new prediction.
- classify does not perform cross-validation or hyperparameter optimization.
- classify requires you to fit the classifier when changing prior probabilities.
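The difference in workflow can be sketched as follows, assuming the Fisher iris data. The query point xnew is an arbitrary illustration. Both calls use a linear discriminant by default, and the classes in this data set are equally sized, so the default priors of the two functions coincide here.

```matlab
load fisheriris
xnew = [5.9 3.0 5.1 1.8];                % illustrative query point

% classify refits the discriminant from the training data on every call.
labelA = classify(xnew,meas,species);

% fitcdiscr fits once; the model can then be reused for any number of predictions.
Mdl = fitcdiscr(meas,species);
labelB = predict(Mdl,xnew);
```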

Extended Capabilities

The fitcdiscr function supports tall arrays with the following usage notes and limitations:

- Supported syntaxes are:
  - Mdl = fitcdiscr(Tbl,Y)
  - Mdl = fitcdiscr(X,Y)
  - Mdl = fitcdiscr(___,Name=Value)
  - [Mdl,FitInfo,HyperparameterOptimizationResults] = fitcdiscr(___,Name=Value): fitcdiscr returns the additional output arguments FitInfo and HyperparameterOptimizationResults when you specify the OptimizeHyperparameters name-value argument.
- The FitInfo output argument is an empty structure array currently reserved for possible future use.
- The HyperparameterOptimizationResults output argument is usually a SupervisedLearningBayesianOptimization object or a table of hyperparameters with associated values that describe the cross-validation optimization of hyperparameters. However, if you specify HyperparameterOptimizationOptions and set ConstraintType and ConstraintBounds, then HyperparameterOptimizationResults is an AggregateBayesianOptimization object.
  - If you specify HyperparameterOptimizationOptions and do not set ConstraintType, ConstraintBounds, or Optimizer="bayesopt", then HyperparameterOptimizationResults is a table of the hyperparameters used, observed objective function values, and rank of observations from lowest (best) to highest (worst).
  - HyperparameterOptimizationResults is nonempty when the OptimizeHyperparameters name-value argument is nonempty at the time you create the model. The values in HyperparameterOptimizationResults depend on the value you specify for the HyperparameterOptimizationOptions name-value argument when you create the model.
- Supported name-value pair arguments, and any differences, are:
  - 'ClassNames'
  - 'Cost'
  - 'DiscrimType'
  - HyperparameterOptimizationOptions: For cross-validation, tall optimization supports only Holdout validation. By default, the software selects and reserves 20% of the data as holdout validation data, and trains the model using the rest of the data. You can specify a different value for the holdout fraction by using this argument. For example, specify HyperparameterOptimizationOptions=struct(Holdout=0.3) to reserve 30% of the data as validation data.
  - 'OptimizeHyperparameters': The only eligible parameter to optimize is 'DiscrimType'. Specifying 'auto' uses 'DiscrimType'.
  - 'PredictorNames'
  - 'Prior'
  - 'ResponseName'
  - 'ScoreTransform'
  - 'Weights'
- For tall arrays and tall tables, fitcdiscr returns a CompactClassificationDiscriminant object, which contains most of the same properties as a ClassificationDiscriminant object. The main difference is that the compact object is sensitive to memory requirements. The compact object does not include properties that include the data, or that include an array of the same size as the data. The compact object does not contain these ClassificationDiscriminant properties:
  - ModelParameters
  - NumObservations
  - HyperparameterOptimizationResults
  - RowsUsed
  - XCentered
  - W
  - X
  - Y
- Additionally, the compact object does not support these ClassificationDiscriminant methods:
  - compact
  - crossval
  - cvshrink
  - resubEdge
  - resubLoss
  - resubMargin
  - resubPredict

For more information, see Tall Arrays.

To perform parallel hyperparameter optimization, use the UseParallel=true

option in the HyperparameterOptimizationOptions name-value argument in

the call to the fitcdiscr function.

For more information on parallel hyperparameter optimization, see Parallel Bayesian Optimization.

For general information about parallel computing, see Run MATLAB Functions with Automatic Parallel Support (Parallel Computing Toolbox).

Version History

Introduced in R2014a

You can optimize classification model hyperparameters with respect to misclassification

cost. When you use a classification fit function, specify the

OptimizeHyperparameters and

HyperparameterOptimizationOptions name-value arguments. In the

HyperparameterOptimizationOptions structure or object, set the

LossFun value to "classifcost",

"mincost", or "auto-cost", depending on the

classification fit function.

Depending on the type of supervised learning fit function you use to perform

hyperparameter optimization, you can set the LossFun value to

"auto-cost", "classifcost",

"classiferror", "mincost",

"mse", or "quantile".

fitcdiscr defaults to serial hyperparameter optimization when

HyperparameterOptimizationOptions includes

UseParallel=true and the software cannot open a parallel pool.

In previous releases, the software issues an error under these circumstances.
