waveletPooling1dLayer

Description

A 1-D discrete wavelet pooling layer applies the forward and inverse discrete wavelet transforms to reconstruct approximations of the layer input. Use this layer to downsample the layer input along the time dimension. The layer supports learnable (adaptive) and nonadaptive pooling. For more information, see Discrete Wavelet Pooling. Use of this layer requires Deep Learning Toolbox™.

Creation

Description

layer = waveletPooling1dLayer

The input to waveletPooling1dLayer must be a real-valued dlarray (Deep Learning Toolbox) object with format "CBT". The output is a dlarray object with format "CBT". For more information, see Layer Output Format.

Note

When you initialize the learnable parameters of waveletPooling1dLayer, the layer weights are set to unity. It is not recommended to initialize the weights directly.

layer = waveletPooling1dLayer(PropertyName=Value) creates a 1-D discrete wavelet pooling layer with properties specified by one or more name-value arguments.

Example: layer = waveletPooling1dLayer(Wavelet="db4",Boundary="zeropad") creates a wavelet pooling layer that uses the extremal phase Daubechies wavelet with four vanishing moments and zero padding at the boundaries.

Properties

DWT

Wavelet

This property is read-only after object creation.

Real-valued orthogonal or biorthogonal wavelet, specified as a string scalar or character vector recognized by wavemngr. The layer uses the specified wavelet in the 1-D DWT.

Data Types: char | string

ReconstructionLevel

This property is read-only after object creation.

Reconstruction level, specified as a nonnegative integer between 0 and floor(log2(N)), where N is the size of the layer input along the time ("T") dimension. The layer reconstructs the pooled output at level ReconstructionLevel. By default, the layer output is a factor-of-2 dimension reduction for each input feature.

If you set ReconstructionLevel, then the default value of AnalysisLevel is ReconstructionLevel+1.

For more information about the size of the layer output, see Layer Output Format.

Data Types: single | double

AnalysisLevel

This property is read-only after object creation.

Analysis level, or decomposition level, of the 1-D DWT, specified as a positive integer between 1 and floor(log2(N)), where N is the size of the layer input along the time dimension. AnalysisLevel must be greater than ReconstructionLevel.

If you set AnalysisLevel, then the default value of ReconstructionLevel is AnalysisLevel-1.

Data Types: single | double
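As a quick check of these bounds, the following minimal sketch computes the largest allowed level for a given input length and the default pairing between the two levels. It uses only base MATLAB functions rather than the layer itself, and the variable names and the isValidPair helper are illustrative, not part of the layer interface.

```matlab
% Illustrative check of the level constraints (all names are hypothetical).
N = 2048;                        % input size along the time ("T") dimension
maxLevel = floor(log2(N));       % upper bound on AnalysisLevel

rlevel = 2;                      % a chosen ReconstructionLevel
alevelDefault = rlevel + 1;      % default AnalysisLevel when only ReconstructionLevel is set

% A level pair is valid when 0 <= rlevel < alevel <= maxLevel.
isValidPair = @(r,a) (r >= 0) && (a > r) && (a <= maxLevel);
ok = isValidPair(rlevel,alevelDefault);
```

For a 2048-sample input, the bound is level 11, and a reconstruction level of 2 pairs with a default analysis level of 3.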

Boundary

This property is read-only after object creation.

Boundary extension mode to use in the DWT, specified as "reflection", "periodic", or "zeropad". The layer extends the coefficients at the boundary at each decomposition level based on the corresponding mode in dwtmode:

- "reflection": Half-point symmetric extension, "sym"

- "periodic": Periodic extension, "per"

- "zeropad": Zero padding, "zpd"

To learn how the boundary extension mode can affect the size of the layer output, see Layer Output Format.

SelectedDetailCoefficients

This property is read-only after object creation.

Mask indicating which detail (wavelet) coefficients to include in pooling, specified as a logical array.

The shape of the array is Nchannel-by-Ndiff, where Nchannel is the number of channels in the layer input and Ndiff is the difference between the analysis level and reconstruction level. The layer includes the detail coefficients in pooling when the corresponding mask value is 1 (true) and excludes them when the value is 0 (false). The columns correspond to the decomposition levels ordered from finer to coarser scale.

- If Nchannel is equal to 1 and the input to the layer's forward/predict object function has more than one channel, the layer expands the values of SelectedDetailCoefficients in the 1-by-Ndiff array to all channels.

- If you set Ndiff to 1 and the difference between the analysis and reconstruction levels is greater than 1, the layer expands the SelectedDetailCoefficients values to all levels.

The following example illustrates how SelectedDetailCoefficients interacts with DetailWeights. Suppose SelectedDetailCoefficients = [1 0 1] and DetailWeights = [0.5 1e3 0.4]. Wherever SelectedDetailCoefficients is 0, the coefficients at that level are ignored. The gain corresponding to the middle level is therefore effectively zero, despite its weight of 1e3.

Data Types: logical
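The interaction between the mask and the weights can be sketched with plain arrays. This is a minimal illustration, not the layer's internal code: it assumes the effective gain per level is the elementwise product of the mask and the weights, and it mimics the automatic channel expansion with repmat. The variable names are illustrative.

```matlab
% Values from the description above (one channel, three levels).
msk = logical([1 0 1]);          % SelectedDetailCoefficients, finer to coarser
w   = [0.5 1e3 0.4];             % DetailWeights

% Assumption: effective gain = mask .* weight, so a masked level contributes nothing.
gain = double(msk) .* w;

% Expand a 1-by-Ndiff mask to all channels, as the layer does for multichannel input.
Nchannel = 23;
mskAll = repmat(msk,Nchannel,1); % Nchannel-by-Ndiff logical array
```

Under this assumption, the middle level has zero gain even though its weight is large, and the expanded mask has one identical row per channel.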

ExpectedOutputSize

This property is read-only after object creation.

Expected size of the layer output along the time dimension, specified as "none" or a scalar. By default, the output size is not verified.

If you set ExpectedOutputSize and the output size does not match the specified value, the result is an error.

ExpectedOutputSize plays an analogous role to the bookkeeping vector l output argument of wavedec, when that information is missing. The bookkeeping vector contains the number of coefficients by level and the length of the input.
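To make the bookkeeping idea concrete, the following sketch builds a vector with the same layout as the wavedec bookkeeping vector by repeatedly applying the single-level size formula floor((N+LF-1)/2), which holds for reflection and zero padding extension. It is a hand computation under stated assumptions, not a call to wavedec: the signal length, the sym4 filter length of 8, and the variable names are chosen for illustration.

```matlab
% Sketch: coefficient counts per level for zero padding or reflection extension.
N  = 2047;       % signal length
LF = 8;          % filter length of the sym4 wavelet
nLevels = 3;     % decomposition level

len = zeros(1,nLevels);
n = N;
for k = 1:nLevels
    n = floor((n + LF - 1)/2);   % coefficient count after one more DWT level
    len(k) = n;
end

% Same layout as the wavedec bookkeeping vector:
% [approximation at coarsest level, details from coarsest to finest, signal length]
l = [len(end) fliplr(len) N];
sizeAtLevel2 = l(end-3+1);       % number of coefficients at level 2
```

The level-2 entry is the value you would pass as ExpectedOutputSize when the layer reconstructs at level 2 from a level-3 analysis.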

Parameters

DetailWeights

Detail (wavelet) coefficient subband weights, specified as [], a numeric array, or a dlarray object.

These layer weights are learnable parameters. DetailWeights is an Nchannel-by-Ndiff matrix (vector), where Nchannel is the number of channels in the layer input and Ndiff is the difference between the analysis level and reconstruction level. You can use the function initialize (Deep Learning Toolbox) to initialize the learnable parameters of a deep learning neural network that includes waveletPooling1dLayer objects. When you initialize the layers, initialize sets DetailWeights to ones(Nchannel,AnalysisLevel-ReconstructionLevel).

You must initialize waveletPooling1dLayer before using the layer's forward/predict method.

Data Types: single | double

LowpassWeights

Lowpass (scaling) coefficient subband weights, specified as [], a numeric array, or a dlarray object.

These layer weights are learnable parameters. LowpassWeights is an Nchannel-by-1 vector, where Nchannel is the number of channels in the layer input. You can use the function initialize (Deep Learning Toolbox) to initialize the learnable parameters of a deep learning neural network that includes waveletPooling1dLayer objects. When you initialize the layers, initialize sets LowpassWeights to ones(Nchannel,1).

You must initialize waveletPooling1dLayer before using the layer's forward/predict method.

It is not recommended to initialize the weights directly.

Data Types: single | double

Learning Rate

DetailWeightLearnRateFactor

Learning rate factor for the detail (wavelet) coefficient subband weights, specified as a nonnegative scalar. By default, the weights do not update with training. You can also set this property using the function setLearnRateFactor (Deep Learning Toolbox).

To perform adaptive pooling, specify a nonzero learning rate factor. When DetailWeightLearnRateFactor is nonzero, the layer uses the detail subband weights to modify the wavelet coefficients.

Data Types: single | double

LowpassWeightLearnRateFactor

Learning rate factor for the lowpass (scaling) coefficient subband weights, specified as a nonnegative scalar. By default, the weights do not update with training. You can also set this property using the function setLearnRateFactor (Deep Learning Toolbox).

To perform adaptive pooling, specify a nonzero learning rate factor. When LowpassWeightLearnRateFactor is nonzero, the layer uses the lowpass weights to modify the lowpass (scaling) coefficients.

Data Types: single | double

Layer

NumInputs

This property is read-only.

Number of inputs to the layer, stored as 1. This layer accepts a single input only.

Data Types: double

InputNames

This property is read-only.

Input names, stored as {'in'}. This layer accepts a single input only.

Data Types: cell

NumOutputs

This property is read-only.

Number of outputs from the layer, stored as 1. This layer has a single output only.

Data Types: double

OutputNames

This property is read-only.

Output names, stored as {'out'}. This layer has a single output only.

Data Types: cell

Examples

Load the ECG signal. The data is arranged as a 2048-by-1 vector. Save the signal in single precision as a dlarray object with format "TCB".

load wecg
len = length(wecg);
dlsig = dlarray(single(wecg),"TCB");

Create the default 1-D discrete wavelet pooling layer. By default, the learnable parameters DetailWeights and LowpassWeights are both empty.

dwtPool = waveletPooling1dLayer

dwtPool =

waveletPooling1dLayer with properties:

Name: ''

DetailWeightLearnRateFactor: 0

LowpassWeightLearnRateFactor: 0

Wavelet: 'db1'

ReconstructionLevel: 1

AnalysisLevel: 2

Boundary: 'reflection'

SelectedDetailCoefficients: 1

ExpectedOutputSize: 'none'

Learnable Parameters

DetailWeights: []

LowpassWeights: []

State Parameters

No properties.


Include the default 1-D wavelet pooling layer in a Layer array.

layers = [ ...
    sequenceInputLayer(1,MinLength=len)
    waveletPooling1dLayer]

layers =

2×1 Layer array with layers:

1 '' Sequence Input Sequence input with 1 channels

2 '' waveletPooling1dLayer waveletPooling1dLayer

Convert the layer array to a dlnetwork object. Because the layer array has an input layer and no other inputs, the software initializes the network.

dlnet = dlnetwork(layers)

dlnet =

dlnetwork with properties:

Layers: [2×1 nnet.cnn.layer.Layer]

Connections: [1×2 table]

Learnables: [2×3 table]

State: [0×3 table]

InputNames: {'sequenceinput'}

OutputNames: {'layer'}

Initialized: 1

View summary with summary.

Confirm the network learnable parameters are DetailWeights and LowpassWeights.

dlnet.Learnables

ans=2×3 table

Layer Parameter Value

_______ ________________ _____________

"layer" "DetailWeights" {1×1 dlarray}

"layer" "LowpassWeights" {1×1 dlarray}

Inspect the wavelet pooling layer in the network. Confirm the software initialized the weights.

dlnet.Layers(2)

ans =

waveletPooling1dLayer with properties:

Name: 'layer'

DetailWeightLearnRateFactor: 0

LowpassWeightLearnRateFactor: 0

Wavelet: 'db1'

ReconstructionLevel: 1

AnalysisLevel: 2

Boundary: 'reflection'

SelectedDetailCoefficients: 1

ExpectedOutputSize: 'none'

Learnable Parameters

DetailWeights: [1×1 dlarray]

LowpassWeights: [1×1 dlarray]

State Parameters

No properties.


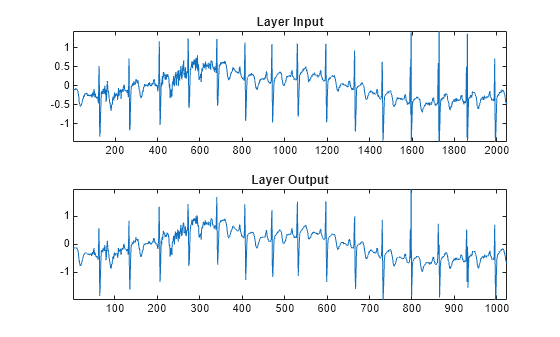

By default, the layer uses the Haar (db1) wavelet and outputs the level-one lowpass approximation of the input. Run the signal through the network. Confirm the output has 1024 samples.

dlnetout = forward(dlnet,dlsig);
size(dlnetout)

ans = 1×3

1 1 1024

Plot the layer input and output.

dlnetout2 = squeeze(dlnetout);
tiledlayout(2,1)
nexttile
plot(wecg)
title("Layer Input")
axis tight
nexttile
plot(dlnetout2)
title("Layer Output")
axis tight

Load the Espiga3 electroencephalogram (EEG) data set. The data consists of 23 channels of EEG sampled at 200 Hz. There are 995 samples in each channel. The channels are arranged column-wise. Save the multisignal in single precision as a dlarray, specifying the dimensions in order. dlarray permutes the array dimensions to the "CBT" shape expected by a deep learning network.

load Espiga3
[N,ch] = size(Espiga3);
dlsig = dlarray(single(Espiga3),"TCB");

Create a Layer array containing a sequence input layer and a 1-D discrete wavelet pooling layer. For the pooling layer:

- Set the analysis level to 5 and reconstruction level to 2.

- Set the DWT boundary extension mode to "zeropad".

- Specify a 1-by-3 mask so that the layer excludes the detail coefficients at levels 3 and 4 from the pooling. For multichannel input, waveletPooling1dLayer automatically expands the mask to all channels.

alevel = 5;
rlevel = 2;
bdy = "zeropad";
msk = [0 0 1];
layers = [ ...
    sequenceInputLayer(ch,MinLength=N)
    waveletPooling1dLayer(AnalysisLevel=alevel, ...
        ReconstructionLevel=rlevel, ...
        Boundary=bdy, ...
        SelectedDetailCoefficients=msk)];

Convert the layer array to a dlnetwork object.

dlnet = dlnetwork(layers);
dlnet.Layers(2)

ans =

waveletPooling1dLayer with properties:

Name: 'layer'

DetailWeightLearnRateFactor: 0

LowpassWeightLearnRateFactor: 0

Wavelet: 'db1'

ReconstructionLevel: 2

AnalysisLevel: 5

Boundary: 'zeropad'

SelectedDetailCoefficients: [0 0 1]

ExpectedOutputSize: 'none'

Learnable Parameters

DetailWeights: [23×3 dlarray]

LowpassWeights: [23×1 dlarray]

State Parameters

No properties.


Run the signal through the network.

netout = forward(dlnet,dlsig);

Now use the same layer property values in the functions dldwt and dlidwt.

Use the dldwt function to obtain the differentiable DWT of the signal down to level 5. To specify the extension mode, use the PaddingMode name-value argument. To obtain the full wavelet decomposition, set FullTree to true.

[A,D] = dldwt(dlsig, ...
    Level=alevel, ...
    FullTree=true, ...
    PaddingMode=bdy);

The dlidwt function expects the wavelet subband gains (masks) to be an NC-by-L matrix, where NC is the number of channels in the signal and L is the difference between the decomposition level (used to obtain the coefficients) and the reconstruction level. Replicate the 1-by-3 mask used in the pooling layer into a 23-by-3 matrix, one row per channel. Then use the dlidwt function to reconstruct the DWT up to level 2. Set the extension mode to zero padding. To exclude the detail coefficients at levels 3 and 4 from all channels, set the DetailGain name-value argument to the new mask.

newmsk = repmat(msk,23,1);
dlout = dlidwt(A,D, ...
    Level=rlevel, ...
    DetailGain=newmsk, ...
    PaddingMode=bdy);

Confirm the sizes of the layer output and dlidwt output are identical.

size(netout)

ans = 1×3

23 1 249

size(dlout)

ans = 1×3

23 1 249

Confirm the outputs are equal.

netoutEx = extractdata(netout);
dloutEx = extractdata(dlout);
norm(netoutEx(:)-dloutEx(:),"Inf")

ans = single

0

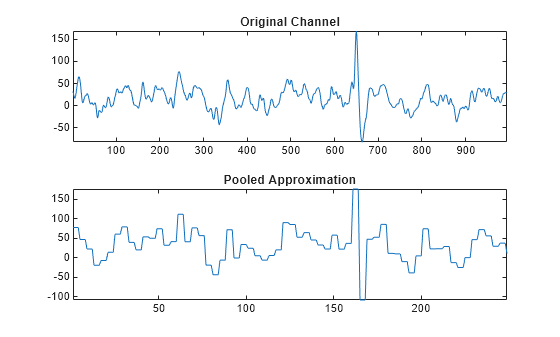

Choose a channel. Plot that channel and its pooled approximation.

chan = 7;
orig = Espiga3(:,chan);
pool = squeeze(netoutEx(chan,1,:));
tiledlayout(2,1)
nexttile
plot(orig)
axis tight
title("Original Channel")
nexttile
plot(pool)
axis tight
title("Pooled Approximation")

Create a signal with 2047 samples. Save the signal as a dlarray object. dlarray permutes the array dimensions to the "CBT" shape expected by a deep learning network.

load wecg
sig = wecg(1:end-1);
dlsig = dlarray(sig,"TCB");
[ch,N] = size(dlsig)

ch = 1

N = 2047

The reconstruction level and the difference between the analysis and reconstruction levels can affect the output size.

Reconstruction Level Greater Than 0, Difference Between Analysis and Reconstruction Levels Greater Than 1

Use the dldwt function to obtain the differentiable DWT of the signal down to level 4. Specify the sym4 wavelet and zero padding as the extension mode. Obtain the full wavelet decomposition by setting FullTree to true.

wv = "sym4";
alevel = 4;
bdy = "zeropad";
[A,D] = dldwt(dlsig, ...
    Wavelet=wv, ...
    Level=alevel, ...
    FullTree=true, ...
    PaddingMode=bdy); %#ok<*ASGLU>

Create a waveletPooling1dLayer using the same parameters as the dldwt function. Specify a reconstruction level of 2.

rlevel = 2;
wpl = waveletPooling1dLayer(Wavelet=wv, ...
    AnalysisLevel=alevel, ...
    ReconstructionLevel=rlevel, ...
    Boundary=bdy);

Create a Layer array containing a sequence input layer appropriate for the signal and the wavelet pooling layer. Convert the layer array to a dlnetwork object. Run the signal through the network.

layers = [
    sequenceInputLayer(ch,MinLength=N)
    wpl];
dlnet = dlnetwork(layers);
dlnetout = forward(dlnet,dlsig);

Compare the size of the layer output along the time dimension with the size of the coefficients at the corresponding decomposition level. Because the reconstruction level is greater than 0 and the difference between the analysis and reconstruction levels is greater than 1, the sizes are equal. The layer effectively sets FullTree to true, which removes any ambiguity regarding the size of the reconstruction.

[size(dlnetout,3) size(D{rlevel},3)]

ans = 1×2

517 517

Reconstruction Level is 0

Use the dldwt function to obtain the differentiable DWT of the signal down to level 2. Specify the sym4 wavelet and zero padding as the extension mode. Set FullTree to true to obtain the full wavelet decomposition.

wv = "sym4";
alevel = 2;
bdy = "zeropad";
[A,D] = dldwt(dlsig, ...
    Wavelet=wv, ...
    Level=alevel, ...
    FullTree=true, ...
    PaddingMode=bdy);

Use the dlidwt function to obtain the reconstruction at level 0. Compare the size of the reconstruction with the size of the original signal. Even though FullTree is true, the sizes are different. Because the reconstruction level is 0, ambiguity exists.

rlevel = 0;
xrec = dlidwt(A,D, ...
    Wavelet=wv, ...
    Level=rlevel, ...
    PaddingMode=bdy);
[size(dlsig) ; size(xrec)]

ans = 2×3

1 1 2047

1 1 2048

Because the reconstruction level is 0, the size of the reconstruction must equal the size of the original signal. You can set ExpectedOutputSize to remove the ambiguity.

xrec2 = dlidwt(A,D, ...
    Wavelet=wv, ...
    Level=rlevel, ...
    PaddingMode=bdy, ...
    ExpectedOutputSize=size(dlsig,3));
[size(dlsig) ; size(xrec2)]

ans = 2×3

1 1 2047

1 1 2047

Similarly, to remove the ambiguity in the wavelet pooling layer, you can specify ExpectedOutputSize. Create a Layer array containing a sequence input layer and the wavelet pooling layer. Convert the layer array to a dlnetwork object. Run the signal through the network.

wpl = waveletPooling1dLayer(Wavelet=wv, ...
    AnalysisLevel=alevel, ...
    ReconstructionLevel=rlevel, ...
    Boundary=bdy, ...
    ExpectedOutputSize=size(dlsig,3));
layers = [
    sequenceInputLayer(ch,MinLength=N)
    wpl];
dlnet = dlnetwork(layers);
dlnetout = forward(dlnet,dlsig);
[size(dlsig) ; size(dlnetout)]

ans = 2×3

1 1 2047

1 1 2047

Difference Between Analysis and Reconstruction Levels is 1

When the difference between the analysis and reconstruction levels is 1, the pooling layer behaves as if FullTree is false. Use the dldwt function to obtain the differentiable DWT of the signal down to level 3. Specify the sym4 wavelet and zero padding as the extension mode. Set FullTree to false.

wv = "sym4";
alevel = 3;
bdy = "zeropad";
[A,D] = dldwt(dlsig, ...
    Wavelet=wv, ...
    Level=alevel, ...
    FullTree=false, ...
    PaddingMode=bdy);

If the input coefficients are a tensor, the dlidwt function performs a single-level IDWT. Obtain the size of the reconstruction.

xrec2 = dlidwt(A,D, ...
    Wavelet=wv, ...
    PaddingMode=bdy);
size(xrec2,3)

ans = 518

Use the wavedec function to obtain the bookkeeping vector of a signal whose length equals the size of the dlarray signal. Use the same input parameters. Because dldwt uses zero padding boundary extension, set extmode to "zpd". Extract from the bookkeeping vector the size of the coefficients at level 2. Because FullTree is false, the size extracted from the bookkeeping vector does not equal the size of the reconstruction.

[~,l] = wavedec(sig,alevel,wv,extmode="zpd");
extractedSize = l(end-3+1)

extractedSize = 517

Use the dlidwt function to obtain the reconstruction at level 2, but this time specify ExpectedOutputSize to equal the extracted value.

xrec2 = dlidwt(A,D, ...
    Wavelet=wv, ...
    PaddingMode=bdy, ...
    ExpectedOutputSize=extractedSize);
size(xrec2,3)

ans = 517

Create a wavelet pooling layer. Set the layer properties to agree with the dldwt and dlidwt input arguments, including the expected output size. Create a Layer array containing a sequence input layer and the wavelet pooling layer. Convert the layer array to a dlnetwork object. Run the signal through the network. Confirm the size of the network output equals ExpectedOutputSize.

rlevel = 2;
wpl = waveletPooling1dLayer(Wavelet=wv, ...
    AnalysisLevel=alevel, ...
    ReconstructionLevel=rlevel, ...
    Boundary=bdy, ...
    ExpectedOutputSize=extractedSize);
layers = [
    sequenceInputLayer(ch,MinLength=N)
    wpl];
dlnet = dlnetwork(layers);
dlnetout = forward(dlnet,dlsig);
size(dlnetout,3)

ans = 517

More About

Discrete Wavelet Pooling

A discrete wavelet pooling layer performs downsampling by outputting the wavelet approximation at the specified level.

Williams and Li proposed a wavelet pooling scheme for convolutional neural networks [1]. The layer first obtains the DWT of the input down to level AnalysisLevel. The layer then uses the coarse-scale lowpass (scaling) coefficients and the detail coefficients at decomposition levels AnalysisLevel through ReconstructionLevel+1 to reconstruct the approximation at level ReconstructionLevel. In 1-D wavelet pooling, the layer pools over the "T" (time) dimension. In 2-D wavelet pooling, the layer pools over the two "S" (spatial) dimensions. This diagram illustrates the process for 2-D wavelet pooling.

Wavelet Pooling Scheme

Wolter and Garcke were the first to propose an adaptive wavelet pooling approach [2]. In adaptive learnable pooling, the main idea is to multiply the coefficients in each wavelet subband by a weight w and use those weighted subbands to obtain the modified approximation. Because each subband has a different weight, the model can learn which subbands are most relevant to the model’s objective. With the exception of the lowpass weight, Wolter and Garcke’s approach is conceptually similar to traditional wavelet denoising, except that their scheme uses gradient descent rather than another method to determine the threshold. The algorithm for 1-D pooling can be described as follows:

- Obtain the wavelet decomposition of the signal at level M.

- Multiply the lowpass coefficients cA_M and detail coefficients cD_M by the weights w_L and w_H, respectively.

- Compute the inverse DWT to obtain the modified approximation at level M-1.

For more information about the 1-D DWT, see wavedec.
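The three steps above can be sketched by hand for the Haar (db1) wavelet with M = 2. This is an illustration under simplifying assumptions, not the layer's implementation: the input length is a multiple of 4 so no boundary extension is needed, the weights are scalars, the sign convention of the detail coefficients may differ from the one the toolbox uses, and all variable names are hypothetical.

```matlab
x = [4 6 10 12 8 6 5 7];         % length divisible by 4, so no boundary extension is needed

% Step 1: forward Haar DWT down to level M = 2.
a1 = (x(1:2:end) + x(2:2:end))/sqrt(2);     % level-1 approximation
a2 = (a1(1:2:end) + a1(2:2:end))/sqrt(2);   % cA_M, lowpass coefficients at level 2
d2 = (a1(1:2:end) - a1(2:2:end))/sqrt(2);   % cD_M, detail coefficients at level 2

% Step 2: weight the subbands (scalar weights chosen for illustration).
wL = 1;
wH = 0.5;

% Step 3: single-level inverse Haar DWT gives the modified approximation at level M-1.
pooled = zeros(size(a1));
pooled(1:2:end) = (wL*a2 + wH*d2)/sqrt(2);
pooled(2:2:end) = (wL*a2 - wH*d2)/sqrt(2);

% With unit weights, the same inverse step returns the unmodified level-1 approximation.
ref = zeros(size(a1));
ref(1:2:end) = (a2 + d2)/sqrt(2);
ref(2:2:end) = (a2 - d2)/sqrt(2);
```

The pooled output has half as many samples as the input. Setting both weights to 1 reproduces nonadaptive pooling, while smaller values of wH attenuate the level-2 detail in the result.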

Layer Output Format

The output of waveletPooling1dLayer is a dlarray object with format "CBT". The size of the "T" (time) dimension depends on the size of the layer input along the same dimension and these layer properties: Wavelet, Boundary, AnalysisLevel, and ReconstructionLevel. If you want waveletPooling1dLayer to verify the output size along the time dimension, set ExpectedOutputSize.

If ReconstructionLevel is not zero and the difference between AnalysisLevel and ReconstructionLevel is greater than 1, by default the layer output size always equals the size you can determine using dldwt or wavedec (see Determine Expected Output Size of 1-D Discrete Wavelet Pooling Layer).

If ReconstructionLevel is 0 or the difference between AnalysisLevel and ReconstructionLevel is exactly 1, there is ambiguity regarding what the layer output size should be. The size of the coefficients of a single-level forward 1-D DWT is ceil(N/2), where N is the size of the input, for periodic boundary extension, and floor((N+LF-1)/2), where LF is the filter length, for reflection or zero padding boundary extension (see wavedec). The floor and ceiling functions introduce the ambiguity. When performing a single-level inverse 1-D DWT, the layer cannot determine the size of the original data that was decomposed. In this scenario, you can use dldwt or wavedec to obtain the expected output size. Otherwise, if you do not set ExpectedOutputSize, the layer output size and the value you determine might differ by one.
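The ambiguity can be seen directly from the two size formulas. The following sketch evaluates them for two input lengths that differ by one sample. It uses only base MATLAB functions; the function handle names are illustrative, and the filter length of 8 corresponds to the sym4 wavelet.

```matlab
% Single-level DWT coefficient sizes, using the formulas stated above.
sizePer = @(N) ceil(N/2);                  % periodic extension
sizeExt = @(N,LF) floor((N + LF - 1)/2);   % reflection or zero padding

LF = 8;                                    % sym4 filter length
c2047 = sizeExt(2047,LF);
c2048 = sizeExt(2048,LF);
sameSize = (c2047 == c2048);               % two input lengths, one coefficient size

% Periodic extension shows the same ambiguity through ceil.
p = [sizePer(2047) sizePer(2048)];
```

Because inputs of length 2047 and 2048 produce the same number of coefficients, a single-level inverse transform cannot tell which length to return unless you supply the expected output size.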

References

[1] Williams, Travis and Robert Y. Li. “Wavelet Pooling for Convolutional Neural Networks.” International Conference on Learning Representations (2018), https://openreview.net/pdf?id=rkhlb8lCZ.

[2] Wolter, Moritz, and Jochen Garcke. “Adaptive Wavelet Pooling for Convolutional Neural Networks.” In Proceedings of The 24th International Conference on Artificial Intelligence and Statistics, edited by Arindam Banerjee and Kenji Fukumizu, vol. 130. PMLR, 2021. https://proceedings.mlr.press/v130/wolter21a.html.

Version History

Introduced in R2026a

See Also

Apps

- Deep Network Designer (Deep Learning Toolbox)

Functions

Objects

waveletPooling2dLayer | modwtLayer | dlarray (Deep Learning Toolbox) | dlnetwork (Deep Learning Toolbox)

Topics

- Practical Introduction to Multiresolution Analysis

- List of Deep Learning Layers (Deep Learning Toolbox)
