kfoldLoss

Classification loss for observations not used in training

Description

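L = kfoldLoss(CVMdl) returns the cross-validated classification losses obtained by CVMdl, a cross-validated error-correcting output codes (ECOC) model composed of linear classification models. That is, for every fold, kfoldLoss estimates the classification error rate for observations that it holds out when it trains using all other observations. kfoldLoss applies the same data used to create CVMdl (see fitcecoc).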

L contains a classification loss for each regularization strength in the linear classification models that compose CVMdl.
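The hold-out procedure described above can be sketched in a few lines of Python. This is a toy illustration, not the MATLAB implementation: a hypothetical majority-class learner (train_majority) stands in for the ECOC model of linear classifiers, folds are assigned round-robin, and every observation has equal weight. The sketch returns both the per-fold error rates (analogous to Mode="individual") and the error pooled over all held-out observations.

```python
from collections import Counter


def train_majority(labels):
    """Hypothetical stand-in learner: predict the most common training label."""
    return Counter(labels).most_common(1)[0][0]


def kfold_errors(labels, k):
    """Return (per_fold_errors, pooled_error) for k round-robin folds.

    Observation i belongs to fold i % k. For each fold, the learner is trained
    on every other observation and evaluated on the held-out observations.
    """
    n = len(labels)
    folds = [[i for i in range(n) if i % k == f] for f in range(k)]
    per_fold = []
    total_wrong = 0
    for held_out in folds:
        held = set(held_out)
        train_labels = [labels[i] for i in range(n) if i not in held]
        prediction = train_majority(train_labels)
        wrong = sum(1 for i in held_out if labels[i] != prediction)
        per_fold.append(wrong / len(held_out))
        total_wrong += wrong
    return per_fold, total_wrong / n


per_fold, pooled = kfold_errors(["a", "a", "a", "b", "a", "a", "b", "a"], k=4)
print(per_fold, pooled)  # [0.0, 0.0, 0.5, 0.5] 0.25
```

Here every fold holds two observations, so the mean of the per-fold errors equals the pooled error; with unequal fold sizes or weights the two summaries can differ.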

L = kfoldLoss(CVMdl,Name,Value) uses additional options specified by one or more name-value arguments. For example, you can specify a decoding scheme, which folds to use for the loss calculation, or the verbosity level.

Input Arguments

CVMdl

Cross-validated ECOC model composed of linear classification models, specified as a ClassificationPartitionedLinearECOC model object. You can create a ClassificationPartitionedLinearECOC model using fitcecoc and by:

- Specifying any one of the cross-validation name-value arguments, for example, CrossVal
- Setting the name-value argument Learners to 'linear' or to a linear classification model template returned by templateLinear

To obtain estimates, kfoldLoss applies the same data used to cross-validate the ECOC model (X and Y).

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Before R2021a, use commas to separate each name and value, and enclose Name in quotes.

BinaryLoss

Binary learner loss function, specified as a built-in loss function name or a function handle.

This table contains names and descriptions of the built-in functions, where $y_j$ is the class label for a particular binary learner (in the set {–1,1,0}), $s_j$ is the score for observation j, and $g(y_j,s_j)$ is the binary loss formula.

| Value | Description | Score Domain | $g(y_j,s_j)$ |
|---|---|---|---|
| "binodeviance" | Binomial deviance | (–∞,∞) | $\log[1 + \exp(-2y_js_j)]/[2\log(2)]$ |
| "exponential" | Exponential | (–∞,∞) | $\exp(-y_js_j)/2$ |
| "hamming" | Hamming | [0,1] or (–∞,∞) | $[1 - \operatorname{sign}(y_js_j)]/2$ |
| "hinge" | Hinge | (–∞,∞) | $\max(0,1 - y_js_j)/2$ |
| "linear" | Linear | (–∞,∞) | $(1 - y_js_j)/2$ |
| "logit" | Logistic | (–∞,∞) | $\log[1 + \exp(-y_js_j)]/[2\log(2)]$ |
| "quadratic" | Quadratic | [0,1] | $[1 - y_j(2s_j - 1)]^2/2$ |

The software normalizes the binary losses such that the loss is 0.5 when $y_j = 0$. Also, the software calculates the mean binary loss for each class.
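For experimenting with these formulas outside MATLAB, the table can be transcribed into a small Python dictionary. This is only a sketch of the formulas as listed; the names BINARY_LOSSES and _sign are hypothetical, and the code does not reproduce how the software evaluates or aggregates the losses internally.

```python
import math


def _sign(x):
    """Return -1, 0, or 1 for a real scalar."""
    return (x > 0) - (x < 0)


# Binary loss g(y, s) for each built-in name in the table.
# y is the code in {-1, 0, 1}; s is the binary learner's score.
BINARY_LOSSES = {
    "binodeviance": lambda y, s: math.log(1 + math.exp(-2 * y * s)) / (2 * math.log(2)),
    "exponential": lambda y, s: math.exp(-y * s) / 2,
    "hamming": lambda y, s: (1 - _sign(y * s)) / 2,
    "hinge": lambda y, s: max(0.0, 1 - y * s) / 2,
    "linear": lambda y, s: (1 - y * s) / 2,
    "logit": lambda y, s: math.log(1 + math.exp(-y * s)) / (2 * math.log(2)),
    "quadratic": lambda y, s: (1 - y * (2 * s - 1)) ** 2 / 2,
}

# Every formula evaluates to 0.5 when y = 0, matching the stated normalization.
for name, g in BINARY_LOSSES.items():
    print(name, g(0, 0.7))
```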

For a custom binary loss function, for example, customFunction, specify its function handle: 'BinaryLoss',@customFunction. customFunction should have this form:

bLoss = customFunction(M,s)

where:

- M is the K-by-B coding matrix stored in Mdl.CodingMatrix.
- s is the 1-by-B row vector of classification scores.
- bLoss is the classification loss. This scalar aggregates the binary losses for every learner in a particular class. For example, you can use the mean binary loss to aggregate the loss over the learners for each class.
- K is the number of classes.
- B is the number of binary learners.

For an example of passing a custom binary loss function, see Predict Test-Sample Labels of ECOC Model Using Custom Binary Loss Function.

By default, if all binary learners are linear classification models using:

- SVM, then BinaryLoss is 'hinge'
- Logistic regression, then BinaryLoss is 'quadratic'

Example: 'BinaryLoss','binodeviance'

Data Types: char | string | function_handle

Decoding

Decoding scheme that aggregates the binary losses, specified as 'lossweighted' or 'lossbased'. For more information, see Binary Loss.

Example: 'Decoding','lossbased'

Folds

Fold indices to use for classification-score prediction, specified as a numeric vector of positive integers. The elements of Folds must range from 1 through CVMdl.KFold.

Example: Folds=[1 4 10]

Data Types: single | double

LossFun

Loss function, specified as 'classiferror', 'classifcost', or a function handle.

You can:

- Specify the built-in function 'classiferror'. In this case, the loss function is the classification error.
- Specify the built-in function 'classifcost'. In this case, the loss function is the observed misclassification cost. If you use the default cost matrix (whose element value is 0 for correct classification and 1 for incorrect classification), then the loss values for 'classifcost' and 'classiferror' are identical.
- Specify your own function using function handle notation.

For what follows, n is the number of observations in the training data (CVMdl.NumObservations) and K is the number of classes (numel(CVMdl.ClassNames)). Your function needs the signature lossvalue = lossfun(C,S,W,Cost), where:

- The output argument lossvalue is a scalar.
- You choose the function name (lossfun).
- C is an n-by-K logical matrix with rows indicating the class to which the corresponding observation belongs. The column order corresponds to the class order in CVMdl.ClassNames. Construct C by setting C(p,q) = 1 if observation p is in class q, for each row. Set every other element of row p to 0.
- S is an n-by-K numeric matrix of negated loss values for classes. Each row corresponds to an observation. The column order corresponds to the class order in CVMdl.ClassNames. S resembles the output argument NegLoss of kfoldPredict.
- W is an n-by-1 numeric vector of observation weights. If you pass W, the software normalizes its elements to sum to 1.
- Cost is a K-by-K numeric matrix of misclassification costs. For example, Cost = ones(K) - eye(K) specifies a cost of 0 for correct classification and 1 for misclassification.

Specify your function using 'LossFun',@lossfun.

Data Types: function_handle | char | string
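A custom loss function only has to reduce the four inputs C, S, W, and Cost to a scalar. The following Python sketch (hypothetical names default_cost and classif_cost, not part of any MATLAB API) computes the observed misclassification cost from those inputs. It assumes that the predicted class is the column of S with the largest value, since S holds negated losses, and that Cost is indexed as Cost[true class][predicted class]. With default_cost, the result reduces to the classification error, mirroring the statement that 'classifcost' and 'classiferror' agree for the default cost matrix.

```python
def default_cost(K):
    """Analog of ones(K) - eye(K): 0 for correct classification, 1 otherwise."""
    return [[0 if i == j else 1 for j in range(K)] for i in range(K)]


def classif_cost(C, S, W, Cost):
    """Weighted misclassification cost from lossfun(C,S,W,Cost)-style inputs.

    C: n rows of K 0/1 class indicators; S: n rows of K negated losses;
    W: n observation weights; Cost[i][j]: cost of predicting class j when the
    true class is i (assumed convention: row = true, column = predicted).
    """
    total = float(sum(W))  # normalize the weights to sum to 1
    loss = 0.0
    for c_row, s_row, w in zip(C, S, W):
        true_cls = c_row.index(1)
        # Largest negated loss = smallest loss = predicted class.
        pred_cls = max(range(len(s_row)), key=lambda q: s_row[q])
        loss += (w / total) * Cost[true_cls][pred_cls]
    return loss


C = [[1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 0, 0]]
S = [[-0.1, -0.5, -0.9], [-0.2, -0.6, -0.3], [-0.8, -0.7, -0.1], [-0.4, -0.3, -0.9]]
W = [1, 1, 1, 1]
print(classif_cost(C, S, W, default_cost(3)))  # 0.5, the classification error
```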

Mode

Loss aggregation level, specified as "average" or "individual".

| Value | Description |
|---|---|
| "average" | Returns losses averaged over all folds |
| "individual" | Returns losses for each fold |

Example: Mode="individual"

Options

Estimation options, specified as a structure array as returned by statset.

To invoke parallel computing, you need a Parallel Computing Toolbox™ license.

Example: Options=statset(UseParallel=true)

Data Types: struct

Verbose

Verbosity level, specified as 0 or 1. Verbose controls the number of diagnostic messages that the software displays in the Command Window.

If Verbose is 0, then the software does not display diagnostic messages. Otherwise, the software displays diagnostic messages.

Example: Verbose=1

Data Types: single | double

Output Arguments

L

Cross-validated classification losses, returned as a numeric scalar, vector, or matrix. The interpretation of L depends on LossFun.

Let R be the number of regularization strengths in the cross-validated models (numel(CVMdl.Trained{1}.BinaryLearners{1}.Lambda)) and F be the number of folds (stored in CVMdl.KFold).

- If Mode is 'average', then L is a 1-by-R vector. L(j) is the average classification loss over all folds of the cross-validated model that uses regularization strength j.
- Otherwise, L is an F-by-R matrix. L(i,j) is the classification loss for fold i of the cross-validated model that uses regularization strength j.

Examples

Load the NLP data set.

load nlpdata

X is a sparse matrix of predictor data, and Y is a categorical vector of class labels.

Cross-validate an ECOC model of linear classification models.

rng(1); % For reproducibility

CVMdl = fitcecoc(X,Y,'Learners','linear','CrossVal','on');

CVMdl is a ClassificationPartitionedLinearECOC model. By default, the software implements 10-fold cross validation.

Estimate the average of the out-of-fold classification error rates.

ce = kfoldLoss(CVMdl)

ce = 0.0958

Alternatively, you can obtain the per-fold classification error rates by specifying the name-value pair 'Mode','individual' in kfoldLoss.

Load the NLP data set. Transpose the predictor data.

load nlpdata

X = X';

For simplicity, use the label 'others' for all observations in Y that are not 'simulink', 'dsp', or 'comm'.

Y(~(ismember(Y,{'simulink','dsp','comm'}))) = 'others';

Create a linear classification model template that specifies optimizing the objective function using SpaRSA.

t = templateLinear('Solver','sparsa');

Cross-validate an ECOC model of linear classification models using 5-fold cross-validation. Optimize the objective function using SpaRSA. Specify that the predictor observations correspond to columns.

rng(1); % For reproducibility

CVMdl = fitcecoc(X,Y,'Learners',t,'KFold',5,'ObservationsIn','columns');

CMdl1 = CVMdl.Trained{1}

CMdl1 =

CompactClassificationECOC

ResponseName: 'Y'

ClassNames: [comm dsp simulink others]

ScoreTransform: 'none'

BinaryLearners: {6×1 cell}

CodingMatrix: [4×6 double]

Properties, Methods

CVMdl is a ClassificationPartitionedLinearECOC model. It contains the property Trained, which is a 5-by-1 cell array holding a CompactClassificationECOC model that the software trained using the training set of each fold.

Create a function that takes the minimal loss for each observation, and then averages the minimal losses across all observations. Because the function does not use the class-identifier matrix (C), observation weights (W), or classification cost (Cost), use ~ to have kfoldLoss ignore their positions.

lossfun = @(~,S,~,~)mean(min(-S,[],2));
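To see what this anonymous function computes, here is a line-by-line Python translation (the name min_loss_mean is hypothetical). Because S holds negated losses, -S recovers the losses, and each row minimum is the loss of the class that decoding would choose for that observation.

```python
def min_loss_mean(C, S, W, Cost):
    """Python analog of @(~,S,~,~)mean(min(-S,[],2)).

    C, W, and Cost are accepted but ignored, like the ~ placeholders.
    """
    # Negate S to recover losses, take each row's minimum, then average.
    row_minima = [min(-v for v in row) for row in S]
    return sum(row_minima) / len(row_minima)


S = [[-0.25, -0.5], [-0.75, -0.5]]
print(min_loss_mean(None, S, None, None))  # 0.375
```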

Estimate the average cross-validated classification loss using the minimal loss per observation function. Also, obtain the loss for each fold.

ce = kfoldLoss(CVMdl,'LossFun',lossfun)

ce = 0.0485

ceFold = kfoldLoss(CVMdl,'LossFun',lossfun,'Mode','individual')

ceFold = 5×1

0.0488

0.0511

0.0496

0.0479

0.0452

To determine a good lasso-penalty strength for an ECOC model composed of linear classification models that use logistic regression learners, implement 5-fold cross-validation.

Load the NLP data set.

load nlpdata

X is a sparse matrix of predictor data, and Y is a categorical vector of class labels.

For simplicity, use the label 'others' for all observations in Y that are not 'simulink', 'dsp', or 'comm'.

Y(~(ismember(Y,{'simulink','dsp','comm'}))) = 'others';

Create a set of 11 logarithmically spaced regularization strengths from $10^{-7}$ through $10^{-2}$.

Lambda = logspace(-7,-2,11);

Create a linear classification model template that specifies to use logistic regression learners, use lasso penalties with strengths in Lambda, train using SpaRSA, and lower the tolerance on the gradient of the objective function to 1e-8.

t = templateLinear('Learner','logistic','Solver','sparsa','Regularization','lasso','Lambda',Lambda,'GradientTolerance',1e-8);

Cross-validate the models. To increase execution speed, transpose the predictor data and specify that the observations are in columns.

X = X';

rng(10); % For reproducibility

CVMdl = fitcecoc(X,Y,'Learners',t,'ObservationsIn','columns','KFold',5);

CVMdl is a ClassificationPartitionedLinearECOC model.

Dissect CVMdl, and each model within it.

numECOCModels = numel(CVMdl.Trained)

numECOCModels = 5

ECOCMdl1 = CVMdl.Trained{1}

ECOCMdl1 =

CompactClassificationECOC

ResponseName: 'Y'

ClassNames: [comm dsp simulink others]

ScoreTransform: 'none'

BinaryLearners: {6×1 cell}

CodingMatrix: [4×6 double]

Properties, Methods

numCLModels = numel(ECOCMdl1.BinaryLearners)

numCLModels = 6

CLMdl1 = ECOCMdl1.BinaryLearners{1}

CLMdl1 =

ClassificationLinear

ResponseName: 'Y'

ClassNames: [-1 1]

ScoreTransform: 'logit'

Beta: [34023×11 double]

Bias: [-0.3169 -0.3169 -0.3168 -0.3168 -0.3168 -0.3167 -0.1725 -0.0805 -0.1762 -0.3450 -0.5174]

Lambda: [1.0000e-07 3.1623e-07 1.0000e-06 3.1623e-06 1.0000e-05 3.1623e-05 1.0000e-04 3.1623e-04 1.0000e-03 0.0032 0.0100]

Learner: 'logistic'

Properties, Methods

Because fitcecoc implements 5-fold cross-validation, CVMdl contains a 5-by-1 cell array of CompactClassificationECOC models that the software trains on each fold. The BinaryLearners property of each CompactClassificationECOC model contains the ClassificationLinear models. The number of ClassificationLinear models within each compact ECOC model depends on the number of distinct labels and coding design. Because Lambda is a sequence of regularization strengths, you can think of CLMdl1 as 11 models, one for each regularization strength in Lambda.

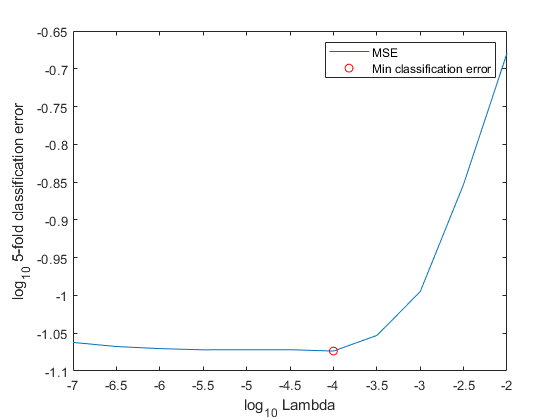

Determine how well the models generalize by plotting the averages of the 5-fold classification error for each regularization strength. Identify the regularization strength that minimizes the generalization error over the grid.

ce = kfoldLoss(CVMdl);

figure;

plot(log10(Lambda),log10(ce))

[~,minCEIdx] = min(ce);

minLambda = Lambda(minCEIdx);

hold on

plot(log10(minLambda),log10(ce(minCEIdx)),'ro');

ylabel('log_{10} 5-fold classification error')

xlabel('log_{10} Lambda')

legend('5-fold classification error','Min classification error')

hold off
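The grid and the selection step can be mirrored in Python to make the indexing explicit. This sketch reimplements logspace for illustration (the helper name is hypothetical) and uses made-up error values in place of kfoldLoss output. Python indices start at 0, so an index of 6 here corresponds to MATLAB index 7.

```python
def logspace(a, b, n):
    """n points evenly spaced in log10 from 10**a to 10**b, like MATLAB logspace(a,b,n)."""
    step = (b - a) / (n - 1)
    return [10 ** (a + i * step) for i in range(n)]


lambdas = logspace(-7, -2, 11)

# Hypothetical cross-validated errors, one per regularization strength.
# These numbers are made up for illustration; they are not the example's results.
ce = [0.08, 0.08, 0.07, 0.07, 0.06, 0.05, 0.04, 0.05, 0.07, 0.12, 0.20]

# Like [~,minCEIdx] = min(ce): the index of the first minimal error.
min_idx = min(range(len(ce)), key=ce.__getitem__)
min_lambda = lambdas[min_idx]
print(min_idx, min_lambda)
```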

Train an ECOC model composed of linear classification models using the entire data set, and specify the regularization strength that minimizes the cross-validated classification error (minLambda).

t = templateLinear('Learner','logistic','Solver','sparsa','Regularization','lasso','Lambda',minLambda,'GradientTolerance',1e-8);

MdlFinal = fitcecoc(X,Y,'Learners',t,'ObservationsIn','columns');

To estimate labels for new observations, pass MdlFinal and the new data to predict.

More About

Classification Error

The classification error has the form

$$L = \sum_{j=1}^{n} w_j e_j,$$

where:

- $w_j$ is the weight for observation j. The software renormalizes the weights to sum to 1.
- $e_j = 1$ if the predicted class of observation j differs from its true class, and 0 otherwise.

In other words, the classification error is the proportion of observations misclassified by the classifier.

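Observed Misclassification Cost

The observed misclassification cost has the form

$$L = \sum_{j=1}^{n} w_j c_{y_j \hat{y}_j},$$

where:

- $w_j$ is the weight for observation j. The software renormalizes the weights to sum to 1.
- $c_{y_j \hat{y}_j}$ is the user-specified cost of classifying an observation into class $\hat{y}_j$ when its true class is $y_j$.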
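A small worked example may help connect the two formulas. Assume (all values hypothetical) three observations with raw weights 1, 1, and 2, that only observation 3 is misclassified, that correct classifications cost 0, and that the cost of the misclassification of observation 3 is $c_{y_3 \hat{y}_3} = 3$.

```latex
\begin{aligned}
w &= \tfrac{1}{1+1+2}\,(1,\,1,\,2) = (0.25,\,0.25,\,0.5), \qquad e = (0,\,0,\,1),\\
L_{\text{error}} &= \sum_{j=1}^{3} w_j e_j = 0.25 \cdot 0 + 0.25 \cdot 0 + 0.5 \cdot 1 = 0.5,\\
L_{\text{cost}} &= \sum_{j=1}^{3} w_j\, c_{y_j \hat{y}_j} = 0.25 \cdot 0 + 0.25 \cdot 0 + 0.5 \cdot 3 = 1.5.
\end{aligned}
```

If every misclassification cost 1 (the default cost matrix), $L_{\text{cost}}$ would equal $L_{\text{error}}$.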

Binary Loss

The binary loss is a function of the class and classification score that determines how well a binary learner classifies an observation into the class. The decoding scheme of an ECOC model specifies how the software aggregates the binary losses and determines the predicted class for each observation.

Assume the following:

- $m_{kj}$ is element (k,j) of the coding design matrix M, that is, the code corresponding to class k of binary learner j. M is a K-by-B matrix, where K is the number of classes, and B is the number of binary learners.
- $s_j$ is the score of binary learner j for an observation.
- g is the binary loss function.
- $\hat{k}$ is the predicted class for the observation.

The software supports two decoding schemes:

- Loss-based decoding [2] (Decoding is "lossbased"): The predicted class of an observation corresponds to the class that produces the minimum average of the binary losses over all binary learners.

$$\hat{k} = \operatorname*{argmin}_{k} \frac{1}{B} \sum_{j=1}^{B} |m_{kj}|\, g(m_{kj}, s_j)$$

- Loss-weighted decoding [3] (Decoding is "lossweighted"): The predicted class of an observation corresponds to the class that produces the minimum average of the binary losses over the binary learners for the corresponding class.

$$\hat{k} = \operatorname*{argmin}_{k} \frac{\sum_{j=1}^{B} |m_{kj}|\, g(m_{kj}, s_j)}{\sum_{j=1}^{B} |m_{kj}|}$$

The denominator corresponds to the number of binary learners for class k. [1] suggests that loss-weighted decoding improves classification accuracy by keeping loss values for all classes in the same dynamic range.

The predict, resubPredict, and kfoldPredict functions return the negated value of the objective function of argmin as the second output argument (NegLoss) for each observation and class.
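The two decoding rules and the NegLoss output can be sketched in Python for a single observation. This illustrates the formulas under stated assumptions and is not the software's implementation: decode and hinge are hypothetical names, the coding matrix is a three-class one-versus-one design chosen for the example, and the hinge formula is taken from the binary loss table.

```python
def decode(M, s, g, scheme="lossweighted"):
    """Return (predicted class index, negated per-class losses) for one observation.

    M: K-by-B coding matrix (list of K rows, entries in {-1, 0, 1}).
    s: the B binary-learner scores for the observation.
    g: binary loss function g(m, s).
    """
    K, B = len(M), len(s)
    losses = []
    for k in range(K):
        # Learners with code 0 for class k contribute nothing (|m_kj| = 0).
        num = sum(abs(M[k][j]) * g(M[k][j], s[j]) for j in range(B))
        if scheme == "lossbased":
            losses.append(num / B)
        elif scheme == "lossweighted":
            losses.append(num / sum(abs(m) for m in M[k]))
        else:
            raise ValueError("scheme must be 'lossbased' or 'lossweighted'")
    predicted = min(range(K), key=losses.__getitem__)  # argmin over classes
    return predicted, [-v for v in losses]


def hinge(m, s):
    """Hinge binary loss from the table: max(0, 1 - m*s)/2."""
    return max(0.0, 1 - m * s) / 2


# One-versus-one design for 3 classes: learners (1 vs 2), (1 vs 3), (2 vs 3).
M = [[1, 1, 0],
     [-1, 0, 1],
     [0, -1, -1]]
s = [0.8, 0.5, -0.4]
pred, neg_loss = decode(M, s, hinge)
print(pred, neg_loss)
```

Every class in a one-versus-one design is covered by the same number of learners (K - 1), so both schemes pick the same class here; they can disagree for sparse designs in which classes are covered by different numbers of learners.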

This table summarizes the supported binary loss functions, where $y_j$ is a class label for a particular binary learner (in the set {–1,1,0}), $s_j$ is the score for observation j, and $g(y_j,s_j)$ is the binary loss function.

| Value | Description | Score Domain | $g(y_j,s_j)$ |
|---|---|---|---|
| "binodeviance" | Binomial deviance | (–∞,∞) | $\log[1 + \exp(-2y_js_j)]/[2\log(2)]$ |
| "exponential" | Exponential | (–∞,∞) | $\exp(-y_js_j)/2$ |
| "hamming" | Hamming | [0,1] or (–∞,∞) | $[1 - \operatorname{sign}(y_js_j)]/2$ |
| "hinge" | Hinge | (–∞,∞) | $\max(0,1 - y_js_j)/2$ |
| "linear" | Linear | (–∞,∞) | $(1 - y_js_j)/2$ |
| "logit" | Logistic | (–∞,∞) | $\log[1 + \exp(-y_js_j)]/[2\log(2)]$ |
| "quadratic" | Quadratic | [0,1] | $[1 - y_j(2s_j - 1)]^2/2$ |

The software normalizes binary losses so that the loss is 0.5 when yj = 0, and aggregates using the average of the binary learners [1].

Do not confuse the binary loss with the overall classification loss (specified by the LossFun name-value argument of the kfoldLoss and kfoldPredict object functions), which measures how well an ECOC classifier performs as a whole.

References

[1] Allwein, E., R. Schapire, and Y. Singer. “Reducing multiclass to binary: A unifying approach for margin classifiers.” Journal of Machine Learning Research. Vol. 1, 2000, pp. 113–141.

[2] Escalera, S., O. Pujol, and P. Radeva. “Separability of ternary codes for sparse designs of error-correcting output codes.” Pattern Recog. Lett. Vol. 30, Issue 3, 2009, pp. 285–297.

[3] Escalera, S., O. Pujol, and P. Radeva. “On the decoding process in ternary error-correcting output codes.” IEEE Transactions on Pattern Analysis and Machine Intelligence. Vol. 32, Issue 7, 2010, pp. 120–134.

Extended Capabilities

To run in parallel, specify the Options name-value argument in the call to this function and set the UseParallel field of the options structure to true using statset:

Options=statset(UseParallel=true)

For more information about parallel computing, see Run MATLAB Functions with Automatic Parallel Support (Parallel Computing Toolbox).

This function fully supports GPU arrays. For more information, see Run MATLAB Functions on a GPU (Parallel Computing Toolbox).

Version History

Introduced in R2016a

Starting in R2023b, several classification model object functions, including kfoldLoss, use observations with missing predictor values as part of resubstitution ("resub") and cross-validation ("kfold") computations for classification edges, losses, margins, and predictions.

In previous releases, the software omitted observations with missing predictor values from the resubstitution and cross-validation computations.

See Also

ClassificationPartitionedLinearECOC | ClassificationECOC | ClassificationLinear | loss | kfoldPredict | fitcecoc | statset
