Perform Instance Segmentation Using Mask R-CNN

This example shows how to segment individual instances of people and cars using a multiclass mask region-based convolutional neural network (R-CNN).

Instance segmentation is a computer vision technique in which you detect and localize objects while simultaneously generating a segmentation map for each of the detected instances. This example first shows how to perform instance segmentation using a pretrained Mask R-CNN that detects two classes. Then, you can optionally download a data set and train a multiclass Mask R-CNN using transfer learning.

Load Pretrained Network

Specify dataFolder as the desired location of the pretrained network and data.

```matlab
dataFolder = fullfile(tempdir,"coco");
```

Download the pretrained Mask R-CNN. The network is stored as a maskrcnn object.

```matlab
trainedMaskRCNN_url = "https://www.mathworks.com/supportfiles/vision/data/maskrcnn_object_person_car_v2.mat";
downloadTrainedMaskRCNN(trainedMaskRCNN_url,dataFolder);
load(fullfile(dataFolder,"maskrcnn_object_person_car_v2.mat"));
```

Segment People in Image

Read a test image that contains objects of the target classes.

```matlab
imTest = imread("visionteam.jpg");
```

Segment the objects and their masks using the segmentObjects function. Before performing prediction, the segmentObjects function performs these preprocessing steps on the input image:

- Zero-center the image using the COCO data set mean.

- Resize the image to the input size of the network while maintaining the aspect ratio (letterboxing).
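The letterboxing step can be pictured with a small arithmetic sketch. It computes one uniform scale factor and the padding needed to fit an image into an 800-by-800 input. The variable names, the example image size, and the centered padding are assumptions for illustration; this is not the internal implementation of segmentObjects.

```matlab
% Hypothetical letterbox geometry: fit an H-by-W image into a square
% network input without changing its aspect ratio (centered padding assumed).
inputSize = [800 800];          % assumed network input height and width
imageSize = [429 640];          % height and width of an example image

% Use the same scale for both dimensions so the aspect ratio is preserved.
scale = min(inputSize ./ imageSize);
resizedSize = round(imageSize * scale);

% Split the leftover rows and columns between the two sides.
padTotal = inputSize - resizedSize;
padBefore = floor(padTotal / 2);   % top and left padding
padAfter = padTotal - padBefore;   % bottom and right padding

fprintf("scale = %.4f, resized = %dx%d\n", scale, resizedSize(1), resizedSize(2));
fprintf("padding [top bottom] = [%d %d], [left right] = [%d %d]\n", ...
    padBefore(1), padAfter(1), padBefore(2), padAfter(2));
```

For a 429-by-640 image, the width limits the scale to 1.25, so the image is resized to 536-by-800 and padded with 132 rows above and below.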

```matlab
[masks,labels,scores,boxes] = segmentObjects(net,imTest,Threshold=0.8);
```

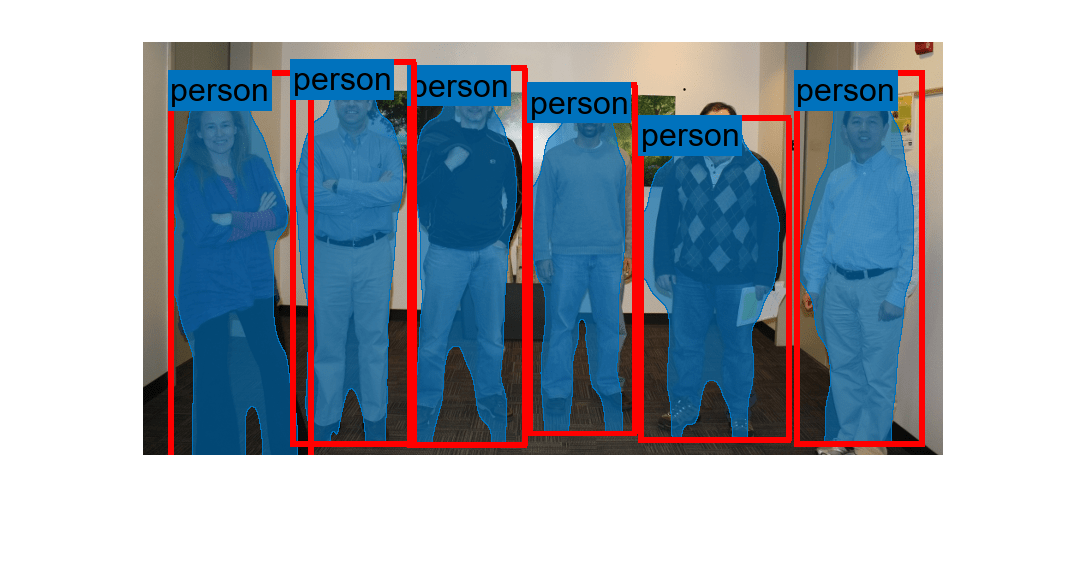

Visualize the predictions by overlaying the detected masks on the image using the insertObjectMask function.

```matlab
overlayedImage = insertObjectMask(imTest,masks);
imshow(overlayedImage)
```

Show the bounding boxes and labels on the objects.

```matlab
showShape("rectangle",gather(boxes),Label=labels,LineColor="r")
```

Download Training Data

The COCO 2014 training images data set [2] consists of 82,783 images. The annotations archive contains several annotation files; this example uses the instance annotations, which specify object polygons and class labels. Download the COCO 2014 training images and annotations from https://cocodataset.org/#download by clicking the "2014 Train images" and "2014 Train/Val annotations" links, respectively. Extract the image and annotation files into the dataFolder created earlier, and then specify the locations of the image and annotation folders.

```matlab
imageFolder = fullfile(dataFolder,"train2014");
annotationFolder = fullfile(dataFolder,"annotations");
```

Specify the name of the COCO JSON file that defines the training data ground truth.

```matlab
annotationFile = fullfile(annotationFolder,"instances_train2014.json");
```

Prepare Data for Training

To train a Mask R-CNN, you need this data:

- RGB images that serve as input to the network, specified as H-by-W-by-3 numeric arrays.

- Bounding boxes for objects in the RGB images, specified as NumObjects-by-4 matrices, with rows in the format [x y w h].

- Instance labels, specified as NumObjects-by-1 string vectors.

- Instance masks. Each mask is the segmentation of one instance in the image. The COCO data set specifies object instances using polygon coordinates formatted as NumObjects-by-2 cell arrays. Each row of the array contains the (x,y) coordinates of a polygon along the boundary of one instance in the image. However, the Mask R-CNN in this example requires binary masks specified as logical arrays of size H-by-W-by-NumObjects.
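To make these four pieces concrete, here is a minimal sketch that builds one training sample from two made-up polygons. The image size, polygon vertices, and class assignments are synthetic assumptions. Each box is derived from the polygon extents in [x y w h] form, and each mask comes from poly2mask, which requires Image Processing Toolbox.

```matlab
% Minimal sketch with synthetic data: build one sample in the
% {image, boxes, labels, masks} layout listed above.
H = 100; W = 120;
I = zeros(H, W, 3, 'uint8');              % placeholder RGB image

% Two hypothetical polygons, each an N-by-2 array of (x,y) vertices.
polygons = {[10 10; 50 10; 50 40; 10 40], [60 20; 100 20; 80 70]};
classNames = ["person"; "car"];           % one label per polygon

numObjects = numel(polygons);
boxes = zeros(numObjects, 4);
masks = false(H, W, numObjects);
for k = 1:numObjects
    poly = polygons{k};
    minxy = min(poly, [], 1);             % smallest x and y
    maxxy = max(poly, [], 1);             % largest x and y
    boxes(k,:) = [minxy, maxxy - minxy];  % [x y w h]
    masks(:,:,k) = poly2mask(poly(:,1), poly(:,2), H, W);
end

sample = {I, boxes, classNames, masks};
disp(boxes)
```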

Initialize Training Data Parameters

```matlab
trainClassNames = ["person","car"];
numClasses = length(trainClassNames);
imageSizeTrain = [800 800 3];
```

Load COCO Ground Truth Data

Use the groundTruthFromCOCO function to load the ground truth data stored in the COCO JSON file into a groundTruth object.

```matlab
gTruthAllClasses = groundTruthFromCOCO(annotationFile,imageFolder);
```

Select the ground truth data for the "person" and "car" classes.

```matlab
gTruth = selectLabelsByName(gTruthAllClasses,trainClassNames);
```

To visualize and review the ground truth data, use the Image Labeler app.

```matlab
imageLabeler(gTruth)
```

Create Datastore

The trainMaskRCNN function expects input data as a 1-by-4 cell array containing the RGB training image, bounding boxes, instance labels, and instance masks. The ground truth data is stored as polygons, so you must convert the polygon data into binary instance masks and axis-aligned bounding boxes for training. To do this, load the image and label data into datastores, and then apply a transform that performs the conversion.

Use imageDatastore to load the training images.

```matlab
imds = imageDatastore(gTruth.DataSource.Source);
```

Gather all the polygon data from the groundTruth object and group it by label type so that all the polygon data for all classes is combined.

```matlab
labelData = gatherLabelData(gTruth,labelType.Polygon,GroupLabelData="LabelType");
```

Identify which images have label data, and keep only those rows.

```matlab
hasData = rowfun(@(x)~isempty(x{1}),labelData{1});
imds = subset(imds,hasData{:,1});
labels = labelData{1}(hasData{:,1},:);
```

Create an arrayDatastore to read the label data.

```matlab
labelDS = arrayDatastore(labels{:,1},IterationDimension=1,OutputType="same");
```

Combine the image and label data, and apply a transform that converts each polygon into a bounding box and a binary mask, as required for training Mask R-CNN. Then preview the result.

```matlab
ds = combine(imds,labelDS);
tds = transform(ds,@(data,info)helperPolygonToMaskTransform(data,info),IncludeInfo=true);
data = preview(tds)
```

```
data=1×4 cell array
    429×640×3 uint8    [4.8200,2.8900,478.1700,302.7100]    "person"    429×640 logical
```

To speed up training, write the binary instance masks to disk once instead of computing them each time the training data is read. The binary masks, bounding boxes, and labels for each image are written into a MAT file whose name matches the name of the corresponding image.

```matlab
labelDataFolder = fullfile(dataFolder,"masksBoxesAndLabels");
if ~isfolder(labelDataFolder)
    mkdir(labelDataFolder);
    writeall(tds,labelDataFolder, ...
        WriteFcn=@(data,info,fmt)helperWriteMaskBoxesAndLabels(data,info,fmt,trainClassNames));
end
```
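To see what each MAT file holds, this sketch writes and reads back one hypothetical labelData struct with the same fields (Boxes, Labels, Masks) that helperWriteMaskBoxesAndLabels saves and helperReadMaskBoxesAndLabels returns. The file name and values are made up for illustration.

```matlab
% Hypothetical label data for one image with a single "person" instance.
labelData.Boxes = [10 10 40 30];                          % [x y w h]
labelData.Labels = categorical("person", ["person" "car"]);
labelData.Masks = false(100, 120, 1);
labelData.Masks(10:40, 10:50, 1) = true;

% Write the struct to a temporary MAT file (assumed name), then read it back.
matFile = fullfile(tempdir, "exampleLabelData.mat");
save(matFile, "labelData");
loaded = load(matFile);

% Same {boxes, labels, masks} order as the read function in this example.
roundTrip = {loaded.labelData.Boxes, loaded.labelData.Labels, loaded.labelData.Masks};
delete(matFile);
```

Storing Labels as categorical with the full class list keeps every file consistent, even when an image contains only one of the classes.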

Create a fileDatastore to read MAT files during training.

```matlab
labelDS = fileDatastore(labelDataFolder, ...
    IncludeSubfolders=true, ...
    ReadFcn=@helperReadMaskBoxesAndLabels, ...
    UniformRead=true);
```

Sort the image files and the MAT files so that both datastores list them in the same order.

```matlab
[~,ordImgs] = sortrows(imds.Files);
[~,ordLabels] = sortrows(labelDS.Files);
imds = subset(imds,ordImgs);
labelDS = subset(labelDS,ordLabels);
```
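Because each list is sorted by full path, the image and MAT files line up only when the base names sort the same way in both folders. This sketch, which uses hypothetical paths and sort in place of sortrows, shows one way to check that the i-th image and the i-th MAT file share a base name after sorting.

```matlab
% Hypothetical file lists, deliberately out of order.
imgFiles = {'/data/train2014/COCO_b.jpg'; '/data/train2014/COCO_a.jpg'};
labelFiles = {'/data/labels/COCO_a.mat'; '/data/labels/COCO_b.mat'};

% Sort each list independently, as the datastores are sorted above.
[~, ordImgs] = sort(imgFiles);
[~, ordLabels] = sort(labelFiles);
imgFiles = imgFiles(ordImgs);
labelFiles = labelFiles(ordLabels);

% Compare base names (no folder, no extension) element by element.
[~, imgNames] = cellfun(@fileparts, imgFiles, 'UniformOutput', false);
[~, labelNames] = cellfun(@fileparts, labelFiles, 'UniformOutput', false);
isAligned = isequal(imgNames, labelNames);
fprintf("Files aligned: %d\n", isAligned);
```

Applied to imds.Files and labelDS.Files, the same comparison can catch a mismatch before training starts.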

Combine the image and label datastores, and preview the first sample.

```matlab
ds = combine(imds,labelDS);
preview(ds)
```

```
ans=1×4 cell array
    640×429×3 uint8    [31.8000,169.8300,251.3700,401.8600;31.8000,169.8300,251.3700,401.8600]    2×1 categorical    640×429×2 logical
```
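In a preview like this one, the number of boxes, labels, and mask planes should agree, and the masks should match the image height and width. The sketch below expresses those checks as a hypothetical anonymous function, isValidSample, and runs it on synthetic data with the same sizes as the preview; the box values and class names are made up.

```matlab
% Hypothetical consistency check for one {image, boxes, labels, masks} sample.
isValidSample = @(d) size(d{2}, 2) == 4 && ...
    size(d{2}, 1) == numel(d{3}) && ...
    size(d{4}, 3) == numel(d{3}) && ...
    isequal(size(d{4}, [1 2]), size(d{1}, [1 2]));

% Synthetic sample sized like the preview above (two objects).
I = zeros(640, 429, 3, 'uint8');
boxes = [30 160 250 400; 40 170 200 300];
labels = categorical(["person"; "car"]);
masks = false(640, 429, 2);

ok = isValidSample({I, boxes, labels, masks});
bad = isValidSample({I, boxes(1,:), labels, masks});   % box count mismatch
fprintf("valid: %d, mismatched: %d\n", ok, bad);
```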

Configure Mask R-CNN Network

The Mask R-CNN builds upon a Faster R-CNN with a ResNet-50 base network. To perform transfer learning with the pretrained Mask R-CNN, use the maskrcnn object to load the pretrained network and to customize it for the new set of classes and input size. By default, the maskrcnn object uses the same anchor boxes that were used for training on the COCO data set.

```matlab
net = maskrcnn("resnet50-coco",trainClassNames,InputSize=imageSizeTrain)
```

```
net = 
  maskrcnn with properties:

      ModelName: 'maskrcnn'
     ClassNames: {'person'  'car'}
      InputSize: [800 800 3]
    AnchorBoxes: [15×2 double]
```

If you want to use custom anchor boxes specific to the training data set, you can estimate the anchor boxes using the estimateAnchorBoxes function. Then, specify the anchor boxes using the AnchorBoxes name-value argument when you create the maskrcnn object.

Train Network

Specify the options for SGDM optimization and train the network for 10 epochs.

Specify the ExecutionEnvironment name-value argument as "gpu" to train on a GPU. We recommend using a GPU with at least 12 GB of available memory. Using a GPU requires Parallel Computing Toolbox™ and a CUDA®-enabled NVIDIA® GPU. For more information, see GPU Computing Requirements (Parallel Computing Toolbox).

```matlab
options = trainingOptions("sgdm", ...
    InitialLearnRate=0.001, ...
    LearnRateSchedule="piecewise", ...
    LearnRateDropPeriod=1, ...
    LearnRateDropFactor=0.95, ...
    Plot="none", ...
    Momentum=0.9, ...
    MaxEpochs=10, ...
    MiniBatchSize=2, ...
    BatchNormalizationStatistics="moving", ...
    ResetInputNormalization=false, ...
    ExecutionEnvironment="gpu", ...
    VerboseFrequency=50);
```
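With a piecewise schedule, the learning rate is multiplied by LearnRateDropFactor every LearnRateDropPeriod epochs, so with these options the rate in epoch e is 0.001 × 0.95^(e−1). This sketch tabulates that schedule using only base MATLAB; the values it produces match the LearnRate column of the training log in this example at epochs 3, 5, 7, and 9.

```matlab
% Learning-rate schedule implied by the trainingOptions values above.
initialLearnRate = 0.001;
dropFactor = 0.95;
dropPeriod = 1;
maxEpochs = 10;

% The rate drops once per completed drop period.
epochs = 1:maxEpochs;
learnRates = initialLearnRate * dropFactor .^ floor((epochs - 1) / dropPeriod);
disp(table(epochs', learnRates', VariableNames=["Epoch" "LearnRate"]))
```

A drop factor close to 1 gives a gentle decay: after 10 epochs, the rate is still about 63% of its initial value.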

To train the Mask R-CNN network, set the doTraining variable in the following code to true. Train the network using the trainMaskRCNN function. Because the training data set is similar to the data that the pretrained network is trained on, you can freeze the weights of the feature extraction backbone using the FreezeSubNetwork name-value argument.

```matlab
doTraining = false;
if doTraining
    [net,info] = trainMaskRCNN(ds,net,options,FreezeSubNetwork="backbone");
    modelDateTime = string(datetime("now",Format="yyyy-MM-dd-HH-mm-ss"));
    save("trainedMaskRCNN-"+modelDateTime+".mat","net");
end
```

```
Epoch    Iteration    TimeElapsed    LearnRate     TrainingLoss    TrainingRPNLoss    TrainingRMSE    TrainingMaskLoss
_____    _________    ___________    __________    ____________    _______________    ____________    ________________
  3          50        00:00:57      0.0009025        1.181           0.63955           0.013308          0.35861
  5         100        00:01:54      0.00081451       0.94322         0.47035           0.010836          0.33167
  7         150        00:02:49      0.00073509       0.6531          0.25798           0.0098662         0.27047
  9         200        00:03:45      0.00066342       1.5889          0.68232           0.007739          0.67676
```

Using the trained network, you can perform instance segmentation on test images, as demonstrated in the "Segment People in Image" section of this example.

Supporting Functions

```matlab
function [out,info] = helperPolygonToMaskTransform(data,info)
% Transform polygons into binary instance masks and bounding boxes.
out = cell(size(data,1),4);
for i = 1:size(data,1)
    I = data{i,1};
    annotationStruct = data{i,2};
    if isempty(annotationStruct)
        maskset = false(0,0,0);
        boxes = zeros(0,4);
        labels = createArray(0,1,Like="");
    else
        % Convert polygons into a set of binary masks and a set of
        % bounding boxes.
        [maskset,boxes,labels] = helperPolygonsToMaskSetAndBoxes(annotationStruct,size(I));
    end
    % Package the output in the format required for training.
    out{i,1} = I;
    out{i,2} = boxes;
    out{i,3} = labels;
    out{i,4} = maskset;
end
end

function [maskset,boxes,labels] = helperPolygonsToMaskSetAndBoxes(s,sizeI)
% Transform polygons into binary instance masks and bounding boxes.
% The input s is an M-by-N cell array. Each row contains ROI data for a
% particular class.
numInstances = sum(cellfun(@(x)numel(x),s(:,1)));
maskset = false([sizeI(1:2) numInstances]);

% Generate labels for each instance.
labels = cell(size(s,1),1);
for i = 1:size(s,1)
    roiData = s{i,1};
    labels{i} = repmat(string(s{i,2}),numel(roiData),1);
end
labels = reshape(vertcat(labels{:}),[],1);

% Convert each polygon into a binary instance mask and a bounding box.
s = [s{:,1}];
boxes = zeros(numInstances,4);
for i = 1:numInstances
    poly = s(i).Position;               % vertices of the i-th instance
    minxy = min(poly,[],1);
    maxxy = max(poly,[],1);
    boxes(i,:) = [minxy maxxy-minxy];   % [x y w h]
    maskset(:,:,i) = poly2mask(poly(:,1),poly(:,2),sizeI(1),sizeI(2));
end
end

function helperWriteMaskBoxesAndLabels(data,writeInfo,~,classes)
% Write binary instance masks, boxes, and labels into a MAT file.
[fPath,fName,~] = fileparts(writeInfo.SuggestedOutputName);
labelData.Boxes = data{2};
labelData.Labels = categorical(string(data{3}),classes);
labelData.Masks = data{4};
save(fullfile(fPath,fName+".mat"),"labelData");
end

function data = helperReadMaskBoxesAndLabels(filename)
% Read instance masks, boxes, and labels from a MAT file.
loaded = load(filename);
data = cell(1,3);
data{1} = loaded.labelData.Boxes;
data{2} = loaded.labelData.Labels;
data{3} = loaded.labelData.Masks;
end
```

References

[1] He, Kaiming, Georgia Gkioxari, Piotr Dollár, and Ross Girshick. “Mask R-CNN.” Preprint, submitted January 24, 2018. https://arxiv.org/abs/1703.06870.

[2] Lin, Tsung-Yi, Michael Maire, Serge Belongie, Lubomir Bourdev, Ross Girshick, James Hays, Pietro Perona, Deva Ramanan, C. Lawrence Zitnick, and Piotr Dollár. “Microsoft COCO: Common Objects in Context.” Preprint, May 1, 2014. https://arxiv.org/abs/1405.0312v3.

See Also

maskrcnn | trainMaskRCNN | segmentObjects | transform | insertObjectMask

Topics

- Perform Instance Segmentation Using SOLOv2

- Getting Started with Mask R-CNN for Instance Segmentation

- Deep Learning in MATLAB (Deep Learning Toolbox)

- Datastores for Deep Learning (Deep Learning Toolbox)