dlgradient

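Compute gradients for custom training loops using automatic differentiation

Description

The dlgradient function uses automatic differentiation to compute derivatives.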

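Tip

For most deep learning tasks, you can use a pretrained neural network and adapt it to your own data. For an example showing how to use transfer learning to retrain a convolutional neural network to classify a new set of images, see Retrain Neural Network to Classify New Images. Alternatively, you can create and train neural networks from scratch using the trainnet and trainingOptions functions.

If the trainingOptions function does not provide the training options that you need for your task, then you can create a custom training loop using automatic differentiation. To learn more, see Train Network Using Custom Training Loop.

If the trainnet function does not provide the loss function that you need for your task, then you can specify a custom loss function to trainnet as a function handle. For loss functions that require more inputs than the predictions and targets (for example, loss functions that require access to the neural network or additional inputs), train the model using a custom training loop. To learn more, see Train Network Using Custom Training Loop.

If Deep Learning Toolbox™ does not provide the layers that you need for your task, then you can create a custom layer. To learn more, see Define Custom Deep Learning Layers. For models that cannot be specified as networks of layers, you can define the model as a function. To learn more, see Train Network Using Model Function.

For more information about which training method to use for which task, see Train Deep Learning Model in MATLAB.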

[dydx1,...,dydxk] = dlgradient(y,x1,...,xk) returns the gradients of y with respect to the variables x1 through xk.

Call dlgradient from inside a function passed to dlfeval. See Compute Gradient Using Automatic Differentiation and Use Automatic Differentiation In Deep Learning Toolbox.
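As a minimal sketch of this calling pattern, the script below wraps a function in dlfeval so that dlgradient can trace it. The function name rosenbrock and the evaluation point x0 are illustrative choices, not part of the reference text above.

```matlab
% Evaluate a function and its gradient at one point.
x0 = dlarray([-1, 2]);                       % input must be a dlarray to be traced
[fval, gradval] = dlfeval(@rosenbrock, x0);  % dlfeval enables automatic differentiation

function [y, dydx] = rosenbrock(x)
    % Illustrative objective (hypothetical helper): the Rosenbrock function.
    % y is a real scalar, which is what dlgradient differentiates.
    y = 100*(x(2) - x(1).^2).^2 + (1 - x(1)).^2;
    % dlgradient is called inside the function that dlfeval evaluates.
    dydx = dlgradient(y, x);
end
```

Working the derivative by hand at x0 = [-1, 2] gives y = 104 and a gradient of [396, 200], which is what fval and gradval should contain. Use extractdata to convert them back to ordinary numeric arrays.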

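[dydx1,...,dydxk] = dlgradient(y,x1,...,xk,Name,Value) returns the gradients and specifies additional options using one or more name-value pairs. For example, dydx = dlgradient(y,x,'RetainData',true) causes the gradient to retain intermediate values for reuse in subsequent dlgradient calls. This syntax can save time, but uses more memory. For more information, see Tips.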
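The following sketch shows one way the 'RetainData' option might be used: two dlgradient calls inside the same dlfeval call that share an intermediate result. The function name twoGradients and the two toy objectives are assumptions made for illustration only.

```matlab
x = dlarray([1 2 3]);
[g1, g2] = dlfeval(@twoGradients, x);

function [g1, g2] = twoGradients(x)
    % Hypothetical helper: two scalar objectives built on a shared intermediate h.
    h  = x.^2;
    y1 = sum(h, 'all');       % y1 = sum of x.^2
    y2 = sum(h.^2, 'all');    % y2 = sum of x.^4
    % Keep the trace after the first gradient so the second call can reuse it.
    g1 = dlgradient(y1, x, 'RetainData', true);   % analytically 2*x
    % Last gradient in this dlfeval call, so the trace no longer needs retaining.
    g2 = dlgradient(y2, x);                       % analytically 4*x.^3
end
```

The second call would also work without 'RetainData' on the first one; the option trades extra memory for the chance to avoid redoing work.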

Examples

Input Arguments

Name-Value Arguments

Output Arguments

Limitations

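The dlgradient function does not support calculating higher-order derivatives when you use dlnetwork objects that contain custom layers with a custom backward function.

The dlgradient function does not support calculating higher-order derivatives when you use dlnetwork objects that contain any of the following layers: gruLayer, lstmLayer, bilstmLayer.

The dlgradient function does not support calculating higher-order derivatives that depend on the following functions: gru, lstm, embed, prod, interp1.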

More About

Tips

dlgradient调用必须在函数内部。要获取梯度的数值,您必须使用dlfeval计算函数,并且函数的参量必须是dlarray。请参阅Use Automatic Differentiation In Deep Learning Toolbox。为了能够正确计算梯度,

y参量必须仅使用dlarray支持的函数。请参阅List of Functions with dlarray Support。如果将

'RetainData'名称-值对组参量设置为true,则软件会在dlfeval函数调用期间保留跟踪,而不是在导数计算后立即擦除跟踪。这种保留可以加快同一dlfeval调用中后续dlgradient调用的执行速度,但使用的内存更多。例如,在训练对抗网络时,'RetainData'设置很有用,因为两个网络在训练期间共享数据和函数。请参阅训练生成对抗网络 (GAN)。当您只需要计算一阶导数时,请确保

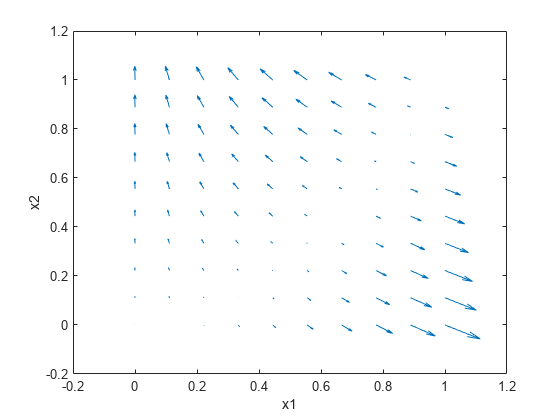

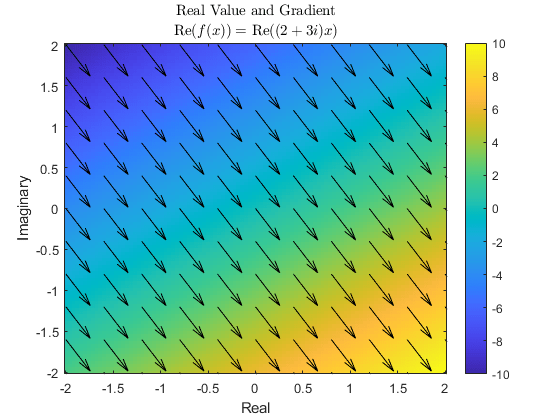

'EnableHigherDerivatives'选项为false,因为这通常速度更快并且需要的内存更少。复数梯度是使用 Wirtinger 导数计算的。梯度沿着要微分的函数的实部增加的方向定义。这是因为即使函数是复数函数,要微分的变量(例如损失)也必须是实数。
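For contrast with the first-order case, here is a hedged sketch of a second derivative computed by setting 'EnableHigherDerivatives' to true on the first dlgradient call. The function name secondDerivative and the cubic test function are illustrative assumptions.

```matlab
x = dlarray(3);
[dydx, d2ydx2] = dlfeval(@secondDerivative, x);

function [dydx, d2ydx2] = secondDerivative(x)
    % Hypothetical helper: differentiate y = x^3 twice.
    y = x.^3;
    % Trace the backward pass too, so the result can itself be differentiated.
    dydx = dlgradient(y, x, 'EnableHigherDerivatives', true);   % analytically 3*x^2
    % Differentiate the first derivative with respect to x.
    d2ydx2 = dlgradient(dydx, x);                               % analytically 6*x
end
```

At x = 3 the analytic values are 27 for the first derivative and 18 for the second. If only dydx were needed, leaving the option off would avoid the cost of tracing the backward pass.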

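To speed up calls to deep learning functions, such as model functions and model loss functions, you can use the dlaccelerate function. The function returns an AcceleratedFunction object that automatically optimizes, caches, and reuses the traces.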
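A minimal sketch of this usage, assuming a toy loss function named modelLoss (a hypothetical helper, not a toolbox function): the accelerated function is created once and then passed to dlfeval in place of the original function handle.

```matlab
% Wrap the function once; the returned AcceleratedFunction caches traces.
accfun = dlaccelerate(@modelLoss);

x = dlarray([1 2 3]);
for iteration = 1:3
    % Repeated calls with inputs of the same size and type can reuse the cached trace.
    [loss, grad] = dlfeval(accfun, x);
end

function [loss, grad] = modelLoss(x)
    % Toy scalar loss for illustration: sum of squares, with gradient 2*x.
    loss = sum(x.^2, 'all');
    grad = dlgradient(loss, x);
end
```

Acceleration tends to pay off when the same function is evaluated many times, as in a training loop; for a single call the cost of building the cache may outweigh the benefit.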

Extended Capabilities

Version History

Introduced in R2019b

See Also

dlarray | dlfeval | dlnetwork | dljacobian | dldivergence | dllaplacian | dlaccelerate