Inertial Sensor Fusion

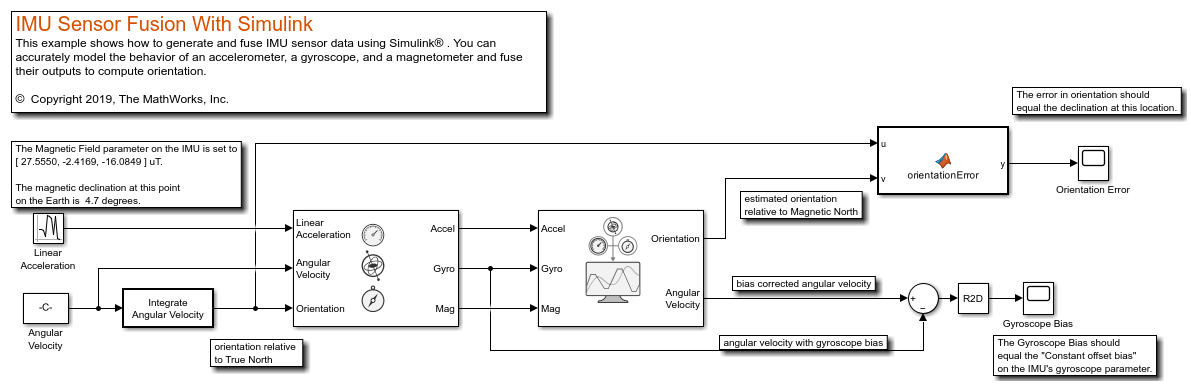

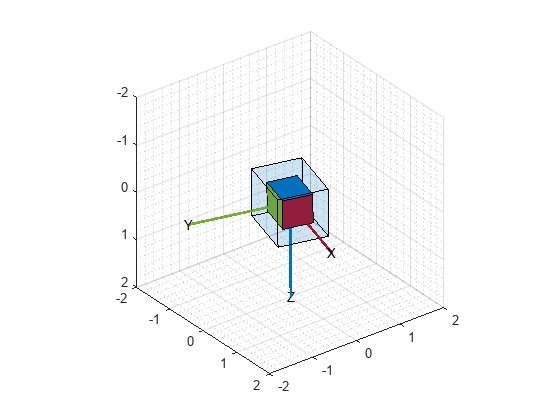

Use inertial sensor fusion algorithms to estimate orientation and position over time. The algorithms are optimized for different sensor configurations, output requirements, and motion constraints. You can directly fuse IMU data from multiple inertial sensors. You can also fuse IMU data with GPS data.

Functions

Blocks

| Block | Description |
| --- | --- |
| AHRS | Orientation from accelerometer, gyroscope, and magnetometer readings |
| IMU Filter | Estimate orientation using IMU Filter (Since R2023b) |
| ecompass | Compute orientation from accelerometer and magnetometer readings (Since R2024a) |
| Complementary Filter | Estimate orientation using complementary filter (Since R2023a) |
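To give a feel for what a complementary filter does, the following is a minimal single-axis sketch in Python. It is not the implementation behind the Complementary Filter block. The function name `complementary_pitch`, the blend weight `alpha`, and the sign convention (a stationary sensor pitched by θ reads `accel_x = -g·sin θ` and `accel_z = g·cos θ`) are all assumptions made for illustration.

```python
import math


def complementary_pitch(samples, dt, alpha=0.98, pitch0=0.0):
    """Fuse gyroscope and accelerometer samples into pitch estimates (radians).

    samples: iterable of (gyro_y, accel_x, accel_z) tuples, where gyro_y is the
             pitch rate in rad/s and accel_x, accel_z are accelerometer
             readings in any consistent unit.
    dt:      sample period in seconds.
    alpha:   weight given to the integrated gyroscope estimate (hypothetical
             default; values close to 1 trust the gyroscope more).
    """
    pitch = pitch0
    history = []
    for gyro_y, accel_x, accel_z in samples:
        # Short-term estimate: integrate the gyroscope rate over one step.
        pitch_gyro = pitch + gyro_y * dt
        # Long-term reference: tilt implied by the measured gravity direction
        # (assumed convention: accel_x = -g*sin(pitch), accel_z = g*cos(pitch)).
        pitch_accel = math.atan2(-accel_x, accel_z)
        # Blend: high-pass the gyroscope path, low-pass the accelerometer path.
        pitch = alpha * pitch_gyro + (1.0 - alpha) * pitch_accel
        history.append(pitch)
    return history


if __name__ == "__main__":
    g = 9.81
    true_pitch = 0.1  # radians
    # Stationary sensor: zero rotation rate, gravity resolved along x and z.
    stationary = [(0.0, -g * math.sin(true_pitch), g * math.cos(true_pitch))] * 500
    estimates = complementary_pitch(stationary, dt=0.01)
    print(f"final pitch estimate: {estimates[-1]:.4f} rad")
```

The gyroscope path tracks fast motion but drifts, while the accelerometer path is noisy under acceleration but does not drift. The single weight `alpha` trades one off against the other.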

Topics

- Choose Inertial Sensor Fusion Filters

Applicability and limitations of various inertial sensor fusion filters.

- Fuse Inertial Sensor Data Using insEKF-Based Flexible Fusion Framework

The

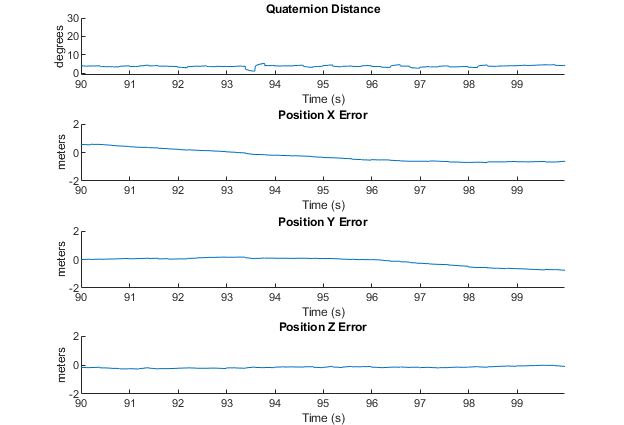

insEKFfilter object provides a flexible framework that you can use to fuse inertial sensor data. - Determine Orientation Using Inertial Sensors

Fuse inertial measurement unit (IMU) readings to determine orientation.

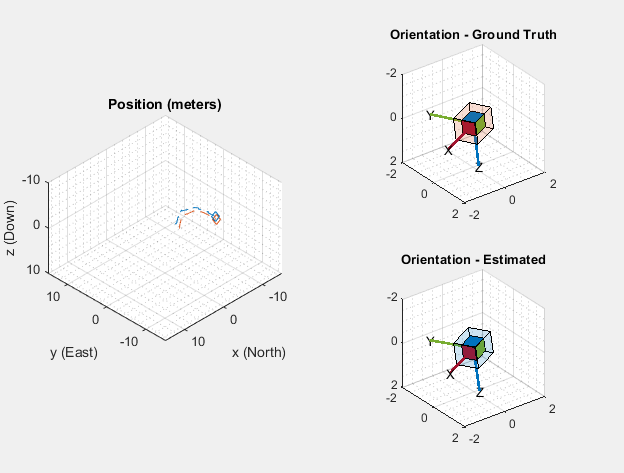

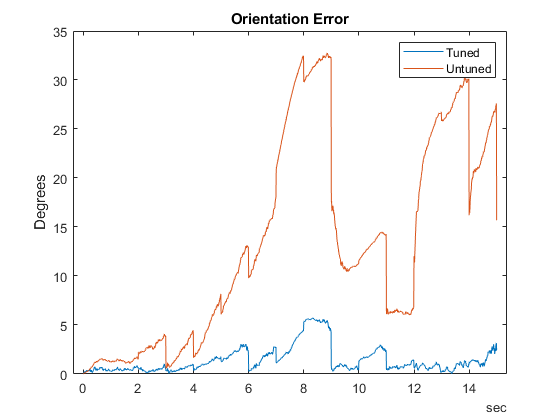

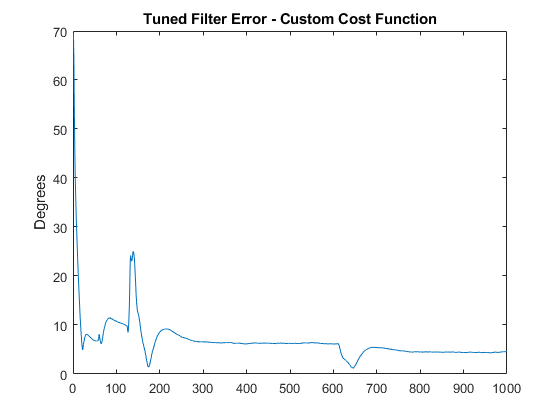

- Estimate Orientation Through Inertial Sensor Fusion

This example shows how to use 6-axis and 9-axis fusion algorithms to compute orientation.

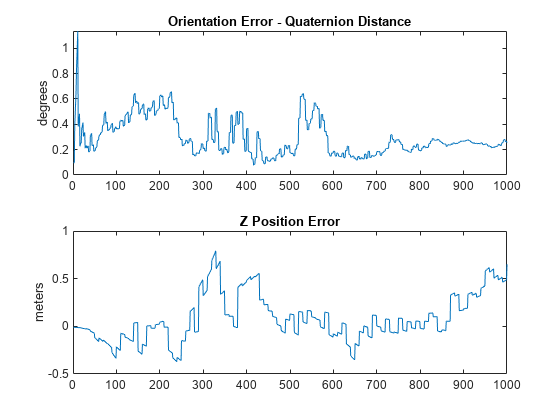

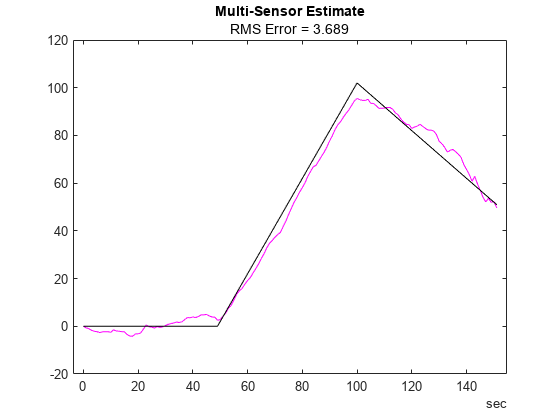

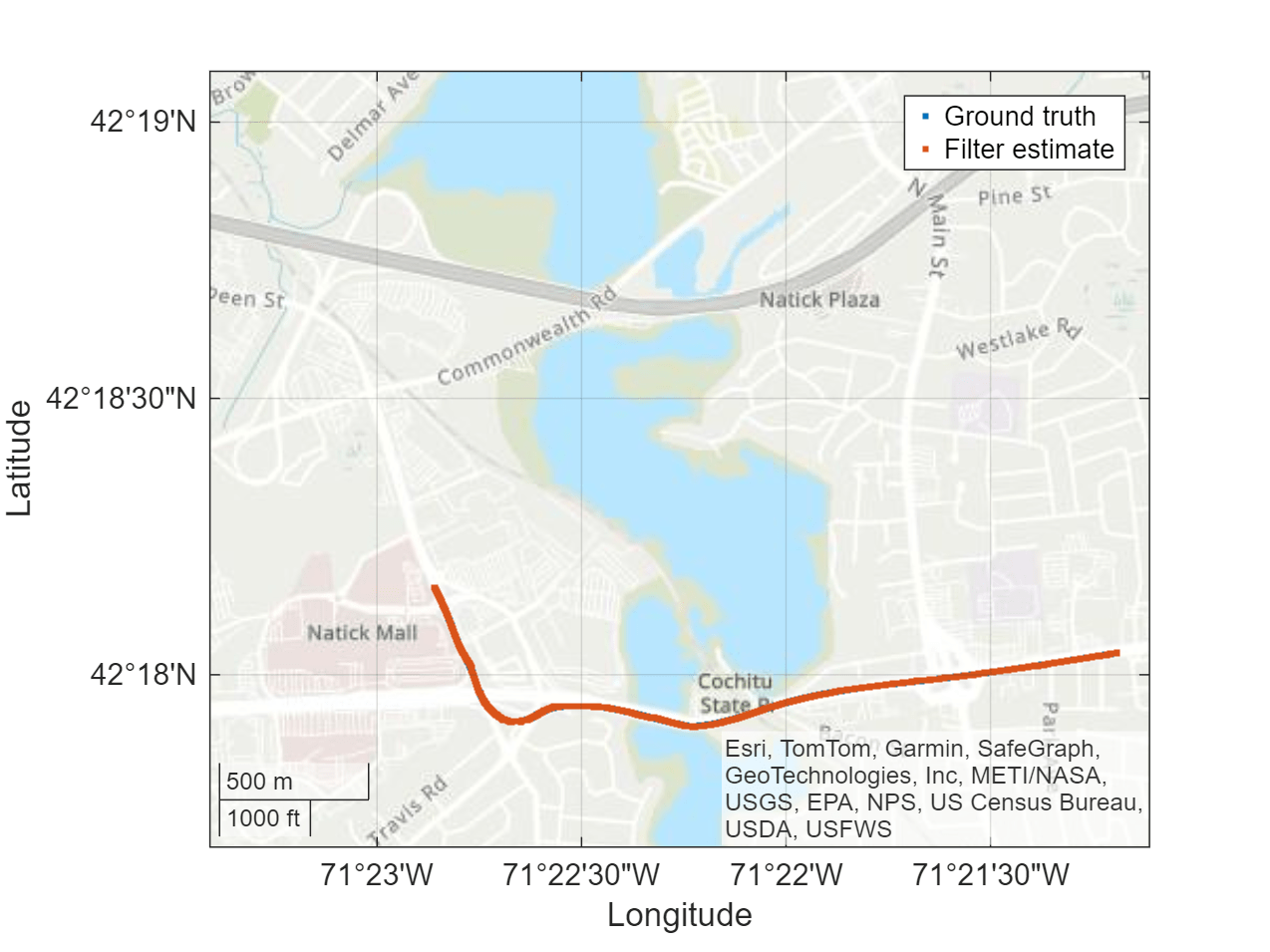

- Determine Pose Using Inertial Sensors and GPS

Use Kalman filters to fuse IMU and GPS readings to determine pose.

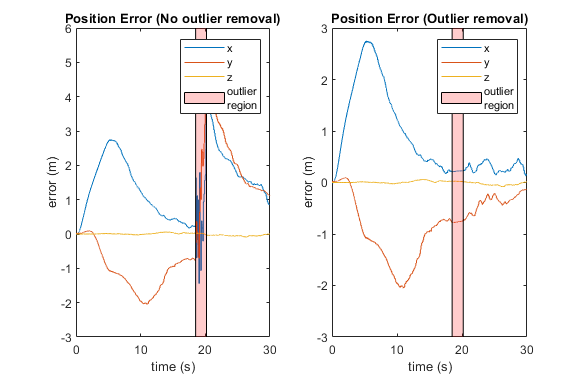

- Logged Sensor Data Alignment for Orientation Estimation

This example shows how to align and preprocess logged sensor data.
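As a rough illustration of the Kalman-filter-based IMU and GPS fusion mentioned in the pose topic above, here is a one-dimensional sketch in Python. It is not the algorithm used by any of the listed examples or filter objects. The function name `kalman_imu_gps`, the noise variances, and the initial covariance are hypothetical values chosen for illustration. Accelerometer samples drive the prediction step, and GPS position fixes, when available, drive the correction step.

```python
def kalman_imu_gps(accels, gps_positions, dt, accel_var=0.5, gps_var=4.0):
    """1-D position/velocity Kalman filter (illustrative sketch).

    accels:        acceleration per step in m/s^2, used for prediction.
    gps_positions: GPS position per step in m, or None when there is no fix.
    dt:            step length in seconds.
    accel_var:     assumed accelerometer noise variance, (m/s^2)^2.
    gps_var:       assumed GPS position noise variance, m^2.
    Returns a list of (position, velocity) estimates, one per step.
    """
    x, v = 0.0, 0.0                 # state: position and velocity
    p00, p01, p11 = 1.0, 0.0, 1.0   # symmetric covariance [[p00, p01], [p01, p11]]
    estimates = []
    for a, z in zip(accels, gps_positions):
        # Predict: constant-acceleration kinematics over one step.
        x = x + v * dt + 0.5 * a * dt * dt
        v = v + a * dt
        # P = F P F^T + Q with F = [[1, dt], [0, 1]] and
        # Q = accel_var * G G^T, G = [0.5*dt^2, dt].
        n00 = p00 + 2.0 * dt * p01 + dt * dt * p11 + 0.25 * dt**4 * accel_var
        n01 = p01 + dt * p11 + 0.5 * dt**3 * accel_var
        n11 = p11 + dt * dt * accel_var
        p00, p01, p11 = n00, n01, n11
        if z is not None:
            # Correct: GPS measures position only, so H = [1, 0].
            s = p00 + gps_var            # innovation variance
            k0, k1 = p00 / s, p01 / s    # Kalman gain
            y = z - x                    # innovation
            x += k0 * y
            v += k1 * y
            # P = (I - K H) P
            u00 = (1.0 - k0) * p00
            u01 = (1.0 - k0) * p01
            u11 = p11 - k1 * p01
            p00, p01, p11 = u00, u01, u11
        estimates.append((x, v))
    return estimates


if __name__ == "__main__":
    dt, steps = 0.1, 100
    accels = [1.0] * steps
    # Noise-free GPS fixes on every other step, None elsewhere.
    gps = [0.5 * ((k + 1) * dt) ** 2 if k % 2 == 0 else None for k in range(steps)]
    pos, vel = kalman_imu_gps(accels, gps, dt)[-1]
    print(f"position {pos:.3f} m, velocity {vel:.3f} m/s")
```

Skipping the correction when a fix is missing mirrors the common situation where the IMU runs at a higher rate than the GPS receiver.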