Performant and Deployable Monocular Visual SLAM

Visual simultaneous localization and mapping (vSLAM) refers to the process of calculating the position and orientation of a camera with respect to its surroundings while simultaneously mapping the environment. Applications for vSLAM include augmented reality, robotics, and autonomous driving. In this example, the algorithm uses only visual inputs from the camera.

MATLAB® vSLAM examples each show one of these vSLAM implementations:

Modular and Modifiable ─ Builds a visual SLAM pipeline step-by-step by using functions and objects. For more details and a list of these functions and objects, see the Implement Visual SLAM in MATLAB (Computer Vision Toolbox) topic. The approach described in the topic contains modular code, and is designed to teach the details of a vSLAM implementation, that is loosely based on the popular and reliable ORB-SLAM [1] algorithm. The code is easily navigable, enabling you to explore the algorithm and test how its parameters can impact the performance of the system. This modular implementation is most suitable for learning, experimentation, and modification to test your own ideas.

Performant and Deployable ─ Uses the monovslam object which contains a complete vSLAM workflow. The object offers a practical solution with greatly improved execution speed and code generation. To generate multi-threaded C/C++ code from

monovslam(Computer Vision Toolbox), you can use MATLAB Coder™. The generated code is portable, and you can deploy it on non-PC hardware as well as a ROS node, as demonstrated in the Build and Deploy Visual SLAM Algorithm with ROS in MATLAB (Computer Vision Toolbox) example.

This example shows the Performant and Deployable implementation for processing image data from a monocular camera. For more details about the modular and modifiable implementation, see the Monocular Visual Simultaneous Localization and Mapping (Computer Vision Toolbox) example.

Download Data

This example uses data from the TUM RGB-D benchmark [2]. The size of the data set is 1.38 GB. You can download the data set to a temporary folder using this code.

baseDownloadURL = "https://vision.in.tum.de/rgbd/dataset/freiburg3/rgbd_dataset_freiburg3_long_office_household.tgz"; dataFolder = fullfile(tempdir,"tum_rgbd_dataset",filesep); options = weboptions(Timeout=Inf); tgzFileName = dataFolder + "fr3_office.tgz"; folderExists = exist(dataFolder,"dir"); % Create a folder in a temporary directory in which to save the downloaded file if ~folderExists mkdir(dataFolder) disp("Downloading fr3_office.tgz (1.38 GB). This download can take a few minutes.") websave(tgzFileName,baseDownloadURL,options); % Extract contents of the downloaded file disp("Extracting fr3_office.tgz (1.38 GB) ...") untar(tgzFileName,dataFolder); end

Create an imageDatastore object to store the RGB images.

imageFolder = dataFolder + "rgbd_dataset_freiburg3_long_office_household/rgb/";

imds = imageDatastore(imageFolder);Implement Visual SLAM

Create a cameraIntrinsics object to store the camera intrinsic parameters. The intrinsic parameters for the data set are available on the data set webpage [3]. Note that the images in the data set are already undistorted, hence there is no need to specify the distortion coefficients. If you are using your own camera, you can estimate the intrinsic parameters using the Camera Calibrator (Computer Vision Toolbox) app.

focalLength = [535.4,539.2]; % in units of pixels principalPoint = [320.1,247.6]; % in units of pixels imageSize = [480,640]; % in units of pixels intrinsics = cameraIntrinsics(focalLength,principalPoint,imageSize);

Create a monovslam object with the camera intrinsics object. You may also need to modify these properties based on the video data you intend to use.

MaxNumPoints─ This property controls the number of ORB features extracted from every frame. For an image resolution of 480-by-640 pixels, setMaxNumPoints1000. For higher resolutions, such as 720-by-1280, set it to 2000. Larger values slow down the execution speed.SkipMaxFrames─ When tracking from frame to frame is reliable, this property sets the maximum number of frames that the object can skip before the next frame to be a key frame. With a frame rate of 30 fps, setSkipMaxFramesto 15. For a slower frame rate, choose a smaller value. IncreasingSkipMaxFramesenhances the processing speed, but may lead to tracking loss during fast camera motion.TrackFeatureRange─ This property specifies the minimum and maximum numbers of tracked features points a frame must contain for the object to identify it as a key frame. TheTrackFeatureRangeand theSkipMaxFramesproperties enable you to control the frequency at which frames in the tracking process adds key frames.

numPoints = 1000; numSkipFrames = 15; trackedFeatureRange = [30 120]; vslam = monovslam(intrinsics,MaxNumPoints=numPoints,SkipMaxFrames=numSkipFrames,TrackFeatureRange=trackedFeatureRange);

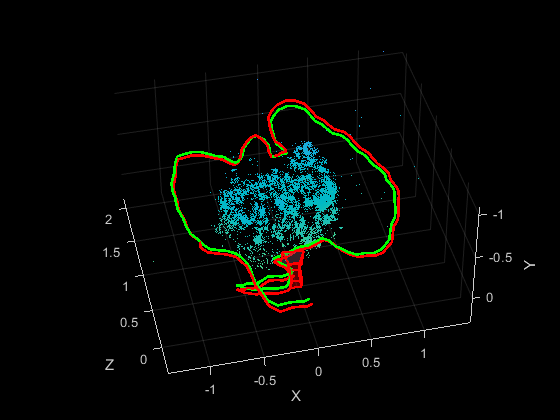

Process each image by using the addFrame (Computer Vision Toolbox) function. Use the plot (Computer Vision Toolbox) method to visualize the estimated camera trajectory and the 3-D map points.

ax = gca; for i=1:numel(imds.Files) I = readimage(imds,i); addFrame(vslam, I); if hasNewKeyFrame(vslam) % Display 3-D map points and camera trajectory plot(vslam, Parent=ax); end end

Note that the monovslam object runs several algorithm parts on separate threads, which can introduce a latency in processing of an image frame added by using the addFrame (Computer Vision Toolbox) function.

% Plot intermediate results and wait until all images are processed while ~isDone(vslam) if hasNewKeyFrame(vslam) plot(vslam, Parent=ax); end end

After all the images are processed, you can collect the final 3-D map points and camera poses for further analysis.

xyzPoints = mapPoints(vslam); [camPoses,viewIds] = poses(vslam);

Compare with the Ground Truth

You can compare the optimized camera trajectory with the ground truth to evaluate the accuracy of ORB-SLAM. The comparison can also help tune the parameters used the monovslam (Computer Vision Toolbox) object. The downloaded data contains a groundtruth.txt file that stores the ground truth of the camera pose of each frame. You can import the data using the helperImportGroundTruth function. Once you import the ground truth, you can visualize the actual camera trajectory and calculate the root-mean-square-error (RMSE) of trajectory estimates.

% Load ground truth from the downloaded data gTruthFileName = dataFolder+"rgbd_dataset_freiburg3_long_office_household/groundtruth.txt"; gTruth = helperImportGroundTruth(gTruthFileName,imds); % Compute the scale between the estimated camera trajectory and % the actual camera trajectory metrics = compareTrajectories(camPoses, gTruth(viewIds), AlignmentType="similarity"); disp(['Absolute RMSE for key frame location (m): ', num2str(metrics.AbsoluteRMSE(2))]);

Absolute RMSE for key frame location (m): 0.051681

% Plot the absolute translation error at each key frame figure ax = plot(metrics, "absolute-translation"); view(ax, [2.70 -49.20]);

Code Generation

You can generate efficient multi-threaded C++ code from the monovslam (Computer Vision Toolbox) object, which is suitable for deployment on a host computer or an embedded platform that has all the third-party dependencies, including OpenCV [4] and g2o [5]. For illustrative purposes, in this section, you generate MEX code. For more information about deploying the generated code as a ROS node, see the Build and Deploy Visual SLAM Algorithm with ROS in MATLAB (Computer Vision Toolbox) example.

To meet the requirements of MATLAB Coder, you must restructure the code to isolate the algorithm from the visualization code. The algorithmic content was encapsulated in the helperMonoVisualSLAM function, which takes an image as the input and outputs 3-D world points and camera poses as matrices. The function internally creates a monovslam (Computer Vision Toolbox) object and saves it into a persistent variable called vslam. Note that helperMonoVisualSLAM function does not display the reconstructed 3-D point cloud or the camera poses. The plot (Computer Vision Toolbox) method of the monovslam (Computer Vision Toolbox) class was not designed to generate code because visualization is typically not deployed on embedded systems.

type("helperMonoVisualSLAM.m"); % display function contents

function [xyzPoint, camPoses] = helperMonoVisualSLAM(image)

% Copyright 2023-2025 The MathWorks Inc.

%#codegen

persistent vslam xyzPointsInternal camPosesInternal

if isempty(vslam)

% Create a monovslam class to process the image data

focalLength = [535.4, 539.2]; % in units of pixels

principalPoint = [320.1, 247.6]; % in units of pixels

imageSize = [480, 640]; % in units of pixels

intrinsics = cameraIntrinsics(focalLength, principalPoint, imageSize);

numPoints = 1000;

numSkipFrames = 15;

trackedFeatureRange = [30 120];

vslam = monovslam(intrinsics,MaxNumPoints=numPoints,SkipMaxFrames=numSkipFrames,TrackFeatureRange=trackedFeatureRange);

end

% Process each image frame

addFrame(vslam, image);

% Get 3-D map points and camera poses

if isempty(xyzPointsInternal) || hasNewKeyFrame(vslam)

xyzPointsInternal = mapPoints(vslam);

camPosesInternal = poses(vslam);

end

xyzPoint = xyzPointsInternal;

% Convert camera poses to homogeneous transformation matrices

camPoses = cat(3, camPosesInternal.A);

You can compile the helperMonoVisualSLAM function into a MEX file by using the codegen command. Note that the generated MEX file has the same name as the original MATLAB file with _mex appended, unless you use the -o option to specify the name of the executable.

compileTimeInputs = {coder.typeof(I)};

codegen helperMonoVisualSLAM -args compileTimeInputsCode generation successful.

Process the image data frame-by-frame using the MEX-file.

% Process each image frame for i=1:numel(imds.Files) [xyzPoints, camPoses] = helperMonoVisualSLAM_mex(readimage(imds,i)); end

Implementations for Other Sensors

To address the challenge of unknown scale in monocular visual SLAM, you can utilize a stereo camera or an RGB-D sensor, both of which can calculate the actual dimensions of the scene. You can find more details about the implementation of stereo and RGB-D visual SLAM on the stereovslam (Computer Vision Toolbox) and rgbdvslam (Computer Vision Toolbox) object pages, respectively. Alternatively, you can integrate the camera with an IMU sensor to recover the actual scale using the visual-inertial SLAM algorithm. These examples demonstrate some of these implementations:

Reference

[1] Mur-Artal, Raul, J. M. M. Montiel, and Juan D. Tardos. “ORB-SLAM: A Versatile and Accurate Monocular SLAM System.” IEEE Transactions on Robotics 31, no. 5 (October 2015): 1147–63. https://doi.org/10.1109/TRO.2015.2463671.

[2] Sturm, Jürgen, Nikolas Engelhard, Felix Endres, Wolfram Burgard, and Daniel Cremers. "A benchmark for the evaluation of RGB-D SLAM systems". In Proceedings of IEEE/RSJ International Conference on Intelligent Robots and Systems, pp. 573-580, 2012.

[3] https://cvg.cit.tum.de/data/datasets/rgbd-dataset/file_formats

[4] “OpenCV: OpenCV Modules. ”https://docs.opencv.org/4.7.0/.

[5] Kümmerle, Rainer, Giorgio Grisetti, Hauke Strasdat, Kurt Konolige, and Wolfram Burgard. “G2o: A General Framework for Graph Optimization.” In 2011 IEEE International Conference on Robotics and Automation, 3607–13, 2011. https://doi.org/10.1109/ICRA.2011.5979949.

See Also

monovslam (Computer Vision Toolbox)

Topics

- Develop Visual SLAM Algorithm Using Unreal Engine Simulation (Computer Vision Toolbox)

- Monocular Visual-Inertial Odometry (VIO) Using Factor Graph (Computer Vision Toolbox)

- Build and Deploy Visual SLAM Algorithm with ROS in MATLAB