writeViews

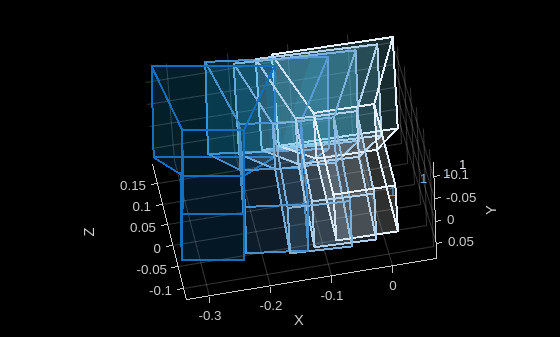

Write novel views from Nerfacto Neural Radiance Field (NeRF) model to image files

Since R2026a

Syntax

Description

Add-On Required: This feature requires the Computer Vision Toolbox Interface for Nerfstudio Library add-on.

imds = writeViews(nerf,cameraPoses) writes the novel views rendered by the Nerfacto NeRF model nerf at the camera poses cameraPoses to a folder named render in the current working directory. The function returns an image datastore that contains the paths to the image files.

Note

This feature requires the Computer Vision Toolbox Interface for Nerfstudio Library add-on, a Deep Learning Toolbox™ license, a Parallel Computing Toolbox™ license, and a CUDA®-enabled NVIDIA® GPU with at least 16 GB of available GPU memory.

imds = writeViews(nerf,cameraPoses,outputFolder) writes the novel view images to the folder specified by outputFolder.

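imds = writeViews(___,Name=Value) specifies options using one or more name-value arguments in addition to any combination of input arguments from previous syntaxes. For example, ImageFormat="jpg" specifies to write the generated novel view images to .jpg files.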

Examples
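A minimal sketch of the outputFolder and ImageFormat syntaxes described above. It assumes that nerf is an already trained nerfacto object in the workspace (training is not shown) and that cameraPoses can be passed as an array of rigidtform3d objects. The two poses and the folder name novelViews are hypothetical values chosen only for illustration.

```matlab
% Assumption: nerf is a trained nerfacto object already in the workspace.

% Hypothetical camera poses: identity rotation, translated 0 and 0.5 units
% along the x-axis. Assumes writeViews accepts a rigidtform3d array.
cameraPoses = [rigidtform3d(eye(3),[0 0 0]); ...
               rigidtform3d(eye(3),[0.5 0 0])];

% Write the rendered novel views as JPEG files to the folder "novelViews"
% (hypothetical folder name) and get an image datastore of the file paths.
imds = writeViews(nerf,cameraPoses,"novelViews",ImageFormat="jpg");

% List the written image files and display the first rendered view.
disp(imds.Files)
imshow(readimage(imds,1))
```

Because writeViews returns an image datastore rather than image arrays, the rendered views can be read back lazily, one file at a time, which avoids holding every view in memory at once.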

Input Arguments

Name-Value Arguments

Output Arguments


Version History

Introduced in R2026a

See Also

nerfacto | generatePointCloud | generateViews | cameraIntrinsics | monovslam