trainNerfacto

Syntax

Description

Add-On Required: This feature requires the Computer Vision Toolbox Interface for Nerfstudio Library add-on.

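nerf = trainNerfacto(trainingData,cameraPoses,intrinsics,outputFolder) trains a Nerfacto neural radiance field (NeRF) model on the images in trainingData, using the corresponding camera poses and camera intrinsic parameters, and stores the training output in the folder specified by the outputFolder argument.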

Note

This feature requires the Computer Vision Toolbox Interface for Nerfstudio Library add-on, a Deep Learning Toolbox™ license, a Parallel Computing Toolbox™ license, and a CUDA® enabled NVIDIA® GPU with at least 16 GB of available GPU memory.

nerf = trainNerfacto(pretrainedModelFolder)

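nerf = trainNerfacto(___,Name=Value) specifies options using one or more name-value arguments, in addition to any combination of input arguments from previous syntaxes. For example, MaxIterations=1000 specifies to perform a maximum of 1000 training iterations on the NeRF model.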

Examples

Input Arguments

Name-Value Arguments

Output Arguments

Tips

Training a Nerfacto NeRF model using high-resolution training images can cause out-of-memory errors. To resolve this, reduce the size of your training images using the imresize function, then obtain the camera poses and camera intrinsic parameters of the resized images.
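The following is a minimal sketch of the resizing step, not a prescribed workflow. It assumes an ideal pinhole camera, a uniform scale factor, and intrinsics that are already known for the full-resolution images. All numeric values and variable names are hypothetical. Re-estimating the parameters from the resized images, as the tip describes, remains the more general route.

```matlab
% Sketch only: downsample one image and scale pinhole intrinsics to match.
% The image, intrinsic values, and scale factor are hypothetical.
scale = 0.5;                          % uniform downsampling factor
I = zeros(480,640,3,'uint8');         % stand-in for one training image
Ismall = imresize(I,scale);           % 240-by-320-by-3 result

focalLength    = [800 800];           % [fx fy] in pixels, full resolution
principalPoint = [320.5 240.5];       % [cx cy] in pixels, full resolution

% Focal length scales with the image. The principal point is shifted by
% half a pixel before and after scaling, assuming the MATLAB convention
% that the center of the first pixel is at coordinate 1.
newFocalLength    = focalLength*scale;
newPrincipalPoint = (principalPoint - 0.5)*scale + 0.5;
newImageSize      = [size(Ismall,1) size(Ismall,2)];   % [rows cols]

% Pack the scaled parameters into a cameraIntrinsics object.
newIntrinsics = cameraIntrinsics(newFocalLength,newPrincipalPoint,newImageSize);
```

If the images are resized with a non-uniform scale, the horizontal and vertical factors would need to be applied to fx, cx and fy, cy separately.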

Algorithms

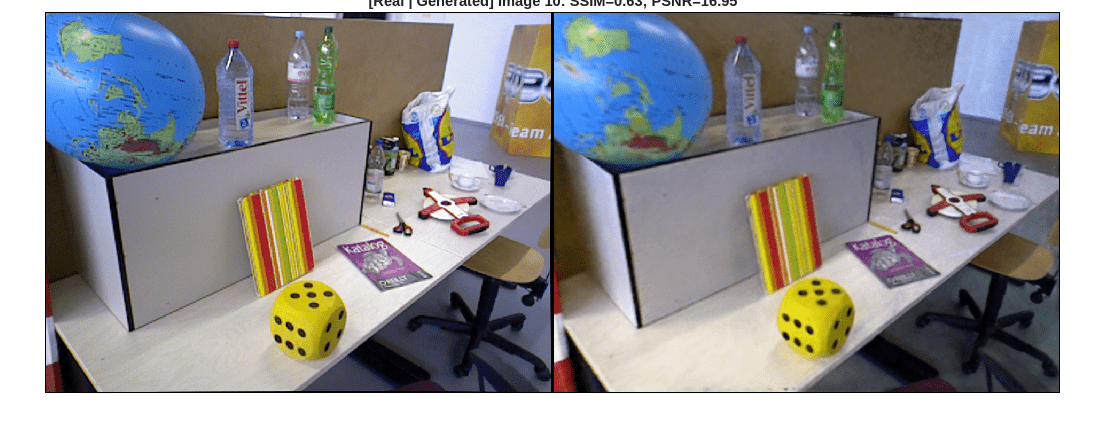

By training a NeRF model on a set of sparse input images and camera poses, you can create an internal representation of a 3D scene. You can use a trained NeRF model to generate a dense, colored point cloud, and render images from novel viewpoints.

A NeRF model represents a scene as a continuous 5D vector-valued function instantiated as a neural network with weights Ω. The function accepts the position and direction of a light ray and returns the color and density of 3D points along the ray.
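As a compact restatement of the paragraph above, the function can be written as a mapping from a 3D position and a viewing direction to a color and a density. The notation follows the formulation in [1]; the symbols x, c, and σ are introduced here for illustration.

```latex
F_{\Omega} : (\mathbf{x}, \mathbf{d}) \mapsto (\mathbf{c}, \sigma),
\qquad \mathbf{x} = (x, y, z), \quad
\mathbf{d} = \mathbf{d}(\theta, \phi), \quad
\mathbf{c} \in \mathbb{R}^{3}, \quad \sigma \ge 0
```

The five input dimensions are the three position coordinates and the two viewing angles. The output c is an RGB color and σ is the volume density at x.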

When generating a novel view, the NeRF model first uses the neural network to calculate the color and density of 3D points along each ray from a virtual camera. Each ray is defined as r(t) = o + td, where o is the origin of the ray, d is the unit vector in the ray direction defined by the camera angles θ and ϕ, and t is the distance along the ray. The neural network returns the color c(t) if the ray r(t) hits a particle at distance t along the ray. The model then uses volumetric rendering to project the output colors and densities into an image.
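The rendering step can be illustrated with the discrete quadrature described in [1], where each sample along a ray contributes its color weighted by its opacity and by the fraction of light that reaches it. This is a sketch for a single ray, not the Nerfstudio implementation. The density and color expressions are toy stand-ins for the neural network, and the near and far bounds and sample count are arbitrary choices.

```matlab
% Sketch of volumetric rendering for one ray, following the quadrature in [1].
% The scene below is hypothetical and replaces the neural network.
o = [0 0 0];                       % ray origin
d = [0 0 1];                       % unit vector in the ray direction
t = linspace(2,6,64)';             % sample distances between near=2 and far=6
pts = o + t.*d;                    % r(t) = o + t*d, one 3D point per row

% Toy scene: a soft red blob centered at z = 4.
sigma = 5*exp(-((pts(:,3) - 4).^2)/0.1);   % density at each sample
rgb   = repmat([1 0 0],numel(t),1);        % color at each sample

delta = [diff(t); 1e10];                   % distance between adjacent samples
alpha = 1 - exp(-sigma.*delta);            % opacity of each ray segment
T     = [1; cumprod(1 - alpha(1:end-1))];  % transmittance reaching each sample
w     = T.*alpha;                          % rendering weight of each sample
pixelColor = sum(w.*rgb,1);                % composited RGB value for this ray
```

The weights w are nonnegative and sum to at most 1, so the rendered color is a convex-style blend of the sample colors, with any remaining weight corresponding to light that passes through the scene unobstructed.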

During training, a NeRF model first samples a batch of rays that consist of multiple spatial locations and viewing directions. The neural network FΩ then predicts the color and density at 3D points along each ray, and the NeRF model uses volumetric rendering to project the output colors and densities onto an image. The model calculates the training loss by comparing the pixels of the rendered image with the corresponding pixels of the captured images in the training data.
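One common way to express this comparison is a squared-error photometric loss over the sampled batch of rays, as used in [1]. The form below is an illustrative assumption: it averages over the batch where [1] writes a sum, and this page does not state which loss terms the Nerfacto model actually uses.

```latex
\mathcal{L}(\Omega) = \frac{1}{|\mathcal{R}|}
\sum_{\mathbf{r} \in \mathcal{R}}
\left\lVert \hat{C}(\mathbf{r}; \Omega) - C(\mathbf{r}) \right\rVert_2^2
```

Here R is the batch of rays, Ĉ(r; Ω) is the color rendered along ray r with the current network weights, and C(r) is the color of the corresponding pixel in the captured image.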

References

[1] Mildenhall, Ben, Pratul P. Srinivasan, Matthew Tancik, Jonathan T. Barron, Ravi Ramamoorthi, and Ren Ng. “NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis.” In Computer Vision – ECCV 2020, edited by Andrea Vedaldi, Horst Bischof, Thomas Brox, and Jan-Michael Frahm. Springer International Publishing, 2020. https://doi.org/10.1007/978-3-030-58452-8_24.

[2] Tancik, Matthew, Ethan Weber, Evonne Ng, et al. “Nerfstudio: A Modular Framework for Neural Radiance Field Development.” Special Interest Group on Computer Graphics and Interactive Techniques Conference Proceedings, ACM, July 23, 2023, 1–12. https://doi.org/10.1145/3588432.3591516.

Version History

Introduced in R2026a