compressNetworkUsingTaylorPruning

Syntax

Description

Add-On Required: This feature requires the Deep Learning Toolbox Model Compression Library add-on.

The compressNetworkUsingTaylorPruning function reduces the number of learnable parameters in a neural network by pruning the least important filters in convolutional layers.

The compressNetworkUsingTaylorPruning function prunes a network iteratively by repeating these steps:

1. Compute the importance score of each prunable filter.

2. Prune the least important filters.

3. Fine-tune the pruned network.

Tip

If you cannot train your network using the trainnet function, then create a custom pruning loop by using the taylorPrunableNetwork function instead.

netPruned = compressNetworkUsingTaylorPruning(___,Name=Value) specifies additional options using one or more name-value arguments. For example, compressNetworkUsingTaylorPruning(net,data,lossFcn,options,LearnablesReductionGoal=0.3) tries to remove 30% of learnable parameters.

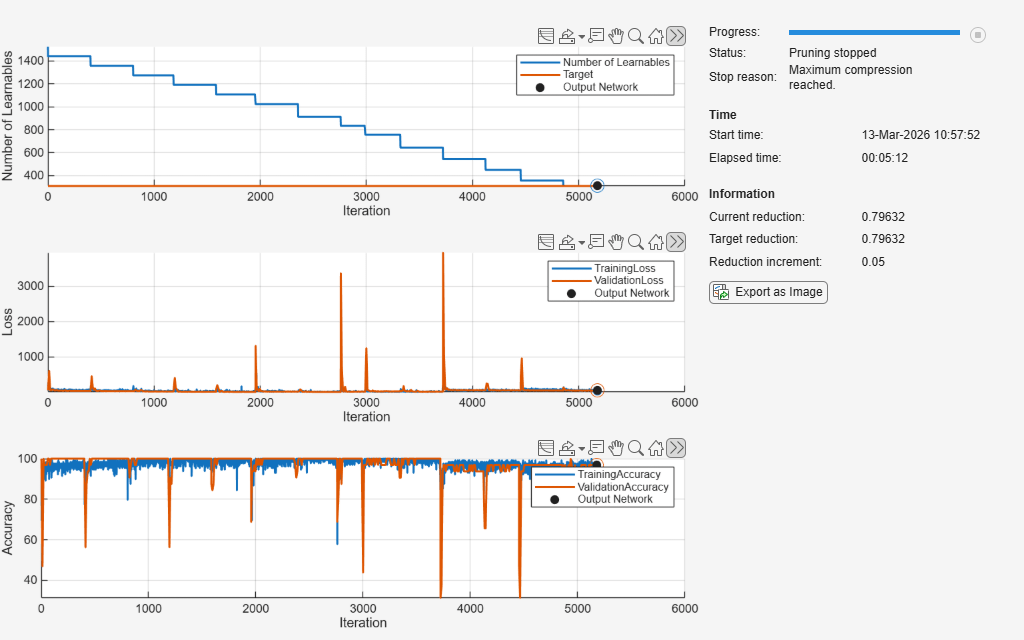

[netPruned,info] = compressNetworkUsingTaylorPruning(___) also returns information about the pruning process, such as the number of learnables in each pruning iteration and the training loss in each fine-tuning iteration. You can use this syntax with any of the input argument combinations in the previous syntaxes.

Examples

Input Arguments

Name-Value Arguments

Output Arguments

More About

Tips

To speed up pruning, increase LearnablesReductionIncrement, reduce NumImportanceScoreIterations, or reduce the MaxEpochs property of the fine-tuning options (the options argument).

You can also stop fine-tuning early based on custom criteria by specifying the OutputFcn property of the fine-tuning options (the options argument). To learn how to use an output function for early stopping, see Custom Stopping Criteria for Deep Learning Training.

The compressNetworkUsingTaylorPruning function retrains the pruned network during each fine-tuning iteration. If you speed up the training process, then the overall pruning process speeds up as well. To learn how to speed up neural network training, see Speed Up Deep Neural Network Training.

To improve the predictive capability of the pruned network, reduce LearnablesReductionIncrement, increase NumImportanceScoreIterations, or increase MaxEpochs.

Algorithms

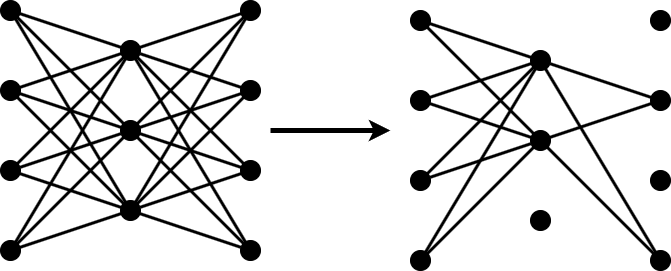

Pruning a neural network means removing the least important parameters to reduce the size of the network while preserving the quality of its predictions.

You can measure the importance of a set of parameters by the change in loss after you remove the parameters from the network. If the loss changes significantly, then the parameters are important. If the loss does not change significantly, then the parameters are not important and can be pruned.

Neural networks typically contain too many parameters for you to calculate the change in loss for all possible combinations of parameters. In that case, follow these steps to apply an iterative workflow instead.

1. Use an approximation to find and remove the least important parameter or a specified number of the least important parameters. For example, if you approximate the parameters to be independent, then you can measure the change in loss after removing each parameter by itself.

2. Fine-tune the new, smaller network by retraining it for several iterations.

3. Repeat steps 1 and 2 until you reach your compression goal.
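The iterative workflow above can be sketched on a toy problem. The following Python example is only an illustration under stated assumptions, not the implementation used by compressNetworkUsingTaylorPruning: the model is a small linear regressor, the importance score is the exact change in loss from zeroing one parameter at a time (the independence approximation mentioned in step 1), and all names (`prune_iteratively`, `fine_tune`, the data values) are hypothetical.

```python
def predict(theta, row):
    """Output of a linear model for one input row."""
    return sum(t * x for t, x in zip(theta, row))


def loss(theta, X, y):
    """Mean squared error over the data set."""
    return sum((predict(theta, r) - t) ** 2 for r, t in zip(X, y)) / len(X)


def gradient(theta, X, y):
    """Analytic gradient of the mean squared error with respect to theta."""
    grad = [0.0] * len(theta)
    for row, target in zip(X, y):
        residual = predict(theta, row) - target
        for i, x in enumerate(row):
            grad[i] += 2.0 * residual * x / len(X)
    return grad


def fine_tune(theta, mask, X, y, steps=200, lr=0.05):
    """Gradient descent that updates only the parameters kept by the mask."""
    theta = list(theta)
    for _ in range(steps):
        grad = gradient(theta, X, y)
        theta = [t - lr * g if keep else 0.0
                 for t, g, keep in zip(theta, grad, mask)]
    return theta


def prune_iteratively(theta, X, y, num_to_remove, per_iteration=1):
    """Alternate between pruning the least important parameters and fine-tuning."""
    theta = list(theta)
    mask = [True] * len(theta)
    num_to_remove = min(num_to_remove, len(theta))
    removed = 0
    while removed < num_to_remove:
        # Step 1: score each remaining parameter by the change in loss
        # when that parameter alone is set to zero, then prune the lowest.
        base = loss(theta, X, y)
        scores = {}
        for i, keep in enumerate(mask):
            if keep:
                trial = list(theta)
                trial[i] = 0.0
                scores[i] = abs(loss(trial, X, y) - base)
        k = min(per_iteration, num_to_remove - removed)
        for i in sorted(scores, key=scores.get)[:k]:
            mask[i] = False
            theta[i] = 0.0
        removed += k
        # Step 2: fine-tune the smaller model.
        theta = fine_tune(theta, mask, X, y)
    # Step 3 is the loop itself: repeat until the goal is reached.
    return theta, mask


# Toy data (assumed for illustration): only features 0 and 2 affect the target.
X = [[1.0, 0.0, 0.0, 0.0],
     [0.0, 1.0, 0.0, 0.0],
     [0.0, 0.0, 1.0, 0.0],
     [0.0, 0.0, 0.0, 1.0]]
y = [2.0, 0.0, -1.0, 0.0]
theta_start = [1.9, 0.05, -1.1, -0.02]

pruned_theta, kept = prune_iteratively(theta_start, X, y, num_to_remove=2)
print(kept, pruned_theta)
```

In this sketch, `per_iteration` plays a role loosely comparable to a reduction increment: removing more parameters per pass finishes sooner but gives the model fewer chances to recover through fine-tuning.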

To perform the approximation in step 1, calculate the Taylor expansion of the loss as a function of the individual network parameters. This method is called Taylor pruning [1].

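For some types of layers, including convolutional layers, removing a parameter is equivalent to setting it to zero. In this case, the change in loss resulting from pruning a parameter θ can be expressed as

$$|\Delta L(\theta)| = |L(X, \theta = 0) - L(X, \theta)|.$$

X is the training data of your network.

Calculate the Taylor expansion of the loss as a function of the parameter θ to first order using

$$L(X, \theta = 0) \approx L(X, \theta) - \frac{\partial L}{\partial \theta}\,\theta.$$

Then, you can express the change of loss as a function of the gradient of the loss with respect to the parameter θ using

$$|\Delta L(\theta)| \approx \left|\frac{\partial L}{\partial \theta}\,\theta\right|.$$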
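To see how the first-order estimate compares with the true change in loss, the following Python sketch evaluates both on a toy linear model with a mean squared error loss. It is an illustration under assumptions, not the toolbox implementation: the function names and data values are hypothetical, and the gradient is computed analytically rather than by automatic differentiation.

```python
def loss(theta, X, y):
    """Mean squared error of a linear model with weights theta."""
    total = 0.0
    for row, target in zip(X, y):
        prediction = sum(t * x for t, x in zip(theta, row))
        total += (prediction - target) ** 2
    return total / len(X)


def gradient(theta, X, y):
    """Analytic gradient of the mean squared error with respect to theta."""
    grad = [0.0] * len(theta)
    for row, target in zip(X, y):
        residual = sum(t * x for t, x in zip(theta, row)) - target
        for i, x in enumerate(row):
            grad[i] += 2.0 * residual * x / len(X)
    return grad


def taylor_scores(theta, X, y):
    """First-order estimate of the loss change: |dL/dtheta_i * theta_i|."""
    return [abs(g * t) for g, t in zip(gradient(theta, X, y), theta)]


def exact_scores(theta, X, y):
    """Exact loss change |L(theta_i = 0) - L(theta)| for each parameter."""
    base = loss(theta, X, y)
    scores = []
    for i in range(len(theta)):
        pruned = list(theta)
        pruned[i] = 0.0  # pruning is modeled as setting the parameter to zero
        scores.append(abs(loss(pruned, X, y) - base))
    return scores


# Toy data and weights, assumed purely for illustration.
X = [[1.0, 0.0, 2.0],
     [0.0, 1.0, 1.0],
     [1.0, 1.0, 0.0],
     [2.0, 1.0, 1.0]]
y = [3.0, 1.0, 0.5, 2.5]
theta = [0.5, -0.01, 1.5]

for i, (approx, exact) in enumerate(zip(taylor_scores(theta, X, y),
                                        exact_scores(theta, X, y))):
    print(f"parameter {i}: first-order {approx:.6f}, exact {exact:.6f}")
```

The exact score needs one extra loss evaluation per parameter, whereas the first-order score needs only a single gradient computation for all parameters, which is why the approximation scales to large networks. For this quadratic loss, the two scores differ by at most the second-order term, which shrinks with the square of the parameter value.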

References

[1] Molchanov, Pavlo, Stephen Tyree, Tero Karras, Timo Aila, and Jan Kautz. "Pruning Convolutional Neural Networks for Resource Efficient Inference." arXiv, June 8, 2017. https://arxiv.org/abs/1611.06440.

Version History

Introduced in R2026a