fitcensemble

Fit ensemble of learners for classification

Syntax

Description

Mdl = fitcensemble(Tbl,ResponseVarName)Mdl) that contains the results of boosting 100

classification trees and the predictor and response data in the table

Tbl. ResponseVarName is the name of

the response variable in Tbl. By default,

fitcensemble uses LogitBoost for binary classification

and AdaBoostM2 for multiclass classification.

Mdl = fitcensemble(Tbl,formula)formula to fit the model to the predictor and

response data in the table Tbl. formula is

an explanatory model of the response and a subset of predictor variables in

Tbl used to fit Mdl. For example,

'Y~X1+X2+X3' fits the response variable

Tbl.Y as a function of the predictor variables

Tbl.X1, Tbl.X2, and

Tbl.X3.

Mdl = fitcensemble(___,Name,Value)Name,Value

pair arguments and any of the input arguments in the previous syntaxes. For

example, you can specify the number of learning cycles, the ensemble aggregation

method, or to implement 10-fold cross-validation.

[

also returns Mdl,AggregateOptimizationResults] = fitcensemble(___)AggregateOptimizationResults, which contains

hyperparameter optimization results when you specify the

OptimizeHyperparameters and

HyperparameterOptimizationOptions name-value arguments.

You must also specify the ConstraintType and

ConstraintBounds options of

HyperparameterOptimizationOptions. You can use this

syntax to optimize on compact model size instead of cross-validation loss, and

to perform a set of multiple optimization problems that have the same options

but different constraint bounds.

Examples

Create a predictive classification ensemble using all available predictor variables in the data. Then, train another ensemble using fewer predictors. Compare the in-sample predictive accuracies of the ensembles.

Load the census1994 data set.

load census1994Train an ensemble of classification models using the entire data set and default options.

Mdl1 = fitcensemble(adultdata,'salary')Mdl1 =

ClassificationEnsemble

PredictorNames: {'age' 'workClass' 'fnlwgt' 'education' 'education_num' 'marital_status' 'occupation' 'relationship' 'race' 'sex' 'capital_gain' 'capital_loss' 'hours_per_week' 'native_country'}

ResponseName: 'salary'

CategoricalPredictors: [2 4 6 7 8 9 10 14]

ClassNames: [<=50K >50K]

ScoreTransform: 'none'

NumObservations: 32561

NumTrained: 100

Method: 'LogitBoost'

LearnerNames: {'Tree'}

ReasonForTermination: 'Terminated normally after completing the requested number of training cycles.'

FitInfo: [100×1 double]

FitInfoDescription: {2×1 cell}

Properties, Methods

Mdl is a ClassificationEnsemble model. Some notable characteristics of Mdl are:

Because two classes are represented in the data, LogitBoost is the ensemble aggregation algorithm.

Because the ensemble aggregation method is a boosting algorithm, classification trees that allow a maximum of 10 splits compose the ensemble.

One hundred trees compose the ensemble.

Use the classification ensemble to predict the labels of a random set of five observations from the data. Compare the predicted labels with their true values.

rng(1) % For reproducibility [pX,pIdx] = datasample(adultdata,5); label = predict(Mdl1,pX); table(label,adultdata.salary(pIdx),'VariableNames',{'Predicted','Truth'})

ans=5×2 table

Predicted Truth

_________ _____

<=50K <=50K

<=50K <=50K

<=50K <=50K

<=50K <=50K

<=50K <=50K

Train a new ensemble using age and education only.

Mdl2 = fitcensemble(adultdata,'salary ~ age + education');Compare the resubstitution losses between Mdl1 and Mdl2.

rsLoss1 = resubLoss(Mdl1)

rsLoss1 = 0.1058

rsLoss2 = resubLoss(Mdl2)

rsLoss2 = 0.2037

The in-sample misclassification rate for the ensemble that uses all predictors is lower.

Train an ensemble of boosted classification trees by using fitcensemble. Reduce training time by specifying the 'NumBins' name-value pair argument to bin numeric predictors. This argument is valid only when fitcensemble uses a tree learner. After training, you can reproduce binned predictor data by using the BinEdges property of the trained model and the discretize function.

Generate a sample data set.

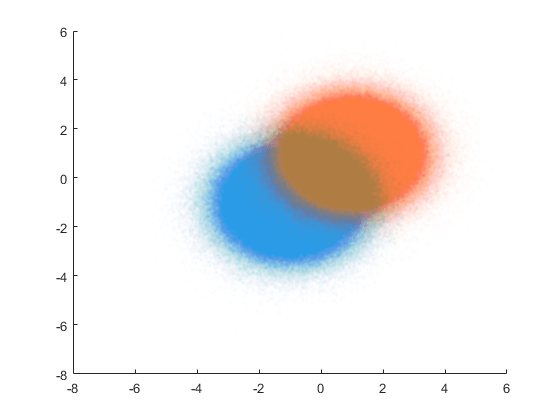

rng('default') % For reproducibility N = 1e6; X = [mvnrnd([-1 -1],eye(2),N); mvnrnd([1 1],eye(2),N)]; y = [zeros(N,1); ones(N,1)];

Visualize the data set.

figure scatter(X(1:N,1),X(1:N,2),'Marker','.','MarkerEdgeAlpha',0.01) hold on scatter(X(N+1:2*N,1),X(N+1:2*N,2),'Marker','.','MarkerEdgeAlpha',0.01)

Train an ensemble of boosted classification trees using adaptive logistic regression (LogitBoost, the default for binary classification). Time the function for comparison purposes.

tic Mdl1 = fitcensemble(X,y); toc

Elapsed time is 478.988422 seconds.

Speed up training by using the 'NumBins' name-value pair argument. If you specify the 'NumBins' value as a positive integer scalar, then the software bins every numeric predictor into a specified number of equiprobable bins, and then grows trees on the bin indices instead of the original data. The software does not bin categorical predictors.

tic

Mdl2 = fitcensemble(X,y,'NumBins',50);

tocElapsed time is 165.598434 seconds.

The process is about three times faster when you use binned data instead of the original data. Note that the elapsed time can vary depending on your operating system.

Compare the classification errors by resubstitution.

rsLoss1 = resubLoss(Mdl1)

rsLoss1 = 0.0788

rsLoss2 = resubLoss(Mdl2)

rsLoss2 = 0.0788

In this example, binning predictor values reduces training time without loss of accuracy. In general, when you have a large data set like the one in this example, using the binning option speeds up training but causes a potential decrease in accuracy. If you want to reduce training time further, specify a smaller number of bins.

Reproduce binned predictor data by using the BinEdges property of the trained model and the discretize function.

X = Mdl2.X; % Predictor data Xbinned = zeros(size(X)); edges = Mdl2.BinEdges; % Find indices of binned predictors. idxNumeric = find(~cellfun(@isempty,edges)); if iscolumn(idxNumeric) idxNumeric = idxNumeric'; end for j = idxNumeric x = X(:,j); % Convert x to array if x is a table. if istable(x) x = table2array(x); end % Group x into bins by using the discretize function. xbinned = discretize(x,[-inf; edges{j}; inf]); Xbinned(:,j) = xbinned; end

Xbinned contains the bin indices, ranging from 1 to the number of bins, for numeric predictors. Xbinned values are 0 for categorical predictors. If X contains NaNs, then the corresponding Xbinned values are NaNs.

Estimate the generalization error of ensemble of boosted classification trees.

Load the ionosphere data set.

load ionosphereCross-validate an ensemble of classification trees using AdaBoostM1 and 10-fold cross-validation. Specify that each tree should be split a maximum of five times using a decision tree template.

rng(5); % For reproducibility t = templateTree('MaxNumSplits',5); Mdl = fitcensemble(X,Y,'Method','AdaBoostM1','Learners',t,'CrossVal','on');

Mdl is a ClassificationPartitionedEnsemble model.

Plot the cumulative, 10-fold cross-validated, misclassification rate. Display the estimated generalization error of the ensemble.

kflc = kfoldLoss(Mdl,'Mode','cumulative'); figure; plot(kflc); ylabel('10-fold Misclassification rate'); xlabel('Learning cycle');

estGenError = kflc(end)

estGenError = 0.0769

kfoldLoss returns the generalization error by default. However, plotting the cumulative loss allows you to monitor how the loss changes as weak learners accumulate in the ensemble.

The ensemble achieves a misclassification rate of around 0.06 after accumulating about 50 weak learners. Then, the misclassification rate increase slightly as more weak learners enter the ensemble.

If you are satisfied with the generalization error of the ensemble, then, to create a predictive model, train the ensemble again using all of the settings except cross-validation. However, it is good practice to tune hyperparameters, such as the maximum number of decision splits per tree and the number of learning cycles.

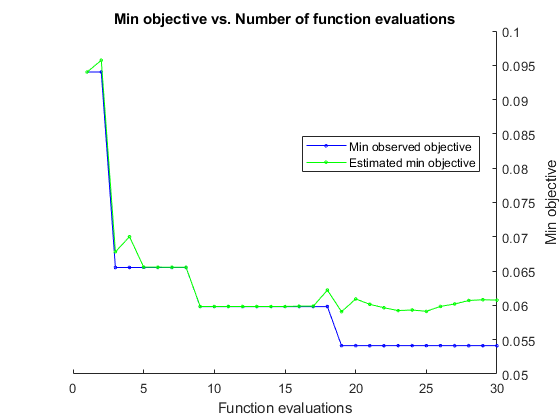

Automatically optimize hyperparameters of an ensemble classifier by using fitcensemble.

Load the ionosphere data set.

load ionosphereFind hyperparameters that minimize the 5-fold cross-validation loss by using automatic hyperparameter optimization. For reproducibility of the optimization, set the random seed and use the "expected-improvement-plus" acquisition function. For reproducibility of the random forest algorithm, specify the Reproducible name-value argument as true for tree learners.

rng(0,"twister") t = templateTree(Reproducible=true); hpoOptions = hyperparameterOptimizationOptions(AcquisitionFunctionName="expected-improvement-plus"); Mdl = fitcensemble(X,Y,OptimizeHyperparameters="auto", ... Learners=t,HyperparameterOptimizationOptions=hpoOptions)

|===================================================================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | Method | NumLearningC-| LearnRate | MinLeafSize |

| | result | | runtime | (observed) | (estim.) | | ycles | | |

|===================================================================================================================================|

| 1 | Best | 0.10256 | 1.8674 | 0.10256 | 0.10256 | RUSBoost | 11 | 0.010199 | 17 |

| 2 | Best | 0.082621 | 6.8117 | 0.082621 | 0.083414 | LogitBoost | 206 | 0.96537 | 33 |

| 3 | Accept | 0.099715 | 4.3505 | 0.082621 | 0.082624 | AdaBoostM1 | 130 | 0.0072814 | 2 |

| 4 | Best | 0.068376 | 1.6976 | 0.068376 | 0.068395 | Bag | 25 | - | 5 |

| 5 | Best | 0.059829 | 1.8935 | 0.059829 | 0.062829 | LogitBoost | 58 | 0.19016 | 5 |

| 6 | Accept | 0.068376 | 1.8107 | 0.059829 | 0.065561 | LogitBoost | 58 | 0.10005 | 5 |

| 7 | Accept | 0.088319 | 14.793 | 0.059829 | 0.065786 | LogitBoost | 494 | 0.014474 | 3 |

| 8 | Accept | 0.065527 | 0.86434 | 0.059829 | 0.065894 | LogitBoost | 26 | 0.75515 | 8 |

| 9 | Accept | 0.15385 | 0.95951 | 0.059829 | 0.061156 | LogitBoost | 32 | 0.0010037 | 59 |

| 10 | Accept | 0.059829 | 4.3686 | 0.059829 | 0.059731 | LogitBoost | 143 | 0.44428 | 1 |

| 11 | Accept | 0.35897 | 1.592 | 0.059829 | 0.059826 | Bag | 54 | - | 175 |

| 12 | Accept | 0.068376 | 0.44847 | 0.059829 | 0.059825 | Bag | 10 | - | 1 |

| 13 | Accept | 0.12251 | 11.198 | 0.059829 | 0.059826 | AdaBoostM1 | 442 | 0.57897 | 102 |

| 14 | Accept | 0.11966 | 3.5104 | 0.059829 | 0.059827 | RUSBoost | 95 | 0.80822 | 1 |

| 15 | Accept | 0.062678 | 4.7874 | 0.059829 | 0.059826 | GentleBoost | 156 | 0.99502 | 1 |

| 16 | Accept | 0.065527 | 3.6223 | 0.059829 | 0.059824 | GentleBoost | 115 | 0.99693 | 13 |

| 17 | Best | 0.05698 | 1.9309 | 0.05698 | 0.056997 | GentleBoost | 60 | 0.0010045 | 3 |

| 18 | Accept | 0.13675 | 2.3559 | 0.05698 | 0.057002 | GentleBoost | 86 | 0.0010263 | 108 |

| 19 | Accept | 0.062678 | 2.7895 | 0.05698 | 0.05703 | GentleBoost | 88 | 0.6344 | 4 |

| 20 | Accept | 0.065527 | 1.1661 | 0.05698 | 0.057228 | GentleBoost | 35 | 0.0010155 | 1 |

|===================================================================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | Method | NumLearningC-| LearnRate | MinLeafSize |

| | result | | runtime | (observed) | (estim.) | | ycles | | |

|===================================================================================================================================|

| 21 | Accept | 0.079772 | 0.44153 | 0.05698 | 0.057214 | LogitBoost | 11 | 0.9796 | 2 |

| 22 | Accept | 0.065527 | 15.083 | 0.05698 | 0.057523 | Bag | 499 | - | 1 |

| 23 | Accept | 0.068376 | 14.155 | 0.05698 | 0.057671 | Bag | 494 | - | 2 |

| 24 | Accept | 0.64103 | 0.89951 | 0.05698 | 0.057468 | RUSBoost | 30 | 0.088421 | 174 |

| 25 | Accept | 0.088319 | 0.40211 | 0.05698 | 0.057456 | RUSBoost | 10 | 0.010292 | 5 |

| 26 | Accept | 0.074074 | 0.41221 | 0.05698 | 0.05753 | AdaBoostM1 | 11 | 0.14192 | 13 |

| 27 | Accept | 0.099715 | 14.781 | 0.05698 | 0.057646 | AdaBoostM1 | 498 | 0.0010096 | 6 |

| 28 | Accept | 0.079772 | 13.204 | 0.05698 | 0.057886 | AdaBoostM1 | 474 | 0.030547 | 31 |

| 29 | Accept | 0.068376 | 14.396 | 0.05698 | 0.061326 | GentleBoost | 493 | 0.36142 | 2 |

| 30 | Accept | 0.065527 | 0.43476 | 0.05698 | 0.061165 | LogitBoost | 11 | 0.71408 | 16 |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 155.2031 seconds

Total objective function evaluation time: 147.0263

Best observed feasible point:

Method NumLearningCycles LearnRate MinLeafSize

___________ _________________ _________ ___________

GentleBoost 60 0.0010045 3

Observed objective function value = 0.05698

Estimated objective function value = 0.061165

Function evaluation time = 1.9309

Best estimated feasible point (according to models):

Method NumLearningCycles LearnRate MinLeafSize

___________ _________________ _________ ___________

GentleBoost 60 0.0010045 3

Estimated objective function value = 0.061165

Estimated function evaluation time = 1.8943

Mdl =

ClassificationEnsemble

ResponseName: 'Y'

CategoricalPredictors: []

ClassNames: {'b' 'g'}

ScoreTransform: 'none'

NumObservations: 351

HyperparameterOptimizationResults: [1×1 classreg.learning.paramoptim.SupervisedLearningBayesianOptimization]

NumTrained: 60

Method: 'GentleBoost'

LearnerNames: {'Tree'}

ReasonForTermination: 'Terminated normally after completing the requested number of training cycles.'

FitInfo: [60×1 double]

FitInfoDescription: {2×1 cell}

Properties, Methods

The trained classifier Mdl corresponds to the best estimated feasible point and uses the same hyperparameter values for Method, NumLearningCycles, LearnRate, and MinLeafSize.

Find the hyperparameter values used to train Mdl by using the bestPoint function. By default, bestPoint uses the same best point criterion used by fitcensemble during the hyperparameter optimization ("min-visited-upper-confidence-interval"). In general, fit functions determine the best hyperparameter values based on the "min-visited-upper-confidence-interval" criterion (instead of the "min-observed" criterion) to avoid overfitting to noise in the data set.

bestEstimatedPoint = bestPoint(Mdl.HyperparameterOptimizationResults)

bestEstimatedPoint=1×4 table

Method NumLearningCycles LearnRate MinLeafSize

___________ _________________ _________ ___________

GentleBoost 60 0.0010045 3

Verify that the results match the properties of Mdl and its weak learners. Note that the template for the regression tree weak learners contains a MinLeaf value instead of a MinLeafSize value, but the two options are equivalent.

classifierProperties = table(string(Mdl.ModelParameters.Method), ... Mdl.ModelParameters.NLearn, ... Mdl.ModelParameters.LearnRate, ... VariableNames=["Method","NumLearningCycles","LearnRate"])

classifierProperties=1×3 table

Method NumLearningCycles LearnRate

_____________ _________________ _________

"GentleBoost" 60 0.0010045

weakLearnerProperties = Mdl.ModelParameters.LearnerTemplates{1}weakLearnerProperties =

Fit template for regression Tree.

SplitCriterion: []

MinParent: []

MinLeaf: 3

MaxSplits: 10

NVarToSample: []

MergeLeaves: 'off'

Prune: 'off'

PruneCriterion: []

QEToler: []

NSurrogate: []

MaxCat: []

AlgCat: []

PredictorSelection: []

UseChisqTest: []

Stream: []

Reproducible: 1

Version: 3

Method: 'Tree'

Type: 'regression'

One way to create an ensemble of boosted classification trees that has satisfactory predictive performance is by tuning the decision tree complexity level using cross-validation. While searching for an optimal complexity level, tune the learning rate to minimize the number of learning cycles.

This example manually finds optimal parameters by using the cross-validation option (the KFold name-value argument) and the kfoldLoss function. Alternatively, you can use the OptimizeHyperparameters name-value argument to optimize hyperparameters automatically. See Optimize Classification Ensemble.

Load the ionosphere data set.

load ionosphereTo search for the optimal tree-complexity level:

Cross-validate a set of ensembles. Exponentially increase the tree-complexity level for subsequent ensembles from decision stump (one split) to at most n - 1 splits. n is the sample size. Also, vary the learning rate for each ensemble between 0.1 to 1.

Estimate the cross-validated misclassification rate of each ensemble.

For tree-complexity level , , compare the cumulative, cross-validated misclassification rate of the ensembles by plotting them against number of learning cycles. Plot separate curves for each learning rate on the same figure.

Choose the curve that achieves the minimal misclassification rate, and note the corresponding learning cycle and learning rate.

Cross-validate a deep classification tree and a stump. These classification trees serve as benchmarks.

rng(1) % For reproducibility MdlDeep = fitctree(X,Y,CrossVal="on",MergeLeaves="off", ... MinParentSize=1); MdlStump = fitctree(X,Y,MaxNumSplits=1,CrossVal="on");

Cross-validate an ensemble of 150 boosted classification trees using 5-fold cross-validation. Using a tree template, vary the maximum number of splits using the values in the sequence . m is such that is no greater than n - 1. For each variant, adjust the learning rate using each value in the set {0.1, 0.25, 0.5, 1};

n = size(X,1); m = floor(log(n - 1)/log(3)); learnRate = [0.1 0.25 0.5 1]; numLR = numel(learnRate); maxNumSplits = 3.^(0:m); numMNS = numel(maxNumSplits); numTrees = 150; Mdl = cell(numMNS,numLR); for k = 1:numLR for j = 1:numMNS t = templateTree(MaxNumSplits=maxNumSplits(j)); Mdl{j,k} = fitcensemble(X,Y,NumLearningCycles=numTrees, ... Learners=t,KFold=5,LearnRate=learnRate(k)); end end

Estimate the cumulative, cross-validated misclassification rate for each ensemble and the classification trees serving as benchmarks.

kflAll = @(x)kfoldLoss(x,Mode="cumulative");

errorCell = cellfun(kflAll,Mdl,Uniform=false);

error = reshape(cell2mat(errorCell),[numTrees numel(maxNumSplits) numel(learnRate)]);

errorDeep = kfoldLoss(MdlDeep);

errorStump = kfoldLoss(MdlStump);Plot how the cross-validated misclassification rate behaves as the number of trees in the ensemble increases. Plot the curves with respect to learning rate on the same plot, and plot separate plots for varying tree-complexity levels. Choose a subset of tree complexity levels to plot.

mnsPlot = [1 round(numel(maxNumSplits)/2) numel(maxNumSplits)]; figure for k = 1:3 subplot(2,2,k) plot(squeeze(error(:,mnsPlot(k),:)),LineWidth=2) axis tight hold on h = gca; plot(h.XLim,[errorDeep errorDeep],"-.b",LineWidth=2) plot(h.XLim,[errorStump errorStump],"-.r",LineWidth=2) plot(h.XLim,min(min(error(:,mnsPlot(k),:))).*[1 1],"--k") h.YLim = [0 0.2]; xlabel("Number of trees") ylabel("Cross-validated misclass. rate") title(sprintf("MaxNumSplits = %0.3g", maxNumSplits(mnsPlot(k)))) hold off end hL = legend([cellstr(num2str(learnRate',"Learning Rate = %0.2f")); ... "Deep Tree";"Stump";"Min. misclass. rate"]); hL.Position(1) = 0.6;

Each curve contains a minimum cross-validated misclassification rate occurring at the optimal number of trees in the ensemble.

Identify the maximum number of splits, number of trees, and learning rate that yields the lowest misclassification rate overall.

[minErr,minErrIdxLin] = min(error(:));

[idxNumTrees,idxMNS,idxLR] = ind2sub(size(error),minErrIdxLin);

fprintf("\nMin. misclass. rate = %0.5f",minErr)Min. misclass. rate = 0.05128

fprintf("\nOptimal Parameter Values:\nNum. Trees = %d",idxNumTrees);Optimal Parameter Values: Num. Trees = 130

fprintf("\nMaxNumSplits = %d\nLearning Rate = %0.2f\n", ... maxNumSplits(idxMNS),learnRate(idxLR))

MaxNumSplits = 9 Learning Rate = 1.00

Create a predictive ensemble based on the optimal hyperparameters and the entire training set.

tFinal = templateTree(MaxNumSplits=maxNumSplits(idxMNS));

MdlFinal = fitcensemble(X,Y,NumLearningCycles=idxNumTrees, ...

Learners=tFinal,LearnRate=learnRate(idxLR))MdlFinal =

ClassificationEnsemble

ResponseName: 'Y'

CategoricalPredictors: []

ClassNames: {'b' 'g'}

ScoreTransform: 'none'

NumObservations: 351

NumTrained: 130

Method: 'LogitBoost'

LearnerNames: {'Tree'}

ReasonForTermination: 'Terminated normally after completing the requested number of training cycles.'

FitInfo: [130×1 double]

FitInfoDescription: {2×1 cell}

MdlFinal is a ClassificationEnsemble. To predict whether a radar return is good given predictor data, you can pass the predictor data and MdlFinal to predict.

Instead of searching optimal values manually by using the cross-validation option (KFold) and the kfoldLoss function, you can use the OptimizeHyperparameters name-value argument. When you specify OptimizeHyperparameters, the software finds optimal parameters automatically using Bayesian optimization. The optimal values obtained by using OptimizeHyperparameters can be different from those obtained using manual search.

mdl = fitcensemble(X,Y,OptimizeHyperparameters=["NumLearningCycles","LearnRate","MaxNumSplits"])

|====================================================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | NumLearningC-| LearnRate | MaxNumSplits |

| | result | | runtime | (observed) | (estim.) | ycles | | |

|====================================================================================================================|

| 1 | Best | 0.094017 | 1.0109 | 0.094017 | 0.094017 | 137 | 0.001364 | 3 |

| 2 | Accept | 0.12251 | 0.29285 | 0.094017 | 0.095735 | 15 | 0.013089 | 144 |

| 3 | Best | 0.065527 | 0.23874 | 0.065527 | 0.067815 | 31 | 0.47201 | 2 |

| 4 | Accept | 0.19943 | 2.3554 | 0.065527 | 0.070015 | 340 | 0.92167 | 7 |

| 5 | Accept | 0.071225 | 0.22509 | 0.065527 | 0.065579 | 30 | 0.16943 | 3 |

| 6 | Accept | 0.17379 | 0.23947 | 0.065527 | 0.065543 | 40 | 0.0010285 | 1 |

| 7 | Accept | 0.11111 | 0.37218 | 0.065527 | 0.065548 | 33 | 0.0011114 | 346 |

| 8 | Accept | 0.065527 | 0.16602 | 0.065527 | 0.065528 | 24 | 0.67901 | 1 |

| 9 | Accept | 0.068376 | 0.26976 | 0.065527 | 0.065522 | 22 | 0.75248 | 7 |

| 10 | Accept | 0.091168 | 0.35755 | 0.065527 | 0.065525 | 28 | 0.93014 | 198 |

| 11 | Accept | 0.079772 | 0.1138 | 0.065527 | 0.065521 | 10 | 0.65965 | 1 |

| 12 | Accept | 0.094017 | 0.14859 | 0.065527 | 0.06552 | 18 | 0.27436 | 1 |

| 13 | Accept | 0.08547 | 0.18217 | 0.065527 | 0.065521 | 10 | 0.89412 | 238 |

| 14 | Best | 0.062678 | 0.20602 | 0.062678 | 0.062676 | 27 | 0.96045 | 2 |

| 15 | Accept | 0.065527 | 0.29758 | 0.062678 | 0.062668 | 52 | 0.75431 | 1 |

| 16 | Accept | 0.074074 | 0.27177 | 0.062678 | 0.062673 | 48 | 0.15762 | 1 |

| 17 | Best | 0.059829 | 0.24326 | 0.059829 | 0.059849 | 38 | 0.90089 | 1 |

| 18 | Accept | 0.11111 | 0.52381 | 0.059829 | 0.059844 | 46 | 0.045175 | 338 |

| 19 | Accept | 0.059829 | 0.15562 | 0.059829 | 0.05985 | 16 | 0.95104 | 2 |

| 20 | Accept | 0.062678 | 0.1583 | 0.059829 | 0.059844 | 17 | 0.98245 | 1 |

|====================================================================================================================|

| Iter | Eval | Objective | Objective | BestSoFar | BestSoFar | NumLearningC-| LearnRate | MaxNumSplits |

| | result | | runtime | (observed) | (estim.) | ycles | | |

|====================================================================================================================|

| 21 | Accept | 0.076923 | 0.15158 | 0.059829 | 0.060213 | 11 | 0.95945 | 9 |

| 22 | Accept | 0.059829 | 0.20195 | 0.059829 | 0.059804 | 31 | 0.98153 | 1 |

| 23 | Accept | 0.068376 | 0.37618 | 0.059829 | 0.059799 | 48 | 0.13159 | 7 |

| 24 | Accept | 0.065527 | 0.16975 | 0.059829 | 0.059923 | 17 | 0.99502 | 2 |

| 25 | Best | 0.05698 | 0.19868 | 0.05698 | 0.058965 | 30 | 0.98437 | 1 |

| 26 | Accept | 0.05698 | 0.21051 | 0.05698 | 0.058479 | 29 | 0.98991 | 1 |

| 27 | Accept | 0.11111 | 2.1008 | 0.05698 | 0.058498 | 215 | 0.0010548 | 272 |

| 28 | Accept | 0.05698 | 0.21482 | 0.05698 | 0.058097 | 33 | 0.9139 | 1 |

| 29 | Accept | 0.062678 | 0.23886 | 0.05698 | 0.058536 | 36 | 0.87964 | 1 |

| 30 | Accept | 0.17379 | 0.11248 | 0.05698 | 0.058541 | 10 | 0.0010334 | 1 |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 19.5967 seconds

Total objective function evaluation time: 11.8045

Best observed feasible point:

NumLearningCycles LearnRate MaxNumSplits

_________________ _________ ____________

30 0.98437 1

Observed objective function value = 0.05698

Estimated objective function value = 0.058497

Function evaluation time = 0.19868

Best estimated feasible point (according to models):

NumLearningCycles LearnRate MaxNumSplits

_________________ _________ ____________

31 0.98153 1

Estimated objective function value = 0.058541

Estimated function evaluation time = 0.20591

mdl =

ClassificationEnsemble

ResponseName: 'Y'

CategoricalPredictors: []

ClassNames: {'b' 'g'}

ScoreTransform: 'none'

NumObservations: 351

HyperparameterOptimizationResults: [1×1 classreg.learning.paramoptim.SupervisedLearningBayesianOptimization]

NumTrained: 31

Method: 'LogitBoost'

LearnerNames: {'Tree'}

ReasonForTermination: 'Terminated normally after completing the requested number of training cycles.'

FitInfo: [31×1 double]

FitInfoDescription: {2×1 cell}

Input Arguments

Sample data used to train the model, specified as a table. Each

row of Tbl corresponds to one observation, and

each column corresponds to one predictor variable. Tbl can

contain one additional column for the response variable. Multicolumn

variables and cell arrays other than cell arrays of character vectors

are not allowed.

If

Tblcontains the response variable and you want to use all remaining variables as predictors, then specify the response variable usingResponseVarName.If

Tblcontains the response variable, and you want to use a subset of the remaining variables only as predictors, then specify a formula usingformula.If

Tbldoes not contain the response variable, then specify the response data usingY. The length of response variable and the number of rows ofTblmust be equal.

Note

To save memory and execution time, supply X and Y instead

of Tbl.

Data Types: table

Response variable name, specified as the name of the response variable in

Tbl.

You must specify ResponseVarName as a character

vector or string scalar. For example, if Tbl.Y is the

response variable, then specify ResponseVarName as

'Y'. Otherwise, fitcensemble

treats all columns of Tbl as predictor

variables.

The response variable must be a categorical, character, or string array, logical or numeric vector, or cell array of character vectors. If the response variable is a character array, then each element must correspond to one row of the array.

For classification, you can specify the order of the classes using the

ClassNames name-value pair argument. Otherwise,

fitcensemble determines the class order, and stores

it in the Mdl.ClassNames.

Data Types: char | string

Explanatory model of the response variable and a subset of the predictor variables,

specified as a character vector or string scalar in the form

"Y~x1+x2+x3". In this form, Y represents the

response variable, and x1, x2, and

x3 represent the predictor variables.

To specify a subset of variables in Tbl as predictors for

training the model, use a formula. If you specify a formula, then the software does not

use any variables in Tbl that do not appear in

formula.

The variable names in the formula must be both variable names in Tbl

(Tbl.Properties.VariableNames) and valid MATLAB® identifiers. You can verify the variable names in Tbl by

using the isvarname function. If the variable names

are not valid, then you can convert them by using the matlab.lang.makeValidName function.

Data Types: char | string

Predictor data, specified as numeric matrix.

Each row corresponds to one observation, and each column corresponds to one predictor variable.

The length of Y and the number of rows

of X must be equal.

To specify the names of the predictors in the order of their

appearance in X, use the PredictorNames name-value

pair argument.

Data Types: single | double

Response data, specified as a categorical, character, or string array,

logical or numeric vector, or cell array of character vectors. Each entry in

Y is the response to or label for the observation in

the corresponding row of X or Tbl.

The length of Y and the number of rows of

X or Tbl must be equal. If the

response variable is a character array, then each element must correspond to

one row of the array.

You can specify the order of the classes using the

ClassNames name-value pair argument. Otherwise,

fitcensemble determines the class order, and stores

it in the Mdl.ClassNames.

Data Types: categorical | char | string | logical | single | double | cell

Name-Value Arguments

Specify optional pairs of arguments as

Name1=Value1,...,NameN=ValueN, where Name is

the argument name and Value is the corresponding value.

Name-value arguments must appear after other arguments, but the order of the

pairs does not matter.

Before R2021a, use commas to separate each name and value, and enclose

Name in quotes.

Example: 'CrossVal','on','LearnRate',0.05 specifies to implement

10-fold cross-validation and to use 0.05 as the learning

rate.

Note

You cannot use any cross-validation name-value argument together with the

OptimizeHyperparameters name-value argument. You can modify the

cross-validation for OptimizeHyperparameters only by using the

HyperparameterOptimizationOptions name-value argument.

General Ensemble Options

Ensemble aggregation method, specified as the comma-separated pair

consisting of 'Method' and one of the following

values.

| Value | Method | Classification Problem Support | Related Name-Value Pair Arguments |

|---|---|---|---|

'Bag' | Bootstrap aggregation (bagging, for example,

random forest [2]) — If

'Method' is

'Bag', then

fitcensemble uses bagging

with random predictor selections at each split

(random forest) by default. To use bagging without

the random selections, use tree learners whose

'NumVariablesToSample' value is

'all' or use discriminant

analysis learners. | Binary and multiclass | N/A |

'Subspace' | Random subspace | Binary and multiclass | NPredToSample |

'AdaBoostM1' | Adaptive boosting | Binary only | LearnRate |

'AdaBoostM2' | Adaptive boosting | Multiclass only | LearnRate |

'GentleBoost' | Gentle adaptive boosting | Binary only | LearnRate |

'LogitBoost' | Adaptive logistic regression | Binary only | LearnRate |

'LPBoost' | Linear programming boosting — Requires Optimization Toolbox™ | Binary and multiclass | MarginPrecision |

'RobustBoost' | Robust boosting — Requires Optimization Toolbox | Binary only | RobustErrorGoal,

RobustMarginSigma,

RobustMaxMargin |

'RUSBoost' | Random undersampling boosting | Binary and multiclass | LearnRate,

RatioToSmallest |

'TotalBoost' | Totally corrective boosting — Requires Optimization Toolbox | Binary and multiclass | MarginPrecision |

You can specify sampling options

(FResample, Replace,

Resample) for training data when you use

bagging ('Bag') or boosting

('TotalBoost', 'RUSBoost',

'AdaBoostM1', 'AdaBoostM2',

'GentleBoost', 'LogitBoost',

'RobustBoost', or

'LPBoost').

The defaults are:

'LogitBoost'for binary problems and'AdaBoostM2'for multiclass problems if'Learners'includes only tree learners'AdaBoostM1'for binary problems and'AdaBoostM2'for multiclass problems if'Learners'includes both tree and discriminant analysis learners'Subspace'if'Learners'does not include tree learners

For details about ensemble aggregation algorithms and examples, see Algorithms, Tips, Ensemble Algorithms, and Choose an Applicable Ensemble Aggregation Method.

Example: 'Method','Bag'

Number of ensemble learning cycles, specified as the comma-separated

pair consisting of 'NumLearningCycles' and a positive

integer or 'AllPredictorCombinations'.

If you specify a positive integer, then, at every learning cycle, the software trains one weak learner for every template object in

Learners. Consequently, the software trainsNumLearningCycles*numel(Learners)learners.If you specify

'AllPredictorCombinations', then setMethodto'Subspace'and specify one learner only forLearners. With these settings, the software trains learners for all possible combinations of predictors takenNPredToSampleat a time. Consequently, the software trainsnchoosek(size(X,2),NPredToSample)learners.

The software composes the ensemble using all trained learners and

stores them in Mdl.Trained.

For more details, see Tips.

Example: 'NumLearningCycles',500

Data Types: single | double | char | string

Weak learners to use in the ensemble, specified as the comma-separated

pair consisting of 'Learners' and a weak-learner

name, weak-learner template object, or cell vector of weak-learner

template objects.

| Weak Learner | Weak-Learner Name | Template Object Creation Function | Method

Setting |

|---|---|---|---|

| Discriminant analysis | 'discriminant' | templateDiscriminant | Recommended for 'Subspace' |

| k-nearest neighbors | 'knn' | templateKNN | For 'Subspace' only |

| Decision tree | 'tree' | templateTree | All methods except

'Subspace' |

Weak-learner name (

'discriminant','knn', or'tree') —fitcensembleuses weak learners created by a template object creation function with default settings. For example, specifying'Learners','discriminant'is the same as specifying'Learners',templateDiscriminant(). See the template object creation function pages for the default settings of a weak learner.Weak-learner template object —

fitcensembleuses the weak learners created by a template object creation function. Use the name-value pair arguments of the template object creation function to specify the settings of the weak learners.Cell vector of m weak-learner template objects —

fitcensemblegrows m learners per learning cycle (seeNumLearningCycles). For example, for an ensemble composed of two types of classification trees, supply{t1 t2}, wheret1andt2are classification tree template objects returned bytemplateTree.

The default 'Learners' value is

'knn' if 'Method' is

'Subspace'.

The default 'Learners' value is

'tree' if 'Method' is

'Bag' or any boosting method. The default values

of templateTree() depend on the value of

'Method'.

For bagged decision trees, the maximum number of decision splits (

'MaxNumSplits') isn–1, wherenis the number of observations. The number of predictors to select at random for each split ('NumVariablesToSample') is the square root of the number of predictors. Therefore,fitcensemblegrows deep decision trees. You can grow shallower trees to reduce model complexity or computation time.For boosted decision trees,

'MaxNumSplits'is 10 and'NumVariablesToSample'is'all'. Therefore,fitcensemblegrows shallow decision trees. You can grow deeper trees for better accuracy.

See templateTree for the

default settings of a weak learner. To obtain reproducible results, you

must specify the 'Reproducible' name-value pair argument of

templateTree as true if

'NumVariablesToSample' is not

'all'.

For details on the number of learners to train, see

NumLearningCycles and Tips.

Example: 'Learners',templateTree('MaxNumSplits',5)

Printout frequency, specified as a positive integer or "off".

To track the number of weak learners or folds that

fitcensemble trained so far, specify a positive integer. That

is, if you specify the positive integer m:

Without also specifying any cross-validation option (for example,

CrossVal), thenfitcensembledisplays a message to the command line every time it completes training m weak learners.And a cross-validation option, then

fitcensembledisplays a message to the command line every time it finishes training m folds.

If you specify "off", then fitcensemble does not

display a message when it completes training weak learners.

Tip

For fastest training of some boosted decision trees, set NPrint to the

default value "off". This tip holds when the classification

Method is "AdaBoostM1",

"AdaBoostM2", "GentleBoost", or

"LogitBoost", or when the regression Method is

"LSBoost".

Example: NPrint=5

Data Types: single | double | char | string

Number of bins for numeric predictors, specified as a positive integer scalar. This

argument is valid only when fitcensemble uses a tree learner, that

is, Learners is either "tree" or a template

object created by using templateTree.

If the

NumBinsvalue is empty (default), thenfitcensembledoes not bin any predictors.If you specify the

NumBinsvalue as a positive integer scalar (numBins), thenfitcensemblebins every numeric predictor into at mostnumBinsequiprobable bins, and then grows trees on the bin indices instead of the original data.The number of bins can be less than

numBinsif a predictor has fewer thannumBinsunique values.fitcensembledoes not bin categorical predictors.

When you use a large training data set, this binning option speeds up training but might cause

a potential decrease in accuracy. You can try NumBins=50 first, and then

change the value depending on the accuracy and training speed.

A trained model stores the bin edges in the BinEdges property.

Example: "NumBins",50

Data Types: single | double

Categorical predictors list, specified as one of the values in this table.

| Value | Description |

|---|---|

| Vector of positive integers |

Each entry in the vector is an index value indicating that the corresponding predictor is

categorical. The index values are between 1 and If |

| Logical vector |

A |

| Character matrix | Each row of the matrix is the name of a predictor variable. The names must match the entries in PredictorNames. Pad the names with extra blanks so each row of the character matrix has the same length. |

| String array or cell array of character vectors | Each element in the array is the name of a predictor variable. The names must match the entries in PredictorNames. |

"all" | All predictors are categorical. |

Specification of 'CategoricalPredictors' is appropriate if:

'Learners'specifies tree learners.'Learners'specifies k-nearest learners where all predictors are categorical.

Each learner identifies and treats categorical predictors in the same way as

the fitting function corresponding to the learner. See 'CategoricalPredictors' of fitcknn

for k-nearest learners and 'CategoricalPredictors' of fitctree

for tree learners.

Example: 'CategoricalPredictors','all'

Data Types: single | double | logical | char | string | cell

Predictor variable names, specified as a string array of unique names or cell array of unique

character vectors. The functionality of PredictorNames depends on the

way you supply the training data.

If you supply

XandY, then you can usePredictorNamesto assign names to the predictor variables inX.The order of the names in

PredictorNamesmust correspond to the column order ofX. That is,PredictorNames{1}is the name ofX(:,1),PredictorNames{2}is the name ofX(:,2), and so on. Also,size(X,2)andnumel(PredictorNames)must be equal.By default,

PredictorNamesis{'x1','x2',...}.

If you supply

Tbl, then you can usePredictorNamesto choose which predictor variables to use in training. That is,fitcensembleuses only the predictor variables inPredictorNamesand the response variable during training.PredictorNamesmust be a subset ofTbl.Properties.VariableNamesand cannot include the name of the response variable.By default,

PredictorNamescontains the names of all predictor variables.A good practice is to specify the predictors for training using either

PredictorNamesorformula, but not both.

Example: PredictorNames=["SepalLength","SepalWidth","PetalLength","PetalWidth"]

Data Types: string | cell

Response variable name, specified as a character vector or string scalar.

If you supply

Y, then you can useResponseNameto specify a name for the response variable.If you supply

ResponseVarNameorformula, then you cannot useResponseName.

Example: ResponseName="response"

Data Types: char | string

Parallel Options

Options for computing in parallel and setting random numbers, specified as a structure. Create

the Options structure using statset.

Note

You need Parallel Computing Toolbox™ to run computations in parallel.

This table describes the option fields and their values.

| Field Name | Value | Default |

|---|---|---|

UseParallel | Set this value to | false |

UseSubstreams | Set this value to To compute reproducibly, set

| false |

Streams | Specify this value as a RandStream object or cell array of such objects. Use a single object except when the UseParallel value is true and the UseSubstreams value is false. In that case, use a cell array that has the same size as the parallel pool. | If you do not specify Streams, fitcensemble uses the

default stream or streams. |

For an example using reproducible parallel training, see Train Classification Ensemble in Parallel.

For dual-core systems and above, fitcensemble parallelizes

training using Intel® Threading Building Blocks (TBB). Therefore, specifying the

UseParallel option as true might not provide a

significant speedup on a single computer. For details on Intel TBB, see https://www.intel.com/content/www/us/en/developer/tools/oneapi/onetbb.html.

Example: Options=statset(UseParallel=true)

Data Types: struct

Cross-Validation Options

Cross-validation flag, specified as the comma-separated pair

consisting of 'Crossval' and 'on' or 'off'.

If you specify 'on', then the software implements

10-fold cross-validation.

To override this cross-validation setting, use one of these

name-value pair arguments: CVPartition, Holdout, KFold,

or Leaveout. To create a cross-validated model,

you can use one cross-validation name-value pair argument at a time

only.

Alternatively, cross-validate later by passing Mdl to crossval.

Example: 'Crossval','on'

Cross-validation partition, specified as a cvpartition object that specifies the type of cross-validation and the

indexing for the training and validation sets.

To create a cross-validated model, you can specify only one of these four name-value

arguments: CVPartition, Holdout,

KFold, or Leaveout.

Example: Suppose you create a random partition for 5-fold cross-validation on 500

observations by using cvp = cvpartition(500,KFold=5). Then, you can

specify the cross-validation partition by setting

CVPartition=cvp.

Fraction of the data used for holdout validation, specified as a scalar value in the range

(0,1). If you specify Holdout=p, then the software completes these

steps:

Randomly select and reserve

p*100% of the data as validation data, and train the model using the rest of the data.Store the compact trained model in the

Trainedproperty of the cross-validated model.

To create a cross-validated model, you can specify only one of these four name-value

arguments: CVPartition, Holdout,

KFold, or Leaveout.

Example: Holdout=0.1

Data Types: double | single

Number of folds to use in the cross-validated model, specified as a positive integer value

greater than 1. If you specify KFold=k, then the software completes

these steps:

Randomly partition the data into

ksets.For each set, reserve the set as validation data, and train the model using the other

k– 1 sets.Store the

kcompact trained models in ak-by-1 cell vector in theTrainedproperty of the cross-validated model.

To create a cross-validated model, you can specify only one of these four name-value

arguments: CVPartition, Holdout,

KFold, or Leaveout.

Example: KFold=5

Data Types: single | double

Leave-one-out cross-validation flag, specified as "on" or

"off". If you specify Leaveout="on", then for

each of the n observations (where n is the number

of observations, excluding missing observations, specified in the

NumObservations property of the model), the software completes

these steps:

Reserve the one observation as validation data, and train the model using the other n – 1 observations.

Store the n compact trained models in an n-by-1 cell vector in the

Trainedproperty of the cross-validated model.

To create a cross-validated model, you can specify only one of these four name-value

arguments: CVPartition, Holdout,

KFold, or Leaveout.

Example: Leaveout="on"

Data Types: char | string

Other Classification Options

Names of classes to use for training, specified as a categorical, character, or string

array; a logical or numeric vector; or a cell array of character vectors.

ClassNames must have the same data type as the response variable

in Tbl or Y.

If ClassNames is a character array, then each element must correspond to one row of the array.

Use ClassNames to:

Specify the order of the classes during training.

Specify the order of any input or output argument dimension that corresponds to the class order. For example, use

ClassNamesto specify the order of the dimensions ofCostor the column order of classification scores returned bypredict.Select a subset of classes for training. For example, suppose that the set of all distinct class names in

Yis["a","b","c"]. To train the model using observations from classes"a"and"c"only, specifyClassNames=["a","c"].

The default value for ClassNames is the set of all distinct class names in the response variable in Tbl or Y.

Example: ClassNames=["b","g"]

Data Types: categorical | char | string | logical | single | double | cell

Misclassification cost, specified as the comma-separated pair

consisting of 'Cost' and a square matrix or structure.

If you specify:

The square matrix

Cost, thenCost(i,j)is the cost of classifying a point into classjif its true class isi. That is, the rows correspond to the true class and the columns correspond to the predicted class. To specify the class order for the corresponding rows and columns ofCost, also specify theClassNamesname-value pair argument.The structure

S, then it must have two fields:S.ClassNames, which contains the class names as a variable of the same data type asYS.ClassificationCosts, which contains the cost matrix with rows and columns ordered as inS.ClassNames

The default is ones(, where K) -

eye(K)K is

the number of distinct classes.

fitcensemble uses Cost to adjust the prior

class probabilities specified in Prior. Then,

fitcensemble uses the adjusted prior probabilities for

training.

Example: 'Cost',[0 1 2 ; 1 0 2; 2 2 0]

Data Types: double | single | struct

Prior probabilities for each class, specified as the comma-separated

pair consisting of 'Prior' and a value in this

table.

| Value | Description |

|---|---|

'empirical' | The class prior probabilities are the class relative frequencies

in Y. |

'uniform' | All class prior probabilities are equal to 1/K, where K is the number of classes. |

| numeric vector | Each element is a class prior probability. Order the elements according to

Mdl.ClassNames or specify the order using the

ClassNames name-value pair argument. The

software normalizes the elements such that they sum to

1. |

| structure array | A structure

|

fitcensemble normalizes

the prior probabilities in Prior to sum to 1.

Example: struct('ClassNames',{{'setosa','versicolor','virginica'}},'ClassProbs',1:3)

Data Types: char | string | double | single | struct

Score transformation, specified as a character vector, string scalar, or function handle.

This table summarizes the available character vectors and string scalars.

| Value | Description |

|---|---|

"doublelogit" | 1/(1 + e–2x) |

"invlogit" | log(x / (1 – x)) |

"ismax" | Sets the score for the class with the largest score to 1, and sets the scores for all other classes to 0 |

"logit" | 1/(1 + e–x) |

"none" or "identity" | x (no transformation) |

"sign" | –1 for x < 0 0 for x = 0 1 for x > 0 |

"symmetric" | 2x – 1 |

"symmetricismax" | Sets the score for the class with the largest score to 1, and sets the scores for all other classes to –1 |

"symmetriclogit" | 2/(1 + e–x) – 1 |

For a MATLAB function or a function you define, use its function handle for the score transform. The function handle must accept a matrix (the original scores) and return a matrix of the same size (the transformed scores).

Example: ScoreTransform="logit"

Data Types: char | string | function_handle

Observation weights, specified as a numeric vector of positive values or name of a variable in

Tbl. The software weighs the observations in each row of

X or Tbl with the corresponding value in

Weights. The size of Weights must equal the

number of rows of X or Tbl.

If you specify the input data as a table Tbl, then

Weights can be the name of a variable in Tbl

that contains a numeric vector. In this case, you must specify

Weights as a character vector or string scalar. For example, if

the weights vector W is stored as Tbl.W, then

specify it as "W". Otherwise, the software treats all columns of

Tbl, including W, as predictors or the

response when training the model.

By default, Weights is

ones(, where

n,1)n is the number of observations in X

or Tbl.

The software normalizes Weights to sum up to the value of the prior

probability in the respective class. Inf weights are not supported.

Data Types: double | single | char | string

Sampling Options for Boosting Methods and Bagging

Fraction of the training set to resample for every weak learner, specified as a positive

scalar in (0,1]. To use 'FResample', set

Resample to 'on'.

Example: 'FResample',0.75

Data Types: single | double

Flag indicating sampling with replacement, specified as the

comma-separated pair consisting of 'Replace' and 'off' or 'on'.

For

'on', the software samples the training observations with replacement.For

'off', the software samples the training observations without replacement. If you setResampleto'on', then the software samples training observations assuming uniform weights. If you also specify a boosting method, then the software boosts by reweighting observations.

Unless you set Method to 'bag' or

set Resample to 'on', Replace has

no effect.

Example: 'Replace','off'

Flag indicating to resample, specified as the comma-separated

pair consisting of 'Resample' and 'off' or 'on'.

If

Methodis a boosting method, then:'Resample','on'specifies to sample training observations using updated weights as the multinomial sampling probabilities.'Resample','off'(default) specifies to reweight observations at every learning iteration.

If

Methodis'bag', then'Resample'must be'on'. The software resamples a fraction of the training observations (seeFResample) with or without replacement (seeReplace).

If you specify to resample using Resample, then it is good

practice to resample to entire data set. That is, use the default setting of 1 for

FResample.

AdaBoostM1, AdaBoostM2, LogitBoost, and GentleBoost Method Options

Learning rate for shrinkage, specified as the comma-separated pair consisting of

'LearnRate' and a numeric scalar in the interval (0,1].

To train an ensemble using shrinkage, set LearnRate to a value less than 1, for example, 0.1 is a popular choice. Training an ensemble using shrinkage requires more learning iterations, but often achieves better accuracy.

Example: 'LearnRate',0.1

Data Types: single | double

RUSBoost Method Options

Learning rate for shrinkage, specified as the comma-separated pair consisting of

'LearnRate' and a numeric scalar in the interval (0,1].

To train an ensemble using shrinkage, set LearnRate to a value less than 1, for example, 0.1 is a popular choice. Training an ensemble using shrinkage requires more learning iterations, but often achieves better accuracy.

Example: 'LearnRate',0.1

Data Types: single | double

Sampling proportion with respect to the lowest-represented class,

specified as the comma-separated pair consisting of 'RatioToSmallest' and

a numeric scalar or numeric vector of positive values with length

equal to the number of distinct classes in the training data.

Suppose that there are K classes

in the training data and the lowest-represented class has m observations

in the training data.

If you specify the positive numeric scalar

s, thenfitcensemblesampless*mIf you specify the numeric vector

[, thens1,s2,...,sK]fitcensemblesamplessi*mi,i= 1,...,K. The elements ofRatioToSmallestcorrespond to the order of the class names specified usingClassNames(see Tips).

The default value is ones(,

which specifies to sample K,1)m observations

from each class.

Example: 'RatioToSmallest',[2,1]

Data Types: single | double

LPBoost and TotalBoost Method Options

Margin precision to control convergence speed, specified as

the comma-separated pair consisting of 'MarginPrecision' and

a numeric scalar in the interval [0,1]. MarginPrecision affects

the number of boosting iterations required for convergence.

Tip

To train an ensemble using many learners, specify a small value

for MarginPrecision. For training using a few learners,

specify a large value.

Example: 'MarginPrecision',0.5

Data Types: single | double

RobustBoost Method Options

Target classification error, specified as the comma-separated

pair consisting of 'RobustErrorGoal' and a nonnegative

numeric scalar. The upper bound on possible values depends on the

values of RobustMarginSigma and RobustMaxMargin.

However, the upper bound cannot exceed 1.

Tip

For a particular training set, usually there is an optimal range

for RobustErrorGoal. If you set it too low or too

high, then the software can produce a model with poor classification

accuracy. Try cross-validating to search for the appropriate value.

Example: 'RobustErrorGoal',0.05

Data Types: single | double

Classification margin distribution spread over the training data, specified as the

comma-separated pair consisting of 'RobustMarginSigma' and a positive

numeric scalar. Before specifying RobustMarginSigma, consult the

literature on RobustBoost, for example, [3].

Example: 'RobustMarginSigma',0.5

Data Types: single | double

Maximal classification margin in the training data, specified

as the comma-separated pair consisting of 'RobustMaxMargin' and

a nonnegative numeric scalar. The software minimizes the number of

observations in the training data having classification margins below RobustMaxMargin.

Example: 'RobustMaxMargin',1

Data Types: single | double

Random Subspace Method Options

Number of predictors to sample for each random subspace learner,

specified as the comma-separated pair consisting of 'NPredToSample' and

a positive integer in the interval 1,...,p, where p is

the number of predictor variables (size(X,2) or size(Tbl,2)).

Data Types: single | double

Hyperparameter Optimization Options

Parameters to optimize, specified as the comma-separated pair

consisting of 'OptimizeHyperparameters' and one of

the following:

'none'— Do not optimize.'auto'— Use{'Method','NumLearningCycles','LearnRate'}along with the default parameters for the specifiedLearners:Learners='tree'(default) —{'MinLeafSize'}Learners='discriminant'—{'Delta','Gamma'}Learners='knn'—{'Distance','NumNeighbors','Standardize'}

Note

For hyperparameter optimization,

Learnersmust be a single argument, not a string array or cell array.'all'— Optimize all eligible parameters.String array or cell array of eligible parameter names

Vector of

optimizableVariableobjects, typically the output ofhyperparameters

The optimization attempts to minimize the cross-validation loss

(error) for fitcensemble by varying the parameters. To control the

cross-validation type and other aspects of the optimization, use the

HyperparameterOptimizationOptions name-value argument. When you use

HyperparameterOptimizationOptions, you can use the (compact) model size

instead of the cross-validation loss as the optimization objective by setting the

ConstraintType and ConstraintBounds options.

Note

The values of OptimizeHyperparameters override any values you

specify using other name-value arguments. For example, setting

OptimizeHyperparameters to "auto" causes

fitcensemble to optimize hyperparameters corresponding to the

"auto" option and to ignore any specified values for the

hyperparameters.

The eligible parameters for fitcensemble

are:

Method— Depends on the number of classes.Two classes — Eligible methods are

'Bag','GentleBoost','LogitBoost','AdaBoostM1', and'RUSBoost'.Three or more classes — Eligible methods are

'Bag','AdaBoostM2', and'RUSBoost'.

NumLearningCycles—fitcensemblesearches among positive integers, by default log-scaled with range[10,500].LearnRate—fitcensemblesearches among positive reals, by default log-scaled with range[1e-3,1].The eligible hyperparameters for the chosen

Learners:Learners Eligible Hyperparameters

Bold = Used By DefaultDefault Range 'discriminant'DeltaLog-scaled in the range [1e-6,1e3]DiscrimType'linear','quadratic','diagLinear','diagQuadratic','pseudoLinear', and'pseudoQuadratic'GammaReal values in [0,1]'knn'Distance'cityblock','chebychev','correlation','cosine','euclidean','hamming','jaccard','mahalanobis','minkowski','seuclidean', and'spearman'DistanceWeight'equal','inverse', and'squaredinverse'ExponentPositive values in [0.5,3]NumNeighborsPositive integer values log-scaled in the range [1, max(2,round(NumObservations/2))]Standardize'true'and'false''tree'MaxNumSplitsIntegers log-scaled in the range [1,max(2,NumObservations-1)]MinLeafSizeIntegers log-scaled in the range [1,max(2,floor(NumObservations/2))]NumVariablesToSampleIntegers in the range [1,max(2,NumPredictors)]SplitCriterion'gdi','deviance', and'twoing'Alternatively, use

hyperparameterswith your chosenLearners. Note that you must specify the predictor data and response when creating anoptimizableVariableobject.load fisheriris params = hyperparameters('fitcensemble',meas,species,'Tree');

To see the eligible and default hyperparameters, examine

params.

Set nondefault parameters by passing a vector of

optimizableVariable objects that have nondefault

values. For example,

load fisheriris params = hyperparameters('fitcensemble',meas,species,'Tree'); params(4).Range = [1,30];

Pass params as the value of

OptimizeHyperparameters.

By default, the iterative display appears at the command line,

and plots appear according to the number of hyperparameters in the optimization. For the

optimization and plots, the objective function is the misclassification rate. To control the

iterative display, set the Verbose option of the

HyperparameterOptimizationOptions name-value argument. To control the

plots, set the ShowPlots field of the

HyperparameterOptimizationOptions name-value argument.

For an example, see Optimize Classification Ensemble.

Example: 'OptimizeHyperparameters',{'Method','NumLearningCycles','LearnRate','MinLeafSize','MaxNumSplits'}

Options for optimization, specified as a HyperparameterOptimizationOptions object or a structure. This argument modifies the effect of the OptimizeHyperparameters name-value argument. If you specify HyperparameterOptimizationOptions, you must also specify OptimizeHyperparameters. All the options are optional. However, you must set ConstraintBounds and ConstraintType to return AggregateOptimizationResults. The options that you can set in a structure are the same as those in the HyperparameterOptimizationOptions object.

| Option | Values | Default |

|---|---|---|

Optimizer |

| "bayesopt" |

ConstraintBounds | Constraint bounds for N optimization problems, specified as an N-by-2 numeric matrix or | [] |

ConstraintTarget | Constraint target for the optimization problems, specified as | If you specify ConstraintBounds and ConstraintType, then the default value is "matlab". Otherwise, the default value is []. |

ConstraintType | Constraint type for the optimization problems, specified as | [] |

AcquisitionFunctionName | Type of acquisition function:

Acquisition functions whose names include | "expected-improvement-per-second-plus" |

LossFun | Type of validation loss to optimize, specified as "auto",

"classifcost", or "classiferror". In

the case of fitcensemble, the "auto" and

"classiferror" options are equivalent, and the software

uses the misclassified rate in decimal. "classifcost"

indicates to use the observed misclassification cost. | "auto" |

MaxObjectiveEvaluations | Maximum number of objective function evaluations. If you specify multiple optimization problems using ConstraintBounds, the value of MaxObjectiveEvaluations applies to each optimization problem individually. | 30 for "bayesopt" and "randomsearch", and the entire grid for "gridsearch" |

MaxTime | Time limit for the optimization, specified as a nonnegative real scalar. The time limit is in seconds, as measured by | Inf |

NumGridDivisions | For Optimizer="gridsearch", the number of values in each dimension. The value can be a vector of positive integers giving the number of values for each dimension, or a scalar that applies to all dimensions. The software ignores this option for categorical variables. | 10 |

ShowPlots | Logical value indicating whether to show plots of the optimization progress. If this option

is true, the software plots the best observed objective

function value against the iteration number. If you use Bayesian optimization

(Optimizer="bayesopt"), the software

also plots the best estimated objective function value. The best observed

objective function values and best estimated objective function values

correspond to the values in the BestSoFar (observed) and

BestSoFar (estim.) columns of the iterative display,

respectively. You can find these values in the properties ObjectiveMinimumTrace and EstimatedObjectiveMinimumTrace of the

SupervisedLearningBayesianOptimization object. If the

problem includes one or two optimization parameters for Bayesian optimization,

then ShowPlots also plots a model of the objective function

against the parameters. | true |

SaveIntermediateResults | Logical value indicating whether to save the optimization results. If this

option is true, the software overwrites a workspace variable

named SupervisedLearningBayesoptResults at each iteration.

The variable is a SupervisedLearningBayesianOptimization object. If you specify

multiple optimization problems using ConstraintBounds, the

workspace variable is an AggregateBayesianOptimization object named

AggregateBayesoptResults. | false |

Verbose | Display level at the command line:

For details, see the | 1 |

UseParallel | Logical value indicating whether to run the Bayesian optimization in parallel, which requires Parallel Computing Toolbox. Due to the nonreproducibility of parallel timing, parallel Bayesian optimization does not necessarily yield reproducible results. For details, see Parallel Bayesian Optimization. | false |

Repartition | Logical value indicating whether to repartition the cross-validation at every iteration. If this option is A value of | false |

| Specify only one of the following three options. | ||

CVPartition | cvpartition object created by cvpartition | KFold=5 if you do not specify a cross-validation option |

Holdout | Scalar in the range (0,1) representing the holdout fraction | |

KFold | Integer greater than 1 | |

Example: HyperparameterOptimizationOptions=struct(UseParallel=true)

Output Arguments

Trained classification ensemble model, returned as a ClassificationBaggedEnsemble

object, a ClassificationEnsemble object,

a ClassificationPartitionedEnsemble object, or a cell array of

model objects.

If you set any of the name-value arguments

CrossVal,CVPartition,Holdout,KFold, orLeaveout, thenMdlis aClassificationPartitionedEnsembleobject.If you specify

OptimizeHyperparametersand set theConstraintTypeandConstraintBoundsoptions ofHyperparameterOptimizationOptions, thenMdlis an N-by-1 cell array of model objects, where N is equal to the number of rows inConstraintBounds. If none of the optimization problems yields a feasible model, then each cell array value is[].Otherwise,

Mdlis aClassificationBaggedEnsembleorClassificationEnsemblemodel object, depending on theMethodname-value argument.

To reference properties of a model object, use dot notation.

Aggregate optimization results for multiple optimization problems, returned as an AggregateBayesianOptimization object. To return

AggregateOptimizationResults, you must specify

OptimizeHyperparameters and

HyperparameterOptimizationOptions. You must also specify the

ConstraintType and ConstraintBounds

options of HyperparameterOptimizationOptions. For an example that

shows how to produce this output, see Hyperparameter Optimization with Multiple Constraint Bounds.

Tips

NumLearningCyclescan vary from a few dozen to a few thousand. Usually, an ensemble with good predictive power requires from a few hundred to a few thousand weak learners. However, you do not have to train an ensemble for that many cycles at once. You can start by growing a few dozen learners, inspect the ensemble performance and then, if necessary, train more weak learners usingresumefor classification problems.Ensemble performance depends on the ensemble setting and the setting of the weak learners. That is, if you specify weak learners with default parameters, then the ensemble can perform poorly. Therefore, a good practice is to adjust the parameters of the weak learners using templates, and to choose values that minimize generalization error.

For example, this code shows how to adjust the maximum number of splits and the split criterion for tree weak learners.

Specifyt = templateTree(MaxNumSplits=5,SplitCriterion="deviance")Learners=tin the call tofitcensemble.If you specify to resample using

Resample, then it is good practice to resample to entire data set. That is, use the default setting of1forFResample.If the ensemble aggregation method (

Method) is'bag'and:The misclassification cost (

Cost) is highly imbalanced, then, for in-bag samples, the software oversamples unique observations from the class that has a large penalty.The class prior probabilities (

Prior) are highly skewed, the software oversamples unique observations from the class that has a large prior probability.

For smaller sample sizes, these combinations can result in a low relative frequency of out-of-bag observations from the class that has a large penalty or prior probability. Consequently, the estimated out-of-bag error is highly variable and it can be difficult to interpret. To avoid large estimated out-of-bag error variances, particularly for small sample sizes, set a more balanced misclassification cost matrix using

Costor a less skewed prior probability vector usingPrior.Because the order of some input and output arguments correspond to the distinct classes in the training data, it is good practice to specify the class order using the

ClassNamesname-value pair argument.To determine the class order quickly, remove all observations from the training data that are unclassified (that is, have a missing label), obtain and display an array of all the distinct classes, and then specify the array for

ClassNames. For example, suppose the response variable (Y) is a cell array of labels. This code specifies the class order in the variableclassNames.Ycat = categorical(Y); classNames = categories(Ycat)

categoricalassigns<undefined>to unclassified observations andcategoriesexcludes<undefined>from its output. Therefore, if you use this code for cell arrays of labels or similar code for categorical arrays, then you do not have to remove observations with missing labels to obtain a list of the distinct classes.To specify that the class order from lowest-represented label to most-represented, then quickly determine the class order (as in the previous bullet), but arrange the classes in the list by frequency before passing the list to

ClassNames. Following from the previous example, this code specifies the class order from lowest- to most-represented inclassNamesLH.Ycat = categorical(Y); classNames = categories(Ycat); freq = countcats(Ycat); [~,idx] = sort(freq); classNamesLH = classNames(idx);

When the ensemble aggregation method (

Method) is"AdaBoostM1"and one of the weak learners produces a training loss of0, thefitcensemblefunction omits the weak learner from the ensemble. If the software is unable to return a useful model, train the weak learner directly using the appropriate fit function (for example,fitctree).After training a model, you can generate C/C++ code that predicts labels for new data. Generating C/C++ code requires MATLAB Coder™. For details, see Introduction to Code Generation for Statistics and Machine Learning Functions.

Algorithms

For details of ensemble aggregation algorithms, see Ensemble Algorithms.

If you set

Methodto be a boosting algorithm andLearnersto be decision trees, then the software grows shallow decision trees by default. You can adjust tree depth by specifying theMaxNumSplits,MinLeafSize, andMinParentSizename-value pair arguments usingtemplateTree.If you specify the

Cost,Prior, andWeightsname-value arguments, the output model object stores the specified values in theCost,Prior, andWproperties, respectively. TheCostproperty stores the user-specified cost matrix (C) without modification. ThePriorandWproperties store the prior probabilities and observation weights, respectively, after normalization. For model training, the software updates the prior probabilities and observation weights to incorporate the penalties described in the cost matrix. For details, see Misclassification Cost Matrix, Prior Probabilities, and Observation Weights.For bagging (

'Method','Bag'),fitcensemblegenerates in-bag samples by oversampling classes with large misclassification costs and undersampling classes with small misclassification costs. Consequently, out-of-bag samples have fewer observations from classes with large misclassification costs and more observations from classes with small misclassification costs. If you train a classification ensemble using a small data set and a highly skewed cost matrix, then the number of out-of-bag observations per class can be low. Therefore, the estimated out-of-bag error can have a large variance and can be difficult to interpret. The same phenomenon can occur for classes with large prior probabilities.For the RUSBoost ensemble aggregation method (

'Method','RUSBoost'), the name-value pair argumentRatioToSmallestspecifies the sampling proportion for each class with respect to the lowest-represented class. For example, suppose that there are two classes in the training data: A and B. A has 100 observations and B has 10 observations. Suppose also that the lowest-represented class hasmobservations in the training data.If you set

'RatioToSmallest',2, thens*m2*10=20. Consequently,fitcensembletrains every learner using 20 observations from class A and 20 observations from class B. If you set'RatioToSmallest',[2 2], then you obtain the same result.If you set

'RatioToSmallest',[2,1], thens1*m2*10=20ands2*m1*10=10. Consequently,fitcensembletrains every learner using 20 observations from class A and 10 observations from class B.

For dual-core systems and above,

fitcensembleparallelizes training using Intel Threading Building Blocks (TBB). For details on Intel TBB, see https://www.intel.com/content/www/us/en/developer/tools/oneapi/onetbb.html.

References

[1] Breiman, L. “Bagging Predictors.” Machine Learning. Vol. 26, pp. 123–140, 1996.

[2] Breiman, L. “Random Forests.” Machine Learning. Vol. 45, pp. 5–32, 2001.

[3] Freund, Y. “A more robust boosting algorithm.” arXiv:0905.2138v1, 2009.

[4] Freund, Y. and R. E. Schapire. “A Decision-Theoretic Generalization of On-Line Learning and an Application to Boosting.” J. of Computer and System Sciences, Vol. 55, pp. 119–139, 1997.

[5] Friedman, J. “Greedy function approximation: A gradient boosting machine.” Annals of Statistics, Vol. 29, No. 5, pp. 1189–1232, 2001.

[6] Friedman, J., T. Hastie, and R. Tibshirani. “Additive logistic regression: A statistical view of boosting.” Annals of Statistics, Vol. 28, No. 2, pp. 337–407, 2000.

[7] Hastie, T., R. Tibshirani, and J. Friedman. The Elements of Statistical Learning section edition, Springer, New York, 2008.

[8] Ho, T. K. “The random subspace method for constructing decision forests.” IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 20, No. 8, pp. 832–844, 1998.

[9] Schapire, R. E., Y. Freund, P. Bartlett, and W.S. Lee. “Boosting the margin: A new explanation for the effectiveness of voting methods.” Annals of Statistics, Vol. 26, No. 5, pp. 1651–1686, 1998.

[10] Seiffert, C., T. Khoshgoftaar, J. Hulse, and A. Napolitano. “RUSBoost: Improving classification performance when training data is skewed.” 19th International Conference on Pattern Recognition, pp. 1–4, 2008.

[11] Warmuth, M., J. Liao, and G. Ratsch. “Totally corrective boosting algorithms that maximize the margin.” Proc. 23rd Int’l. Conf. on Machine Learning, ACM, New York, pp. 1001–1008, 2006.

Extended Capabilities

fitcensemble supports parallel training

using the 'Options' name-value argument. Create options using statset, such as options = statset('UseParallel',true).

Parallel ensemble training requires you to set the 'Method' name-value

argument to 'Bag'. Parallel training is available only for tree learners, the

default type for 'Bag'.

To perform parallel hyperparameter optimization, use the UseParallel=true

option in the HyperparameterOptimizationOptions name-value argument in

the call to the fitcensemble function.

For more information on parallel hyperparameter optimization, see Parallel Bayesian Optimization.

For general information about parallel computing, see Run MATLAB Functions with Automatic Parallel Support (Parallel Computing Toolbox).

Usage notes and limitations:

fitcensemblesupports only decision tree learners. You can specify the name-value argumentLearnersonly as"tree", a learner template object or cell vector of learner template objects created bytemplateTree. If you usetemplateTree, you can specify the name-value argumentsSurrogateandPredictorSelectiononly as"off"and"allsplits", respectively.You can specify the name-value argument

Methodonly as"AdaBoostM1","AdaBoostM2","GentleBoost","LogitBoost", or"RUSBoost".You cannot specify the name-value argument

NPredToSample.If you use

templateTreeand the data contains categorical predictors, the following apply:For multiclass classification,

fitcensemblesupports only theOVAbyClassalgorithm for finding the best split.You can specify the name-value argument

NumVariablesToSampleonly as"all".