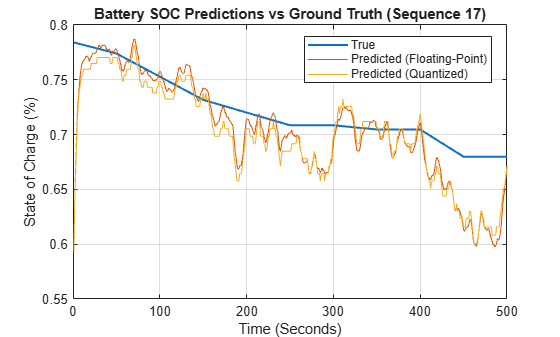

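Quantization

Quantize network parameters to reduced-precision data types; prepare deep learning networks for fixed-point code generation

Quantize the weights, biases, and activations of layers to reduced-precision scaled integer data types. You can then generate C/C++, CUDA®, or HDL code from the quantized network for deployment to GPU, FPGA, or CPU.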
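As a rough illustration of what a "scaled integer" representation means, the following Python sketch maps a few floating-point values to signed 8-bit integers that share one power-of-two scale factor, then maps them back. The function names, the sample weights, and the choice of a power-of-two scale are assumptions made for this example; it is not the toolbox's implementation, and the scaling the toolbox actually applies is described in Data Types and Scaling for Quantization of Deep Neural Networks.

```python
import math


def quantize_int8(values):
    """Map floats to signed 8-bit integers sharing one power-of-two scale.

    Hypothetical helper for illustration only; not a toolbox function.
    """
    max_abs = max(abs(v) for v in values)
    # Smallest exponent e such that max_abs / 2**e still fits within 127.
    exponent = math.ceil(math.log2(max_abs / 127)) if max_abs > 0 else 0
    scale = 2.0 ** exponent
    # Round to the nearest integer and clamp to the int8 range.
    ints = [max(-128, min(127, round(v / scale))) for v in values]
    return ints, scale


def dequantize(ints, scale):
    """Recover approximate floating-point values from the scaled integers."""
    return [i * scale for i in ints]


weights = [-0.82, 0.11, 0.47, 1.30]  # made-up sample values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)          # stored 8-bit integers
print(scale)      # shared scale factor
print(max_error)  # worst-case rounding error, at most scale / 2
```

Each value is stored in one byte instead of four, at the cost of a rounding error bounded by half the scale factor. A wider value range forces a larger scale and therefore a coarser grid, which is why collecting representative ranges before quantizing matters.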

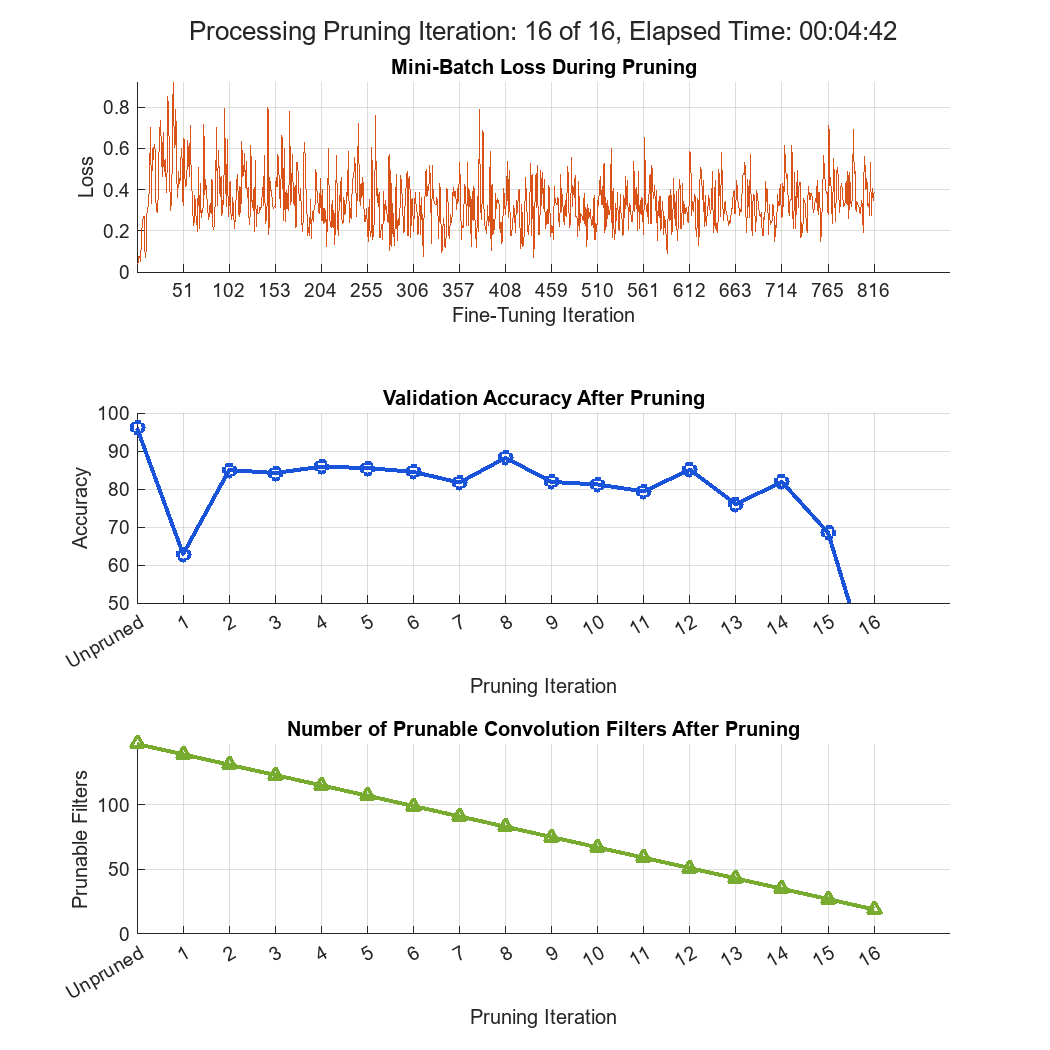

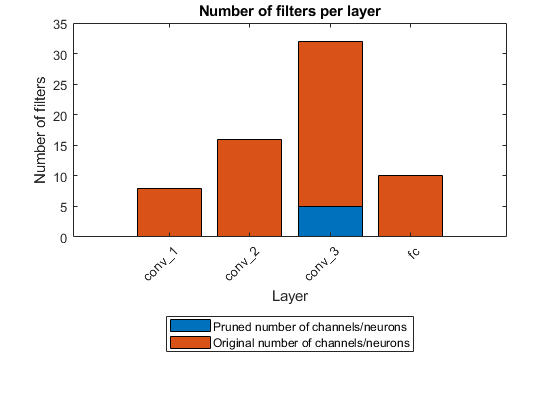

For a detailed overview of the compression techniques available in Deep Learning Toolbox™ Model Compression Library, see Reduce Memory Footprint of Deep Neural Networks.

Functions

| Function | Description |
| --- | --- |
| dlquantizer | Quantize a deep neural network to 8-bit scaled integer data types |
| dlquantizationOptions | Options for quantizing a trained deep neural network |
| prepareNetwork | Prepare deep neural network for quantization (Since R2024b) |
| calibrate | Simulate and collect ranges of a deep neural network |
| quantize | Quantize deep neural network (Since R2022a) |
| validate | Quantize and validate a deep neural network |
| quantizationDetails | Display quantization details for a neural network (Since R2022a) |
| estimateNetworkMetrics | Estimate network metrics for specific layers of a neural network (Since R2022a) |
| equalizeLayers | Equalize layer parameters of deep neural network (Since R2022b) |
| exportNetworkToSimulink | Generate Simulink model that contains deep learning layer blocks and subsystems that correspond to deep learning layer objects (Since R2024b) |

Apps

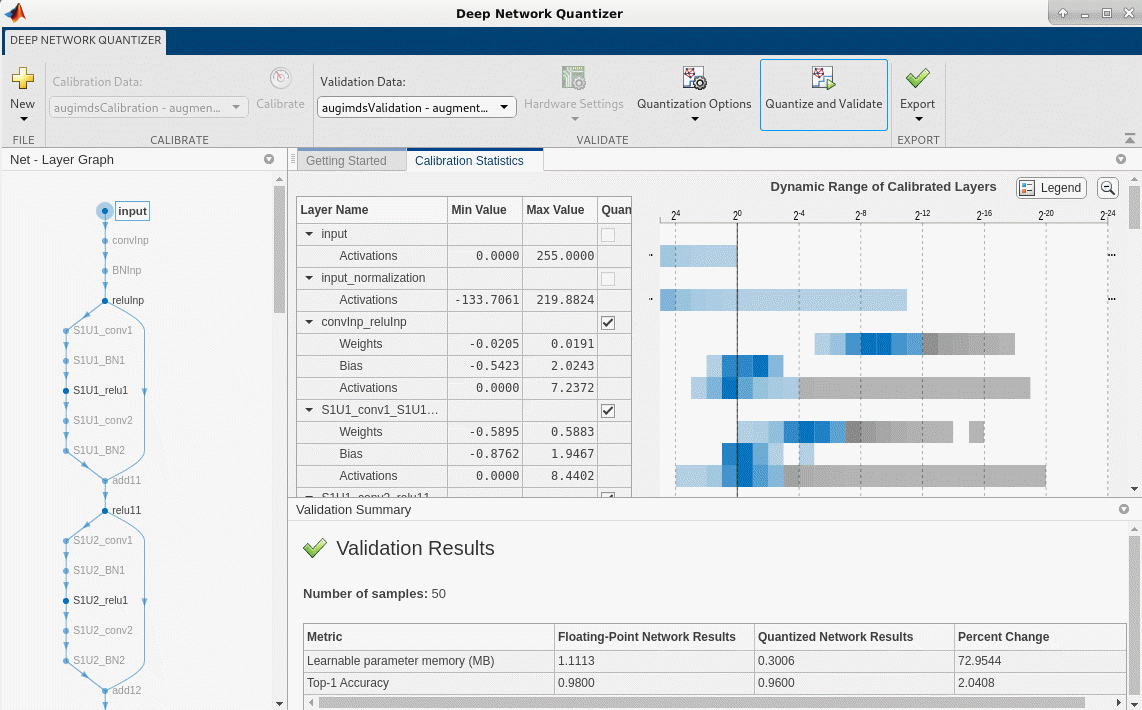

| App | Description |
| --- | --- |
| Deep Network Quantizer | Quantize deep neural network to 8-bit scaled integer data types |

Topics

Learn About Quantization

- Quantization of Deep Neural Networks

  Learn about deep learning quantization tools and workflows.

- Data Types and Scaling for Quantization of Deep Neural Networks

  Understand effects of quantization and how to visualize dynamic ranges of network convolution layers.

Pre-Deployment Workflows

- Prepare Data for Quantizing Networks

  Learn about supported data formats for quantization workflows.

- Quantize Multiple-Input Network Using Image and Feature Data

  Quantize a network with multiple inputs.

- Export Quantized Networks to Simulink and Generate Code

  Export a quantized neural network to Simulink and generate code from the exported model.

- Quantization-Aware Training with Pseudo-Quantization Noise

  Perform quantization-aware training with pseudo-quantization noise on the MobileNet-V2 network. (Since R2026a)

Deployment

- Quantize Semantic Segmentation Network and Generate CUDA Code

  Quantize a convolutional neural network trained for semantic segmentation and generate CUDA code.

- Classify Images on FPGA by Using Quantized GoogLeNet Network (Deep Learning HDL Toolbox)

  This example shows how to use the Deep Learning HDL Toolbox™ to deploy a quantized GoogLeNet network to classify an image.

- Compress Image Classification Network for Deployment to Resource-Constrained Embedded Devices

  Reduce the memory footprint and computation requirements of an image classification network for deployment to resource-constrained embedded devices such as the Raspberry Pi®.

Considerations

- Quantization Workflow System Requirements

  See what products are required for the quantization of deep neural networks.

- Supported Layers for Quantization

  Learn which deep neural network layers are supported for quantization.