Gaussian Process Regression

Apps

| Regression Learner | Train regression models to predict data using supervised machine learning |

Blocks

| RegressionGP Predict | Predict responses using a Gaussian process (GP) regression model (since R2022a) |

Functions

Objects

| RegressionGP | Gaussian process regression model |
| CompactRegressionGP | Compact Gaussian process regression model class |
| RegressionPartitionedGP | Cross-validated Gaussian process regression (GPR) model (since R2022b) |
| RegressionChainEnsemble | Multiresponse regression model (since R2024b) |
| CompactRegressionChainEnsemble | Compact multiresponse regression model (since R2024b) |

Topics

- Gaussian Process Regression Models

Gaussian process regression (GPR) models are nonparametric, kernel-based probabilistic models.

- Kernel (Covariance) Function Options

In Gaussian processes, the covariance function expresses the expectation that points with similar predictor values will have similar response values.

- Exact GPR Method

Learn how parameters are estimated and predictions are made in the exact GPR method.

- Subset of Data Approximation for GPR Models

With large data sets, the subset of data approximation method can greatly reduce the time required to train a Gaussian process regression model.

- Subset of Regressors Approximation for GPR Models

The subset of regressors (SR) approximation method replaces the exact kernel function with an approximation.

- Fully Independent Conditional Approximation for GPR Models

The fully independent conditional (FIC) approximation systematically approximates the true GPR kernel function, avoiding the predictive variance problem of the SR approximation while still maintaining a valid Gaussian process.

- Block Coordinate Descent Approximation for GPR Models

Block coordinate descent approximation is another approximation method used to reduce computation time with large data sets.

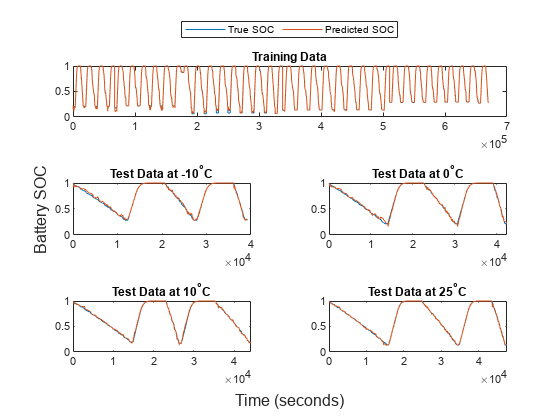

- Predict Responses Using RegressionGP Predict Block

Train a Gaussian process (GP) regression model, and then use the RegressionGP Predict block for response prediction.
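
The kernel, exact GPR, and subset of data topics listed above can be made concrete with a small, toolbox-independent sketch. The plain-Python code below is illustrative only: the function names (`sq_exp_kernel`, `gpr_fit`, `gpr_predict`, `fit_subset_of_data`) are hypothetical and are not part of any MathWorks API. It assumes a zero-mean prior, one-dimensional inputs, a squared exponential kernel, and fixed hyperparameters, so it skips the parameter estimation step that the exact GPR method also covers.

```python
import math
import random

def sq_exp_kernel(a, b, sigma_f=1.0, length=1.0):
    """Squared exponential covariance: nearby inputs get covariance near sigma_f^2."""
    return sigma_f**2 * math.exp(-0.5 * ((a - b) / length) ** 2)

def cholesky(A):
    """Lower-triangular L with L * L^T = A (A symmetric positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def solve_lower(L, b):
    """Forward substitution: solve L z = b."""
    z = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i])
    return z

def solve_upper_from_lower(L, z):
    """Back substitution: solve L^T x = z."""
    n = len(z)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (z[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def gpr_fit(xs, ys, sigma_n=0.1):
    """Exact GPR with fixed hyperparameters: alpha = (K + sigma_n^2 I)^-1 y."""
    n = len(xs)
    K = [[sq_exp_kernel(xs[i], xs[j]) + (sigma_n**2 if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    L = cholesky(K)
    alpha = solve_upper_from_lower(L, solve_lower(L, ys))
    return {"xs": xs, "L": L, "alpha": alpha}

def gpr_predict(model, x_new):
    """Posterior mean and variance of the latent function at x_new."""
    k_star = [sq_exp_kernel(x_new, x) for x in model["xs"]]
    mean = sum(k * a for k, a in zip(k_star, model["alpha"]))
    v = solve_lower(model["L"], k_star)
    var = sq_exp_kernel(x_new, x_new) - sum(t * t for t in v)
    return mean, var

def fit_subset_of_data(xs, ys, m, seed=0):
    """Subset of data: run exact GPR on m randomly chosen training points."""
    idx = random.Random(seed).sample(range(len(xs)), m)
    return gpr_fit([xs[i] for i in idx], [ys[i] for i in idx])

xs = [i * 0.25 for i in range(25)]   # 25 inputs on [0, 6]
ys = [math.sin(x) for x in xs]       # noise-free toy responses
full = gpr_fit(xs, ys)
small = fit_subset_of_data(xs, ys, m=10)
mean_full, var_full = gpr_predict(full, 1.1)
mean_small, var_small = gpr_predict(small, 1.1)
```

With the hyperparameters held equal, the subset model factors a 10-by-10 matrix instead of a 25-by-25 one, and its predictive variance at a given point is never smaller than that of the full model. This is the basic cost and accuracy trade-off behind the approximation methods listed above.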